Andrew Bonello

@AndrewBonello

AI & Software. Comedy, Voice, Acting & Improv. Film Analysis & Reviews @FilmTagger_com. @Google @DWAnimation @Cinesite @StHughsCollege alum. Love #Golang.

The most unnerving part of the DeepSeek reaction online has been seeing folks take it as a sign that AI capability growth is not real. It signals the opposite: large improvements are possible, and it is almost certain to kick off an acceleration in AI development through competition.

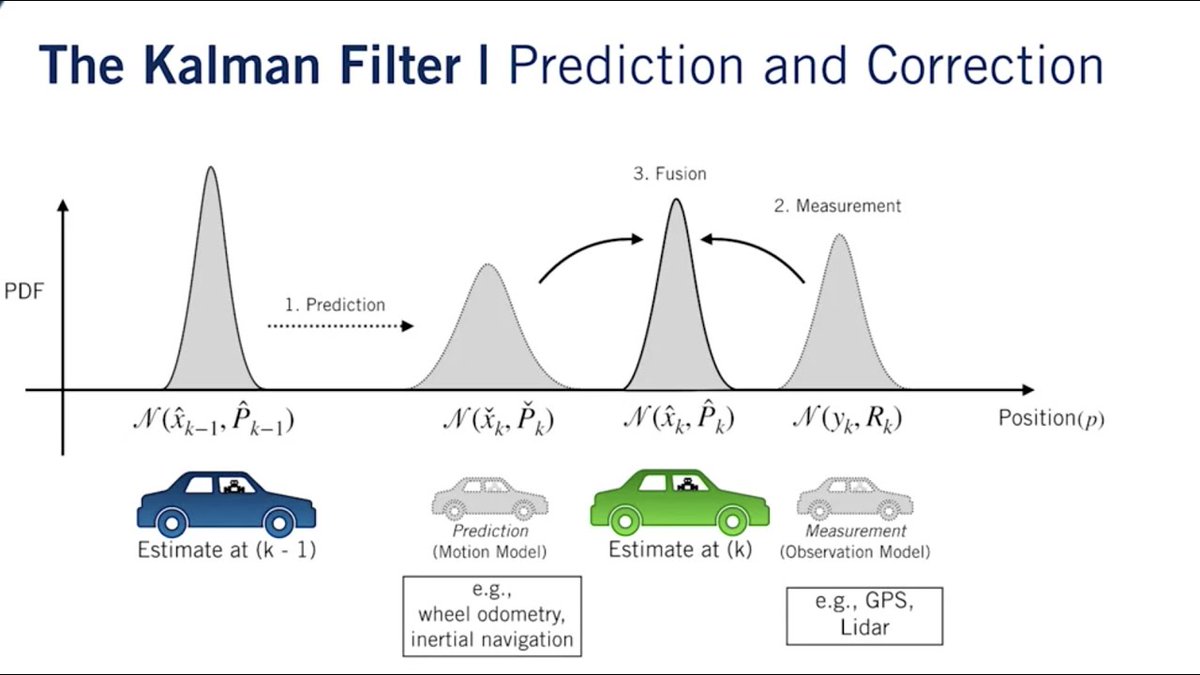

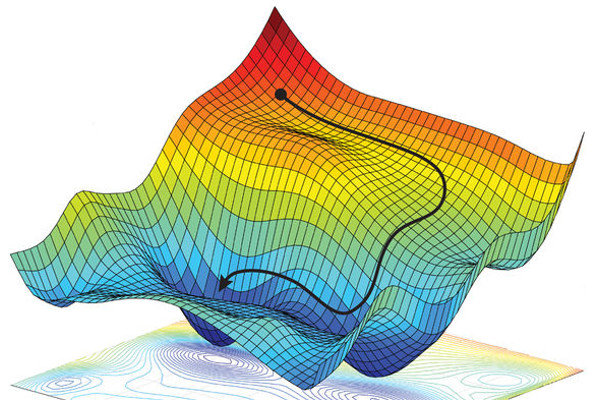

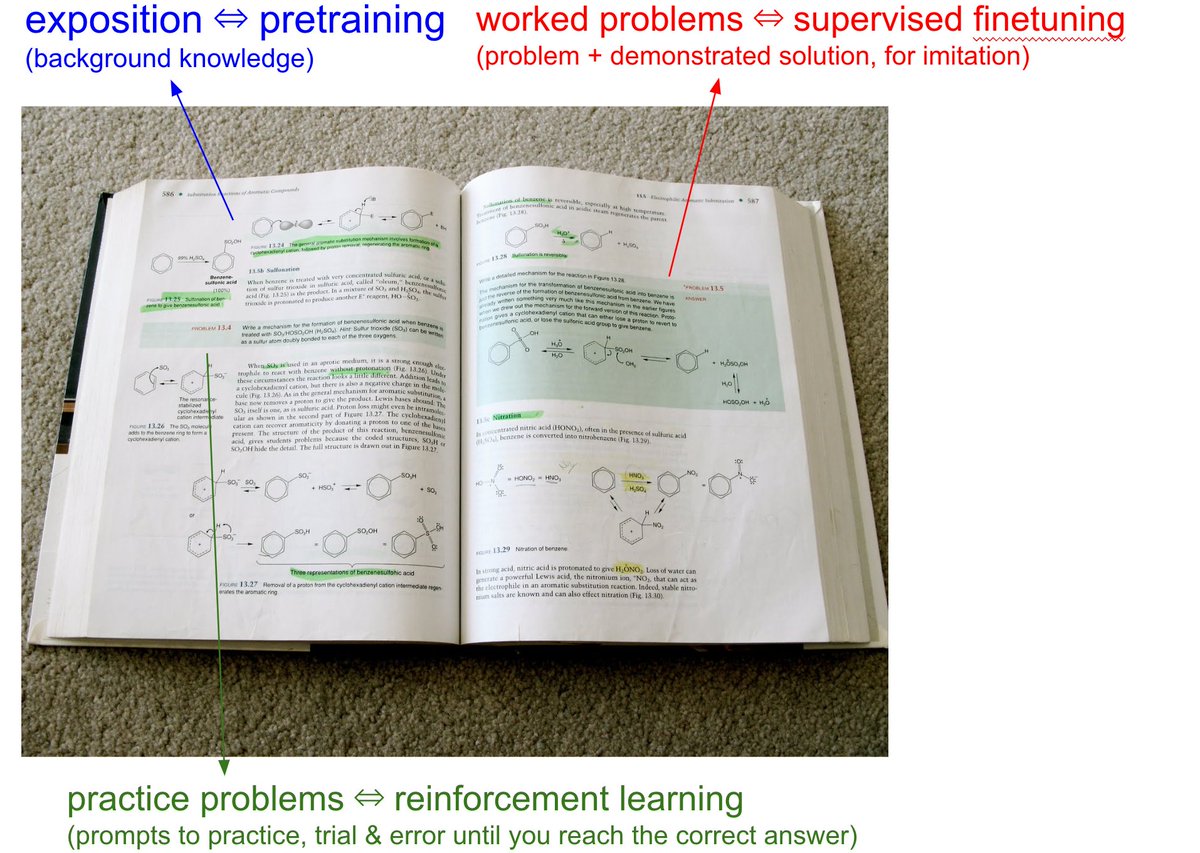

I think the AIs are reasonably good at debate and modify their positions based on logical reasoning. The AI models are not perfect machines, though, so you need to fine-tune the results to fill in logical gaps. Their emergent abilities (performing tasks they were not explicitly trained for) first became prominent with the Transformer architecture and large language models such as GPT-3, which ChatGPT later brought to a mass audience. LLM (large language model) sizes vary widely, from a few billion parameters to hundreds of billions (GPT-3 has 175 billion), and for a few years the largest models grew roughly tenfold or more per year.

The importance of carefully designed prompts implies that reasoning in LLMs isn't just a matter of retrieving the "right" data point: the prompt guides the model's reasoning process by establishing context and the desired outcome. The research community is still playing catch-up, since no one fully understands why these models work so well, even at an accuracy of 72%.
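To give a feel for where those parameter counts come from, here is a rough sketch using a common back-of-the-envelope approximation (an assumption, not anything from the thread): a decoder-only Transformer has about 12 × n_layers × d_model² non-embedding parameters. The helper name `approx_transformer_params` is hypothetical, and the layer/width values are the published GPT-2 XL and GPT-3 configurations.

```python
def approx_transformer_params(n_layers: int, d_model: int) -> int:
    """Rough non-embedding parameter count of a decoder-only Transformer.

    Assumes ~12 * d_model**2 parameters per layer:
      - 4 * d_model**2 for the attention projections (Q, K, V, output)
      - 8 * d_model**2 for a feed-forward block with hidden size 4 * d_model
    Biases, layer norms and token/position embeddings are ignored.
    """
    attention = 4 * d_model**2
    feed_forward = 8 * d_model**2
    return n_layers * (attention + feed_forward)


if __name__ == "__main__":
    # Published configurations: GPT-2 XL (48 layers, d_model=1600)
    # and GPT-3 (96 layers, d_model=12288).
    for name, layers, width in [("GPT-2 XL", 48, 1600), ("GPT-3", 96, 12288)]:
        estimate = approx_transformer_params(layers, width)
        print(f"{name}: ~{estimate / 1e9:.1f}B parameters")
```

The estimate lands near the reported sizes (about 1.5B and 175B), which shows why "an LLM" has no single typical size: parameters grow with the square of the model width, so modest architectural changes move the count by orders of magnitude.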