Anthony GX-Chen


@AntChen_

PhD student @CILVRatNYU. Student Researcher @GoogleDeepMind || Prev: @Meta, @Mila_Quebec, @mcgillu || RL, ML, Neuroscience.

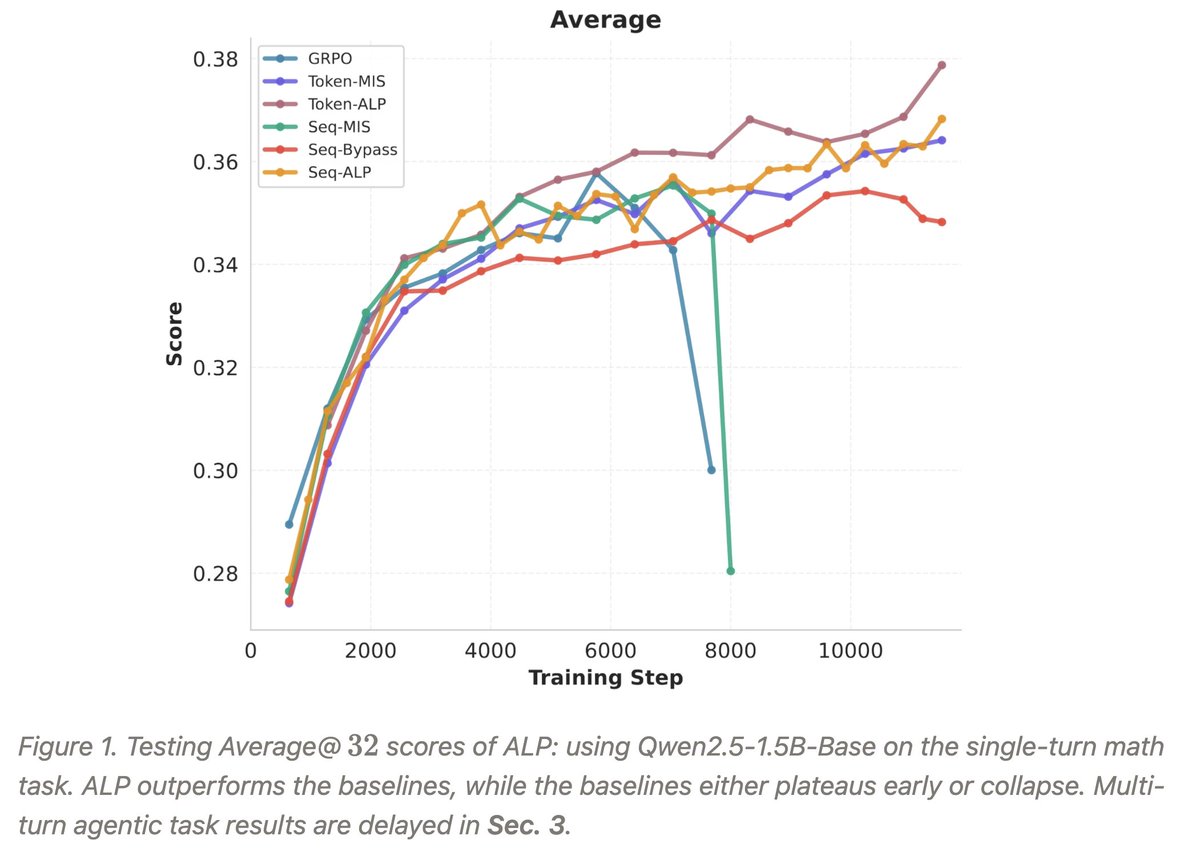

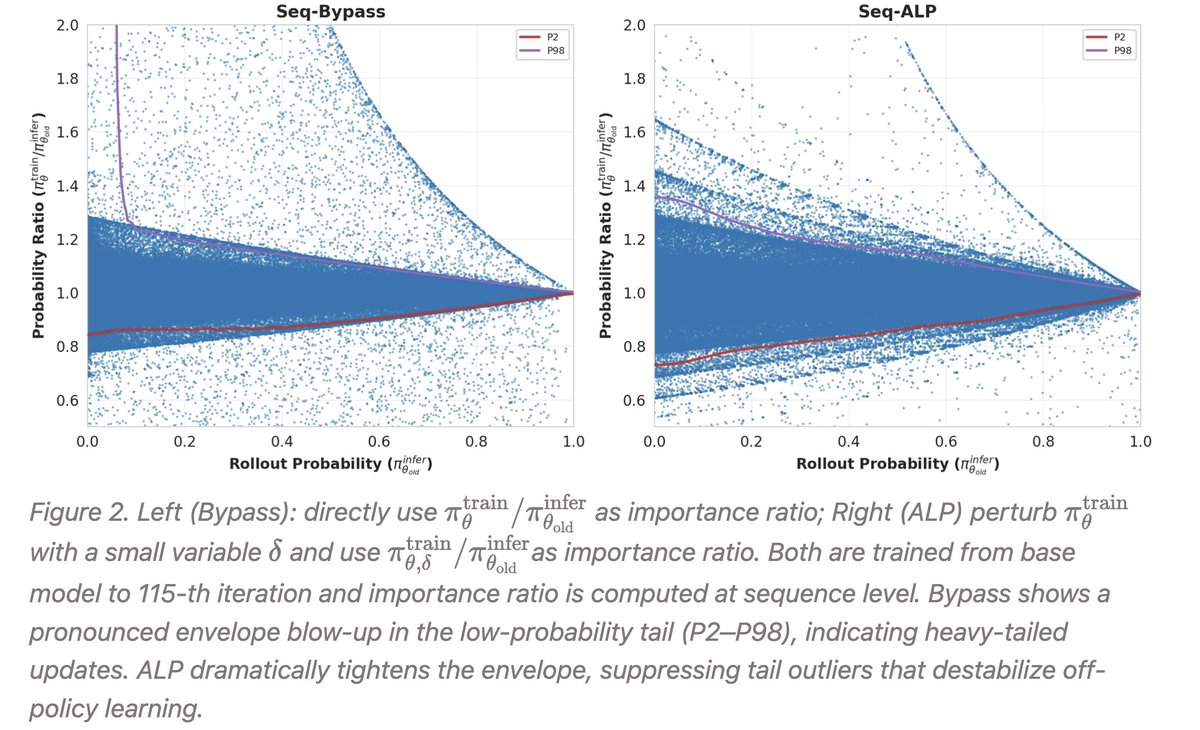

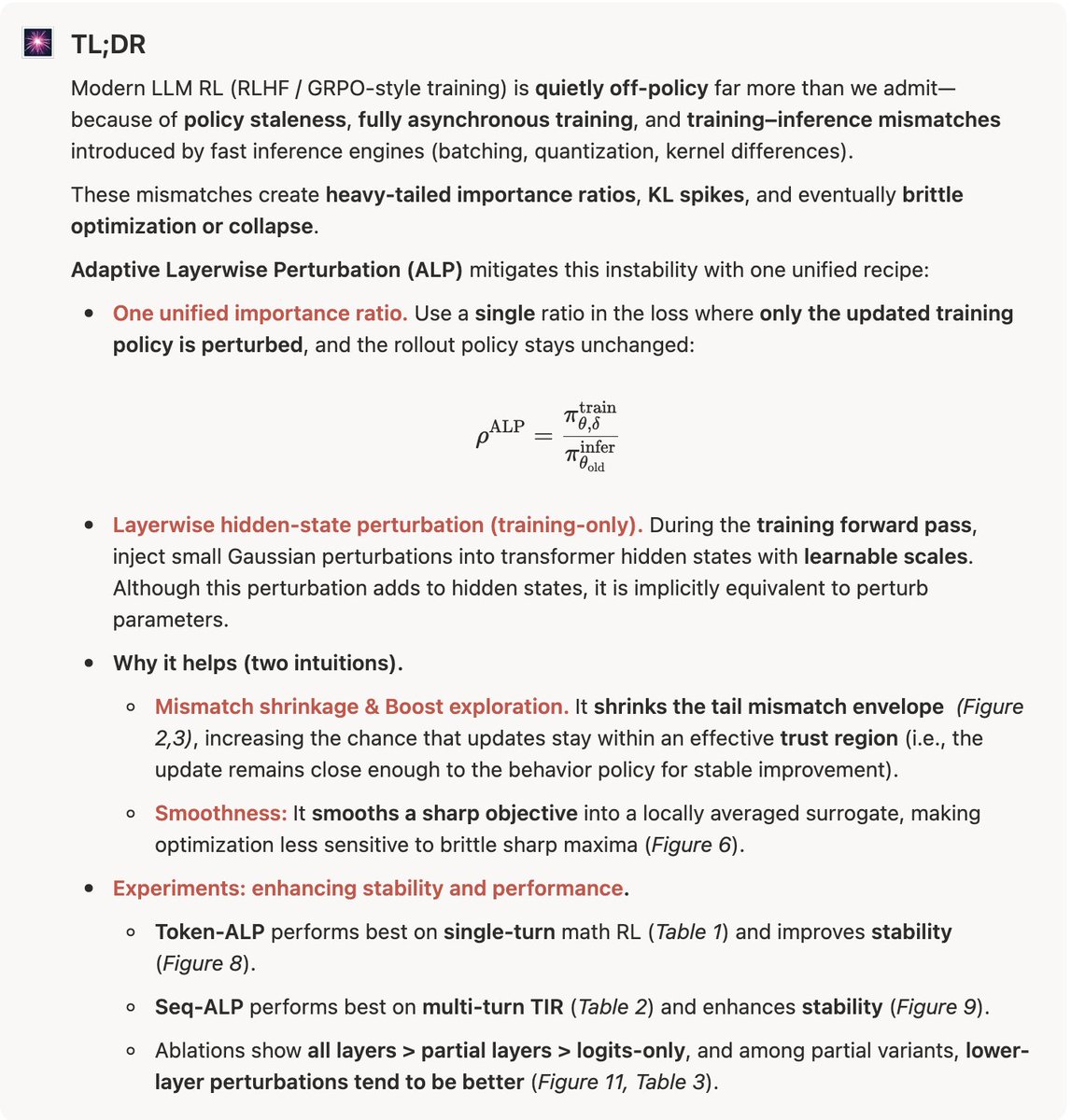

RL causing diversity collapse in generative models (e.g. LLMs) is *not* a training failure. It’s what KL / entropy-regularized RL is provably *designed* to do. The good news: we have a simple, principled fix. Accepted to #ICLR2026 🧵🔽 [1/n]


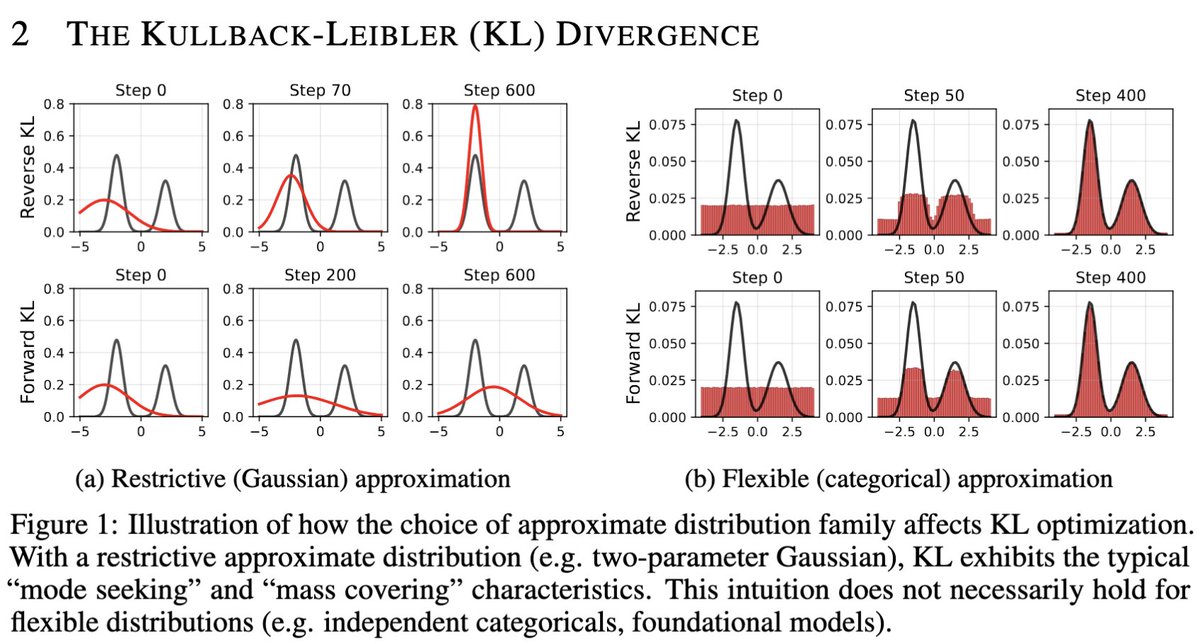

First, linear differences in reward become exponential differences in probability. With a small KL regularization coefficient (e.g. 1e-3), the optimal policy effectively collapses onto a single solution (no diversity). N.B. Entropy regularization has the same problem. [6/n]
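A minimal sketch of the linear-to-exponential effect. It assumes the standard closed-form maximizer of KL-regularized reward over a finite set of outputs, π*(y) ∝ π_ref(y)·exp(r(y)/β), so any two outputs have probability ratio (π_ref(a)/π_ref(b))·exp((r(a) − r(b))/β). Entropy regularization corresponds to a uniform reference, i.e. a softmax of r/α. The function names, the three-answer toy setup, and the reward values are hypothetical illustrations, not the thread's code or data.

```python
import math

def kl_regularized_optimum(ref_probs, rewards, beta):
    """Closed-form maximizer of E_pi[r] - beta * KL(pi || pi_ref) over a finite set."""
    # log pi*(y) = log pi_ref(y) + r(y) / beta - log Z
    logits = [math.log(p) + r / beta for p, r in zip(ref_probs, rewards)]
    m = max(logits)  # subtract the max before exponentiating for numerical stability
    weights = [math.exp(l - m) for l in logits]
    z = sum(weights)
    return [w / z for w in weights]

def entropy_regularized_optimum(rewards, alpha):
    """Maximizer of E_pi[r] + alpha * H(pi): softmax(r / alpha).

    Equivalent to the KL case with a uniform reference policy.
    """
    n = len(rewards)
    return kl_regularized_optimum([1.0 / n] * n, rewards, alpha)

# Hypothetical toy setup: three answers the reference finds equally likely,
# with rewards that differ by only 0.01.
ref = [1 / 3, 1 / 3, 1 / 3]
rewards = [1.00, 0.99, 0.98]

for beta in (1.0, 0.1, 1e-3):
    probs = kl_regularized_optimum(ref, rewards, beta)
    print(f"beta={beta:g}:", [round(p, 4) for p in probs])
```

With β = 1 the three answers stay close to uniform, while with β = 1e-3 the 0.01 reward gap becomes a probability ratio of e^10 (about 22,000), so the top answer takes essentially all of the mass.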
