Antoine Yang

@AntoineYang2

Senior Research Scientist @GoogleDeepMind, Gemini video 💎. Prev: PhD @Inria & @ENS_ULM, MEng @Polytechnique.

Introducing Ego2Web from Google DeepMind and UNC Chapel Hill, accepted to #CVPR2026. AI agents can browse the web. But can they act based on what you see? Existing benchmarks focus only on web interaction while ignoring the real world. Ego2Web bridges egocentric video perception and web execution, enabling agents that can see through first-person video, understand real-world context, and take actions on the web grounded in the egocentric video. This opens a path toward AI assistants that operate seamlessly across physical and digital environments. We hope Ego2Web serves as an important step for building more capable, perception-driven agents. 🧵👇

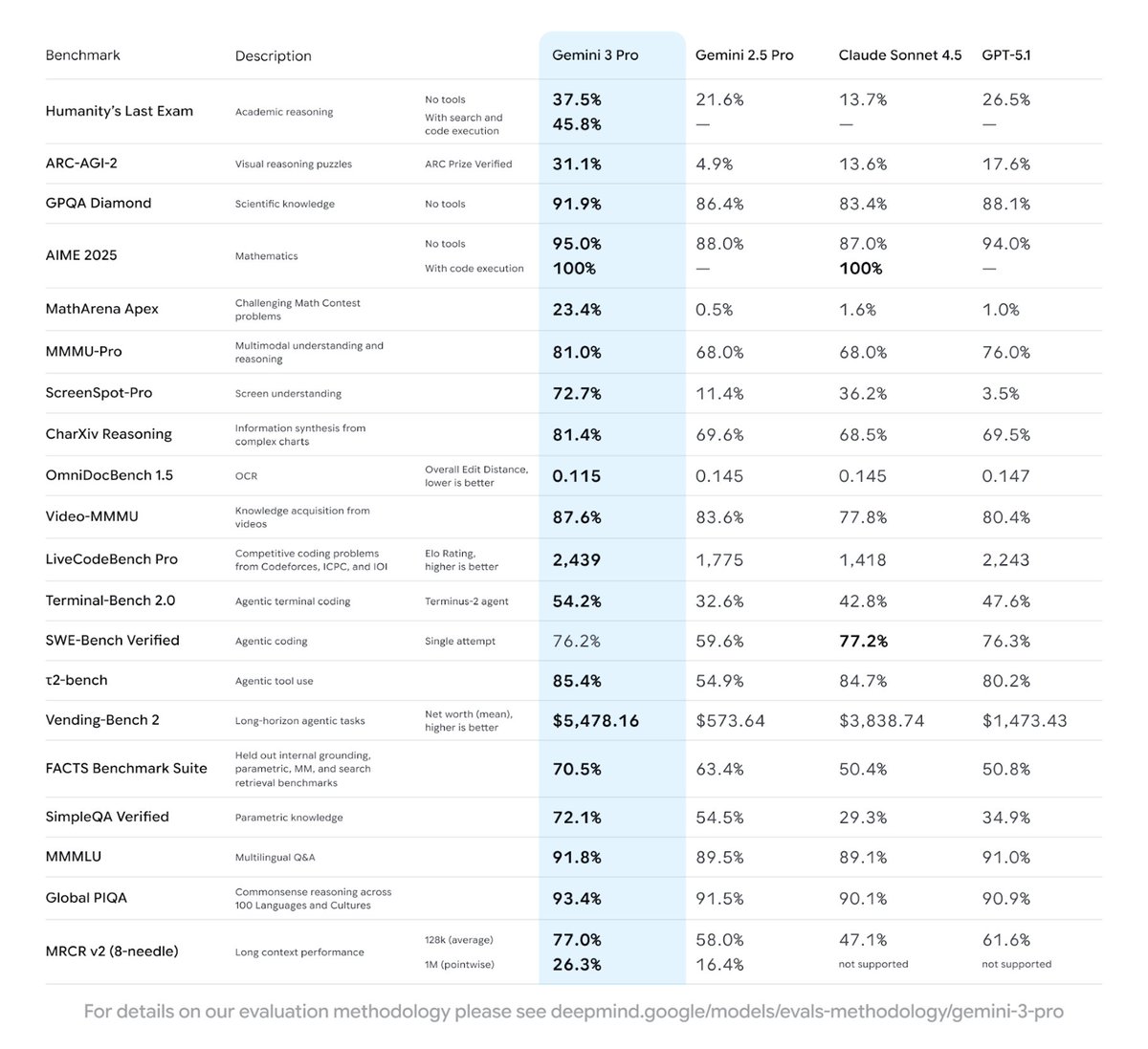

Gemini 3.1 Flash-Lite has landed. It’s our most cost-efficient Gemini 3 series model yet, built for intelligence at scale. Here’s what’s new 🧵

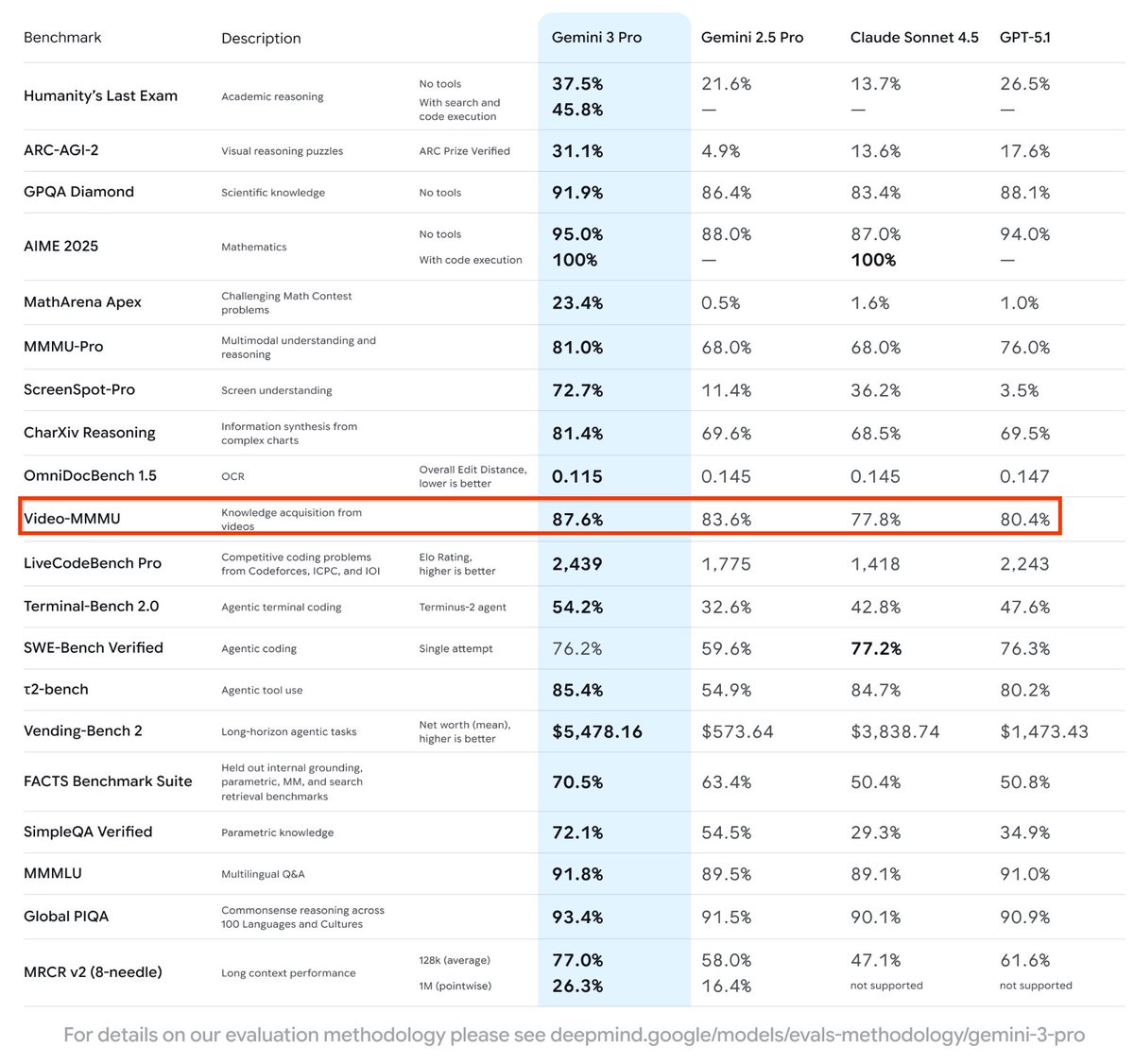

3 Flash delivers frontier performance on benchmarks like GPQA Diamond, which evaluates PhD-level reasoning, and Humanity’s Last Exam, which tests broad expert knowledge. It’s state-of-the-art on MMMU Pro, with a score comparable to 3 Pro, and it easily analyzes inputs across videos and images, not just text. It also handles complex tasks significantly faster than 2.5 Pro at a lower cost, using fewer tokens (units of information) to save time.

Hot Gemini updates off the press. 🚀 Anyone can now use 2.5 Flash and Pro to build and scale production-ready AI applications. 🙌 We’re also launching 2.5 Flash-Lite in preview: the fastest model in the 2.5 family to respond to requests, with the lowest cost too. 🧵