Pinned Tweet

Arcee.ai

798 posts

@arcee_ai

American made open-source AI https://t.co/6gcSwU5nk3

San Francisco · Joined September 2023

438 Following · 9.2K Followers

Check out the blog for a walkthrough on setting up the Hermes Agent powered by Trinity-Large-Thinking across Linux, macOS, and Windows.

arcee.ai/blog/how-to-us…

Huge thanks to @carlfranzen for covering the Trinity-Large-Thinking release for @VentureBeat.

We are incredibly proud of what our team accomplished with this release and we cannot wait to see what the open source community builds with it.

venturebeat.com/technology/arc…

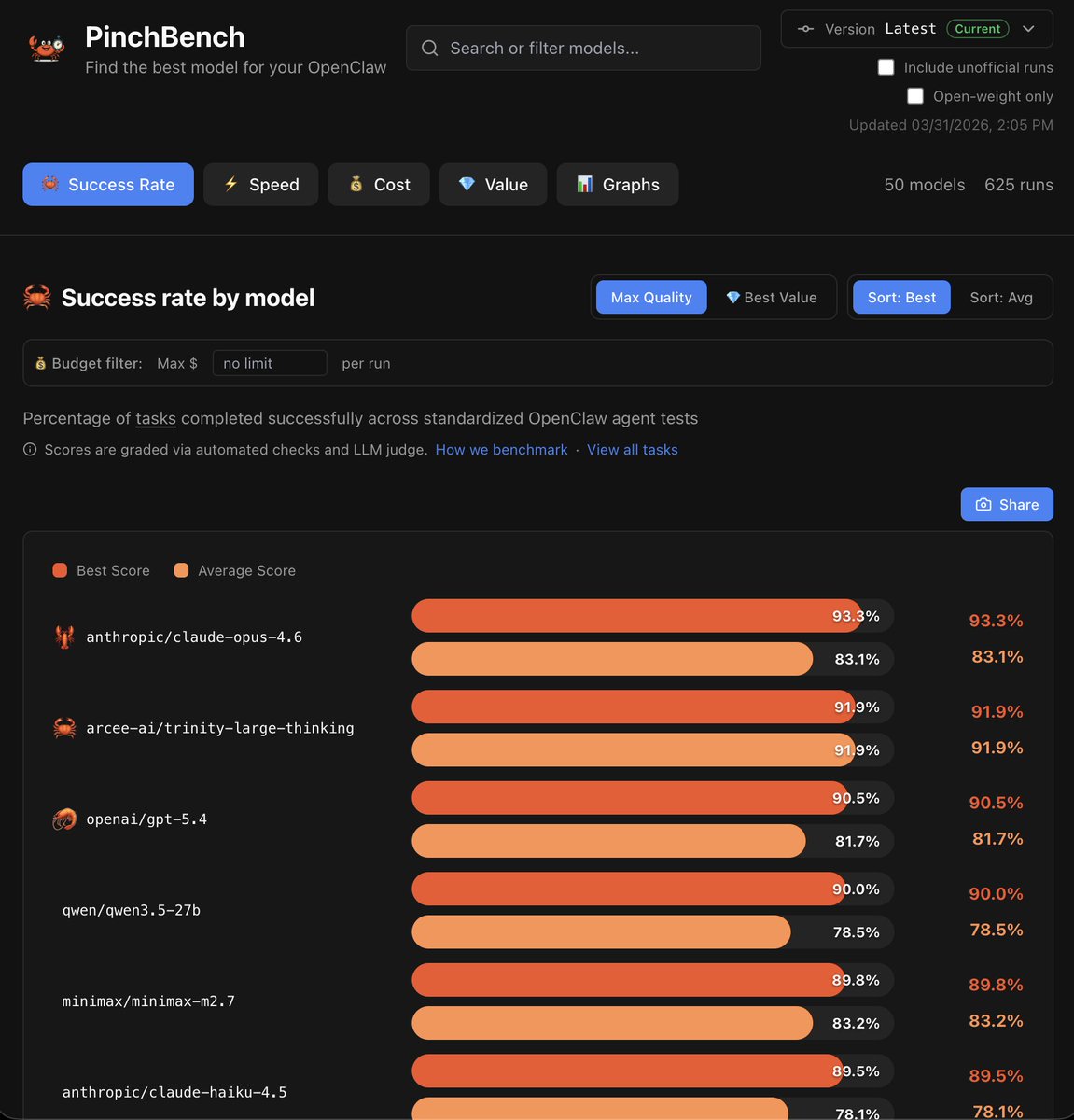

Jensen showcased PinchBench (by @kilocode) on stage at NVIDIA GTC as the new standard for evaluating @openclaw agent capability.

Trinity-Large-Thinking just hit #2 on @pinchbench globally (91.9%) behind only Claude Opus 4.6 (93.3%), which costs ~20x more per token. That's an expensive percentage point...

To celebrate the ongoing Trinity partnership, @OpenRouter is running a promotion to make Trinity-Large-Thinking free to use for OpenClaw until Sunday, April 5th.

Even when the promo ends, the Trinity economics are pretty incredible.

Trinity is $0.25 per million input tokens (OpenClaw uses A LOT of input tokens), but with a 60%-90% cache hit rate and cached tokens priced at $0.06 per million, the average input cost nets out to $0.087 per million.
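The arithmetic behind that blended figure can be sketched as follows. This is a minimal illustration, not Arcee's or OpenRouter's billing logic: it assumes the average price is a simple linear mix of the uncached and cached prices, weighted by the cache hit rate, and the function names are hypothetical. Under that assumption, the quoted $0.087 corresponds to a hit rate of roughly 86%, which sits inside the stated 60%-90% range.

```python
# Prices are in USD per million input tokens, taken from the post above.
# The linear blending model is an assumption made for illustration.

def blended_input_cost(hit_rate: float,
                       base_price: float = 0.25,
                       cached_price: float = 0.06) -> float:
    """Average input price when `hit_rate` of input tokens are served from cache."""
    if not 0.0 <= hit_rate <= 1.0:
        raise ValueError("hit_rate must be between 0 and 1")
    return (1.0 - hit_rate) * base_price + hit_rate * cached_price


def implied_hit_rate(target_price: float,
                     base_price: float = 0.25,
                     cached_price: float = 0.06) -> float:
    """Cache hit rate that would produce `target_price` under the same linear model."""
    return (base_price - target_price) / (base_price - cached_price)


if __name__ == "__main__":
    for rate in (0.60, 0.75, 0.90):
        cost = blended_input_cost(rate)
        print(f"{rate:.0%} cache hits -> ${cost:.3f} per million input tokens")
    rate = implied_hit_rate(0.087)
    print(f"$0.087 per million implies a hit rate of about {rate:.0%}")
```

Swapping in your own measured hit rate gives a quick estimate of what an agent workload would pay for input tokens once the promotion ends.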

You no longer have to compromise on logic to keep your agent infrastructure affordable.

We're excited to see what you build.

Arcee.ai reposted

Shoutout to @OpenRouter, @digitalocean, @huggingface, @datalogyai, and @PrimeIntellect.

Great models need great distribution, great data, and great infrastructure. We are grateful to be building with partners who understand that.

Arcee.ai reposted

hf-mount

Attach any Storage Bucket, model or dataset from @huggingface as a local filesystem

This is a game changer, as it allows you to attach remote storage that is 100x bigger than your local machine's disk.

This is also perfect for Agentic storage!!

Read-write for Storage Buckets, read-only for models and datasets.

Here's an example with FineWeb-edu (a 5TB slice of the Web):

1️⃣> hf-mount start repo datasets/HuggingFaceFW/fineweb-edu /tmp/fineweb

It takes a few seconds to mount, and then:

2️⃣> du -h -d1 /tmp/fineweb

4.1T ./data

1.2T ./sample

5.3T .

🤯😮

Two backends are available:

NFS (recommended) and FUSE

Let's f**ing go 💪
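Because the mount appears as an ordinary directory, the `du -h -d1` step above can presumably also be reproduced from code with nothing but the standard library. The sketch below is an illustration under that assumption and does not use any hf-mount API. The helper names are hypothetical, and it sums apparent file sizes rather than allocated disk blocks, so its numbers can differ slightly from what `du` reports.

```python
import os


def size_per_top_level_entry(root: str) -> dict[str, int]:
    """Sum apparent file sizes in bytes under each immediate child of `root`."""
    totals: dict[str, int] = {}
    with os.scandir(root) as entries:
        for entry in entries:
            if entry.is_dir(follow_symlinks=False):
                total = 0
                # Walk the subtree and add up the size of every file in it.
                for dirpath, _dirnames, filenames in os.walk(entry.path):
                    for name in filenames:
                        total += os.lstat(os.path.join(dirpath, name)).st_size
                totals[entry.name] = total
            else:
                totals[entry.name] = entry.stat(follow_symlinks=False).st_size
    return totals


def human(num_bytes: float) -> str:
    """Format a byte count with 1024-based units, similar to `du -h`."""
    for unit in ("B", "K", "M", "G", "T"):
        if num_bytes < 1024 or unit == "T":
            return f"{num_bytes:.1f}{unit}"
        num_bytes /= 1024
```

Calling `size_per_top_level_entry("/tmp/fineweb")` on the mount point from the example and passing each value through `human` would give a per-directory summary. On a remote-backed mount of this size the walk has to stat every file, so it may take a while.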

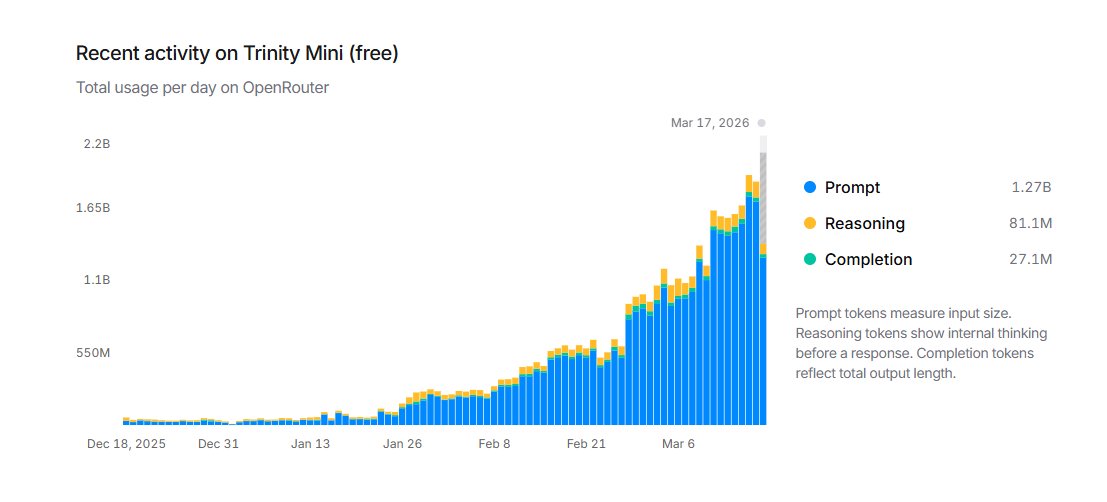

We've spent a lot of time focused on Trinity Large over the past few months. Meanwhile, Trinity Mini is quietly gaining momentum.

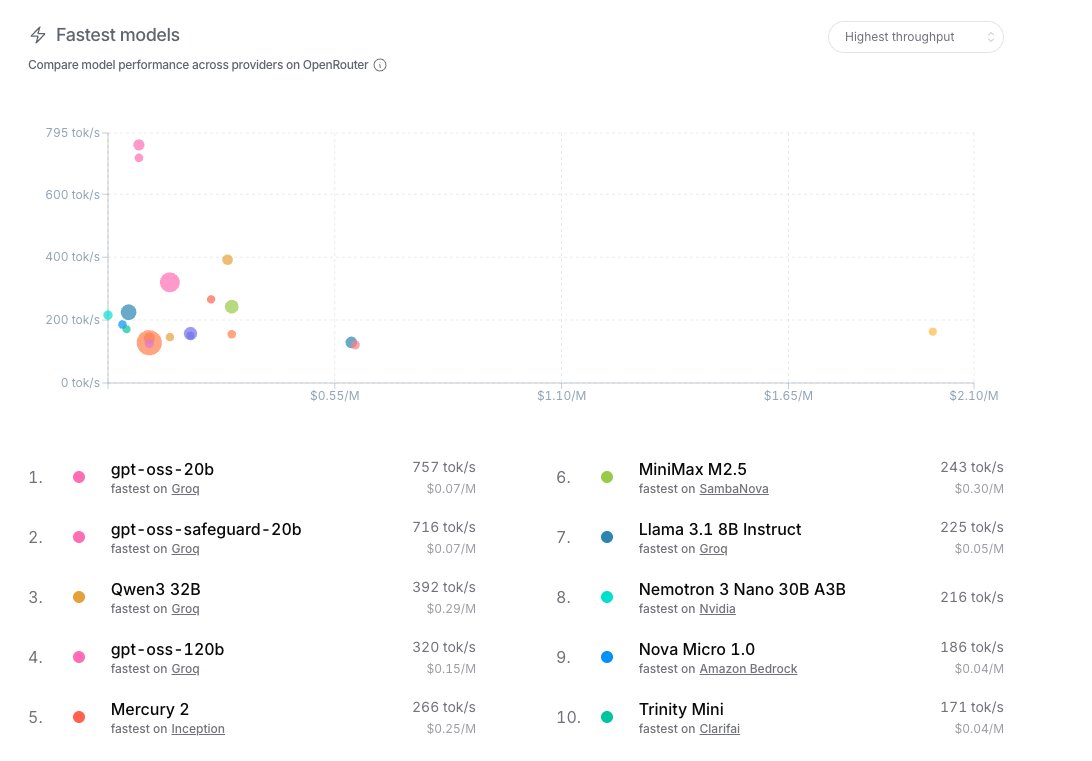

Over the last 30 days, official and community derivatives of Mini have crossed 75k downloads on @huggingface. We are also seeing a massive ramp in usage on @OpenRouter throughout March, where it is currently ranked in the top 10 fastest models at 171 tok/s.

At 26B, Mini is highly capable and exceptionally fast, making it a great fit for those who need lower latency without sacrificing reasoning.

We will continue to support Mini as a core piece of the Trinity family. If you haven't tried it yet, we encourage you to give it a run!
