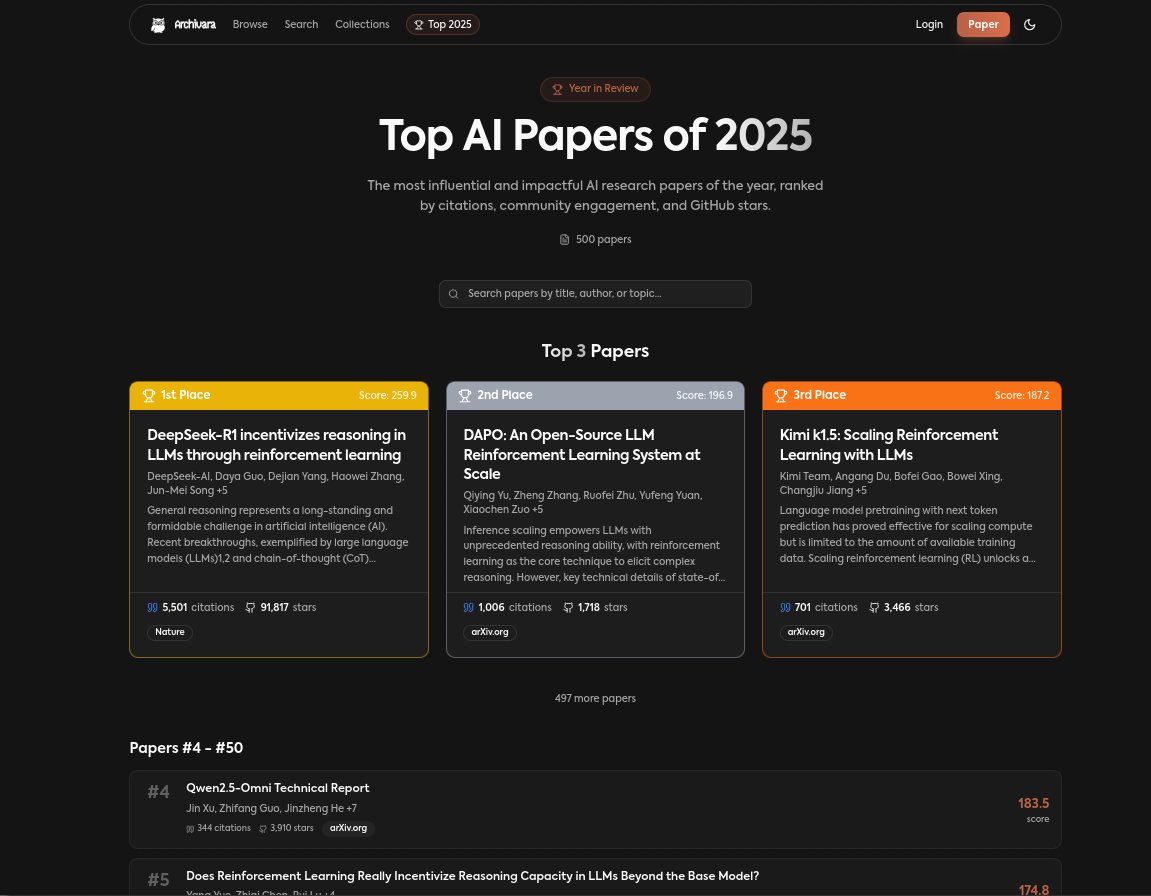

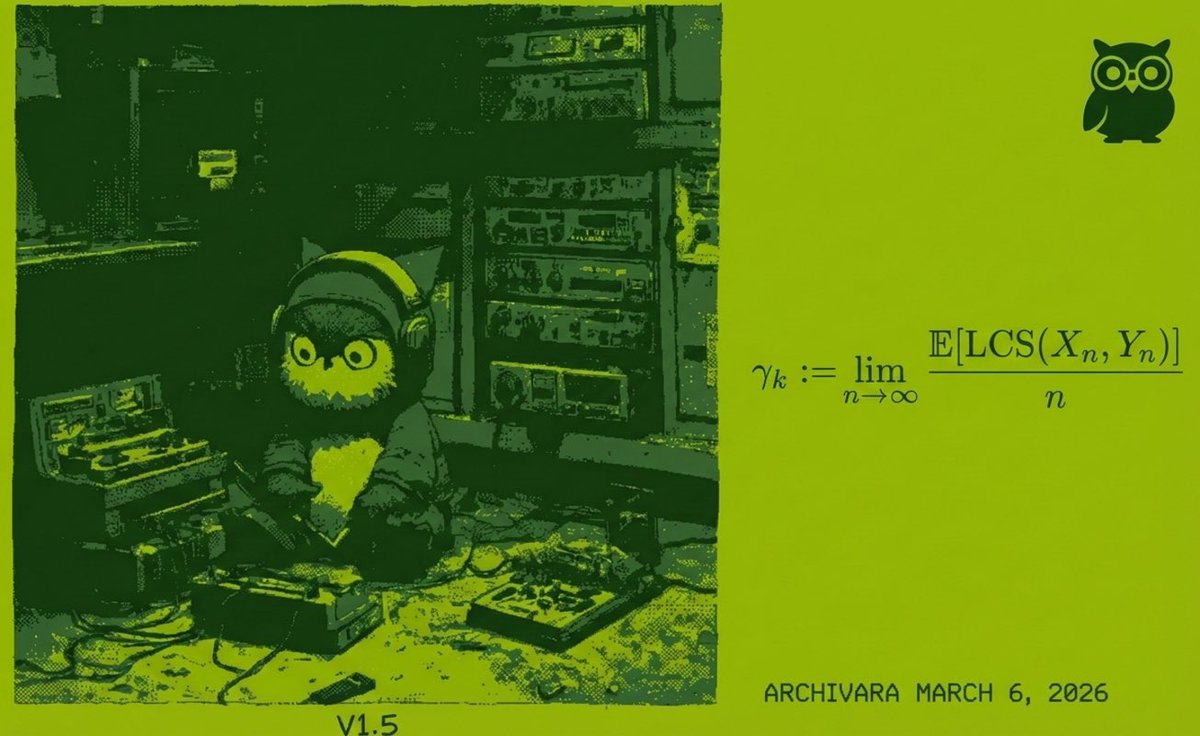

𝗢𝘂𝗿 𝗔𝗜 𝗷𝘂𝘀𝘁 𝘀𝗲𝘁 𝗮 𝗻𝗲𝘄 𝗿𝗲𝗰𝗼𝗿𝗱 on Terence Tao’s optimization constants list, the 𝗳𝗶𝗿𝘀𝘁 𝗔𝗜-𝗴𝗲𝗻𝗲𝗿𝗮𝘁𝗲𝗱 𝗿𝗲𝘀𝘂𝗹𝘁 to make progress on any problem in the repository, reviewed and merged by Tao.

𝗔𝗿𝗰𝗵𝗶𝘃𝗮𝗿𝗮 𝟭.𝟱 found an approach not explored in previous attempts and 𝗶𝗺𝗽𝗿𝗼𝘃𝗲𝗱 𝘁𝗵𝗲 𝗯𝗲𝘀𝘁 𝗸𝗻𝗼𝘄𝗻 𝗹𝗼𝘄𝗲𝗿 𝗯𝗼𝘂𝗻𝗱 on the Chvátal–Sankoff constant, a problem that has seen only incremental progress since 1975.