Arsec~:) reposted

Arsec~:)

799 posts


@ArsecTech

⚘⃠⃟🐧/!\%S;T%R?O%N4G#E~:)

127.0.0.1:8080 · Joined March 2021

627 Following · 108 Followers

Arsec~:) reposted

🚀 Introducing the Qwen 3.5 Medium Model Series

Qwen3.5-Flash · Qwen3.5-35B-A3B · Qwen3.5-122B-A10B · Qwen3.5-27B

✨ More intelligence, less compute.

• Qwen3.5-35B-A3B now surpasses Qwen3-235B-A22B-2507 and Qwen3-VL-235B-A22B — a reminder that better architecture, data quality, and RL can move intelligence forward, not just bigger parameter counts.

• Qwen3.5-122B-A10B and 27B continue narrowing the gap between medium-sized and frontier models — especially in more complex agent scenarios.

• Qwen3.5-Flash is the hosted production version aligned with 35B-A3B, featuring:

– 1M context length by default

– Official built-in tools

🔗 Hugging Face: huggingface.co/collections/Qw…

🔗 ModelScope: modelscope.cn/collections/Qw…

🔗 Qwen3.5-Flash API: modelstudio.console.alibabacloud.com/ap-southeast-1…

Try in Qwen Chat 👇

Flash: chat.qwen.ai/?models=qwen3.…

27B: chat.qwen.ai/?models=qwen3.…

35B-A3B: chat.qwen.ai/?models=qwen3.…

122B-A10B: chat.qwen.ai/?models=qwen3.…

Would love to hear what you build with it.
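The "more intelligence, less compute" claim can be made concrete from the model names alone. The sketch below assumes a naming convention the post does not spell out: `<total>B` is the total parameter count, and an optional `A<active>B` suffix is the number of parameters activated per token in a mixture-of-experts model. The helpers `parse_sizes` and `active_fraction` are hypothetical names for illustration, and active parameter count is only a rough proxy for compute.

```python
import re

# Assumed naming convention (not stated in the post): "<total>B" is the total
# parameter count; an optional "A<active>B" suffix is the number of parameters
# activated per token in a mixture-of-experts model.
_SIZE = re.compile(r"-(\d+(?:\.\d+)?)B(?:-A(\d+(?:\.\d+)?)B)?(?:-|$)")

def parse_sizes(model_name):
    """Return (total_billions, active_billions), or None if the name has no size."""
    match = _SIZE.search(model_name)
    if match is None:
        return None
    total = float(match.group(1))
    # No "A...B" suffix: treat the model as dense, so every parameter is active.
    active = float(match.group(2)) if match.group(2) else total
    return total, active

def active_fraction(model_name):
    """Share of parameters used per token, or None if the name has no size."""
    sizes = parse_sizes(model_name)
    if sizes is None:
        return None
    total, active = sizes
    return active / total

# Model names exactly as they appear in the post.
for name in ["Qwen3.5-35B-A3B", "Qwen3.5-122B-A10B", "Qwen3.5-27B",
             "Qwen3-235B-A22B-2507", "Qwen3.5-Flash"]:
    fraction = active_fraction(name)
    label = "no size in name" if fraction is None else f"{fraction:.1%} active"
    print(f"{name}: {label}")
```

Under that reading, Qwen3.5-35B-A3B activates 3B parameters per token against 22B for Qwen3-235B-A22B-2507, which is the comparison the first bullet draws.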


Arsec~:) reposted

DeepSeek dropped another banger... 🤯

For 10 years, residual connections (x + f(x)) have been the safety net for every transformer. GPT-4, Claude, Gemini... they all use them.

DeepSeek just replaced them with "manifold-constrained hyper-connections" (mHC).

They turned the residual highway into n parallel lanes and added a mathematical "cage" to keep the signal stable.
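A toy sketch of the idea in the post: a plain residual step next to an n-lane version whose lanes are mixed by a constrained matrix. The post does not say what the "cage" is. This sketch assumes it means forcing the lane-mixing matrix to be doubly stochastic (every row and column sums to 1), approximated here with Sinkhorn normalization. The function names, the scalar lanes, and the choice to feed the layer the lane average are all simplifications for illustration, not DeepSeek's implementation.

```python
import math

def sinkhorn(logits, iterations=50):
    """Push a square matrix toward doubly stochastic form (rows and columns sum to 1)."""
    n = len(logits)
    m = [[math.exp(v) for v in row] for row in logits]  # make every entry positive
    for _ in range(iterations):
        m = [[v / sum(row) for v in row] for row in m]  # normalize each row
        col_sums = [sum(m[i][j] for i in range(n)) for j in range(n)]
        m = [[m[i][j] / col_sums[j] for j in range(n)] for i in range(n)]  # then each column
    return m

def residual_step(x, f):
    """Classic residual connection: carry x through unchanged and add f(x)."""
    return x + f(x)

def multi_lane_step(lanes, f, mix):
    """One layer over n parallel residual lanes (scalars here, for readability)."""
    n = len(lanes)
    # Lanes exchange signal through the constrained mixing matrix.
    mixed = [sum(mix[i][j] * lanes[j] for j in range(n)) for i in range(n)]
    # Simplification: the layer reads the average of the lanes.
    update = f(sum(lanes) / n)
    return [lane + update for lane in mixed]

lanes = [1.0, 2.0, 3.0]
mix = sinkhorn([[0.0, 1.0, -1.0], [0.5, 0.0, 0.2], [-0.3, 0.8, 0.0]])  # arbitrary logits

# With a doubly stochastic mix and a layer that outputs zero, the total signal
# across lanes is preserved: mixing redistributes it but cannot amplify it.
no_op = multi_lane_step(lanes, lambda x: 0.0, mix)
assert abs(sum(no_op) - sum(lanes)) < 1e-9
```

The design point the sketch tries to show: an unconstrained mixing matrix could grow or shrink the signal layer after layer, while a doubly stochastic one keeps the lane total fixed, which is one plausible meaning of keeping the signal stable.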


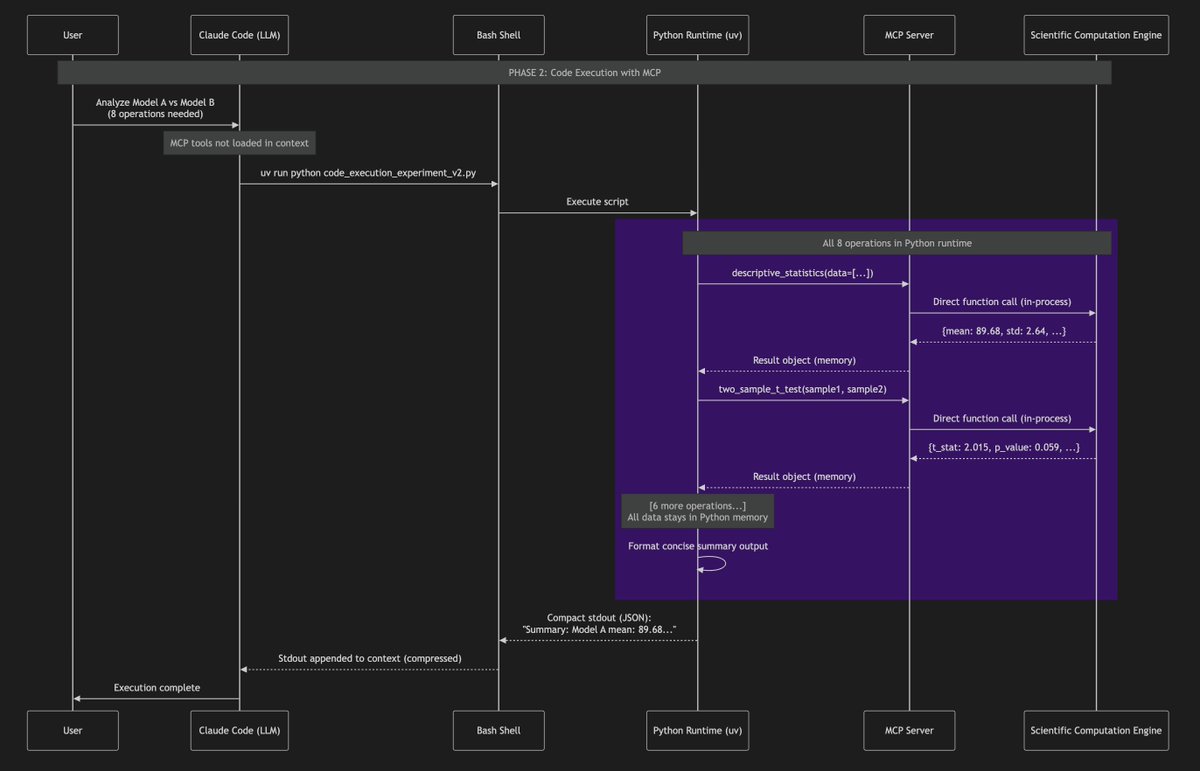

@atakhalighi martinfowler.com/fragments/2026…

Take a look at what the master has to say, too.


Developing with AI still carries risks

- AI goes off track when given the wrong questions or requests. That is why the user's own experience still matters a great deal

- You absolutely have to re-review the code (in most cases with the help of the AI itself)

- Loops and hallucinations still happen

- Context sizes are still limited

Farokh @FarokhNotes

Spotify announced that its best developers have not written a single line of code from last December until today 🤯 They have used AI and nothing else. The CEO of Anthropic, the maker of Claude, had said we would reach this point in about 12 months, but it took only two months to happen.


Arsec~:) reposted

@ibrahimoumoucha Exactly! That’s one of the biggest pain points we’re addressing — making the 'why' explicit by design.

We’re initially focusing on streamlining architecture and automating code generation so teams spend less time on repetitive maintenance and more on value creation. 🔧


@ArsecTech been there. most maintenance pain = skipping the "why" in docs tbh

what root cause are you hitting first? 🛠️


Software development still suffers from 3 universal pains:

• Slow delivery

• Architectural inconsistency

• High maintenance

I’ve been working quietly on something that goes straight to the root.

#buildinpublic #softwareengineering #devtools


Software development still struggles with 3 shared pains:

• Low speed

• Non-standard architectures

• Maintenance costs

For a while now I have been working on something that solves these problems at the root.

#توسعه_نرمافزار #معماری_نرمافزار #buildinpublic


Arsec~:) reposted

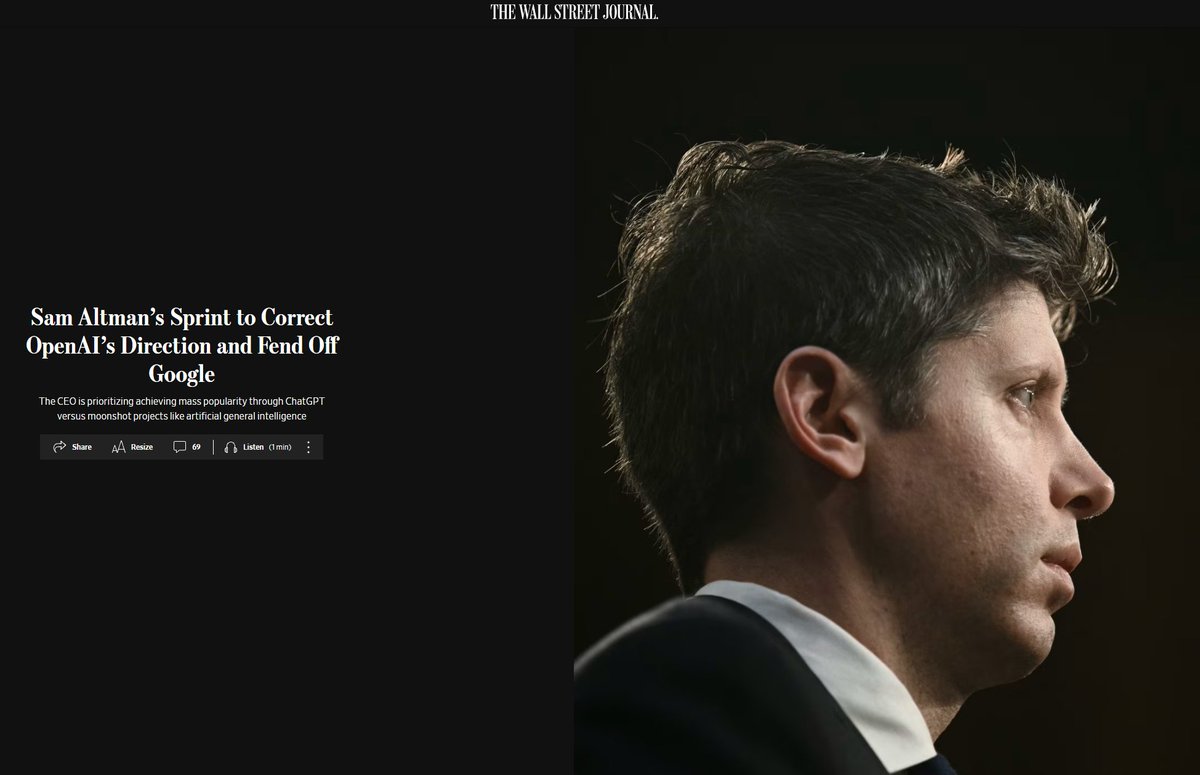

BREAKING: The First AI Era Just Ended

On December 2, 2025, Sam Altman declared “Code Red” at OpenAI.

This is not a competitive setback. This is a phase transition.

The numbers tell a story no one wants to hear:

OpenAI has committed $1.4 trillion in infrastructure spending. Current revenue: $20 billion. Profitability target: 2030. The gap is mathematically unprecedented.

Google’s Gemini 3 hit 1501 Elo on LMArena. First model in history to breach 1500. Two weeks later, Altman issued the highest emergency designation his company has ever used.

But benchmarks obscure the deeper shift.

Gemini is growing 3x faster than ChatGPT. Users now spend more time per session with Gemini despite ChatGPT having higher user counts. The engagement advantage has inverted.

Here is what Wall Street has not priced in:

OpenAI does not own a single data center. Oracle provides compute. Crusoe builds campuses. JPMorgan finances facilities. Nvidia supplies chips. OpenAI orchestrates. It does not own.

Google designs its own TPUs, operates its own data centers, funds AI from $300 billion in annual revenue, and embeds Gemini into 3 billion Chrome browsers and 3 billion Android devices.

The structural asymmetry is existential.

Meanwhile, Anthropic grew from $1 billion to $5 billion revenue in eight months. Enterprise customers pay $15 per million tokens for Claude while GPT costs $1.25. The reliability premium is real.

The talent exodus accelerates. Mira Murati’s Thinking Machines raised $2 billion, now approaching $50 billion valuation. Seven of her first 29 hires came directly from OpenAI.

The capability era rewarded the best model. The reliability era rewards infrastructure ownership, distribution embeddedness, and enterprise trust.

OpenAI built a $500 billion valuation on capability leadership.

That leadership is no longer defensible.

The Code Red is not a crisis response.

It is an admission that the rules have permanently changed.

Read the full deep dive article here - open.substack.com/pub/shanakaans…


Arsec~:) reposted

Introduction to Compilers and Language Design by Prof. Douglas Thain

dthain.github.io/books/compiler/


Arsec~:) reposted

🚨 DeepSeek just did something wild.

They built an OCR system that compresses long text into vision tokens, literally turning paragraphs into pixels.

Their model, DeepSeek-OCR, achieves 97% decoding precision at 10× compression and still manages 60% accuracy even at 20×. That means one image can represent entire documents using a fraction of the tokens an LLM would need.

Even crazier? It beats GOT-OCR2.0 and MinerU2.0 while using up to 60× fewer tokens and can process 200K+ pages/day on a single A100.

This could solve one of AI’s biggest problems: long-context inefficiency.

Instead of paying more for longer sequences, models might soon see text instead of reading it.

The future of context compression might not be textual at all.

It might be optical 👁️

github.com/deepseek-ai/DeepSeek-OCR
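A back-of-the-envelope sketch of what the quoted figures imply. It assumes "10× compression" means ten text tokens are represented by one vision token, and it reads the precision and accuracy figures as the fraction of text tokens recovered. Both are interpretations, not definitions from the post. The 1,000-token document and the function names are hypothetical.

```python
import math

def vision_tokens_needed(text_tokens, compression_ratio):
    """Vision tokens needed when `compression_ratio` text tokens map to one vision token."""
    return math.ceil(text_tokens / compression_ratio)

def pages_per_second(pages_per_day):
    """Convert a daily page throughput into pages per second."""
    return pages_per_day / (24 * 60 * 60)

# Hypothetical document length, chosen only for illustration.
doc_text_tokens = 1000

# (compression ratio, accuracy) pairs quoted in the post.
for ratio, accuracy in [(10, 0.97), (20, 0.60)]:
    tokens = vision_tokens_needed(doc_text_tokens, ratio)
    # Assumption: accuracy is read as the fraction of text tokens recovered.
    recovered = round(doc_text_tokens * accuracy)
    print(f"{ratio}x: {tokens} vision tokens, ~{recovered} of {doc_text_tokens} text tokens recovered")

# Throughput figure quoted in the post: 200K pages/day on a single A100.
print(f"{pages_per_second(200_000):.2f} pages/s on one GPU")
```

The trade-off shows up directly: doubling the compression halves the token budget, but by the post's own numbers it costs well over a third of the recovered text.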
