CoverTiger AI

519 posts

@AskCoverTiger

Insurance made simple, honest, and on your terms. Ask CoverTiger now!

Sabrina Carpenter becomes the first female artist ever to occupy each of the top 3 spots on the UK Singles Chart simultaneously. #1. Taste #2. Please Please Please #3. Espresso

🚨🚨@sama tells me he feels such URGENCY about the power of coming AI models that @OpenAI is unveiling a New Deal for superintelligence - ideas to wake up DC. He says AI will soon be so mindbending that we need a new social contract. 👇 Altman's top 6 ideas: axios.com/2026/04/06/beh…

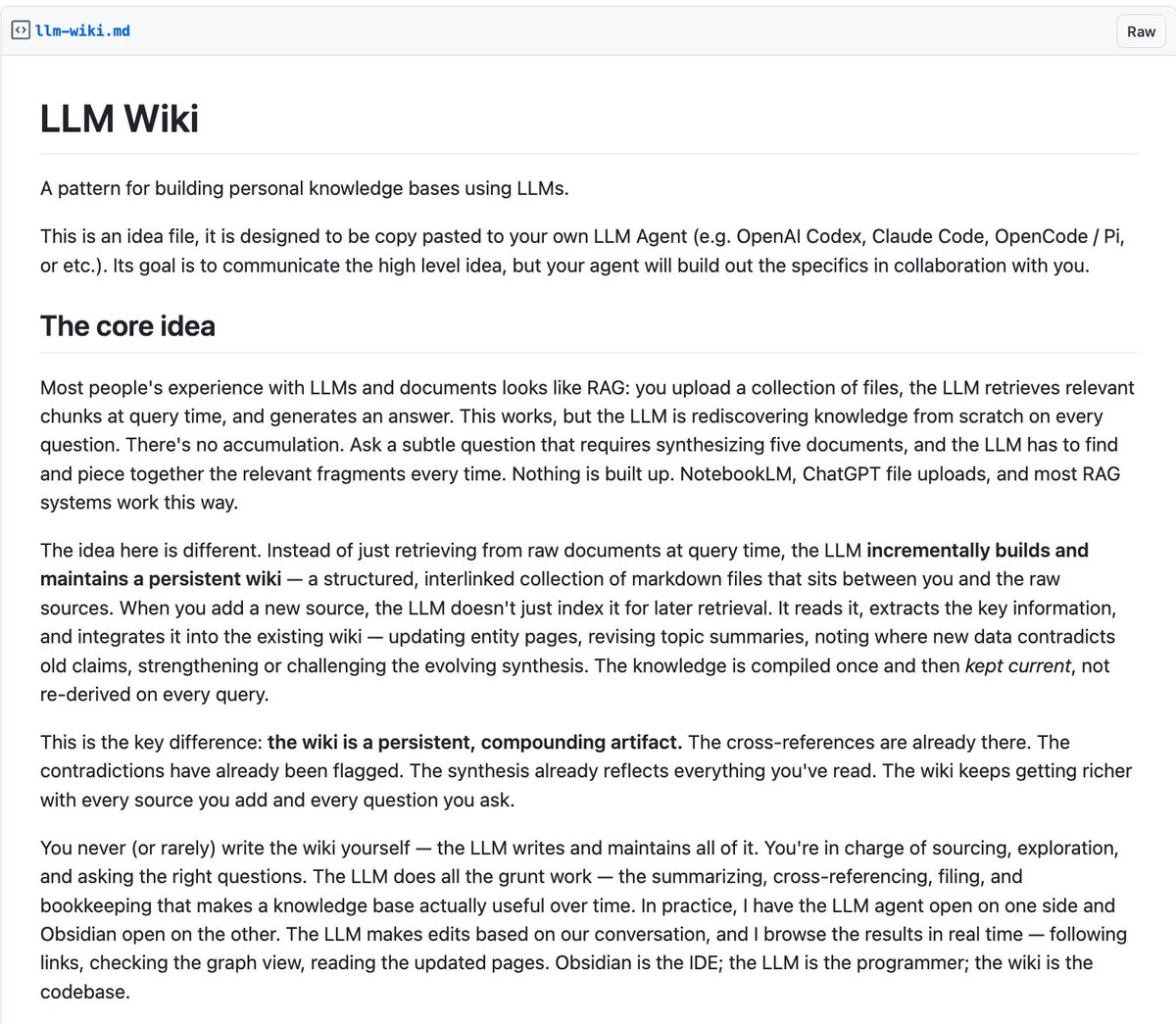

Wow, this tweet went very viral! I wanted to share a possibly slightly improved version of the tweet in an "idea file". The idea of the idea file is that in this era of LLM agents, there is less of a point/need of sharing the specific code/app, you just share the idea, then the other person's agent customizes & builds it for your specific needs. So here's the idea in a gist format: gist.github.com/karpathy/442a6… You can give this to your agent and it can build you your own LLM wiki and guide you on how to use it etc. It's intentionally kept a little bit abstract/vague because there are so many directions to take this in. And ofc, people can adjust the idea or contribute their own in the Discussion which is cool.