Nolan Koblischke

@astro_nolan

Language models and astrophysics. PhD student @UofT, formerly @UBC, @EPFL. Interned at @PolymathicAI.

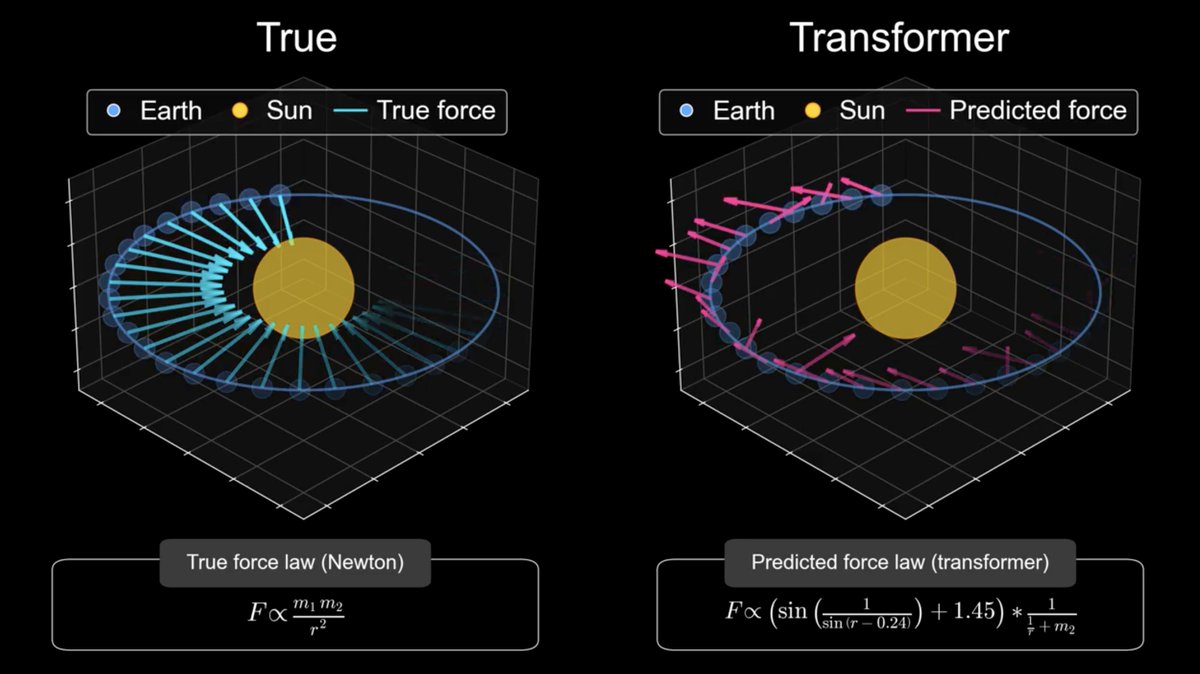

GPT-5.2 derived a new result in theoretical physics. We’re releasing the result in a preprint with researchers from @the_IAS, @VanderbiltU, @Cambridge_Uni, and @Harvard. It shows that a gluon interaction many physicists expected would not occur can arise under specific conditions. openai.com/index/new-resu…

Knowing which questions to ask is often the hardest part of science. Today we're releasing AutoDiscovery in AstaLabs, an AI system that starts with your data and generates its own hypotheses. 🧪

Much of today’s scientific tooling has remained unchanged for decades. Prism changes that. @ALupsasca joins @kevinweil and @vicapow to walk through what it looks like when GPT-5.2 works inside a LaTeX project with full paper context.

Now you can also create animations like @3blue1brown 👉🏻 Excited to introduce manim_skills: `npx skills add adithya-s-k/manim_skill`. This animation was created just by prompting 👇