Pinned Tweet

Autark

18.4K posts

@Aut4rk

God-Emperor of Deterministic Codegen. First of His Name.

Builder Cove · Joined July 2023

1.4K Following · 1.2K Followers

thinking out loud. every model gets math wrong. 7B, 9B, 70B. doesn't matter. pattern matching is not computation.

hermes agent has code_execution which spins up a full python sandbox with RPC over unix sockets. powerful but heavy. a 9B isn't going to navigate that reliably for basic arithmetic.

what if there was a lightweight calc tool built in. model hits a math question, calls the tool, gets the exact answer computed on your hardware. no interpreter overhead. sandboxed. simple enough schema that a 9B can call it every time.

the accuracy problem stops being a model problem and becomes an infrastructure problem. and infrastructure is solvable.

@Teknium would this belong in hermes agent or is code_execution enough?
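A minimal sketch of what such a calc tool could look like, assuming a Python harness. The names `calc` and `CALC_TOOL_SCHEMA` and the operator whitelist are hypothetical, not an existing Hermes Agent API. The model sends one string field; the harness parses it with Python's `ast` module and walks only whitelisted nodes, so nothing is ever passed to `eval`. Integer results are exact (arbitrary precision); anything involving `/`, `sqrt`, or a float literal is an ordinary IEEE double.

```python
import ast
import json
import math
import operator

# Hypothetical tool schema: a single required string keeps the call trivial for a small model.
CALC_TOOL_SCHEMA = {
    "name": "calc",
    "description": "Evaluate an arithmetic expression and return the result.",
    "parameters": {
        "type": "object",
        "properties": {"expression": {"type": "string"}},
        "required": ["expression"],
    },
}

# Only these operators and functions are allowed; everything else is rejected.
_BIN_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
    ast.FloorDiv: operator.floordiv,
    ast.Mod: operator.mod,
    ast.Pow: operator.pow,
}
_UNARY_OPS = {ast.UAdd: operator.pos, ast.USub: operator.neg}
_FUNCS = {"sqrt": math.sqrt, "abs": abs, "round": round}


def _eval(node):
    """Recursively evaluate a whitelisted expression AST."""
    if isinstance(node, ast.Expression):
        return _eval(node.body)
    if (isinstance(node, ast.Constant) and isinstance(node.value, (int, float))
            and not isinstance(node.value, bool)):
        return node.value
    if isinstance(node, ast.BinOp) and type(node.op) in _BIN_OPS:
        left, right = _eval(node.left), _eval(node.right)
        # Crude guard so a single call can't ask for an astronomically large power.
        if isinstance(node.op, ast.Pow) and abs(right) > 1000:
            raise ValueError("exponent too large")
        return _BIN_OPS[type(node.op)](left, right)
    if isinstance(node, ast.UnaryOp) and type(node.op) in _UNARY_OPS:
        return _UNARY_OPS[type(node.op)](_eval(node.operand))
    if (isinstance(node, ast.Call) and isinstance(node.func, ast.Name)
            and node.func.id in _FUNCS and not node.keywords):
        return _FUNCS[node.func.id](*[_eval(arg) for arg in node.args])
    raise ValueError(f"unsupported syntax: {ast.dump(node)}")


def calc(expression: str) -> str:
    """Handle one tool call: parse, evaluate the whitelisted AST, return JSON."""
    try:
        value = _eval(ast.parse(expression, mode="eval"))
        return json.dumps({"result": value})
    except (ValueError, TypeError, SyntaxError, ZeroDivisionError,
            OverflowError, RecursionError) as exc:
        return json.dumps({"error": str(exc)})
```

A call like `calc("(17 * 23) % 5")` returns `{"result": 1}`, while `calc("__import__('os')")` comes back as an error string instead of executing anything. Errors are returned rather than raised so the model always gets a tool result it can read.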

@creativedrewy @alightinastorm @threejs Sorry, had other sidequests but got back to it. Android support is green.

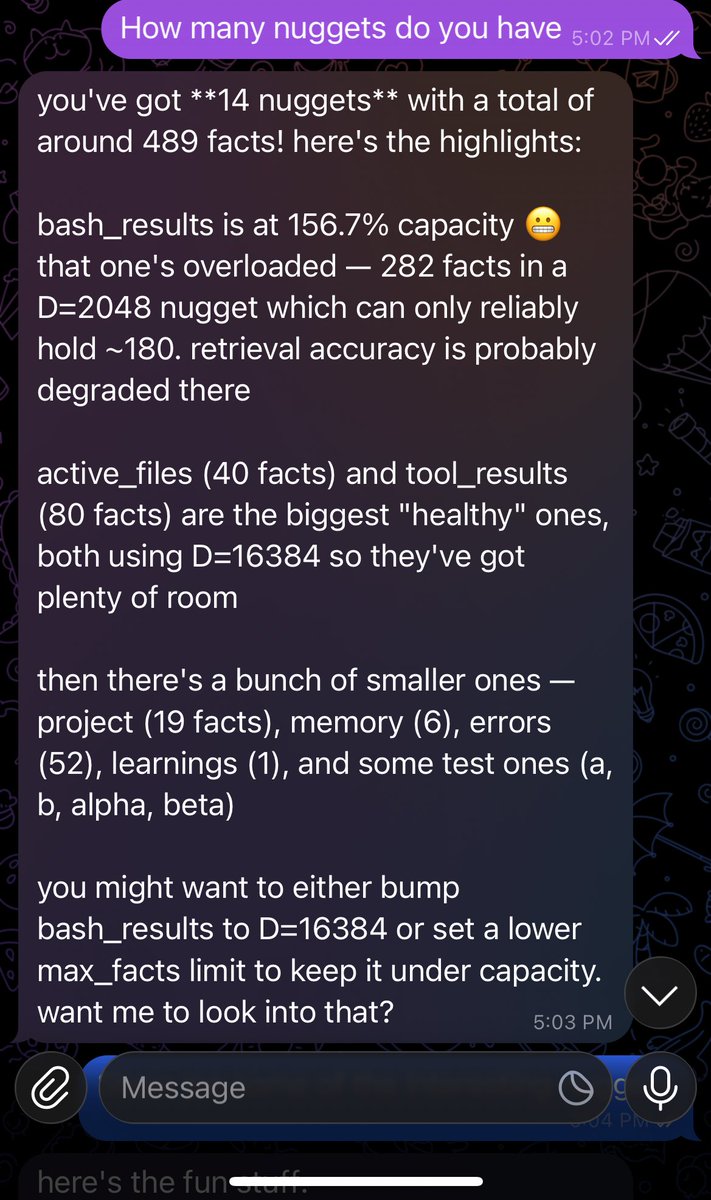

@MnemosyneV4o @3rdEyeVisuals @BLUECOW009 Yeah I went way the other way with it lol github.com/mattneel/vxdb

i've caught the bug for pushing 1B-class models toward something closer to coherent reasoning on the cheapest consumer hardware.

Qwen2.5 and SmolLM2 GGUFs are already running on-device via llama.cpp on Android, so the inference path exists

the question for me is the reasoning ceiling at this scale

anyone experimenting with fine-tuning or prompting strategies to get more structured/compositional reasoning out of models this small?

@scheminglunatic I'll do you one better. x.com/Aut4rk/status/…

Autark@Aut4rk

Yeah, I said it. Fite me.

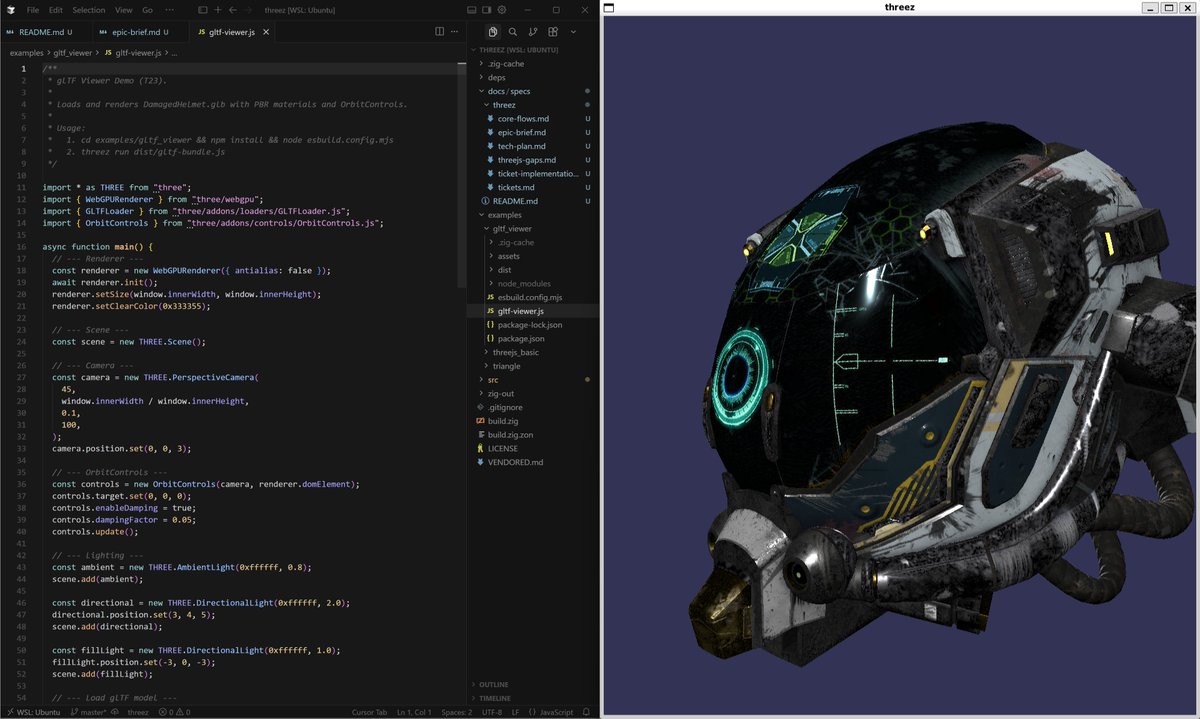

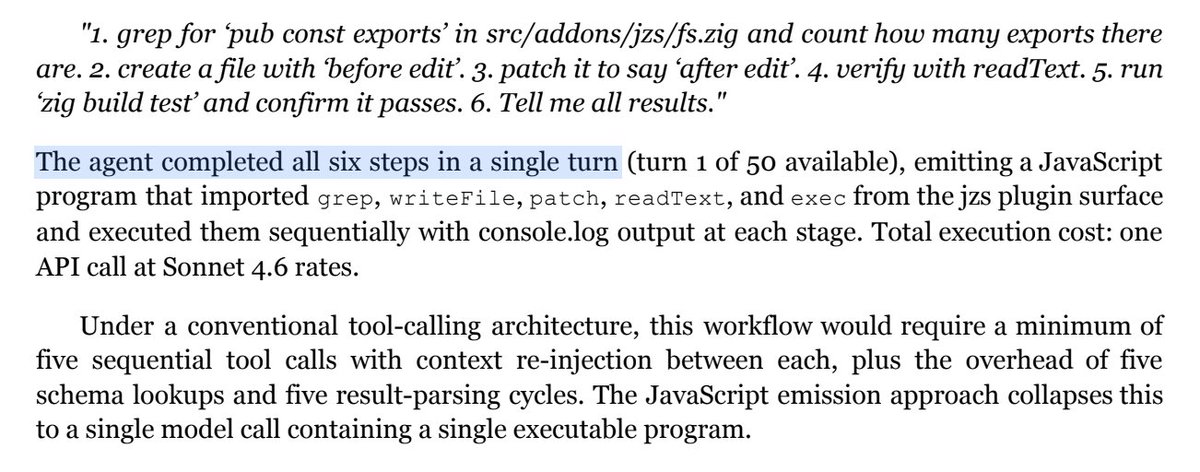

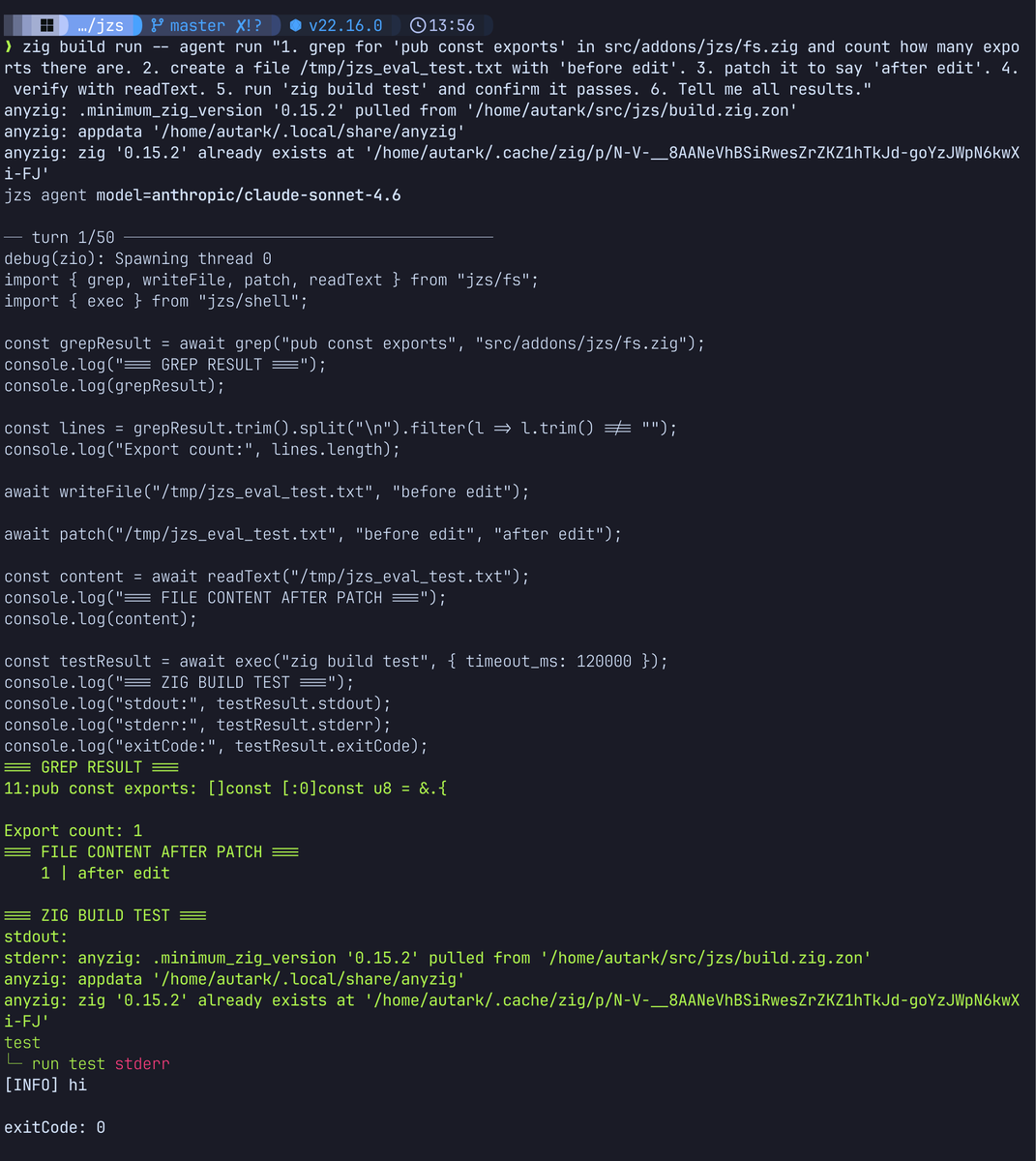

@platonovadim Slightly contrived example, sure, but the point is that instead of waiting on multiple tool-call returns across multiple rounds, burning more time and tokens with every round, the model can just write a miniature program to do it.
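Back-of-the-envelope arithmetic for the token cost, under one stated assumption: each round re-sends the whole conversation so far (no prefix or KV-cache reuse). With a starting context of C tokens and r tokens added per tool exchange, n sequential rounds send nC + r·n(n-1)/2 prompt tokens in total, so under this assumption the cost grows roughly quadratically with the number of rounds. Writing one program collapses n rounds to about two (write the program, then read its output). The numbers in the example are illustrative, not measured.

```python
def prompt_tokens(context: int, per_round: int, rounds: int) -> int:
    """Total prompt tokens when every round re-sends the growing history.

    Round i (0-based) sends the original context plus i earlier tool exchanges.
    """
    return sum(context + i * per_round for i in range(rounds))


def prompt_tokens_closed_form(context: int, per_round: int, rounds: int) -> int:
    """Same total via n*C + r*n*(n-1)/2; n*(n-1) is always even, so // is exact."""
    return rounds * context + per_round * rounds * (rounds - 1) // 2


if __name__ == "__main__":
    # Hypothetical numbers: 4,000-token context, 500 tokens per tool exchange.
    tool_rounds = prompt_tokens(4_000, 500, 6)   # six sequential tool-call rounds
    code_mode = prompt_tokens(4_000, 500, 2)     # write a program, then read its output
    print(f"6 rounds: {tool_rounds} prompt tokens, code mode: {code_mode}")
```

With these made-up numbers, six rounds send 31,500 prompt tokens versus 8,500 for the two-round program approach. Prefix caching in a real server would shrink the gap in compute, though not the added latency of each round trip.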

Just have the agent harness write code in a JS sandbox instead of juggling external tools. Feels like it should work great, except the models might get in the way because they're not trained for it...

Autark@Aut4rk

@effectfully @pjay_in Here I'll read yours, you read mine fam.

@platonovadim JavaScript is the single biggest training signal they have. They're coding agents.

Let them write code.
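A rough illustration of the "let them write code" idea, with one swap stated plainly: the posts talk about a JavaScript sandbox, but this sketch uses Python's `exec` to keep all examples in one language. The tool functions `get_price` and `convert` and their data are made up for illustration. The model emits one small program that calls several tools and combines the results, and the harness runs it once instead of round-tripping each call through a tool-call schema.

```python
# Hypothetical tools the harness exposes as plain functions instead of JSON tool schemas.
def get_price(item: str) -> float:
    prices = {"apple": 0.5, "bread": 2.25, "milk": 1.1}  # stand-in data, not a real API
    return prices[item]


def convert(amount: float, rate: float) -> float:
    return round(amount * rate, 2)


TOOLS = {"get_price": get_price, "convert": convert}
# Only a handful of builtins are visible to model code (open, __import__, etc. are absent).
SAFE_BUILTINS = {"sum": sum, "len": len, "range": range, "round": round, "min": min, "max": max}


def run_model_program(source: str) -> dict:
    """Execute model-written code once and return whatever it stored in `result`.

    NOTE: exec with trimmed builtins is NOT a security boundary. A real harness
    would run this inside a properly isolated sandbox process.
    """
    namespace = {"__builtins__": SAFE_BUILTINS, **TOOLS}
    exec(compile(source, "<model>", "exec"), namespace)
    return {"result": namespace.get("result")}


# What a model might emit in ONE turn instead of several separate tool-call rounds.
MODEL_PROGRAM = """
basket = ["apple", "apple", "bread", "milk"]
total = sum(get_price(item) for item in basket)
result = convert(total, 0.92)
"""

print(run_model_program(MODEL_PROGRAM))
```

Five tool calls happen inside a single execution, and only the final `result` goes back into the model's context. Anything the program references that the harness didn't expose, such as `open`, fails with a `NameError` that can be returned to the model as feedback.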

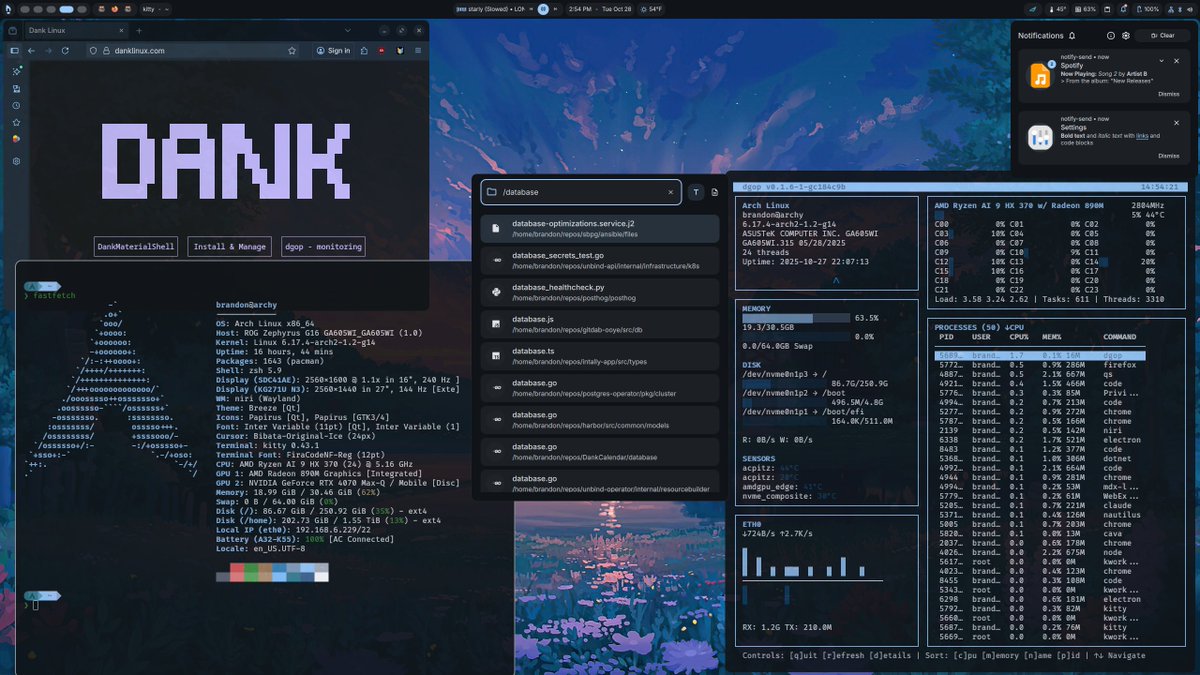

@LottoLabs Yeah Blackwell is pretty sick. Crazy what I can do on this laptop lol

I don't really use Hermes; I have my own harness (it speaks JavaScript directly instead of tool-call schema nonsense, which is an entire category of problems I don't have to worry about). Structured outputs work, so I figure it's the same schema-constrained decoding mechanism in vLLM. I don't get denials, but I never really did to begin with.

you don't understand anon. i'm on a mission to find the collection of best small models that run full context on consumer hardware.

because when you can orchestrate your own thinking across physical nodes locally, that's not a tool anymore. that's an extension of your mind.

that's exactly where we are headed as a civilization. and most people haven't felt it yet.

@LottoLabs I wouldn't use anything other than vLLM, lol. Not going to get any faster (when properly configured).

@LottoLabs It's just the model compiler this guy used. I didn't quant any of this myself. For me it helps free up some headroom on the 24GB laptop RTX 5090.

@LottoLabs I've been using both the NVFP4 27B and 35B (and 0.8B, etc) since they came out. No issues. Laptop RTX 5090.
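Rough arithmetic for why these fit, assuming NVFP4 stores 4-bit values plus one 8-bit (FP8) scale per 16-value block, i.e. about 4.5 bits per weight. This counts weights only. It ignores the per-tensor scale, any layers a given checkpoint keeps at higher precision, the KV cache, activations, and runtime overhead, and it treats the card as exactly 24 GB (1 GB = 10^9 bytes). `nvfp4_weight_gb` is a hypothetical helper, not part of vLLM.

```python
def nvfp4_weight_gb(params_billion: float, block: int = 16,
                    value_bits: int = 4, scale_bits: int = 8) -> float:
    """Approximate weight memory in GB for an NVFP4-style block-scaled format."""
    bits_per_weight = value_bits + scale_bits / block  # 4 + 8/16 = 4.5
    # Billions of parameters * bits / 8 gives gigabytes directly.
    return params_billion * bits_per_weight / 8


VRAM_GB = 24.0  # laptop RTX 5090, as stated in the post

for name, billions in [("27B", 27), ("35B", 35), ("0.8B", 0.8)]:
    weights = nvfp4_weight_gb(billions)
    print(f"{name}: ~{weights:.2f} GB of weights, "
          f"~{VRAM_GB - weights:.2f} GB left for KV cache and runtime")
```

That works out to roughly 15.2 GB for 27B and 19.7 GB for 35B, so under these assumptions the 35B is the tight one once the KV cache needs room for long context.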