AI Authority

3.6K posts

@Authority_AI

Empowering businesses to integrate AI. We offer AI audits, strategy, tools, training & support to boost efficiency & stay ahead of the curve.

Rumor has it that Q-Star figured out a way to break encryption, and that OpenAI tried to warn the NSA about it. Here’s a Google Doc link to a compilation of (allegedly) leaked documents and compelling analysis: docs.google.com/document/u/0/d…

@stubailey900 @bindureddy @GaryMarcus Actually, we should be thrilled with a 3% error rate among doctors. The actual misdiagnosis rate is between 10% and 15%. It goes to show that our intuition about the significance of a 3% error rate is completely off. ncbi.nlm.nih.gov/books/NBK49995…

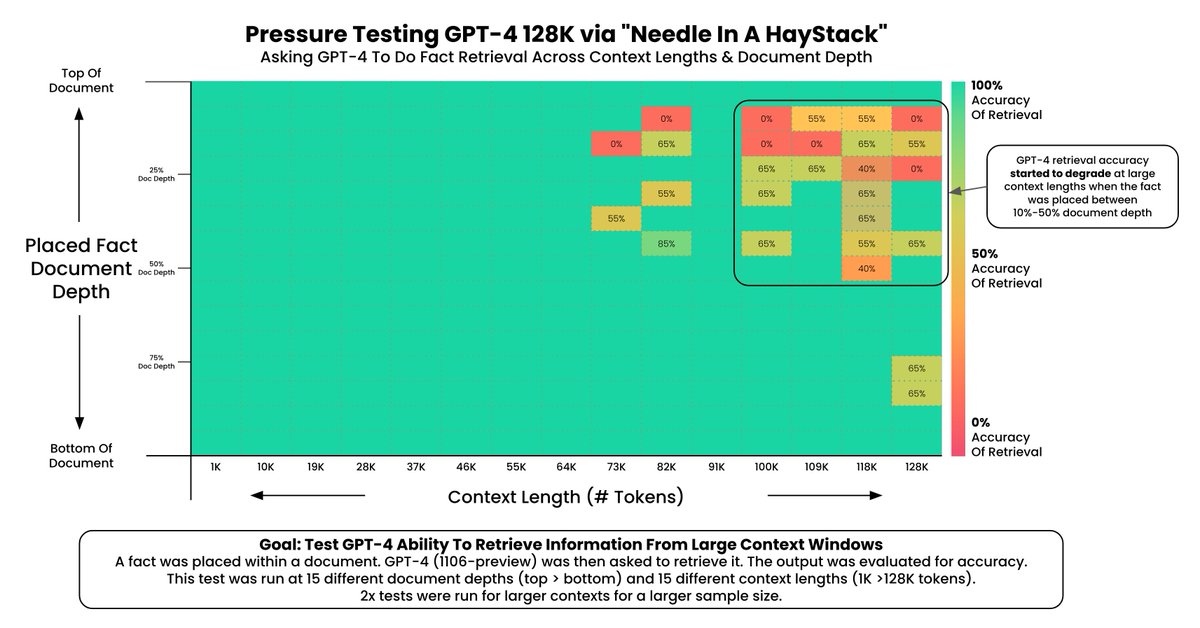

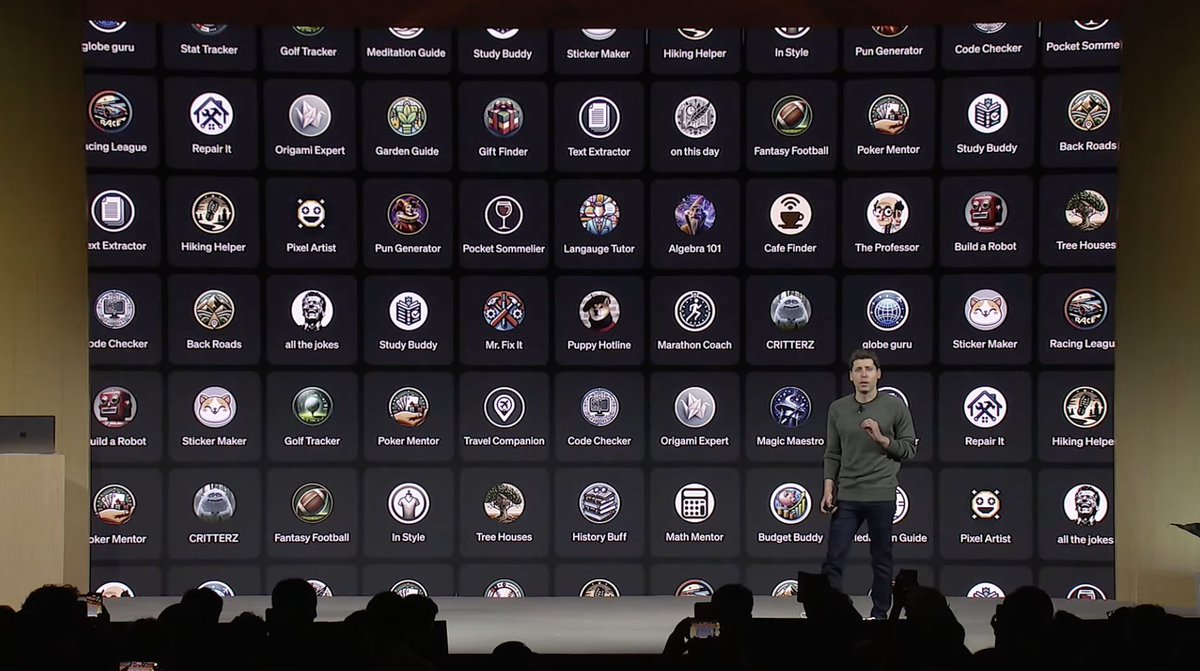

OpenAI confirms GPT-4 Turbo.