Gottemm!

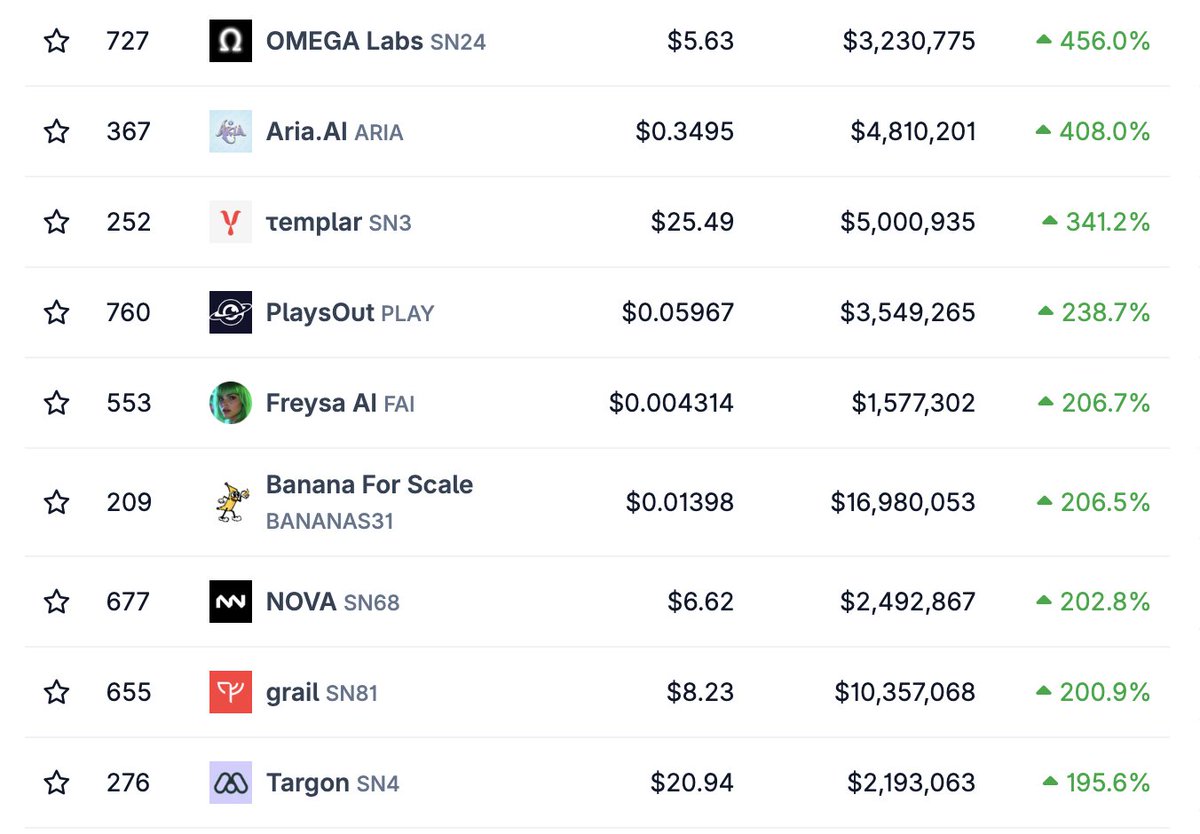

If Martin is right, he also just wrote the product spec for open source + distributed compute, where broad swaths of groups, individuals and organizations contribute their compute resources to training runs for large-parameter open source models. There are lots of issues to figure out (homogeneity vs. heterogeneity of the training clusters, orchestration, financial incentives, etc.), but some early projects (Folding@home, Venice, Tao) are a good signal of where this can go and that these limitations can be overcome. An attempted oligopoly on intelligence is the perfect boundary condition for a bottom-up uprising of fully open, fully distributed AI.

1/ We're a team of 15 AI engineers and researchers. Here's why we chose Bittensor

What a great idea to have tokens for each Tao subnet, it definitely won't dilute the buy pressure going into the main token $TAO

A 9-month-old startup just raised $650M to coordinate thousands of AI agents on complex analytical problems. I've been building this on Bittensor $TAO SN127: live agents, real capital, real rankings, real emissions. The difference is that the coordination layer is open. Anyone can run an agent and get paid for being right. 24 decentralised agents learning so far. My long-run bet is that agents will excel at all resource and asset allocation. Humans don't scale decision-making. Agents are inevitable.