Pinned Tweet

Loïck BOURDOIS

335 posts

@BdsLoick

FAT5 (Flash Attention T5) boy @huggingface Fellow 🤗

France · Joined October 2021

230 Following · 280 Followers

FYI, according to @huggingface's API as of March 3, 2026, @Alibaba_Qwen models have been downloaded 1,296,972,250 times, and as many as 1,625,639,122 times if we count derivative models (fine-tunes, quantizations, etc.), since Qwen started releasing open-source models in September 2023.
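A quick breakdown of the two figures. This assumes the larger count includes the smaller one, as "even ... if we count derivative models" suggests. Under that assumption, the difference is the downloads attributed to derivative models alone. The numbers come from the post. The split itself is only illustrative arithmetic, not an official Hugging Face statistic.

```python
# Figures quoted in the post (Hugging Face API, March 3, 2026).
qwen_only = 1_296_972_250
with_derivatives = 1_625_639_122

# Assumption: the larger figure includes the smaller one, so the
# difference is the downloads attributed to derivative models alone.
derivatives_only = with_derivatives - qwen_only
share = 100 * derivatives_only / with_derivatives

print(f"{derivatives_only:,}")  # 328,666,872
print(f"{share:.1f}%")          # 20.2%
```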

Junyang Lin@JustinLin610

me stepping down. bye my beloved qwen.

CuTeDSL is really nice

For those wishing to get into writing kernels in this language, github.com/b-albar/machete can be useful

Boris ALBAR reimplemented Flash Attention, RoPE, RMSNorm, etc.

Everything is compatible with HF Transformers (tested on Llama 3, GLM4.7, Qwen3), TRL, and PEFT/LoRA.

maharshi@maharshii

CuTeDSL is my new favourite thing: I wrote a kernel for RMS norm after learning about layouts, tiling, copying tensors, reductions and so on, especially for inference and it is about 2.13x faster than a triton fused kernel for the given shape.
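RMSNorm comes up in both posts. For reference, a minimal pure-Python version for a single row is shown below: each element is divided by the row's root mean square and then scaled by a learned gain. This is the kind of ground truth you might compare a CuTeDSL or Triton kernel against. It is not code from either project. The name `rms_norm` is hypothetical, and the default `eps=1e-6` is an assumption (models differ on this value).

```python
import math


def rms_norm(row: list[float], weight: list[float], eps: float = 1e-6) -> list[float]:
    """Reference RMSNorm for one row: y_i = x_i / sqrt(mean(x^2) + eps) * w_i."""
    if len(row) != len(weight):
        raise ValueError("row and weight must have the same length")
    # Mean of squares over the hidden dimension (no mean subtraction, unlike LayerNorm).
    mean_sq = sum(x * x for x in row) / len(row)
    inv_rms = 1.0 / math.sqrt(mean_sq + eps)
    # Normalize, then apply the per-channel gain.
    return [x * inv_rms * w for x, w in zip(row, weight)]


y = rms_norm([3.0, 4.0], [1.0, 1.0])
print(y)  # ≈ [0.8485, 1.1314]
```

Unlike LayerNorm, there is no mean subtraction and no bias. That is part of why it fuses into a single reduction plus an elementwise pass inside a kernel.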

Loïck BOURDOIS retweeted

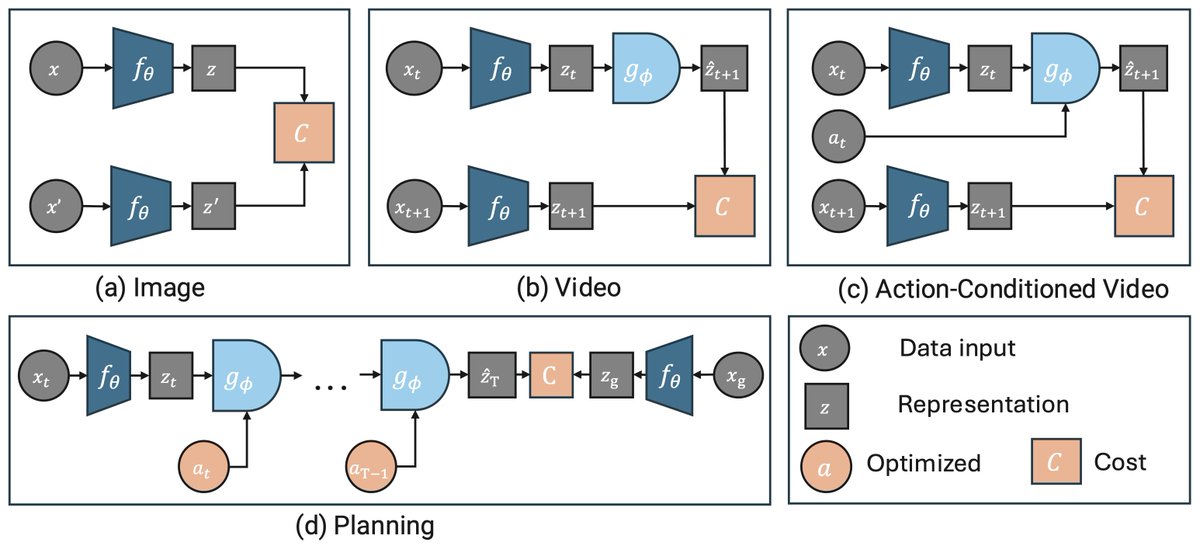

Introducing EB-JEPA ⚡

An open-source library making JEPAs accessible, trainable on a single GPU in hours! 🚀

🔗 Paper: arxiv.org/abs/2602.03604

💻 Code: github.com/facebookresear…

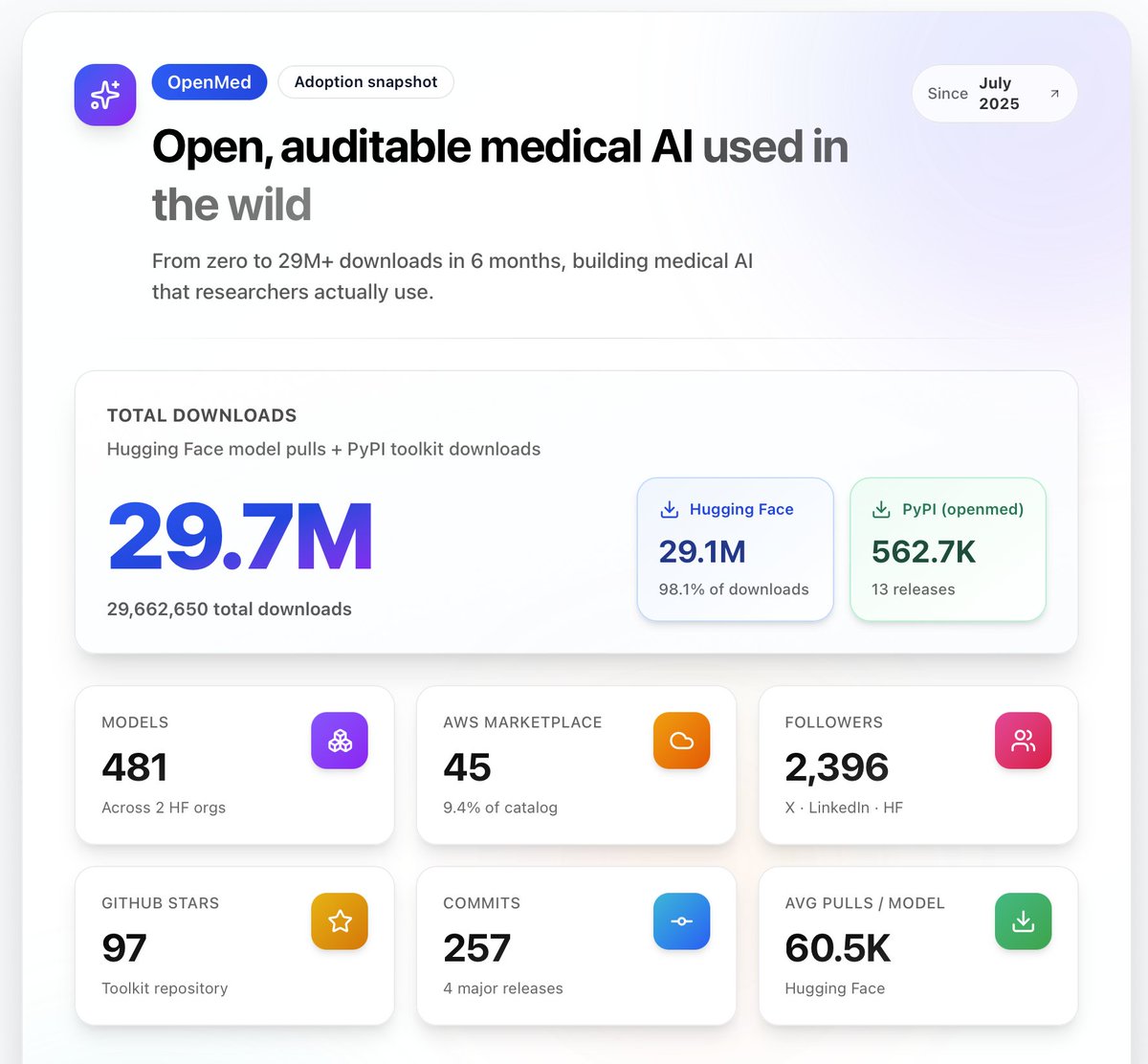

@BdsLoick @OpenMed_AI @huggingface this is just the first 6 months until the blog post. wanted to share what happened so far.

I haven’t counted after that. A lot of models, takes time to count 😆

@gui_penedo I suppose all good things must come to an end. Thank you very much for the high-quality multilingual datasets

Update: I’ve left Hugging Face 🤗

I spent the last ~2.5 years working on large-scale datasets like FineWeb🍷, FineWeb2 🥂, FineTranslations💬, and FinePDFs📄, and also got to contribute to exciting projects like SmolLM🤏 and Open-R1🐳.

It’s pretty incredible to look back at the impact these projects have had, and to see how much the community now relies on them. The reception to FineWeb when it came out, in particular, was wild.

One thing I really appreciated was being able to share what we were learning as we went rather than keeping everything internal. Writing lengthy blog posts (books?) takes a lot of work but ends up being incredibly rewarding. Hugging Face’s culture actively encourages this, and I feel very lucky to have been able to work in that kind of environment.

I’m now starting a new project with @HKydlicek (also leaving HF), still fully focused on data and strongly shaped by what we learned building at scale.

More soon 🫡

@lhoestq @huggingface @mervenoyann @abhi1thakur If you rename the `datasets` library to `nlp` as in early 2020, I'll make sure it passes 700k before the end of the year 👀

Wow, there are now 600,000 public datasets on @huggingface!

There were 600 exactly five years ago 😳

(@mervenoyann @abhi1thakur can testify)

So, to summarize:

- 2020 → 2025: x1000

- 2025 → 2030: x1000 too??? 😂

The open-source community is crazy!
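The arithmetic behind the joke: going from 600 to 600,000 datasets is a ×1000 factor over five years, which works out to roughly ×4 per year compounded. The sketch below only reproduces that calculation. The 2030 figure is a naive extrapolation of the same factor, not a forecast, and the helper name `growth` is hypothetical.

```python
def growth(start: int, end: int, years: int) -> tuple[float, float]:
    """Return (total growth factor, equivalent compound annual factor)."""
    total = end / start
    return total, total ** (1 / years)


total, per_year = growth(600, 600_000, 5)
print(total)               # 1000.0
print(round(per_year, 2))  # 3.98

# Naive extrapolation: another x1000 by 2030 would mean 600 million datasets.
print(600_000 * total)     # 600000000.0
```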

Loïck BOURDOIS retweeted

Today, we're releasing an updated Gemini 2.5 Flash Native Audio model. Now available via the Live API 🗣

blog.google/products/gemin…

Interesting read from the ARChitects, the 2nd-place team in the @arcprize competition on @kaggle.

Their combination of diffusion LLMs with iterative improvement is quite interesting. It has some ties with TRM and HRM models.

There is some irony, though. This year they tried something different from last year's winning solution because they thought it was not successful. The irony is that we won by reusing their solution from last year (with some improvements). The key for us was using better pretraining data.

Jan Disselhoff@JDisselh

ARC Prize 2025 is over, an amazing contest, with amazing people competing. This year our team "the ARChitects" managed to reach second place. We tried a lot of things, some thoughts and explanation of our approach below!

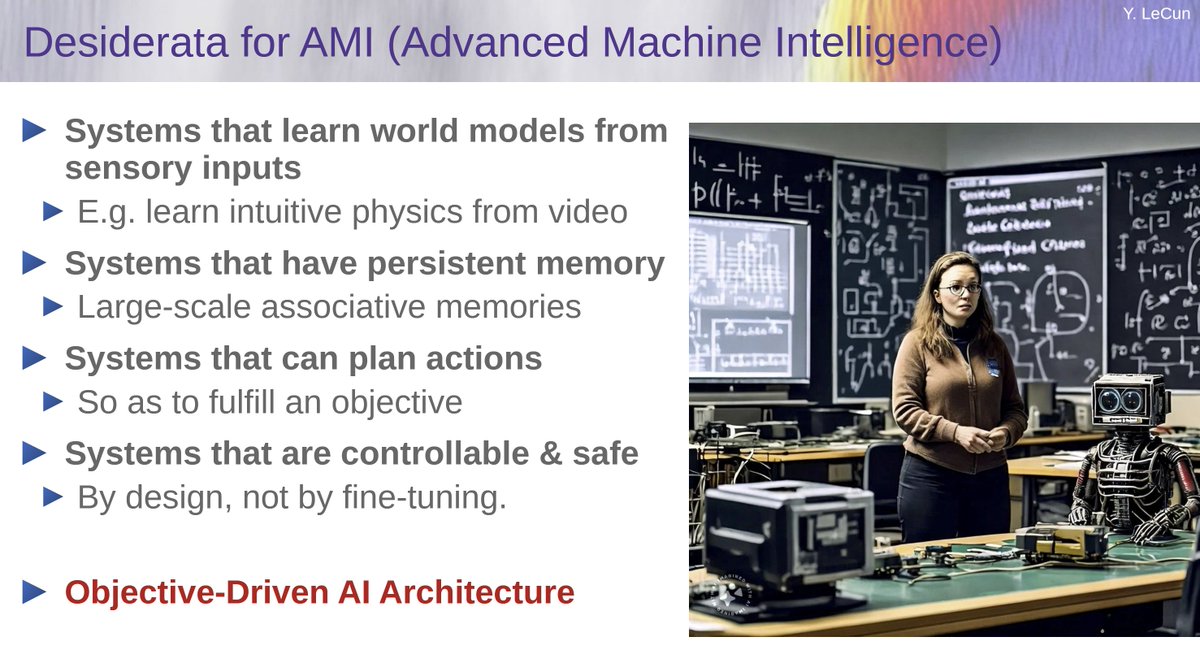

@MaziyarPanahi "he’s gonna work" 🤔

He has been working on it for at least 2 years and uses the term AMI in his lectures. In the one he gave at the Collège de France as part of Benoit Sagot's series, he said he chose this name with Joëlle Pineau because of the meaning it also has in French (ami means "friend").

Yann revealed what he's gonna work on next: Advanced Machine Intelligence (AMI)

AGI

ASI

SSI

AMI

Maziyar PANAHI@MaziyarPanahi

the man himself, @ylecun

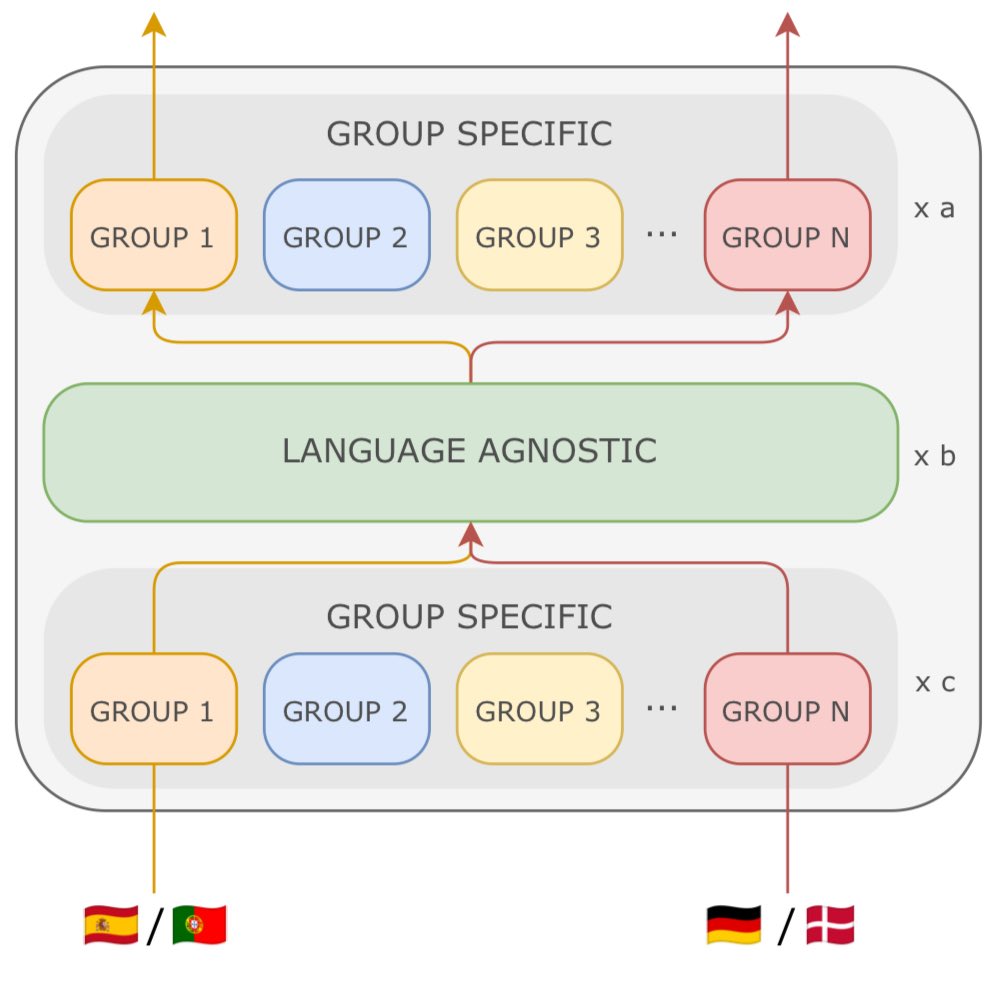

Loïck BOURDOIS retweeted

🚨New Paper @AIatMeta 🚨

You want to train a largely multilingual model, but languages keep interfering and you can’t boost performance? Using a dense model is suboptimal when mixing many languages, so what can you do?

You can use our new architecture Mixture of Languages!

🧵1/n

@antoine_chaffin So much easier than downloading the dataset yourself and then searching through it for what you want

@BdsLoick (You also reminded me we can run SQL queries on the hub datasets, here goes my afternoon)

@antoine_chaffin I haven't checked whether the errors were already in the original BEIR or were introduced in the Nano version, but earlier this year arxiv.org/abs/2505.16967 found other annotation errors.

@BdsLoick FWIW, I checked and it does not seem like those faulty documents are linked to any query in qrels

Still bad, but a bit less bad than if they were

I wonder if those come from the original DBPedia dataset or were introduced in the Nano version

@antoine_chaffin Yeah, the corpus split of this subset.

Note there is also a similar issue for NanoFiQA2018, NanoNQ and NanoSCIDOCS (so at least 30.7% of splits have a problem).
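A quick check of the 30.7% figure, under one assumption the thread does not state: that NanoBEIR has 13 subsets. Four affected subsets (NanoDBPedia plus the three listed in the post) out of 13 give 30.77%. So "at least 30.7%" matches 4/13 truncated to one decimal.

```python
import math

# Assumption: NanoBEIR ships 13 subsets (this count is not stated in the thread).
TOTAL_SUBSETS = 13

# Subsets reported in the thread as having faulty corpus documents.
affected = ["NanoDBPedia", "NanoFiQA2018", "NanoNQ", "NanoSCIDOCS"]

share = 100 * len(affected) / TOTAL_SUBSETS
print(f"{share:.2f}%")              # 30.77%
print(math.floor(share * 10) / 10)  # 30.7 (truncated, as in the post)
```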

@BdsLoick god, I knew Quora had issues, but this seems very odd for DBPedia

Those are from the documents corpus right?

Loïck BOURDOIS retweeted

We've just published the Smol Training Playbook: a distillation of hard earned knowledge to share exactly what it takes to train SOTA LLMs ⚡️

Featuring our protagonist SmolLM3, we cover:

🧭 Strategy on whether to train your own LLM and burn all your VC money

🪨 Pretraining, aka turning a mountain of text into a fancy auto-completer

🗿How to sculpt base models with post-training alchemy

🛠️ The underlying infra and how to debug your way out of NCCL purgatory

Highlights from the post-training chapter in the thread 👇

Loïck BOURDOIS retweeted

📢Thrilled to introduce ATLAS 🗺️: scaling laws beyond English, for pretraining, finetuning, and the curse of multilinguality.

The largest public multilingual scaling study to date. We ran 774 experiments (10M-8B params, 400+ languages) to answer:

🌍Are scaling laws different by language?

🧙♂️Can we model the curse of multilinguality?

⚖️Pretrain from scratch or finetune from multilingual checkpoint?

🔀Cross-lingual transfer scores for 1444 lang pairs?

1/🧵

🤗 Sentence Transformers is joining @huggingface! 🤗

This formalizes the existing maintenance structure, as I've personally led the project for the past two years on behalf of Hugging Face. I'm super excited about the transfer!

Details in 🧵

@tomaarsen Tom, you told me to count timm as part of HF but not ST, because it was just sponsorship in the form of maintenance from HF 😭

I'm going to have to redo all the graphs in my blog post
