Pinned Tweet

AlexC

191 posts

@Beeg_Brain

PhD Student@Ecole Centrale 🇫🇷 📩 [email protected]

France · Joined November 2021

196 Following · 107 Followers

@huggingface I was preparing something around Lyon 🇫🇷. I think this comes at the perfect time!

AlexC retweeted

STORM: Slot-based Task-aware Object-centric Representation for robotic Manipulation

Alexandre Chapin (LIRIS), Emmanuel Dellandréa (LIRIS), Liming Chen (LIRIS)

arxiv.org/abs/2601.20381 [𝚌𝚜.𝚁𝙾]

AlexC retweeted

Spotlighting Task-Relevant Features: Object-Centric Representations for Better Generalization in Robotic Manipulation

Alexandre Chapin (LIRIS), Bruno Machado (LIRIS), Emmanuel Dellandréa (LIRIS), Liming Chen (LIRIS)

arxiv.org/abs/2601.21416 [𝚌𝚜.𝚁𝙾]

AlexC retweeted

@RemiCadene Congrats and thank you for the amazing work on LeRobot, it has been a game changer for robotics accessibility! 🙂 Good luck with your new projects, I'm sure something amazing will come out of them 💪

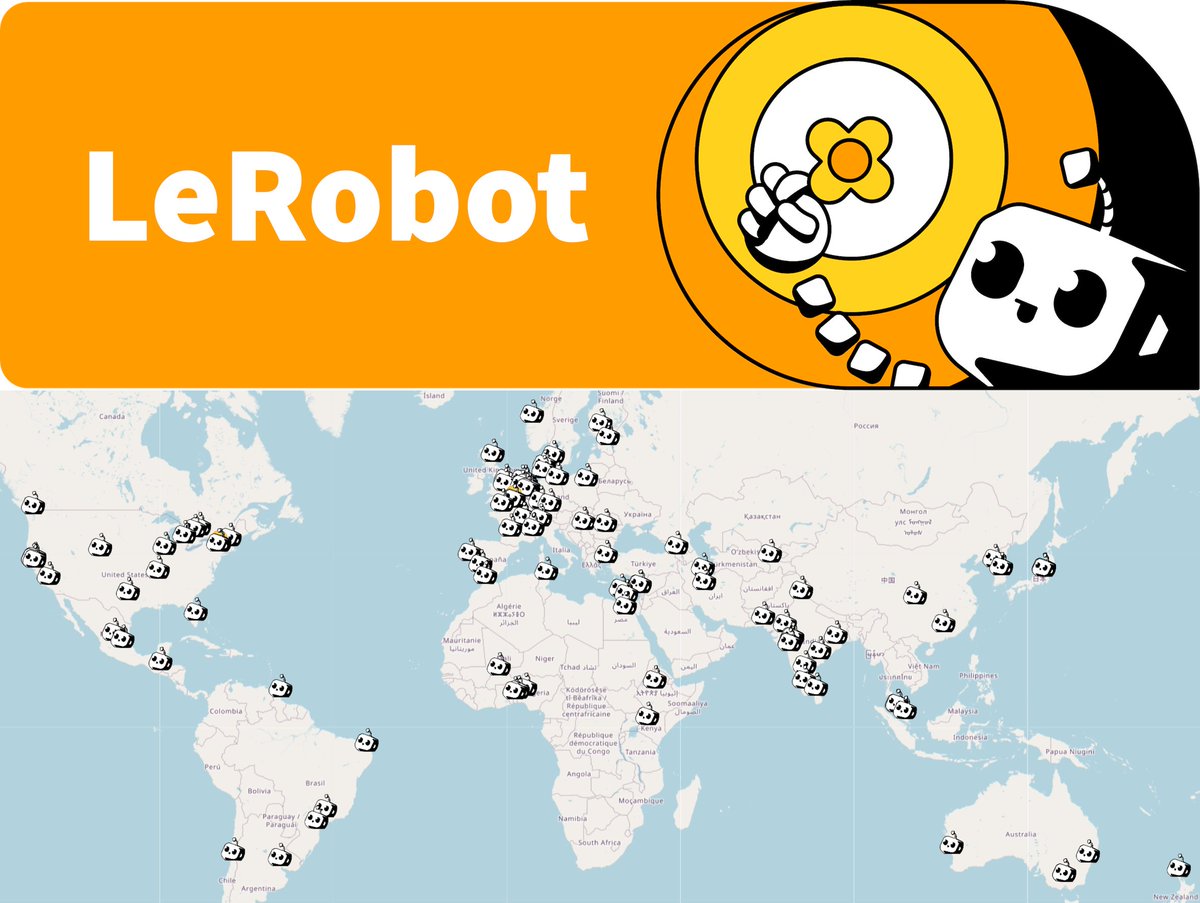

I am starting a venture on top of LeRobot!

We’re at a pivotal time. AI is moving beyond digital to the physical world. Embodied AI will change our surroundings in ways we can barely imagine. This technology holds the potential to empower everyone. It must not be controlled by just a few.

This conviction led me to propose an ambitious open-source AI robotics project to Thom, Clem, and Julien back in 2024. Hugging Face, home to a community of millions of AI builders and a team of experts who brought us transformers, datasets, and the Hugging Face Hub, was the perfect place to launch LeRobot.

I’m incredibly grateful for all the support that allowed me to build LeRobot alongside an amazing team and community. In such a short time, we built one of the most adopted open-source robotics platforms, used by startups, universities, and research labs. It is helping countless people take their first steps in robotics. Together, we’ve even assembled the world’s largest open robotics dataset. And this is only the beginning for LeRobot!

Building on this momentum, I now feel the urgency to start something new on top of LeRobot. It will push the limit of what robots are capable of and commoditize them within society. Like LeRobot, it will start in Paris, leveraging its vibrant international AI scene. Stay tuned!

As LeRobot continues to expand, it’s now in the best possible hands with @AractingiMichel, @pepijn2233 and Steven Palma taking the lead. Watching the team deliver exceptional results over the last weeks has been one of the most rewarding experiences. Their creativity, dedication, and capability to ship fast are proving just how strong the team is today!

I am extremely grateful to the many people who contributed to making LeRobot at Hugging Face and within its powerful community. Many thanks to Thom, Clem, Julien, Simon, Rob, Michel, Pepijn, Steven, Gloria, Adil, Martino, Caroline, Marine, Mishig, Guillaume, Pablo, Lysandre, Arthur, Quentin, Florent, Brigitte, Victor, Marina, Mustafa, Francesco, Jess, Jade, Ville, Leo, Max, Julien, Alexander, Flavien, Raphael, Adina, Tao, Dana, Batu, Olivier, Matthieu, Eugene, Theo, Guilherme, Hynek, Loubna, Clémentine, Merve, Vaibhav, Anna, Jeff, Adrien, Emily, Johanne, Adrien and others. There are too many of you to be all named!

Thanks again and see you soon!!! :)

~ Remi

AlexC retweeted

btw if anyone wants to contribute an impactful piece of code to @LeRobotHF the whole team is fairly swamped rn and I could use some help to ship a way to process 2TB of data quickly! DMs are open :)

AlexC retweeted

LeRobot @LeRobotHF

We just merged LeRobotDataset v3.0 + Streaming 🔥 An important change to our dataset format:

> 📦 Chunked episodes for massive scale (OXE-level)
> 📽️ Efficient video storage + streaming
> ⚡️ Faster loading
> 📈 Unified parquet metadata (no more scattered JSONs)

This makes LeRobot ready for the next wave of large-scale robot learning. Read more about it in our blogpost 👉 huggingface.co/blog/lerobot-d… Open source robotics keeps leveling up!

And this is just the beginning, we’re already working on the next version of the paper with expanded experiments and analysis. Stay tuned! 🚧📑 #lerobot #imitationlearning #robotics #benchmark #visionl

AlexC retweeted

Robotic Manipulation via Imitation Learning: Taxonomy, Evolution, Benchmark, and Challenges. arxiv.org/abs/2508.17449

@DominiqueCAPaul Yeah of course ! (That's actually the main use case of people working with these sensors in my lab)

@Beeg_Brain That's a really good point I hadn't even thought of! Also it would probably help a lot with deformable objects.

@DominiqueCAPaul There are indeed really cool use cases for such technologies, such as models that are more aware of object physics: if you let the gripper "feel" the effect of gravity when grasping something, it can in a way feel the weight of objects and better adapt the way it interacts with them.

@Beeg_Brain Cool! I'm thinking that the mere fact that the robot has feedback on whether it's got contact with the object (vs inferring from pure visual input) could go a long way.
