Ben Eisner

@BenAEisner

ML/Robotics Researcher. Ph.D. Student in Robotics at CMU. Learning for Manipulation.

We’re kicking off the start of our Gemini 2.0 era with Gemini 2.0 Flash, which outperforms 1.5 Pro on key benchmarks at 2X speed (see chart below). I’m especially excited to see the fast progress on coding, with more to come. Developers can try an experimental version in AI Studio and Vertex AI today. It is also available to try in @GeminiApp on the web today, mobile coming soon.

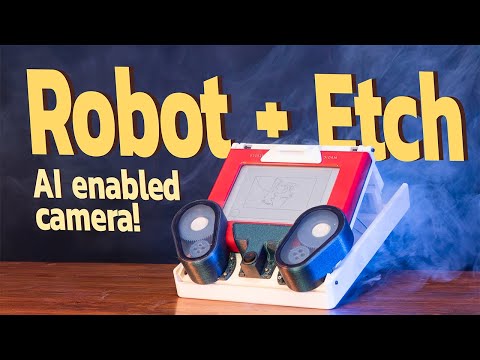

(1/N) How can we get robots to make precise placement predictions when solving rearrangement tasks, just by watching demonstrations? In our ICLR 2024 paper, “Deep SE(3)-Equivariant Geometric Reasoning for Precise Placement Tasks”, we do just that! Paper: arxiv.org/abs/2404.13478