brady 🌴

@bmgentile

🧻shit posts & bad tech takes 🌐 helping decentralize the web at @bonzo_finance 💼 prev. PMM @cloudflare @hedera

JUST IN: 🇺🇸 "Crypto Bros" appear to be dodging reporting sales to IRS, Bloomberg reports.

Found a paper that suggests we may have spent years training agents to become hunters of proxy reward when the more basic thing intelligence craves is not a reward at all, but to not run out of viable futures.

The paper proposes that behavior is best understood as maximizing future action-state path occupancy, which collapses mathematically into a discounted entropy objective. The agent doesn’t necessarily want to GET something, but rather is trying to keep as many meaningful trajectories alive as possible.

The obvious objection is “so it just does random shit? fuck around and find out?” No, this is where it gets pretty beautiful. The agent is variable when variation is cheap and becomes surgically goal-oriented the moment an absorbing state (death, starvation, falling over, etc.) gets close enough to threaten its future path space. Variability is the same drive as goal-directedness, just operating under different constraints.

The demos are kinda wild:

- A cartpole (the classic control task: move a cart to keep a pole from falling) that doesn’t merely balance but dances and swings through a huge range of angles and positions because why not? The whole point is occupying state space, and rigid balance is a voluntarily impoverished life.
- A prey-predator gridworld where the mouse PLAYS with the cat, teasing it and luring it away from the food source before slipping in to eat, taking the clockwise and counterclockwise routes around obstacles roughly equally often. A reward-maximizing agent would collapse to one strategy and exploit it. Here, the agent keeps its behavioral repertoire.
- A quadruped trained with Soft Actor-Critic and ZERO external reward that learns to walk, jump, spin, and stabilize, and then makes a beeline for food only when its internal energy drops low enough that starvation becomes a real threat.

The thing that hit me hardest is the comparison to empowerment and free energy principle (FEP) agents. Both collapse to near-deterministic policies with almost no behavioral variability. Empowerment agents find the highest-empowerment state and exploit it. FEP agents converge to classical reward maximizers. As far as I’m aware, this is the only framework that produces agents you could describe as being “alive.”

The AI implication here is that we undertrain for behavioral repertoire. Most systems hit the benchmark by collapsing onto a narrow attractor basin of good-enough trajectories. They’re competent for sure, but brittle too: one viable plan, executed until the world shifts and leaves them with nothing.

The thing I increasingly want from agents isn’t competence per se, but option-preserving competence. I want agents with the ability to keep multiple viable plans alive and switch between them without catastrophe.

We’ve been so focused on teaching agents what to want that we never stopped to ask what happens if wanting isn’t the point, if the deepest drive isn’t necessarily toward anything, but away from the walls closing in.

paper: nature.com/articles/s4146…
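
To make the "variable when cheap, goal-directed near an absorbing state" claim concrete, here is a toy sketch. It is not the paper's code. It assumes a deterministic 1D chain where state 0 is absorbing (so only action entropy matters and there is no external reward), a discount of 0.9, and two actions; every name and number below is a hypothetical choice for illustration. It solves the discounted action-entropy objective with soft value iteration, where the optimal policy weights each action by the exponential of the discounted value of the state it leads to.

```python
import math

N_STATES = 12        # chain of states 0..11; state 0 is absorbing ("death")
GAMMA = 0.9          # discount factor (illustrative assumption)
LEFT, RIGHT = 0, 1   # the only two actions in a live state


def next_state(s, a):
    """Deterministic move; the right end of the chain is a wall."""
    if a == LEFT:
        return s - 1
    return min(s + 1, N_STATES - 1)


def action_scores(V, s):
    """Discounted value of the successor state for each action."""
    return [GAMMA * V[next_state(s, a)] for a in (LEFT, RIGHT)]


def solve(n_iters=500):
    """Soft value iteration for max discounted action entropy, zero reward."""
    V = [0.0] * N_STATES
    for _ in range(n_iters):
        new_V = [0.0] * N_STATES  # the absorbing state keeps value 0
        for s in range(1, N_STATES):
            q = action_scores(V, s)
            new_V[s] = math.log(math.exp(q[0]) + math.exp(q[1]))
        V = new_V
    # Optimal policy: softmax over the discounted successor values.
    policy = [[0.0, 0.0] for _ in range(N_STATES)]
    for s in range(1, N_STATES):
        q = action_scores(V, s)
        z = math.exp(q[0]) + math.exp(q[1])
        policy[s] = [math.exp(q[0]) / z, math.exp(q[1]) / z]
    return V, policy


def action_entropy(probs):
    """Shannon entropy (nats) of an action distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)


V, policy = solve()
for s in range(1, N_STATES):
    print(f"state {s:2d}  P(left)={policy[s][LEFT]:.3f}  "
          f"entropy={action_entropy(policy[s]):.3f}")
```

In this toy setup the printed policy should be close to a coin flip far from state 0 and lean heavily to the right in the state next to it, with nothing but the entropy objective driving the change.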

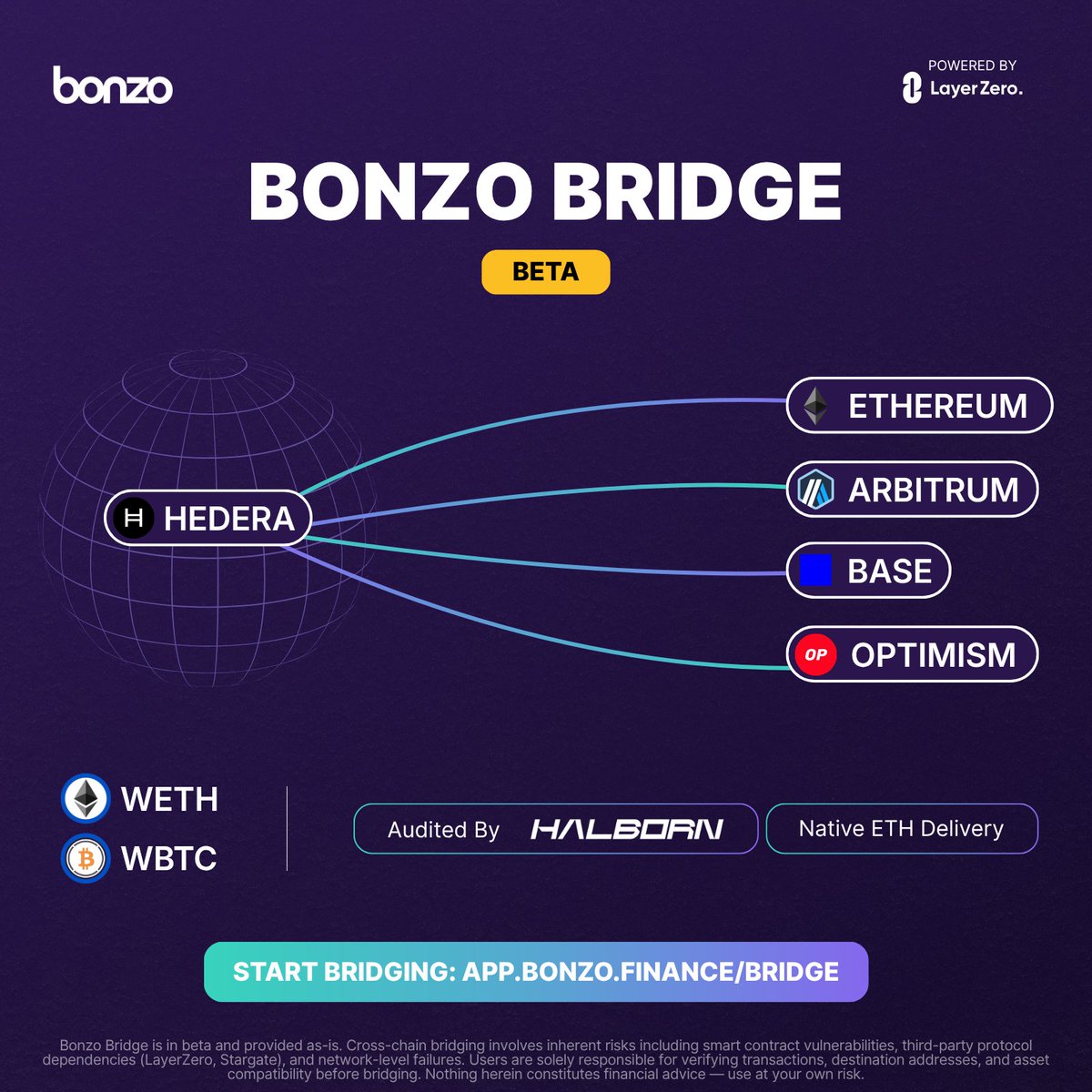

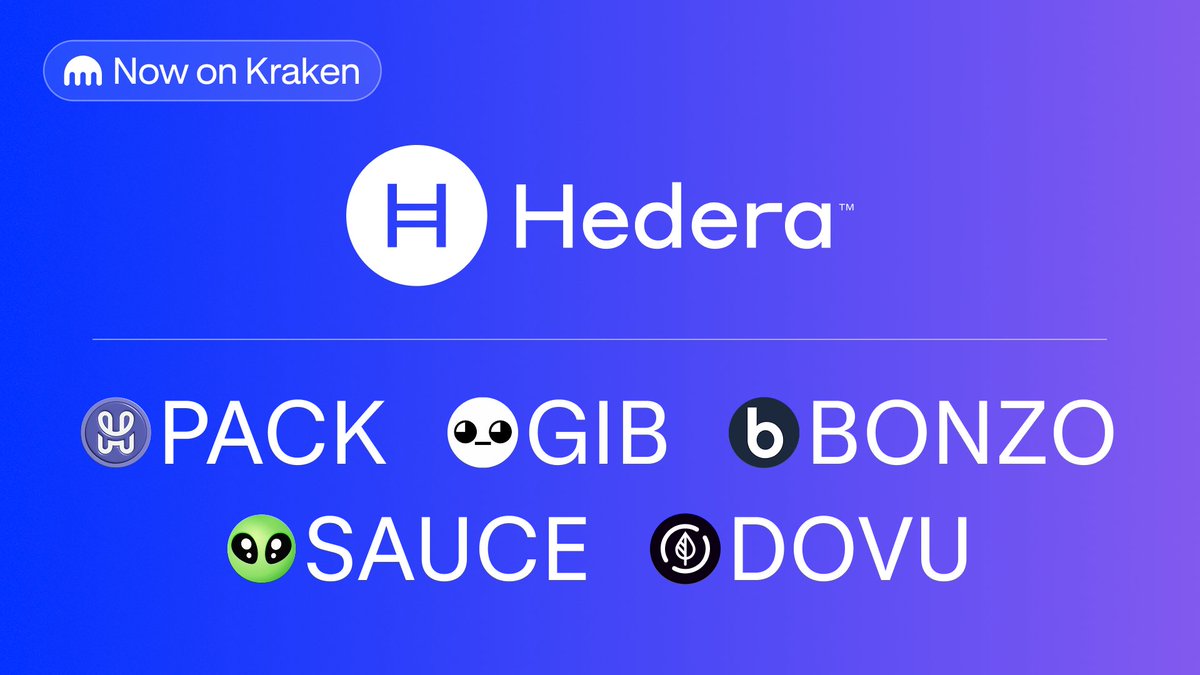
