Javi Cabrera, MD/PhD

571 posts

@CD4cell

Primed by @columbiaBME, @UMNmedschool, @BCM_Pediatrics, @BWHAllergy; boosted at @sloan_kettering, @NIHClinicalCtr, @CFI_UMN, @RagonInstitute. Tweets=mine.

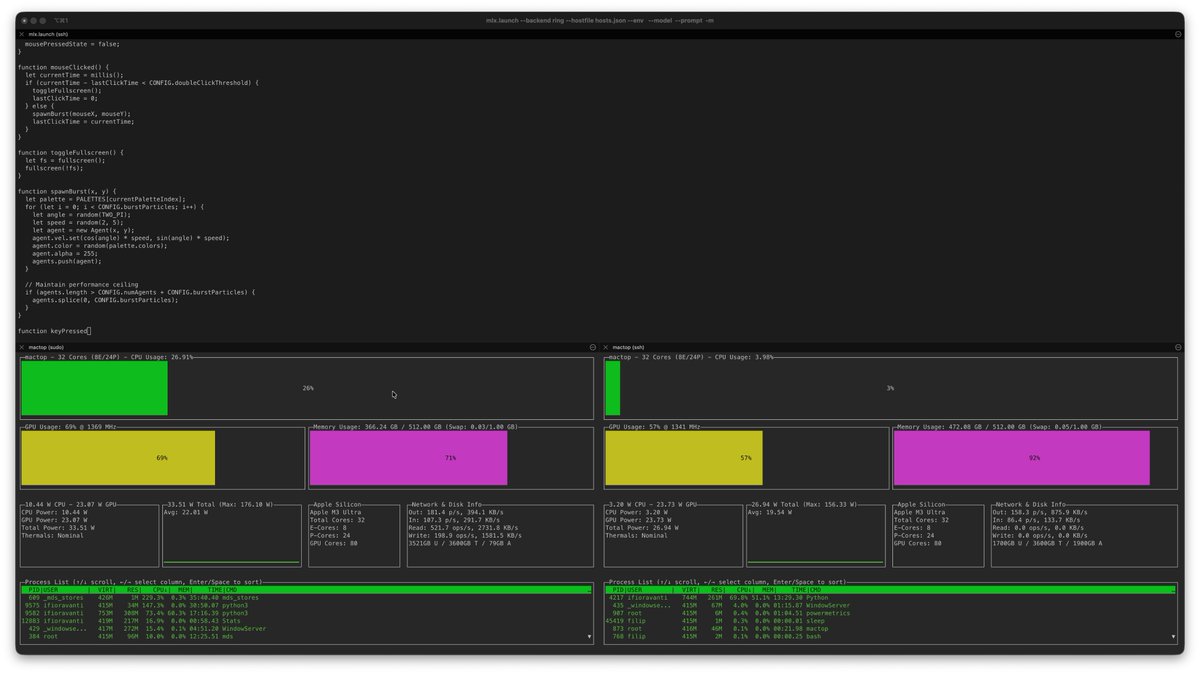

Clustering NVIDIA DGX Spark + M3 Ultra Mac Studio for 4x faster LLM inference.

DGX Spark: 128GB @ 273GB/s, 100 TFLOPS (fp16), $3,999
M3 Ultra: 256GB @ 819GB/s, 26 TFLOPS (fp16), $5,599

The DGX Spark has 3x less memory bandwidth than the M3 Ultra but 4x more FLOPS. By running compute-bound prefill on the DGX Spark, memory-bound decode on the M3 Ultra, and streaming the KV cache over 10GbE, we are able to get the best of both hardware with massive speedups.

Short explanation in this thread & link to full blog post below.
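To make the prefill/decode split in the post above concrete, here is a rough back-of-envelope sketch in Python. It is not EXO's implementation. The bandwidth and fp16 TFLOPS figures come from the post; everything else is an assumption for illustration: the hypothetical 8B-parameter fp16 model (16 GB of weights), the 8192-token prompt, the 32 generated tokens, the "2 FLOPs per parameter per token" prefill estimate, and the "read all weights once per generated token" decode estimate. It ignores KV cache transfer time over 10GbE and any overlap between stages, so it should not be expected to reproduce the 4x figure.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Device:
    name: str
    mem_bw_gbs: float    # memory bandwidth in GB/s (from the post)
    fp16_tflops: float   # fp16 throughput in TFLOPS (from the post)


DGX_SPARK = Device("DGX Spark", mem_bw_gbs=273.0, fp16_tflops=100.0)
M3_ULTRA = Device("M3 Ultra", mem_bw_gbs=819.0, fp16_tflops=26.0)


def prefill_seconds(dev: Device, params_billion: float, prompt_tokens: int) -> float:
    """Compute-bound estimate: assume ~2 FLOPs per parameter per prompt token."""
    flops = 2.0 * params_billion * 1e9 * prompt_tokens
    return flops / (dev.fp16_tflops * 1e12)


def decode_seconds(dev: Device, weights_gb: float, new_tokens: int) -> float:
    """Memory-bound estimate: assume all weights are read once per generated token."""
    return new_tokens * weights_gb / dev.mem_bw_gbs


def end_to_end(prefill_dev: Device, decode_dev: Device,
               params_billion: float, weights_gb: float,
               prompt_tokens: int, new_tokens: int) -> float:
    """Total time when prefill and decode run on the given devices, back to back."""
    return (prefill_seconds(prefill_dev, params_billion, prompt_tokens)
            + decode_seconds(decode_dev, weights_gb, new_tokens))


# Hypothetical workload (assumption, not from the post).
workload = dict(params_billion=8.0, weights_gb=16.0, prompt_tokens=8192, new_tokens=32)

spark_only = end_to_end(DGX_SPARK, DGX_SPARK, **workload)
m3_only = end_to_end(M3_ULTRA, M3_ULTRA, **workload)
split = end_to_end(DGX_SPARK, M3_ULTRA, **workload)  # prefill on Spark, decode on M3

print(f"DGX Spark only: {spark_only:.2f} s")
print(f"M3 Ultra only:  {m3_only:.2f} s")
print(f"Split:          {split:.2f} s")
```

Under these assumptions the split configuration is faster than either machine alone, because each phase runs on the device whose bottleneck resource it stresses. How large the gain is depends heavily on the prompt-to-output ratio and on how well the KV cache transfer is hidden.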

GLM-4.7-8bit (350GB) running at 19 toks/s on two M3 Ultra 512GB using Tensor Parallelism with EXO - MLX, versus 14 toks/s on a single node. 🚀 Now context benchmarking & then OpenCode tests 🔥 Note: this is built from source, and I had to change things to run it.

I won't be at NeurIPS, but there will be some fun MLX demos at the Apple booth:
- Image generation on M5 iPad
- Fast, distributed text generation on multiple M3 Ultras
- FastVLM real-time on an iPhone

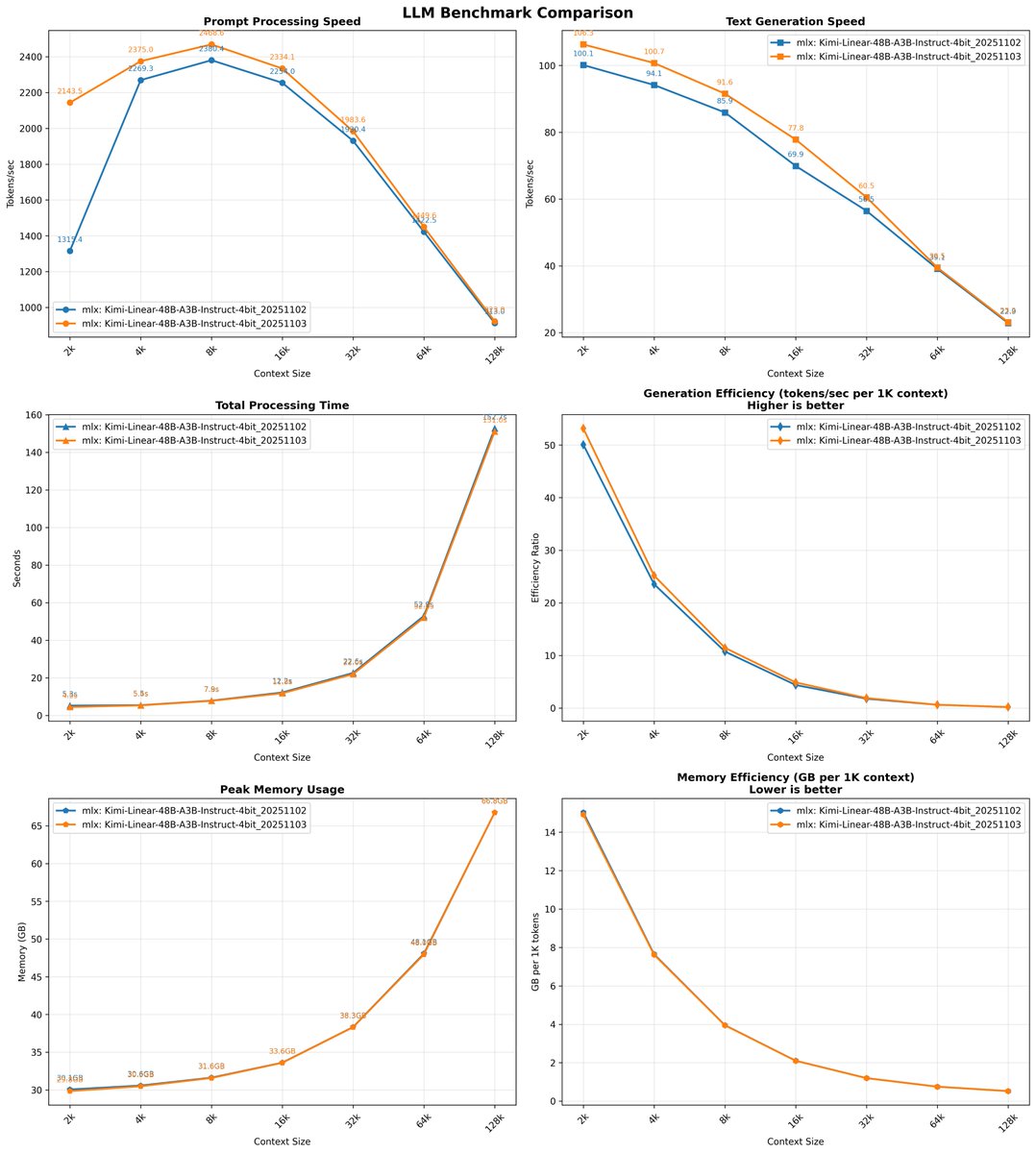

MLX: preview of Kimi-Linear-48B-A3B-Instruct-4bit context benchmark on M3 Ultra 512. Thanks to @Prince_Canuma and @kernelpool for the PR (WIP)

In Mukachevo, the Russians practically burned down an American company producing electronics—home appliances, nothing military. The Russians knew exactly where they lobbed the missiles. We believe this was a deliberate attack against American property and investments in Ukraine.