Joel Miller

255 posts

@CS_Synthesist

PhD student asking "what would economics look like if people had friends?" at UIC, Microsoft Research, and Gitcoin. 0.1X engineer

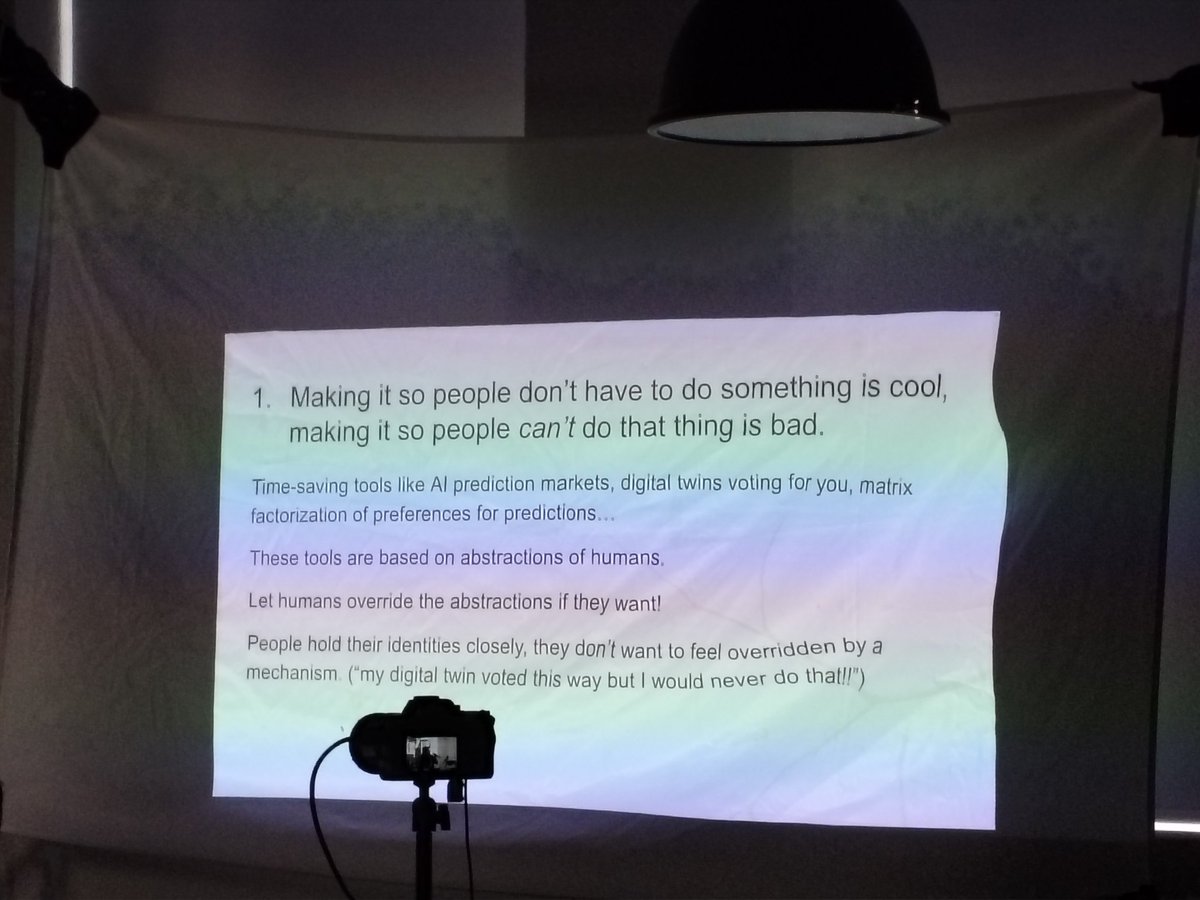

The evening session today dug into retroactive public goods funding systems with @cryptoeconlab.

At a larger level, the problem to solve is that certain projects like dashboards, OSS, or network R&D are good for an ecosystem but hard to build an ecosystem around. Give too much and we have Laffer curve dynamics where people flee the ecosystem (like the UK currently), & give too little and we leave Pareto efficiencies on the table.

Filecoin addresses these challenges with 2 approaches: proactive funding programs & retroactive ones. As much as distributing capital, it is also about surfacing signal across the ecosystem & setting a course for the network.

Filecoin has distributed around 500k+ FIL ($1-10 million depending on price over time) via 2 rounds of retro funding. They break up these programs into 3 parts:

1. Measure stage, where you map the ecosystem & take in applications
2. Evaluate projects through high-context badgeholders
3. Channel rewards, which they now do via @dripsnetwork so they can map out dependency graphs in their ecosystem

Their initial learnings from round 1 were:

- information too vague for judges to properly assess projects
- confusion over whether to support mixed goods or only public-spirited ones
- distribution being too flat, whereas it should be more power-law-like
- dislike of zero voting, where a judge could single-handedly bring down a project's score by giving it a zero
- confusion over impact gap vs direct impact

The last point reminds me of what @dwddao brought up: retro funding is actually harder than proactive, since it needs to compare aggregate impact against a counterfactual where the project didn't exist, which is hard to do. Proactive, by contrast, can simply identify gaps & have a theory of change to execute on.

These learnings led to RPGF 2, where 33 badgeholders voted in a way that a few projects got a lot, but there was also a long tail giving money to smaller projects.

Post discussion we realized that for retro funding to be truly meaningful, we either:

1. Keep a long window between the announcement of the round and its eventual reward, so teams have enough time to deliver. Similar to conferences in many ways, which have an annual deadline teams work towards
2. Have really short monthly cycles that create regularity in funding cadence, in a way that doesn't balloon overhead for the judges

We were particularly excited by digital AI twins of the badgeholders that allocate 10% of the funds monthly, and every 3-6 months the human version reviews projects and releases the remaining 90%.

Overall, while round 3 is going to continue being predictable, I expect major changes incoming for Filecoin RPGF from round 4 onwards.
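Two of the round 1 learnings (zero voting, and flat vs power-law distribution) are easier to see with numbers. This is a rough sketch only: the scores, the project names, the mean/median aggregation, and the `1 / rank**alpha` weighting are all my own assumptions for illustration, not how Filecoin RPGF actually computes anything.

```python
from statistics import mean, median


def aggregate(scores, how="mean"):
    """Combine badgeholder scores for one project (hypothetical aggregation rules)."""
    return mean(scores) if how == "mean" else median(scores)


def allocate(pool, ranked_projects, alpha):
    """Split `pool` across projects by rank, using weight 1 / rank**alpha.

    alpha = 0 gives a flat split; a larger alpha concentrates funds at the top
    (more power-law-like) while still leaving a long tail for lower ranks.
    """
    weights = [1 / (rank ** alpha) for rank in range(1, len(ranked_projects) + 1)]
    total = sum(weights)
    return {p: pool * w / total for p, w in zip(ranked_projects, weights)}


# Hypothetical scores: four judges rate a project highly, one gives it a zero.
scores = [8, 9, 8, 9, 0]
print(aggregate(scores, "mean"))    # the single zero drags the mean down
print(aggregate(scores, "median"))  # the median ignores the outlier

# Hypothetical ranked projects sharing a 100k pool.
projects = ["A", "B", "C", "D"]
flat = allocate(100_000, projects, alpha=0)
steep = allocate(100_000, projects, alpha=2)
print(flat)
print(steep)
```

With a mean, one judge's zero moves the score a lot; a median (or any trimmed rule) blunts that. The `alpha` knob is one simple way to slide between a flat split and a top-heavy one.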

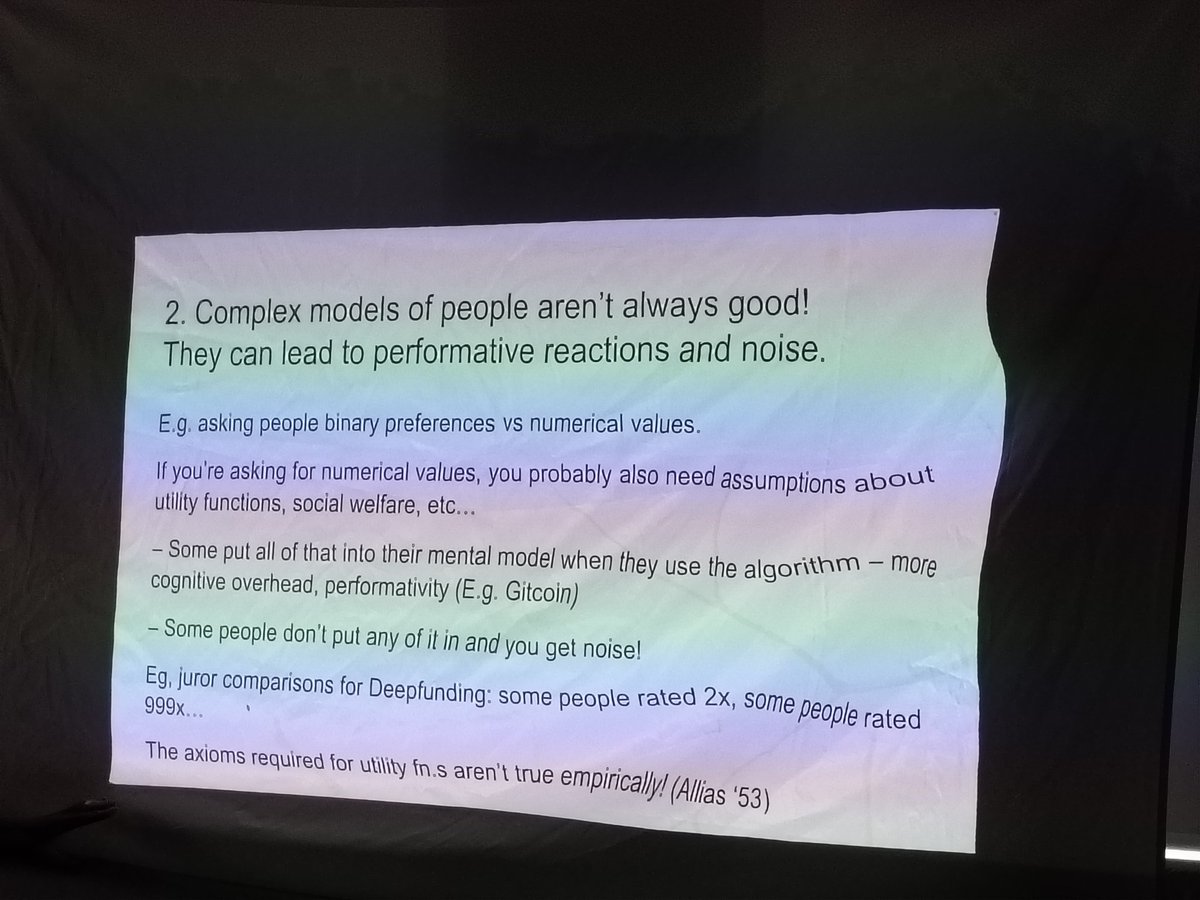

One effect of the impact evaluator residency in Iceland has been getting me to look much deeper into Condorcet voting. It fits with insights @JonasSFT shared on not having humans do quantification, only rankings.

@CS_Synthesist gave a talk today on 4 ways of implementing it. It's one of those rare ideas that academics all agree is good in theory, but there are few empirical case studies of how it works in practice.

At a broad level, Condorcet aims to do away with strategic voting by only having voters rank different options. It then uses computation to arrive at a winner through pairwise matchups of each candidate based on the rankings provided (there is an edge case, the Condorcet paradox, where no winner is declared).

Classic Condorcet works well when there are few options, so voters can actually rank all of them. However, it only functions with a static list of items, as new entrants affect the ranking order.

In 2024 Brandl proposed a new version of it called the urn mechanism that overcame both issues by letting people propose new options at any time & reducing cognitive load by not asking for exhaustive rankings, only answers to a few binary questions. Its main drawback is the heavy computation required: for 20 people with 5 options it took 50k steps to finally have results computed 😨

So then we come to more experimental setups like simulated urn Condorcet, where people can state that "any distribution is fine so long as A = 2x B, C > A, etc." or even give their preferred split & vote based on how close a proposal is to that split. While faster to converge, the main drawback is that, like classic Condorcet, it works only with a static list of projects to rank, as the introduction of new entrants screws up the rules you put in place.

So then we come to the final version of it that @williamhwgeorge helped brainstorm: have a reigning option that can be displaced by one other competitor. We need some quality control on who gets to propose a candidate against the current split, but it allows for input & creativity along with dynamism in letting new objects enter without messing up earlier calculations.

So what are some use cases we are targeting here? For one, to choose between different domains or mechanisms. We hope to propose this on @gitcoin as a way for the network & stewards to come to consensus on which domains deserve the most support in GG24. If it works well here, we can see more ambitious uses like letting a larger network come to consensus on resource distribution between different mechanisms like QF, time weighting, deep funding, etc., or even between direct projects themselves.

One unaddressed question here: although Condorcet can give us a ranked ordering, it isn't clear how we distribute funds based on the list. Is it a power law where the top winner gets orders of magnitude more, or a peanut butter spread that's more even?

Overall I like the ambitiousness of empirically testing out Condorcet in some way & think the residency would pay off its RoI on simply this pilot taking place!
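For anyone who hasn't seen the pairwise matchups written out, here is a minimal sketch of classic Condorcet, including the paradox case where no winner exists. It assumes every ballot ranks every option; the function name, the option labels, and the ballots are made up for illustration, and this is not the urn mechanism or any of the other variants from the talk.

```python
from itertools import combinations


def condorcet_winner(ballots):
    """Return the option that beats every other option head-to-head, or None.

    Each ballot is a list of options, most preferred first. Every ballot is
    assumed to rank every option (the classic, static-list setup).
    """
    options = sorted(ballots[0])
    wins = {o: 0 for o in options}
    for a, b in combinations(options, 2):
        # Count ballots that place a above b; the rest place b above a.
        a_over_b = sum(1 for ballot in ballots if ballot.index(a) < ballot.index(b))
        b_over_a = len(ballots) - a_over_b
        if a_over_b > b_over_a:
            wins[a] += 1
        elif b_over_a > a_over_b:
            wins[b] += 1
    for o in options:
        # A Condorcet winner must win all of its pairwise matchups.
        if wins[o] == len(options) - 1:
            return o
    return None


# Hypothetical ballots: A beats both B and C head-to-head.
clear = [["A", "B", "C"], ["A", "C", "B"], ["B", "A", "C"]]
# Hypothetical ballots forming a cycle (A > B, B > C, C > A): the paradox.
cycle = [["A", "B", "C"], ["B", "C", "A"], ["C", "A", "B"]]

print(condorcet_winner(clear))  # A
print(condorcet_winner(cycle))  # None
```

The work grows with the number of pairs, and every voter has to rank everything, which is the practical limit on classic Condorcet mentioned above.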