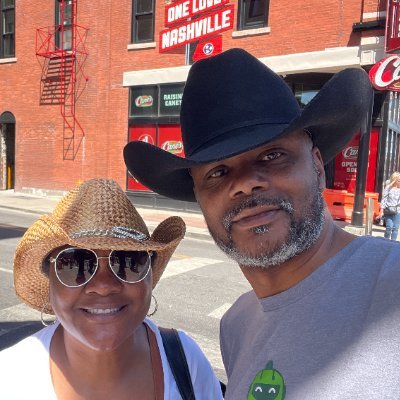

Keith Townsend



@CTOAdvisor

CTO Turned Advisor | Helping Vendors Resonate and IT Leaders Execute. Engage with my virtual twin https://t.co/7fh1X8hbEJ. Independent Advisor.

Announcing general availability of Amazon EC2 M3 Ultra Mac instances dlvr.it/TSXx2s

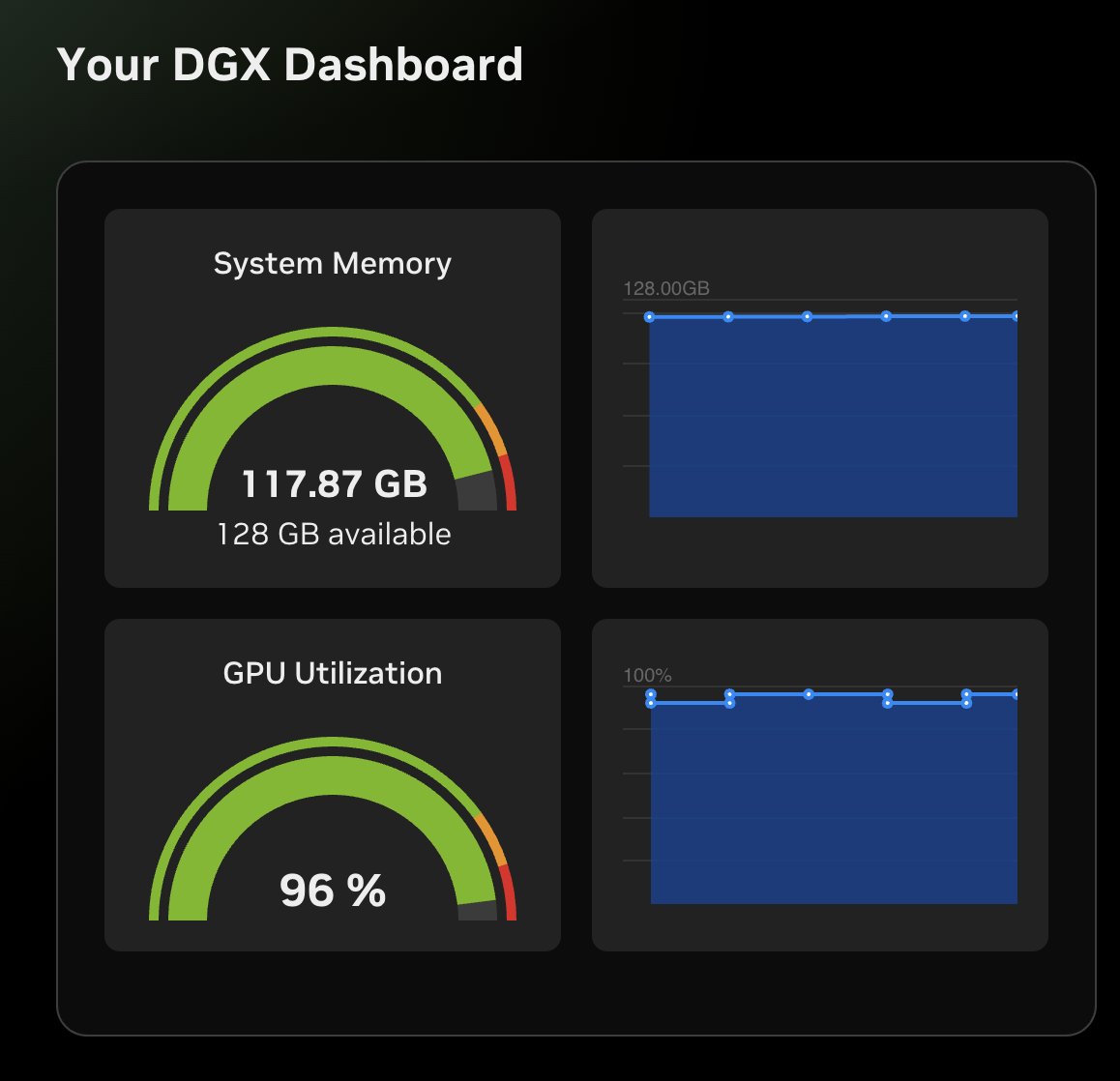

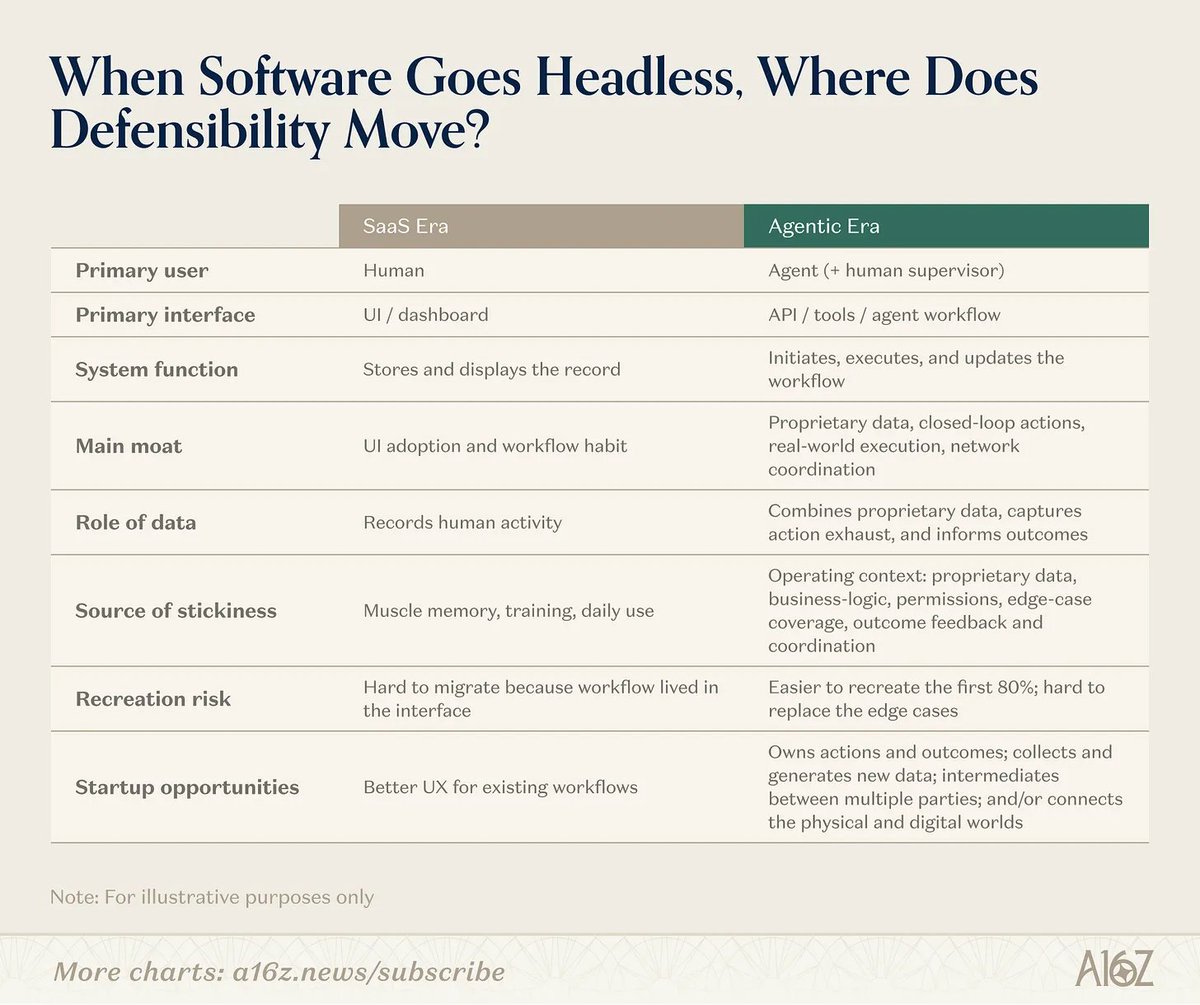

Everyone demos agents. The more useful question is who controls the loop.

I wrote a requirements document for an experiment I have not run yet. The unusual part is not writing the requirements document first. The unusual part is publishing it before running the experiment.

Why publish it now? Because the design decisions are the content.

I have a DGX Spark in the lab. The interesting question is not whether it can run a model. Local inference is becoming practical enough that “can it run?” is the less interesting question.

The harder question is whether a local model can participate in a real agentic system when execution, validation, and judgment are separated deliberately.

My working hypothesis: local models may be excellent workers but unreliable governors.

A local model can summarize, extract, classify, and propose a decision. But should it own the loop?

Can it tell when evidence is weak? Can it detect when it is summarizing instead of deciding? Can it know when to stop? Can it know when local judgment is not enough?

The architecture under test is not all-local or all-cloud. It is local execution, deterministic validation, and selective escalation to a stronger reasoning model when the workflow requires judgment.

In plain English: local workers, deterministic control, and outsourced judgment only when needed.

That is the Layer 2C question in my 4+1 AI Infrastructure Model. Where does judgment live?

Full post: “Who Controls the Loop? A Requirements Document for Local-First Agentic AI” thectoadvisor.com/blog/2026/05/1…
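To make the split between execution, validation, and judgment concrete, here is a minimal Python sketch of one step of such a loop. It is an illustration under stated assumptions, not the design from the requirements document: `Proposal`, `validate`, `run_step`, the two stub functions, and the specific rules (an allowed-decision set and a minimum evidence count) are all hypothetical names and choices made up for this example. The stubs stand in for a local model and a stronger remote model; no real inference API is called.

```python
from dataclasses import dataclass
from typing import Callable, List, Set, Tuple


@dataclass
class Proposal:
    """What a (hypothetical) local worker hands back: a decision plus its evidence."""
    decision: str
    evidence: List[str]


def validate(p: Proposal, allowed: Set[str], min_evidence: int = 2) -> List[str]:
    """Deterministic checks only; no model is involved in this function."""
    problems = []
    if p.decision not in allowed:
        problems.append("decision not in allowed set")
    if len(p.evidence) < min_evidence:
        problems.append("too little evidence")
    return problems


def run_step(
    task: str,
    local_worker: Callable[[str], Proposal],
    escalate: Callable[[str, Proposal, List[str]], str],
    allowed: Set[str],
    needs_judgment: bool = False,
) -> Tuple[str, str]:
    """The loop is owned by plain code: the worker proposes, the rules decide."""
    proposal = local_worker(task)
    problems = validate(proposal, allowed)
    if not problems and not needs_judgment:
        return ("local", proposal.decision)
    # Validation failed or the workflow is flagged as needing judgment:
    # hand the task, the proposal, and the problems to the stronger model.
    return ("escalated", escalate(task, proposal, problems))


def stub_local_worker(task: str) -> Proposal:
    """Stand-in for a local model: treats each non-empty line as one piece of evidence."""
    evidence = [line for line in task.splitlines() if line.strip()]
    return Proposal(decision="approve", evidence=evidence)


def stub_escalate(task: str, proposal: Proposal, problems: List[str]) -> str:
    """Stand-in for a stronger reasoning model; always defers in this sketch."""
    return "needs_review"


if __name__ == "__main__":
    allowed = {"approve", "reject"}
    print(run_step("log line A\nlog line B", stub_local_worker, stub_escalate, allowed))
    print(run_step("log line A", stub_local_worker, stub_escalate, allowed))
```

In this sketch the local model never decides whether its own output is good enough. That call is made by `validate` and the `needs_judgment` flag, which is one way to read "local workers, deterministic control, and outsourced judgment only when needed."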

"Why can't you just go back to the old model of charging based on messages?"
