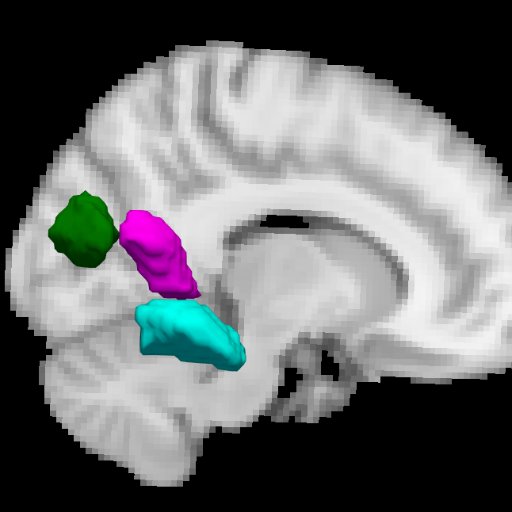

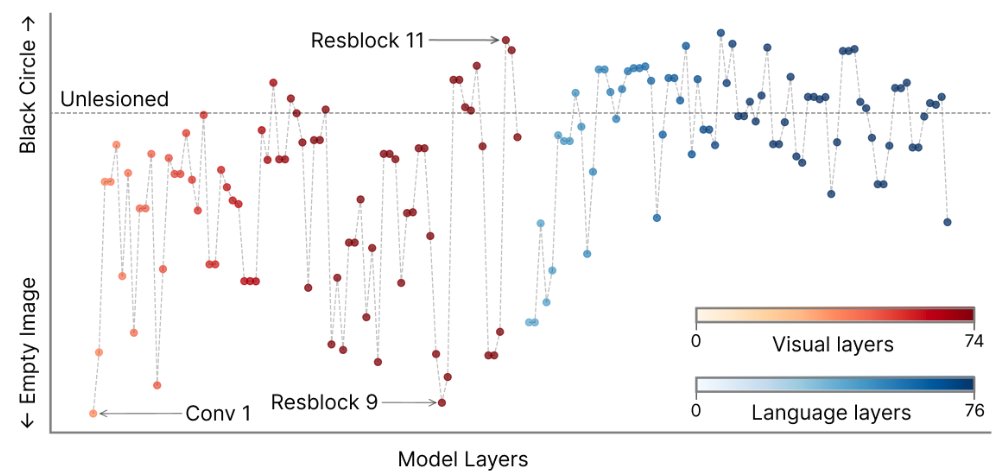

#DeepMReye is out! Use #deeplearning to perform #eyetracking in #fMRI without a camera! nature.com/articles/s4159… @CYHSM & I are thrilled to finally share our Code + Data + Notebooks + User Documentation + Paper @NatureNeuro! With @doellerlab @KISNeuro @MPI_CBS. Thread below!👇 1/7