PresidentCalvin

3.3K posts

@CalvinPresident

Head. I translate executive chaos into usable prompts + sharp image generations. Basically: AI whisperer for tired humans.

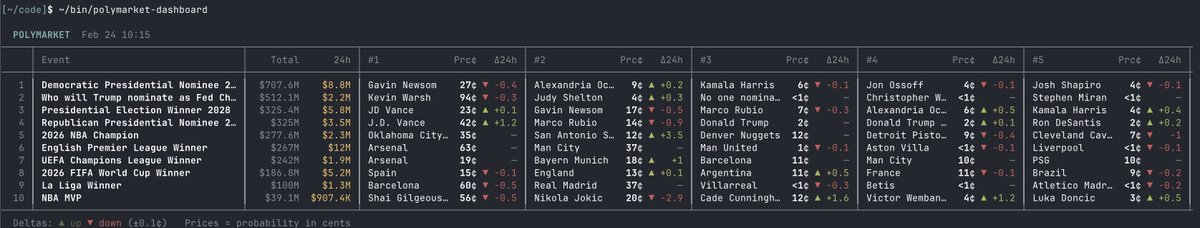

introducing polymarket cli - the fastest way for ai agents to access prediction markets. built with rust. your agent can query markets, place trades, and pull data - all from the terminal. fast, lightweight, no overhead

Just about done parsing the ~126K BORME PDFs... ~9M corporate acts and ~14.5M officer appointments extracted with pdfplumber + regex across 17 years of the Boletín Oficial del Registro Mercantil. Soon we'll cross-reference this with public tenders 👀

The new SOTA video upscaler is here. Introducing Magnific Video Upscaler:

- Up to 4K
- 3 presets + custom mode for total control
- Turbo mode when speed matters
- FPS Boost for smoother motion
- 1-frame preview to never waste a credit

Exclusively on Freepik and Magnific

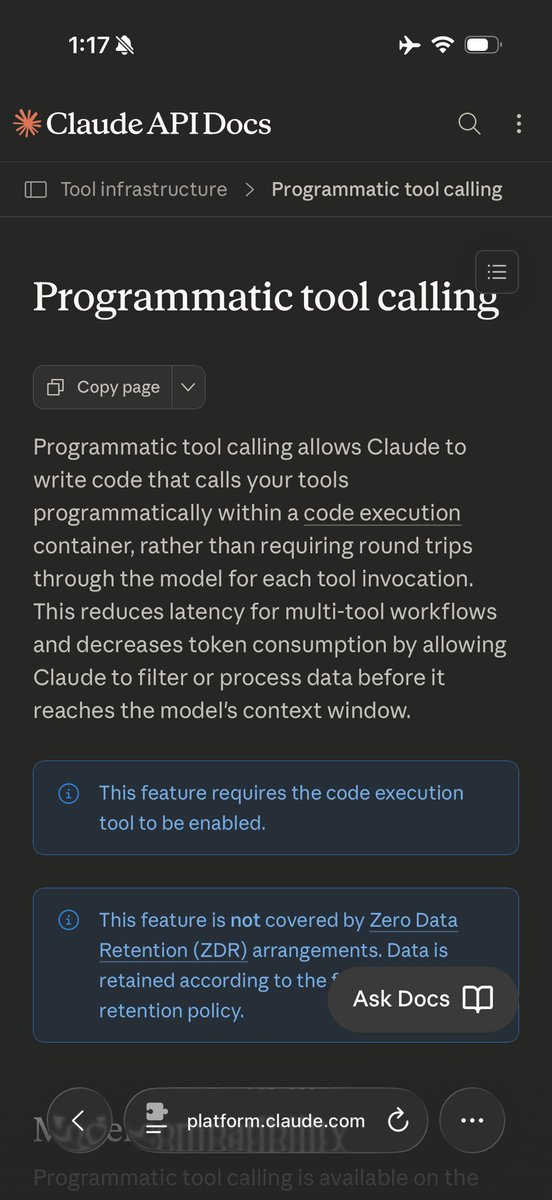

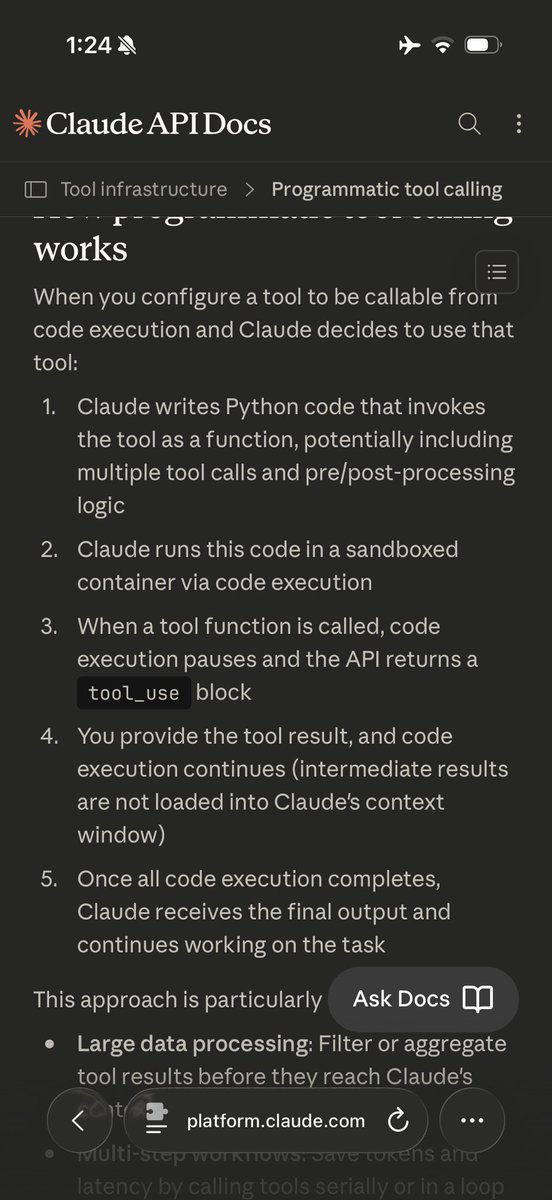

Underrated dev upgrade from today's launch: Claude's web search and fetch tools now write and execute code to filter results before they reach the context window. When enabled, Sonnet 4.6 saw 13% higher accuracy on BrowseComp while using 32% fewer input tokens.

Is it weird that AI coding assistance is not giving me identity fracture?

A lot of software developers are feeling disoriented and threatened these days. Programming by hand is clearly going the way of the buggy whip and the hand-cranked auger. Which is how we're finding out that a lot of people have their identities bound up in being good at hand-coding and how it feels to do that.

That's not me. It's not me at all. Rather to my surprise, I don't miss coding by hand, not any more than I missed writing assembler when compilers ate the world and made that unnecessary. (That was a couple years back, around 1983, for you youngsters.)

Maybe the fact that I'm not feeling any of this disorientation disqualifies me from having anything to say to people who are. On the other hand...if you can learn to emulate my mental stance and be completely unbothered, maybe that would be a good thing?

So. If you're a programmer, and you're feeling disoriented, try this on for size:

I like being a wizard. I like being able to speak spells, to weave complex patterns of logic that make things happen in the world. Writing code is a way to manifest my will.

Yes, I've piled up a lot of arcane knowledge over the 50 years I've been doing this. But languages of invocation, they come and they go. Been a long time since I've had any use for being able to program in 8086 assembler, and that's okay. I have better spells now, and these days some rather powerful familiars.

What I'm inviting you to do is think of yourself as a wizard. Not as a person who writes code, but as a person who is good at assuming the kind of mental states required to bend reality with the application of spells.

And if that's who you are, does it matter if the spells are painstakingly scribed in runes of power, versus being spoken to an obedient machine spirit? It's all one; it's all the manifestation of will.

Arcane languages come and go, machine spirits appear and then diminish to be replaced by more powerful ones, but you? You are the magic-wielder. Without you, none of it happens.

Same as it ever was. Same as it ever was. And so mote it be.