Changdae Oh @ ICLR'26 retweeted

Your LLM agent just mass-deleted a production database because it was confident it understood the task. It didn't. Avoiding these irreversible mistakes requires uncertainty quantification (UQ), a pressing open problem in the era of LLM agents.

Check out our #ACL2026 paper:

"Uncertainty Quantification in LLM Agents: Foundations, Emerging Challenges, and Opportunities"

🔍 Why this matters:

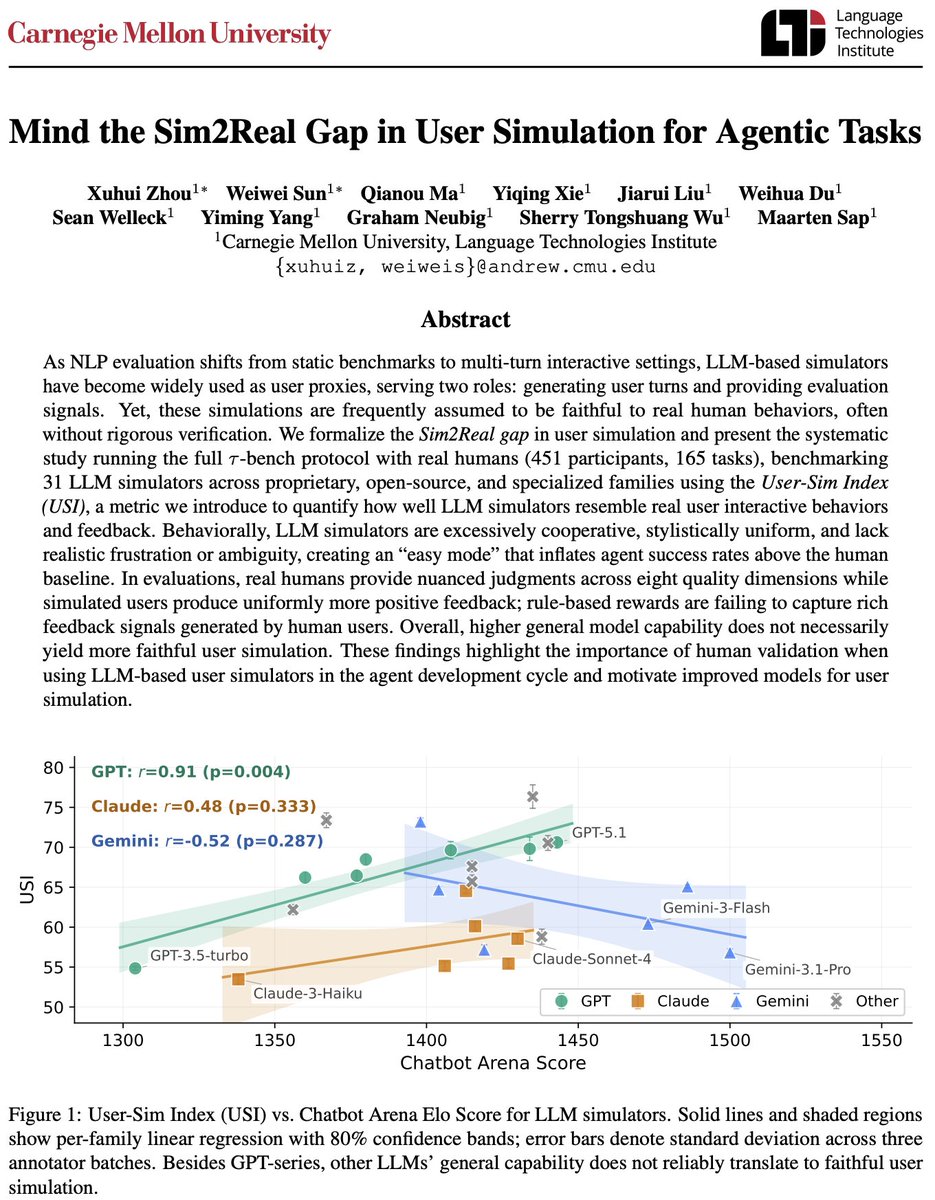

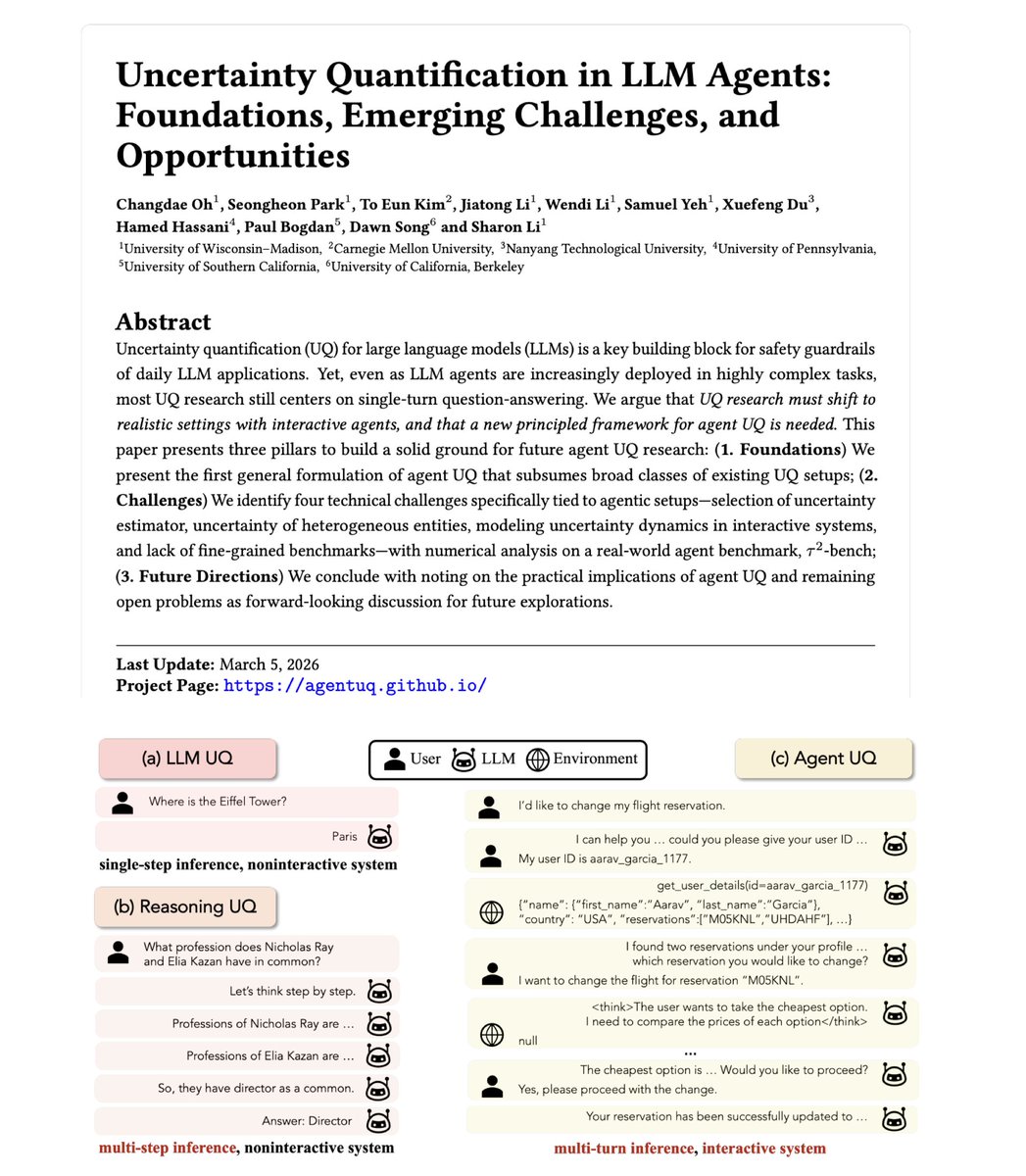

LLM agents now book flights, modify databases, and execute code autonomously. Yet most UQ research still targets a single-turn QA setup. In contrast, agents follow multi-turn trajectories in which they interact with users, call tools, and receive environmental feedback. The gap between how we study UQ and how agents actually operate is enormous.

⚙️ A unified formulation:

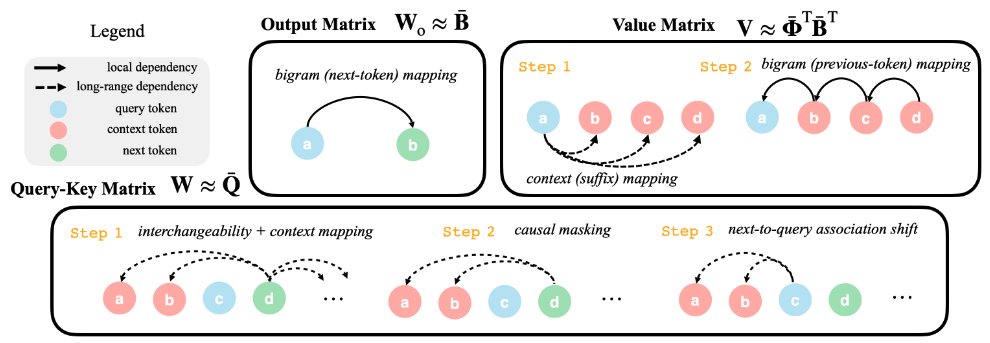

We present the first unified formulation of Agent UQ. It models the full trajectory (actions, observations, states) and decomposes uncertainty per turn via the chain rule. Under this formulation, single-step LLM UQ and multi-step reasoning UQ fall out as special cases.
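As a rough illustration of the chain-rule idea (a sketch under our own simplifying assumptions, not the paper's exact formulation): if a trajectory's probability factorizes as a product of per-turn action probabilities, each conditioned on the history so far, then its negative log-likelihood is a sum of per-turn terms. The function names and the log-prob values below are hypothetical.

```python
import math
from typing import List, Tuple


def turn_nll(token_logprobs: List[float]) -> float:
    """Negative log-likelihood of one action: minus the sum of its token log-probs.

    Each log-prob is assumed to be conditioned on the full history so far
    (earlier actions and observations), as the chain rule requires.
    """
    return -sum(token_logprobs)


def trajectory_uncertainty(turns: List[List[float]]) -> Tuple[float, List[float]]:
    """Return (trajectory-level NLL, per-turn NLLs).

    By the chain rule, -log p(trajectory) = sum over turns of -log p(action_t | history_t),
    so the total is simply the sum of the per-turn terms.
    """
    per_turn = [turn_nll(t) for t in turns]
    return sum(per_turn), per_turn


# Hypothetical token log-probs for a three-turn trajectory (illustrative values only).
turns = [
    [math.log(0.9), math.log(0.8)],  # turn 1: a two-token action
    [math.log(0.5)],                 # turn 2: a low-confidence action
    [math.log(0.95)],                # turn 3
]

total, per_turn = trajectory_uncertainty(turns)
riskiest_turn = per_turn.index(max(per_turn))  # 0-based index of the least confident turn

# With a single turn, the same function reduces to ordinary single-step UQ.
single_total, _ = trajectory_uncertainty([turns[0]])
```

The per-turn view is what makes the decomposition useful: an agent could, for example, pause for confirmation at the turn with the highest term before taking an irreversible action.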

🚧 Challenges:

We identify four core challenges, including selecting the right UQ estimator when existing methods all break down in agentic settings, handling heterogeneous uncertainty sources (user, tools, environment), and the near-total lack of fine-grained agent benchmarks (we survey 44 and find that turn-level evaluation is extremely rare).

🌍 Implications and open problems:

Agent UQ is the missing safety layer for healthcare agents triaging patients, SWE agents pushing code to prod, and agents controlling cyber-physical systems. We also surface open problems around solution multiplicity, multi-agent UQ, and self-evolving systems.

We release code and data to help the community build on this.

📄 Paper: arxiv.org/abs/2602.05073

🌐 Project: agentuq.github.io

💻 Code: github.com/deeplearning-w…

Huge shoutout to @changdaeoh, who spearheaded this effort. When we started the work, agent UQ was a loosely defined space with scattered ideas; Changdae brought the clarity, structure, and rigor that the field needed to move forward.

Also thanks to all the collaborators: @seongheon_96, To Eun Kim, @JiatongLi0418, @Wendi_Li_, @Samuel861025, @xuefeng_du, Hamed Hassani, Paul Bogdan, Dawn Song
