chason retweeted

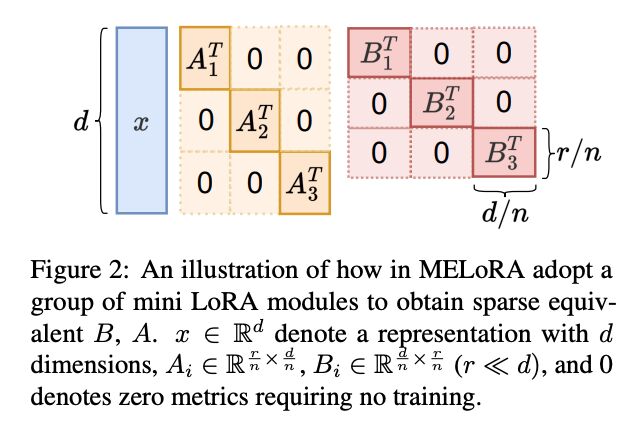

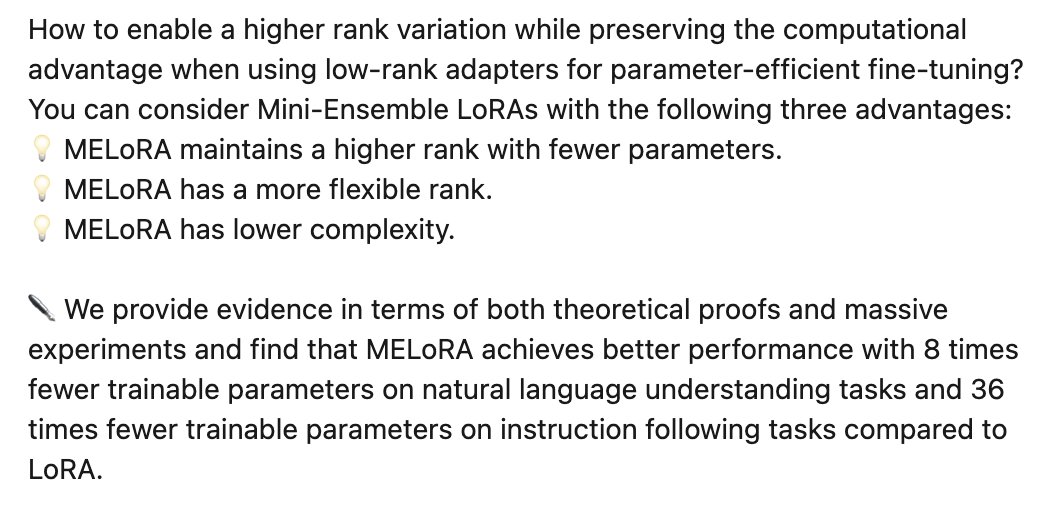

How can you get higher-rank updates while keeping the computational advantage of low-rank adapters (LoRA) for parameter-efficient fine-tuning? Consider Mini-Ensemble LoRAs at #ACL2024.

Congrats, @jayren3 @ChengshunS @zhaochun_ren @mdr

arxiv.org/pdf/2402.17263…
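A toy sketch of the trade-off the question describes. It assumes, as an illustration rather than a summary of the authors' code, that a mini-ensemble trains several small LoRAs, each on its own slice of the hidden dimension, so the combined update is block-diagonal. The sizes `D`, `R`, `N`, the fixed matrix values (stand-ins for trained weights), and the helpers `matmul`, `rank`, `block_diag`, `count_params` are hypothetical. With the same 32 trainable parameters, one rank-2 LoRA yields a rank-2 update, while four rank-2 mini adapters yield a rank-8 update:

```python
from fractions import Fraction


def matmul(X, Y):
    """Plain list-of-lists matrix product X @ Y."""
    return [[sum(X[i][t] * Y[t][j] for t in range(len(Y))) for j in range(len(Y[0]))]
            for i in range(len(X))]


def rank(M):
    """Exact matrix rank via Gaussian elimination over the rationals."""
    rows = [[Fraction(x) for x in row] for row in M]
    r = 0
    for c in range(len(rows[0])):
        pivot = next((i for i in range(r, len(rows)) if rows[i][c] != 0), None)
        if pivot is None:
            continue  # no nonzero entry left in this column
        rows[r], rows[pivot] = rows[pivot], rows[r]
        for i in range(len(rows)):
            if i != r and rows[i][c] != 0:
                f = rows[i][c] / rows[r][c]
                rows[i] = [a - f * b for a, b in zip(rows[i], rows[r])]
        r += 1
        if r == len(rows):
            break
    return r


def block_diag(blocks):
    """Place blocks along the diagonal of a larger matrix, zeros elsewhere."""
    n_rows = sum(len(b) for b in blocks)
    n_cols = sum(len(b[0]) for b in blocks)
    out = [[0] * n_cols for _ in range(n_rows)]
    r0 = c0 = 0
    for b in blocks:
        for i, row in enumerate(b):
            for j, v in enumerate(row):
                out[r0 + i][c0 + j] = v
        r0 += len(b)
        c0 += len(b[0])
    return out


def count_params(pairs):
    """Trainable parameters in a list of (B, A) adapter factor pairs."""
    return sum(len(B) * len(B[0]) + len(A) * len(A[0]) for B, A in pairs)


# Hypothetical sizes: hidden size D, rank R per adapter, N mini adapters.
D, R, N = 8, 2, 4

# Standard LoRA: one pair, update = B @ A with B: D x R and A: R x D.
B = [[i + 1, 2 * i + 3] for i in range(D)]
A = [[1] * D, list(range(D))]
lora_pairs = [(B, A)]
lora_delta = matmul(B, A)

# Mini-ensemble (assumed form): N pairs, each (D/N) x R and R x (D/N),
# acting on disjoint slices, so the full update is block-diagonal.
# Fixed values replace trained weights; each 2 x 2 block is full rank.
melora_pairs = [([[1, k + 1], [0, 1]], [[2, 0], [1, 1]]) for k in range(N)]
melora_delta = block_diag([matmul(Bk, Ak) for Bk, Ak in melora_pairs])

print("LoRA:     params", count_params(lora_pairs), "rank", rank(lora_delta))
# prints "LoRA:     params 32 rank 2"
print("Ensemble: params", count_params(melora_pairs), "rank", rank(melora_delta))
# prints "Ensemble: params 32 rank 8"
```

The parameter count is the point: N mini pairs of size (D/N)×R and R×(D/N) cost 2·R·D in total, the same as one rank-R LoRA on a D×D weight, while the block-diagonal update can reach rank N·R (capped at D).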
