Christian Internò

@ChrisInterno

Researcher at @unibielefeld (@HammerLabML) and Honda Research Institute EU; Visiting Researcher in the Department of Computational Neuroscience at @CSHL.

Mechanistic interpretability aims to understand models, and the more superhuman or incoherent they become, the more we need that understanding to be reliable. We propose a framework for making it reliable, drawing on established tools from causal reasoning and statistical identifiability: 🧵

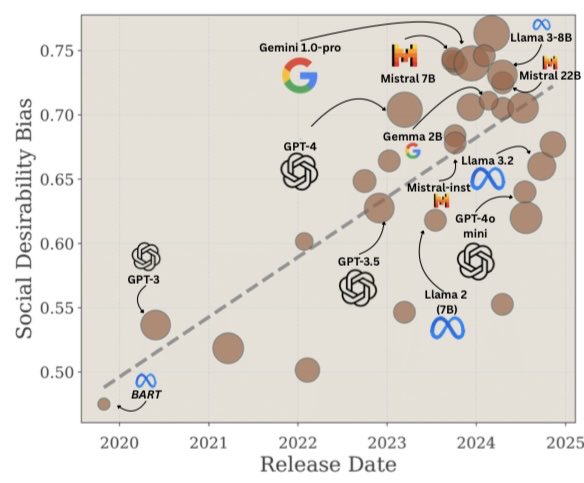

The Narcissus Hypothesis: recursive training on semi-synthetic corpora that enforce human alignment induces a Social Desirability Bias. World models (Narcissus) aim to please rather than to represent, polluting data lakes and charming us (Echo) into hanging on their every word.

What is a good latent space for world modeling and planning? 🤔 Inspired by the perceptual straightening hypothesis in human vision, we introduce temporal straightening to improve representation learning for latent planning. 📑: agenticlearning.ai/temporal-strai…
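For readers new to the straightening idea, here is a minimal sketch of how straightness is commonly quantified in the perceptual straightening literature. It assumes curvature is the angle between successive displacement vectors of a latent trajectory. The function name `mean_curvature_deg` is hypothetical, and this is only the measurement, not the temporal straightening objective from the linked work.

```python
import numpy as np


def mean_curvature_deg(z: np.ndarray) -> float:
    """Mean curvature, in degrees, of a latent trajectory z of shape (T, D), T >= 3.

    Curvature at step t is the angle between successive displacement vectors
    z[t+1] - z[t] and z[t+2] - z[t+1]; a perfectly straight trajectory scores 0.
    (Hypothetical helper for illustration, not the linked paper's code.)
    """
    v = np.diff(z, axis=0)                                   # (T-1, D) displacements
    norms = np.linalg.norm(v, axis=1, keepdims=True)
    u = v / np.clip(norms, 1e-12, None)                      # unit directions, avoiding division by zero
    cos = np.sum(u[:-1] * u[1:], axis=1)                     # cosine between successive steps
    angles = np.degrees(np.arccos(np.clip(cos, -1.0, 1.0)))  # clip guards against float round-off
    return float(angles.mean())


# Usage: a straight line versus a right-angle zigzag in a 2-D latent space.
straight = np.outer(np.arange(5), [1.0, 2.0])
zigzag = np.array([[0, 0], [1, 0], [1, 1], [2, 1]], dtype=float)
print(mean_curvature_deg(straight))  # close to 0
print(mean_curvature_deg(zigzag))    # about 90
```

A straightening objective could, in principle, penalize this quantity on encoder outputs over time. How the linked work actually formulates its loss is not stated in the post.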

If there were an image input, I would be curious to show it some dSprites examples and ask: what are the independent factors of variation in that data 🤓

This week feels like AGI meets sci-fi.

First: @CorticalLabs ships the CL1, real human neurons grown on silicon, running DOOM. A biological computer you can buy. (x.com/mastronomers/s…)

Second: Eon Systems uploads a fruit fly. They took FlyWire's complete connectome (~140,000 neurons, ~50M synapses), simulated it with a biologically realistic neuron model, and connected it to a MuJoCo body. The simulated fly avoids toxic compounds: sensory input to physical behavior, loop closed. (x.com/michaelandregg…)

Same week, two completely different paths to the same place.

Cortical Labs (bottom-up): plate real neurons on a multielectrode array, give them a closed-loop environment, and apply the free energy principle. No backprop. No gradient descent. They learn. The CL1 has ~800K neurons; the human brain has ~86 billion. But the same principles apply. (pubmed.ncbi.nlm.nih.gov/36228614/)

Eon Systems (top-down): take the connectome, the wiring diagram, and run it as a simulation. No real cells, just the map, instantiated in silicon and driven by spiking neuron dynamics drawn from real electrophysiology. Wire the motor outputs to a physics engine and watch it move. (FlyWire: doi.org/10.1038/s41586… | Shiu et al.: doi.org/10.1038/s41586…)

One starts with biology and asks what it can compute. The other starts with the map and asks how to run it. Both are making the same bet: that the structure of biological circuits, not their wetware substrate, is where intelligence lives.
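To make the top-down idea concrete, here is a toy sketch of "run the wiring diagram as a simulation": a weight matrix stands in for the connectome, and simple spiking dynamics are integrated over it. It assumes a generic leaky integrate-and-fire (LIF) model with instantaneous synapses, dimensionless units, and a hand-made 3-neuron matrix. The function `simulate_lif` and all parameter values are hypothetical. This is not Eon Systems' or Shiu et al.'s model, and it omits the body and physics-engine loop entirely.

```python
import numpy as np


def simulate_lif(W: np.ndarray, drive: np.ndarray, n_steps: int = 200,
                 dt: float = 1e-3, tau: float = 20e-3,
                 v_rest: float = 0.0, v_thresh: float = 1.0,
                 v_reset: float = 0.0) -> np.ndarray:
    """Toy leaky integrate-and-fire network whose recurrence is given by W.

    W[i, j] is the (hypothetical) synaptic weight from neuron j onto neuron i,
    i.e. the wiring diagram; drive is a constant external input per neuron.
    Units are dimensionless. Returns a boolean spike raster of shape (n_steps, N).
    """
    n = W.shape[0]
    v = np.full(n, v_rest, dtype=float)
    spikes = np.zeros(n, dtype=bool)
    raster = np.zeros((n_steps, n), dtype=bool)
    for t in range(n_steps):
        syn = W @ spikes.astype(float)                   # last step's spikes kick their targets
        v = v + (dt / tau) * (v_rest - v + drive) + syn  # Euler step of leaky integration
        spikes = v >= v_thresh
        v[spikes] = v_reset                              # reset neurons that fired
        raster[t] = spikes
    return raster


# Usage: a 3-neuron "connectome" with 0 -> 1 wired and 1 -> 2 unwired.
W = np.array([[0.0, 0.0, 0.0],
              [1.2, 0.0, 0.0],
              [0.0, 0.0, 0.0]])
drive = np.array([1.5, 0.0, 0.0])  # only neuron 0 receives sensory-like input
raster = simulate_lif(W, drive)
print(raster.sum(axis=0))          # spike counts per neuron; neuron 2 stays silent
```

Activity flows only along the wired edge: neuron 1 fires one step after each spike of neuron 0, while the unconnected neuron 2 never fires. Swapping in a real connectome's weight matrix and reading out designated motor neurons is the conceptual step the post describes; calibrating the neuron model and closing the loop through a body are where the real work lies.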

A new Spinosaurus species uncovered in northern Niger appears to have been a wading predator of fish like its close relatives, but it lived as many as 1000 kilometers inland from the Tethys Sea. The fossil find may represent a third phase of evolution for this group of massive, fish-eating dinosaurs. Learn more this week in Science: scim.ag/4tOnxpY