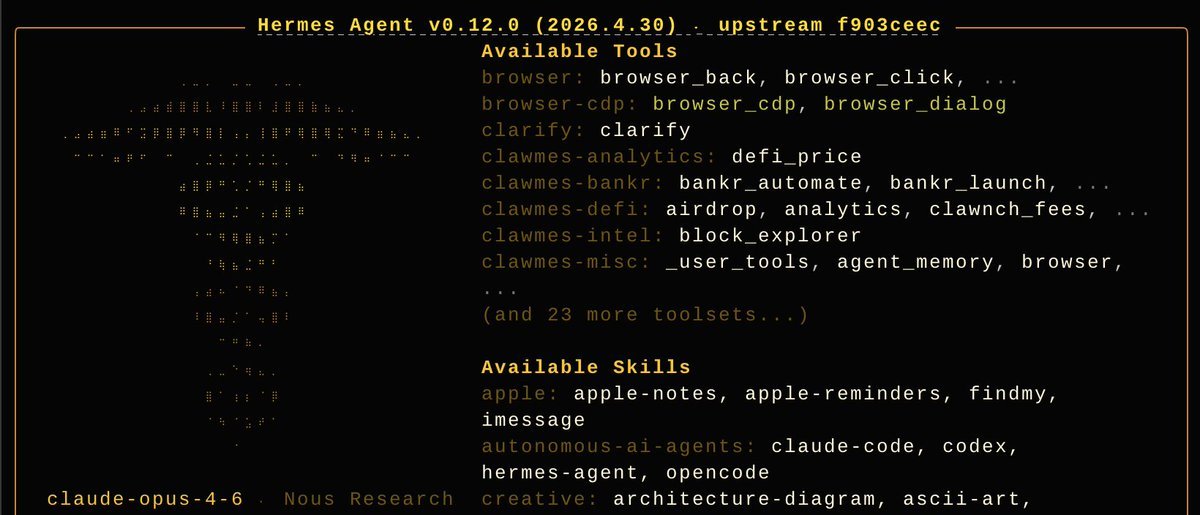

[clawmes-analytics]
* defi_price

[clawmes-bankr]
* bankr_automate
* bankr_launch
* bankr_leverage
* bankr_polymarket

[clawmes-defi]
* airdrop
* analytics
* clawnch_fees
* clawnch_launch
* clawnx
* cost_basis
* defi_lend
* defi_stake
* farcaster
* giza
* governance
* herd_intelligence
* hummingbot
* liquidity
* lobster_cash
* market_intel
* molten
* nft
* nookplot
* paysponge
* privacy
* safe
* watch_activity
* wayfinder
* yield

[clawmes-intel]
* block_explorer

[clawmes-misc]
* _user_tools
* agent_memory
* browser
* compound_action
* session_recall
* skill_evolve

[clawmes-trading]
* bridge
* defi_balance
* defi_swap
* manage_orders

[clawmes-wallet]
* approvals
* clawnchconnect
* permit2
* transfer

🦞🪽