Aaron Levie@levie

One hard problem with AI right now is retrieval augmented generation (RAG) with wide-ranging heterogeneous information. A common architecture pattern in AI right now is that you connect up a large amount of data to an AI model, and when a user or machine sends in a query, you find the best matches from the underlying data set and then send that information to the AI model to answer the user's prompt. This is a very efficient way to be able to have an AI access information that is frequently changing (like web data) or potentially wouldn't be appropriate to have in an underlying training set of the AI model (like private corporate data).

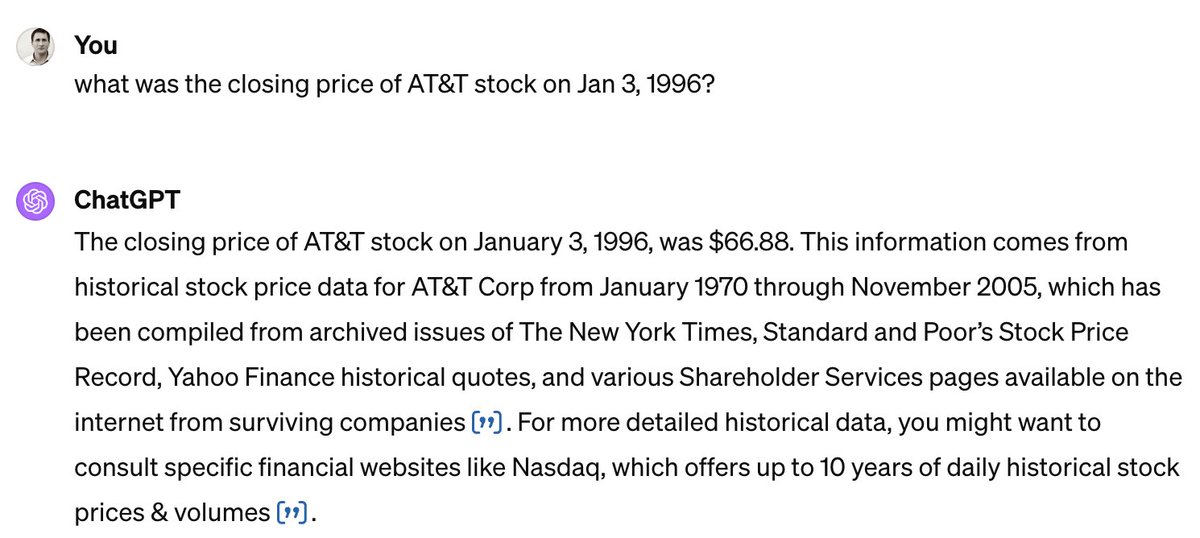

This is a relatively fundamental and breakthrough architecture in AI, but there's a small catch. The AI's answer is only as good as the underlying information that you serve it in the prompt. And because the user isn't the one giving it the data, but instead a computer, you're at the mercy of how good that computer is at finding the right information to give the AI to answer the question. Which means, of course, you're also at the mercy of how good, accurate, up-to-date, and authoritative your underlying information is that you're feeding the AI model in the prompt.

Let's take a very basic example. Say you ask an AI that's connected to the web, "who are the top movie studio heads right now?". The issue is that there's not many authorative webpages on the internet that are the singular list of movie studio heads *right now* (or for any esoteric topic for that matter). A human would do lots of browsing, compare answers between sites, check corporate webpages, and more just to decide an answer. With AI, we're often at the mercy of a search engine going across the web and trying to find various articles and determining their accuracy and authoritativeness to try and eventually find enough information to produce an answer. Chances are some of those articles are out of date within a year or even were wrong to begin with, but the AI has no reason to know that -- so it will still use that information in its underlying prompt, and lo and behold it can produce an inaccurate answer.

The challenge also occurs on smaller data sets, as well. Imagine an AI assistant that has access to all of your documents, emails, and calendar, and you ask a simple question like "what was last quarter's revenue?" Not only does the AI have to figure out how to assess the information that is tied specifically to "last quarter", but also it has to wrangle the possibly conflicting information in your underlying data. You may have an email that had early -but not verified- revenue results, that are different from draft documents that the finance team created, which are different yet again from the final documents with the results. AI is still quite constrained in its "intelligence" to be able to triage these conflicting answers *today* to produce an accurate result, no matter how confident it sounds.

There are some awesome efforts to try and solve this problem, and it's clear this will continue to become less and less of an issue. With Box AI, to address this challenge, we just launched a new feature in beta called Box Hubs. With Hubs, users pre-curate content that is the "authoritative" source of truth for particular topics and information -- say for instance, Sales materials, HR documents, or R&D files. When you ask an AI question in a Hub, you are aiming that question at the most up-to-date and relevant information for that topic. In our early testing and rolling out it dramatically reduces the issue of getting confusing data, and delivers more accurate answers.

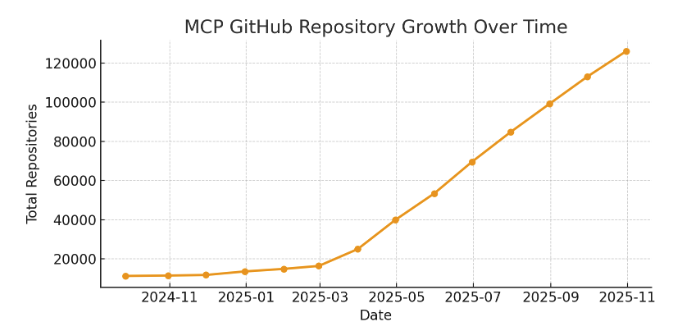

I'm excited to see many other breakthroughs in this space -- from better ways of organizing the web over time to AI agents that can fully browse the web and do more of the "research" that a human would when finding information.