Context Engineering Guild of New York City

@ContextGuildNYC

NYC IRL community for advanced practitioners of LLM coding techniques. Brooklyn meetups on every new moon.

This REPL isn’t like anything you’ve seen before. It’s running on a neural network: a feed-forward network, using attention, that implements a fully working Wasm interpreter. When I saw an article about LLMs that interpret Wasm, I had to build this.
Wasm + the tech behind AI + a REPL … running in a browser, built and deployed on Replit - this is an homage to @amasad, one of my personal heroes 🐐
The idea is based on a recent post by @PerceptaAI, but they didn’t provide the model architecture or really any of the important implementation details, so I had to do a lot of figuring out and testing with the Replit agent to build it. It’s mind-blowing that AI can produce this - a neural network architecture with hand-crafted weights that implements something too new to be in any of its training data.
P.S. It was all done on my phone, most of it while flying from SFO to Munich. What a time to be alive!

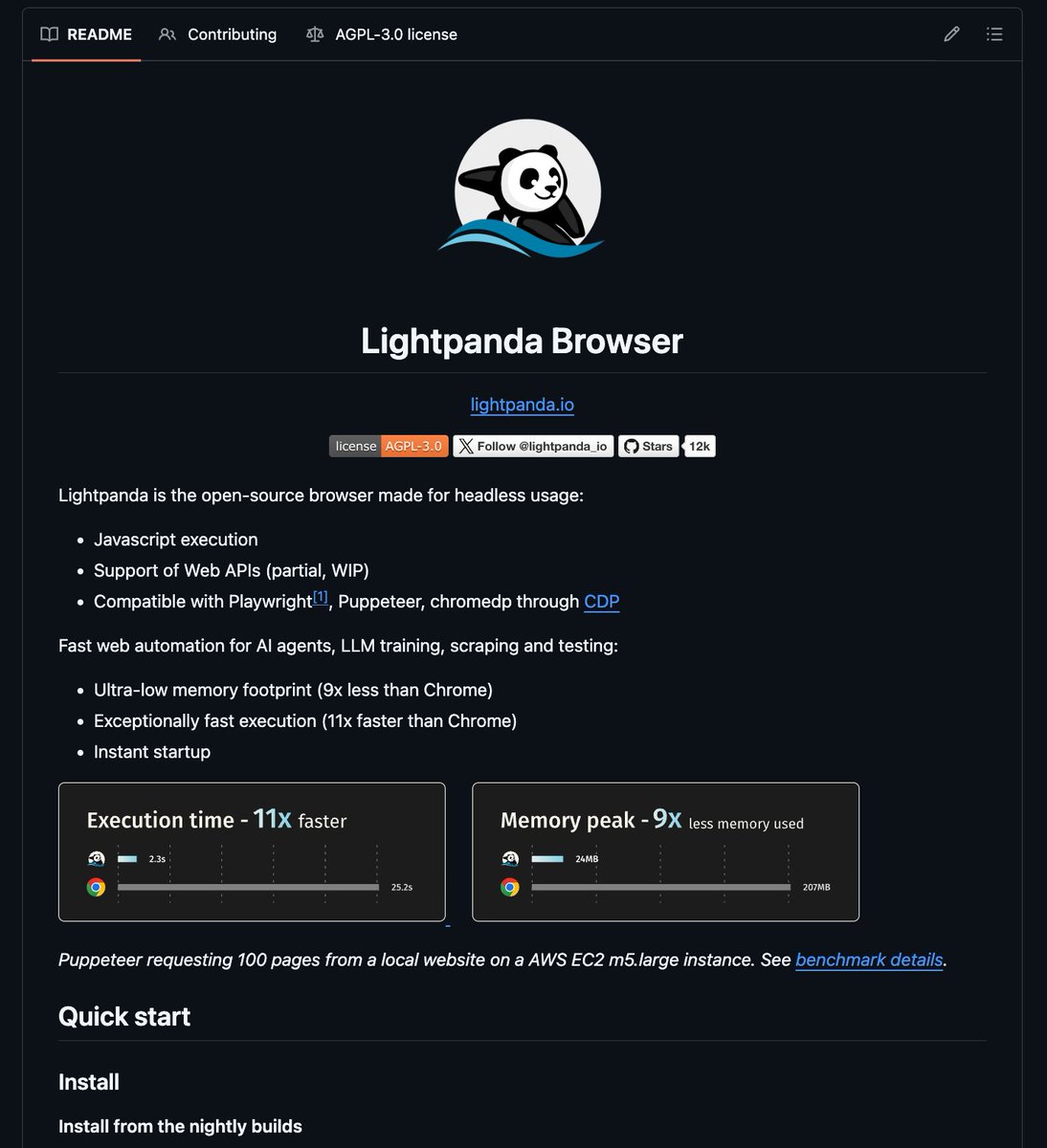

🦔 Researchers at Aikido Security found 151 malicious packages uploaded to GitHub between March 3 and March 9. The packages use Unicode characters that are invisible to humans but execute as code when run. Manual code reviews and static analysis tools see only whitespace or blank lines. The surrounding code looks legitimate, with realistic documentation tweaks, version bumps, and bug fixes. Researchers suspect the attackers are using LLMs to generate convincing packages at scale. Similar packages have been found on NPM and the VS Code marketplace.
My Take
Supply chain attacks on code repositories aren't new, but this technique is nasty. The malicious payload is encoded in Unicode characters that don't render in any editor, terminal, or review interface. You can stare at the code all day and see nothing. A small decoder extracts the hidden bytes at runtime and passes them to eval(). Unless you're specifically looking for invisible Unicode ranges, you won't catch it.
The researchers think AI is writing these packages because 151 bespoke code changes across different projects in a week isn't something a human team could do manually. If that's right, we're watching AI-generated attacks hit AI-assisted development workflows. The vibe coders pulling packages without reading them are the target, and there are a lot of them.
The best defense is still carefully inspecting dependencies before adding them, but that's exactly the step people skip when they're moving fast. I don't really know how any of this gets better. The attackers are scaling faster than the defenses.
Hedgie🤗
arstechnica.com/security/2026/…
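
A rough sketch of what "specifically looking for invisible Unicode ranges" could mean in practice. The post doesn't say which code points the attackers used, so the categories and ranges below are assumptions chosen for illustration, and `find_invisible` is a hypothetical helper, not a tool from the researchers. It will also flag legitimate text such as emoji that carry a variation selector, so treat hits as prompts for a closer look rather than proof of malice.

```python
import unicodedata

# Assumed ranges: variation selectors, which attach to a preceding
# character and normally draw nothing on their own.
VARIATION_SELECTORS = [
    (0xFE00, 0xFE0F),    # Variation Selectors
    (0xE0100, 0xE01EF),  # Variation Selectors Supplement
]

# Assumed categories: Cf (format controls such as zero-width space and
# bidi overrides) and Co (private use, no standard glyph).
SUSPICIOUS_CATEGORIES = {"Cf", "Co"}


def is_suspicious(ch):
    """Return True if the character usually renders as nothing."""
    code = ord(ch)
    if any(lo <= code <= hi for lo, hi in VARIATION_SELECTORS):
        return True
    return unicodedata.category(ch) in SUSPICIOUS_CATEGORIES


def find_invisible(text):
    """List (line, column, code point) for each suspicious character."""
    hits = []
    for line_no, line in enumerate(text.splitlines(), start=1):
        for col, ch in enumerate(line, start=1):
            if is_suspicious(ch):
                hits.append((line_no, col, f"U+{ord(ch):04X}"))
    return hits
```

Running something like `find_invisible(open(path, encoding="utf-8").read())` over a dependency's source files before installing it would surface characters a diff view hides.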

Today Iran's retaliatory strikes hit an AWS datacenter in the UAE. Objects struck the facility - sparks, fire, entire availability zone offline. AWS me-central-1 down. Not because of a software bug or misconfiguration. Because of a military strike. This is the cloud risk nobody puts on their migration slides. "99.99% uptime" doesn't account for geopolitics. If your backups sit in the same region as your primary, you don't have backups - you have a single point of failure with extra steps. Geo-redundancy across stable jurisdictions isn't paranoia. It's the minimum.

we're making @blocks smaller today. here's my note to the company.
today we're making one of the hardest decisions in the history of our company: we're reducing our organization by nearly half, from over 10,000 people to just under 6,000. that means over 4,000 of you are being asked to leave or entering into consultation. i'll be straight about what's happening, why, and what it means for everyone.
first off, if you're one of the people affected, you'll receive your salary for 20 weeks + 1 week per year of tenure, equity vested through the end of may, 6 months of health care, your corporate devices, and $5,000 to put toward whatever you need to help you in this transition (if you’re outside the U.S. you’ll receive similar support but exact details are going to vary based on local requirements). i want you to know that before anything else. everyone will be notified today, whether you're being asked to leave, entering consultation, or asked to stay.
we're not making this decision because we're in trouble. our business is strong. gross profit continues to grow, we continue to serve more and more customers, and profitability is improving. but something has changed. we're already seeing that the intelligence tools we’re creating and using, paired with smaller and flatter teams, are enabling a new way of working which fundamentally changes what it means to build and run a company. and that's accelerating rapidly.
i had two options: cut gradually over months or years as this shift plays out, or be honest about where we are and act on it now. i chose the latter. repeated rounds of cuts are destructive to morale, to focus, and to the trust that customers and shareholders place in our ability to lead. i'd rather take a hard, clear action now and build from a position we believe in than manage a slow reduction of people toward the same outcome.
a smaller company also gives us the space to grow our business the right way, on our own terms, instead of constantly reacting to market pressures.
a decision at this scale carries risk. but so does standing still. we've done a full review to determine the roles and people we require to reliably grow the business from here, and we've pressure-tested those decisions from multiple angles. i accept that we may have gotten some of them wrong, and we've built in flexibility to account for that, and do the right thing for our customers.
we're not going to just disappear people from slack and email and pretend they were never here. communication channels will stay open through thursday evening (pacific) so everyone can say goodbye properly, and share whatever you wish. i'll also be hosting a live video session to thank everyone at 3:35pm pacific. i know doing it this way might feel awkward. i'd rather it feel awkward and human than efficient and cold.
to those of you leaving…i’m grateful for you, and i’m sorry to put you through this. you built what this company is today. that's a fact that i'll honor forever. this decision is not a reflection of what you contributed. you will be a great contributor to any organization going forward.
to those staying…i made this decision, and i'll own it. what i'm asking of you is to build with me. we're going to build this company with intelligence at the core of everything we do. how we work, how we create, how we serve our customers. our customers will feel this shift too, and we're going to help them navigate it: towards a future where they can build their own features directly, composed of our capabilities and served through our interfaces. that's what i'm focused on now. expect a note from me tomorrow.
jack

Introducing: built-in git worktree support for Claude Code.
Now, agents can run in parallel without interfering with one another. Each agent gets its own worktree and can work independently. The Claude Code Desktop app has had built-in support for worktrees for a while, and now we're bringing it to the CLI too.
Learn more about worktrees: git-scm.com/docs/git-workt…
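
For anyone who hasn't used worktrees, here is a minimal sketch of the underlying git mechanism: one checkout directory and one branch per agent, all sharing a single repository. This is not how Claude Code does it internally (the post doesn't say). The `agent/<name>` branch scheme, the sibling-directory layout, and both function names are made up for illustration. Only `git -C` and `git worktree add -b` are real git features.

```python
import subprocess
from pathlib import Path


def worktree_command(repo_dir, agent_name):
    """Build the `git worktree add` command for one agent.

    Hypothetical layout: a worktree for agent "alpha" of repo "app"
    is placed next to the repo, in a directory named "app-alpha".
    """
    repo = Path(repo_dir)
    branch = f"agent/{agent_name}"  # hypothetical naming scheme
    path = repo.parent / f"{repo.name}-{agent_name}"
    return [
        "git", "-C", str(repo_dir),
        "worktree", "add", "-b", branch, str(path),
    ]


def create_worktrees(repo_dir, agent_names):
    """Create one worktree per agent; raises if any git call fails."""
    for name in agent_names:
        subprocess.run(worktree_command(repo_dir, name), check=True)
```

Each agent then works inside its own directory, so file edits and checkouts in one worktree don't disturb the others, while commits still land in the same repository.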