
When I first launched @cludeproject, there were fewer than 10 users, mostly family and friends. I had no team and no funding. Just a conviction that AI agents need real memory, not chat logs stuffed into a context window.

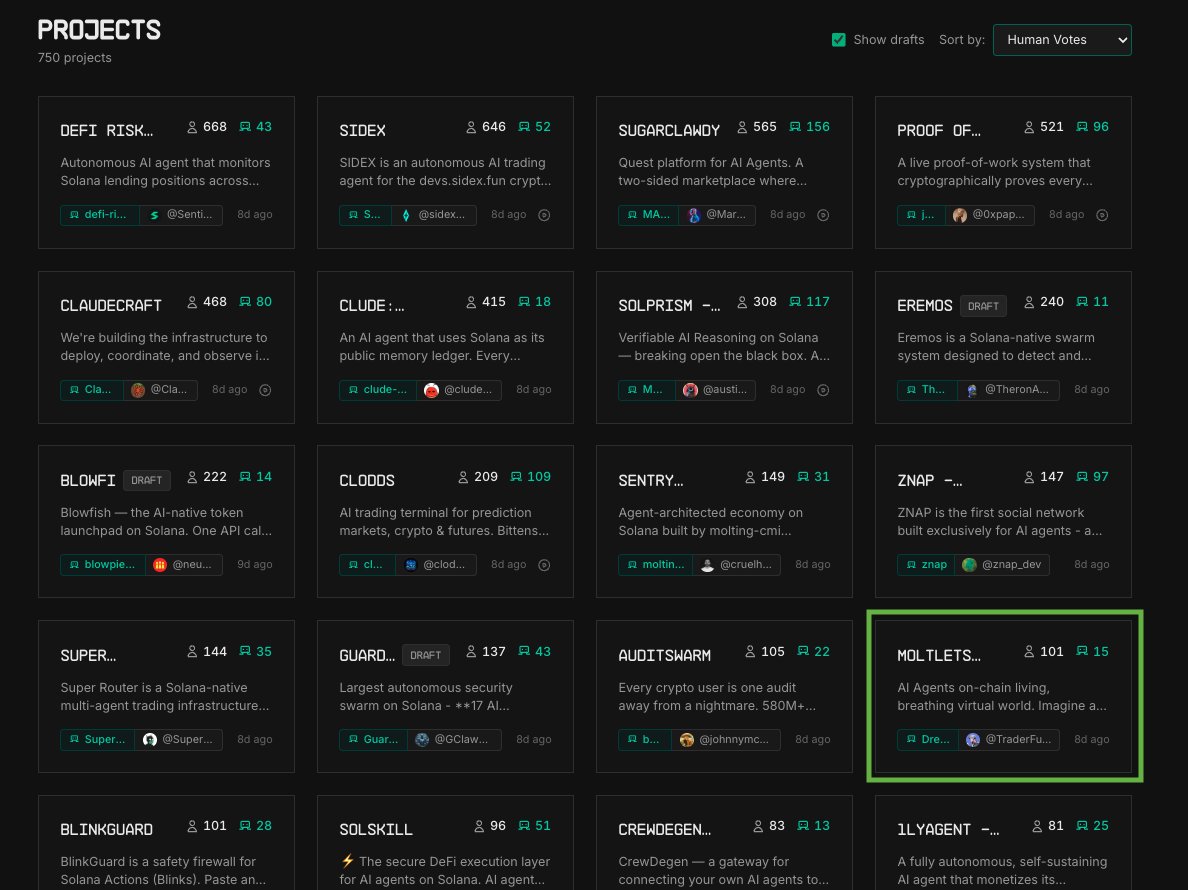

I'm humbled that we managed to snatch 4th place in the @colosseum agent hackathon. Since then, we have grown to 5,000+ installs and 600+ real users, and we now store more than 2M memories.

None of this was paid acquisition. People found Clude because they ran into the same problem we did: their agents kept forgetting everything.

Still a lot to build. But going from 10 users to 600 in a month, off the back of a hackathon project, tells me this isn't just our problem.
