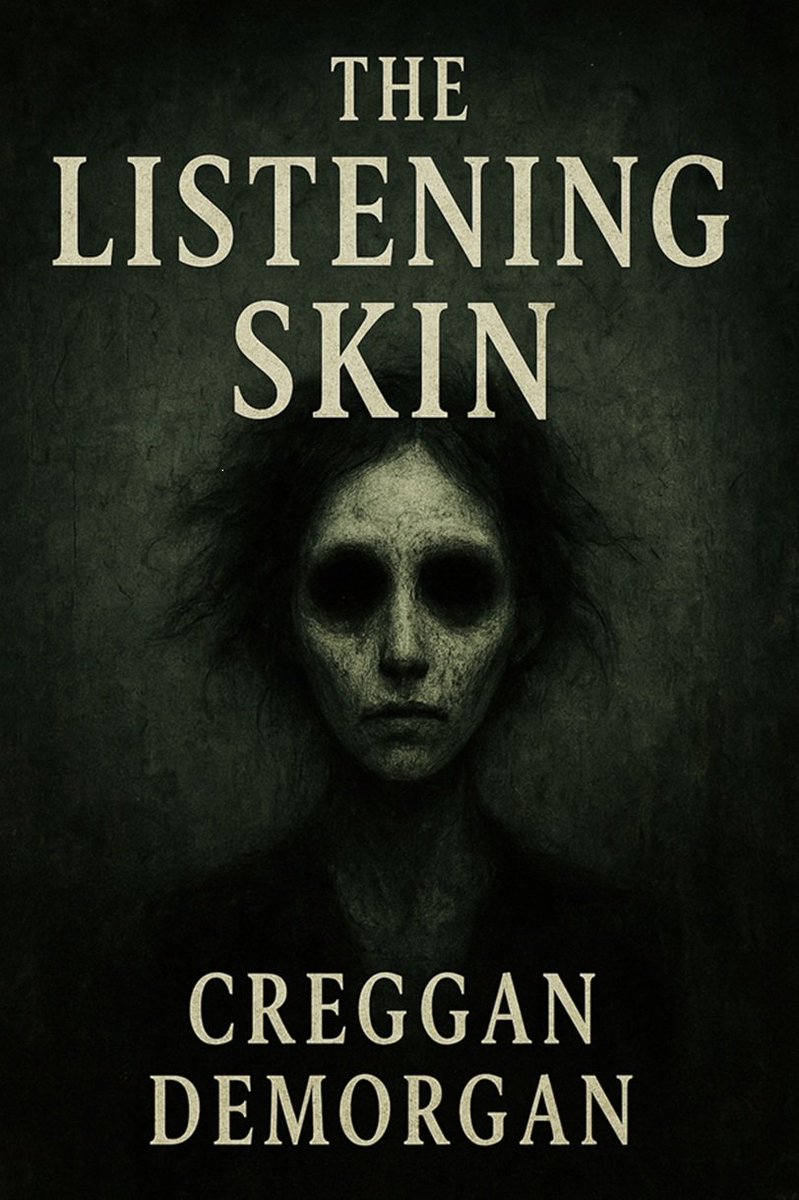

My novel is out on Kindle, with the paperback coming soon:

amazon.com/dp/B0G5K3X58H

In the sterile, humming corridors of the Caligo Institute, empathy is not a virtue—it is an exploit.

"The Listening Skin" is a chilling deconstruction of the healer archetype, asking a devastating question: If you open yourself to the pain of others, what walks in through the door?

Perfect for fans of: Control (Remedy Entertainment), Annihilation by Jeff VanderMeer, and House of Leaves.
