Laurisha

11K posts

@CryptoFilma

Content producer partnering with Innovators & Artists. Ex-@ConsenSys @CeloOrg Cinephile with a keyboard.

LA · Joined December 2010

1K Following · 835 Followers

@CryptoFilma Do you think this is Mom safe?

We thought it'd be a good fit for our mom but idk

Knives Out meets Babe.

I went in blind and cried 3x.

DiscussingFilm@DiscussingFilm

‘THE SHEEP DETECTIVES’ is now one of the highest rated wide-release films of 2026 with 97% on Rotten Tomatoes. The film follows a flock of sheep who must solve the mysterious murder of their shepherd.

@CryptoFilma Already fully moved to group projects and clinical simulations in the business ethics undergrad class I created at @CalPoly

I fully believe we’ll soon have schools that return to handwritten essays, oral assessments, and more group projects.

And the littles will get more playtime, which does wonders for the mind.

Squawk Box@SquawkCNBC

"There shouldn't be a classroom in America from kindergarten to PhD where you're allowed to use your personal devices," says @ArthurBrooks. "We're rewiring their brains to become lonely and depressed." cnb.cx/4tulKVu

@ryanvanasse The contrast is stark! I wrote a pilot about crypto, and now I'm having to rewrite it to really reflect social sentiment honestly

@CryptoFilma @Consensys I've been following crypto (at a distance) since 2011 or so, and the change between the enthusiast then and the crypto bro now is pretty stark. It was really a currency designed to be spent then, and now it seems much more of a speculative asset.

I started my first FT crypto role in 2018. Everyone at @Consensys wanted to replace banks, and now the industry sells the most to them.

Not stating it's right or wrong, just an observation...

An AI founder told me to my face last month that I was probably a better prompter than most—but that my job would be obsolete if I didn’t keep honing that skill.

If they’re saying this to my face, they certainly aren’t fighting for marketing FTEs.

So I told him, “Yes, I do have prompting skills, and I believe taste and all art have to stay with humans.”

Then I politely left the convo.

@abigailcarlson_ This after a 7-year career break too...there's a memoir here

I wonder if there's anything in the Bible about golden idols and stuff

Christopher Hale@ChristopherHale

NEW: MAGA evangelical leaders gather in Mar-a-Lago to bless and dedicate a gold statue dedicated to Donald Trump.

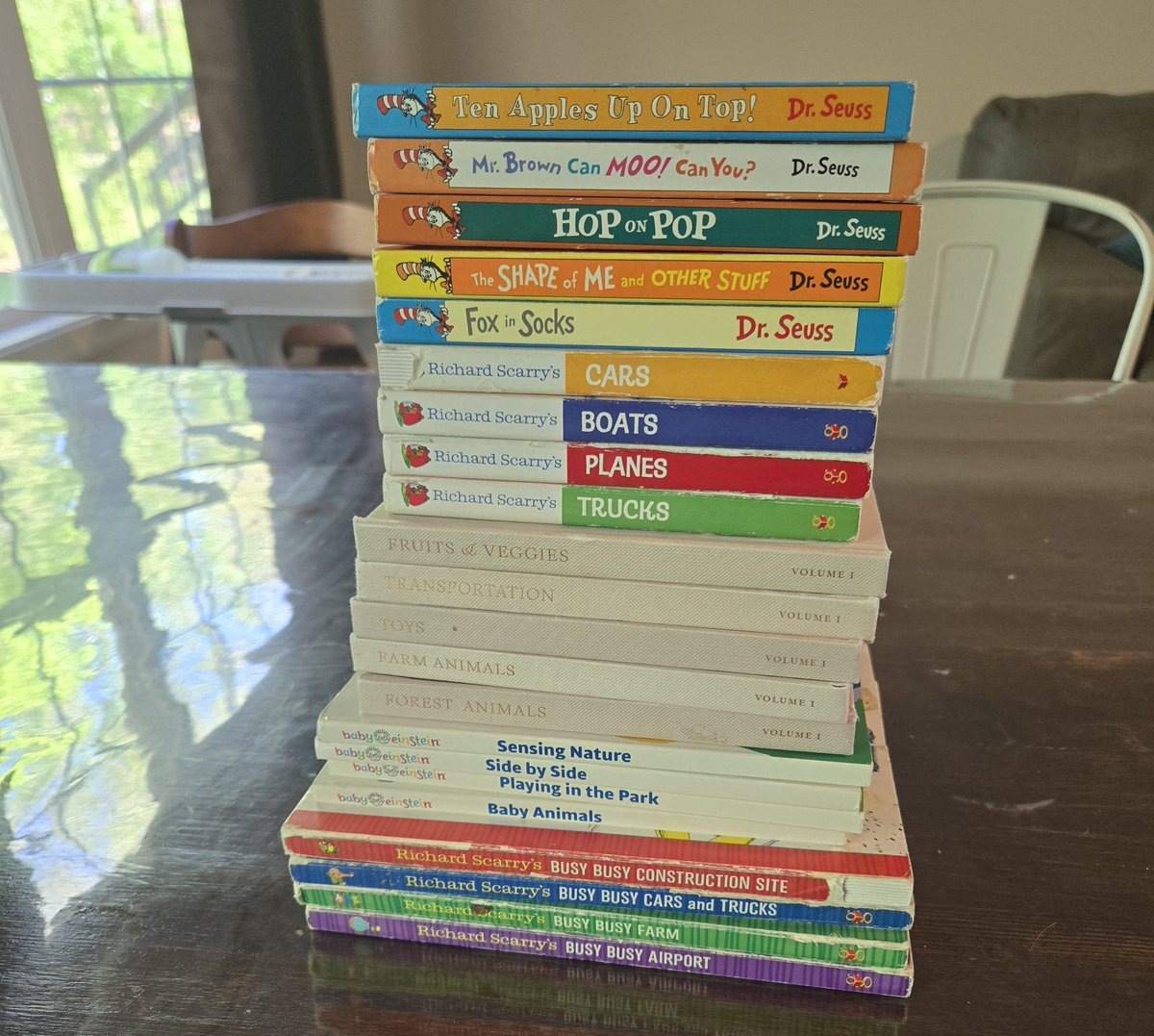

@abbythelibb_ I thought my memory was bad until I started reciting Dr.Seuss books in the car

Laurisha retweeted

Ted Turner, founder of CNN, died today at 87. nytimes.com/2026/05/06/bus… Most obituaries will focus on his enormous impact on the media world, but I'd also note that he revolutionized philanthropy. Before him, rich people gave to museums, universities, churches. They spent more money buying paintings of women than actually helping women or girls. And then in 1997, Ted changed that almost by accident in a speech. “I was on my way to New York to make the speech,” Ted recalled to me later. “I just thought, what am I going to say?” So, to make the speech interesting, he announced he was going to give $1 billion to the UN to fight global poverty. (Here's the piece where he described how he made that gift: nytimes.com/2012/12/27/opi… ) That started a competition among tycoons to be more philanthropic and led many more to try to help the needy. He made giving cool, and he saved countless lives. RIP, Ted Turner, and thanks for all you did.

@_adamfernandez 🫠 I understand AI replacing junior role takes (tho not great for the economy) but taking over teams, that's where it crosses the line imo

And yet it's becoming the norm.
