Star | Technology & Cyber Law Attorney

2.5K posts

@CyberLawStar

Founding Partner of Cyber Law Firm | Views are mine | Media Inquiries: [email protected] | https://t.co/8L85NFKko0 | #privacylaw #technologylaw

As major news outlets cut off the Wayback Machine, journalists and advocacy groups are rallying to protect the Internet Archive’s vast collection of web pages. wired.com/story/the-inte…

If Meta is a "publisher," then, like any other publisher, it needs to be held liable for any defamatory material it publishes, just as the rest of us are.

I'm concerned about this verdict and the overall trend of treating speech platforms as addictive — and therefore dangerous — products. Also, the verdict diminishes the responsibility parents have to raise healthy kids. For example: "Kaley says she began using YouTube at age 6 and Instagram at age 9 and told the jury she was on social media 'all day long' as a child." Where were the parents?

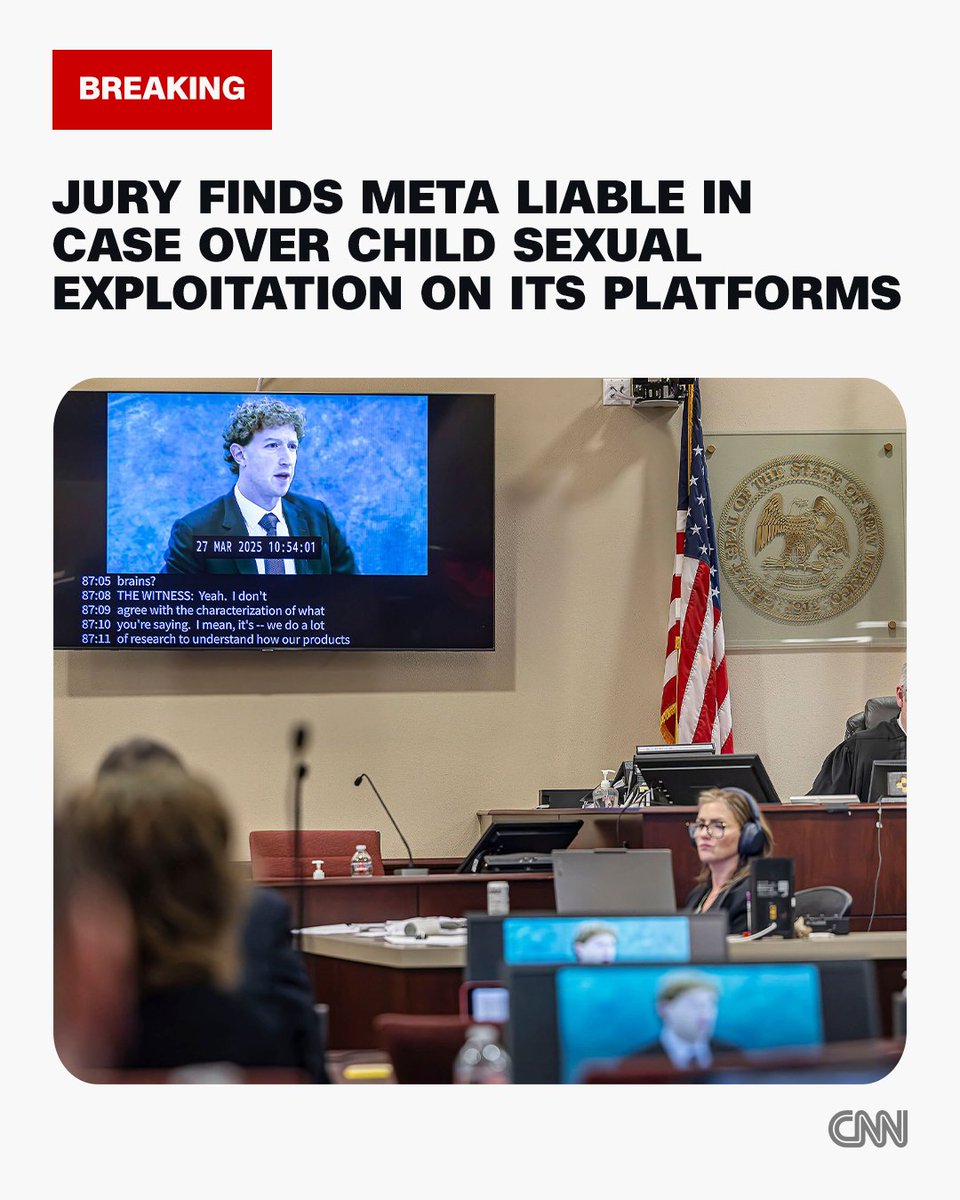

Jury finds Meta and YouTube liable in landmark social media trial that accused the tech giants of harming a woman's mental health cnn.it/47TlGqg

SANTA FE, N.M. (AP) — New Mexico jury finds Meta's platforms are harmful to children's mental health and imposes $375 million penalty.

JUST IN: YouTube now has a popup asking "does this feel like AI slop?" to help combat low-quality AI-generated videos.