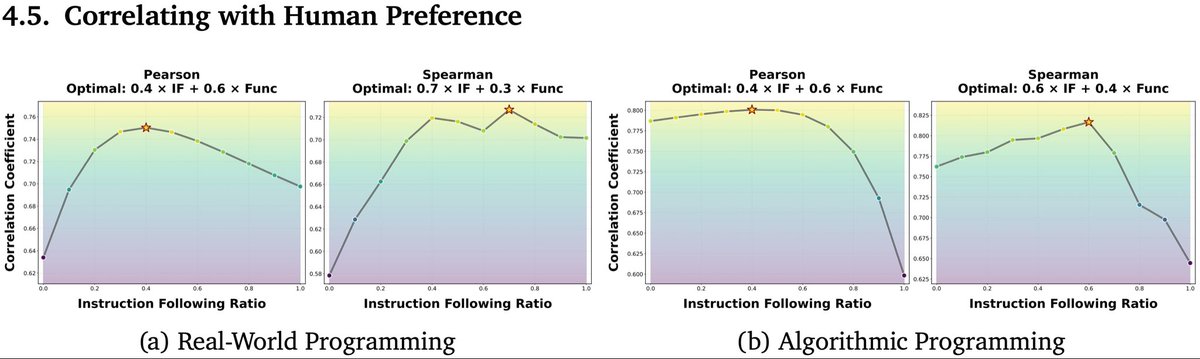

Today on the blog, we introduce the notion of sufficient context for examining retrieval-augmented generation (RAG) systems. We develop a method to classify instances by whether their context is sufficient, analyze failures of RAG systems & propose a way to reduce hallucinations. Read more → goo.gle/43gp3Vk