Daniel W. Linna Jr.

12K posts

@DanLinna

prof & Dir Law & Technology, @NorthwesternLaw & @NorthwesternEng; @CodeXStanford Affiliated Faculty; frmr #BigLaw partner; AI4Law; Law4AI; ppl+process+data+tech

Elon Musk reveals the brutal math behind why a single hour of his time is worth $100 million: "Tesla this year will do over $100 billion in revenue, so that's $2 billion a week. If I make slightly better decisions I can affect the outcome by a billion dollars. The marginal value of a better decision can easily be in the course of an hour $100 million." "You have to look at it on a percentage basis. If you look at it in absolute terms, I would never get any sleep. I'd just keep working and work my brain hotter, trying to get as much as possible out of this meat computer."

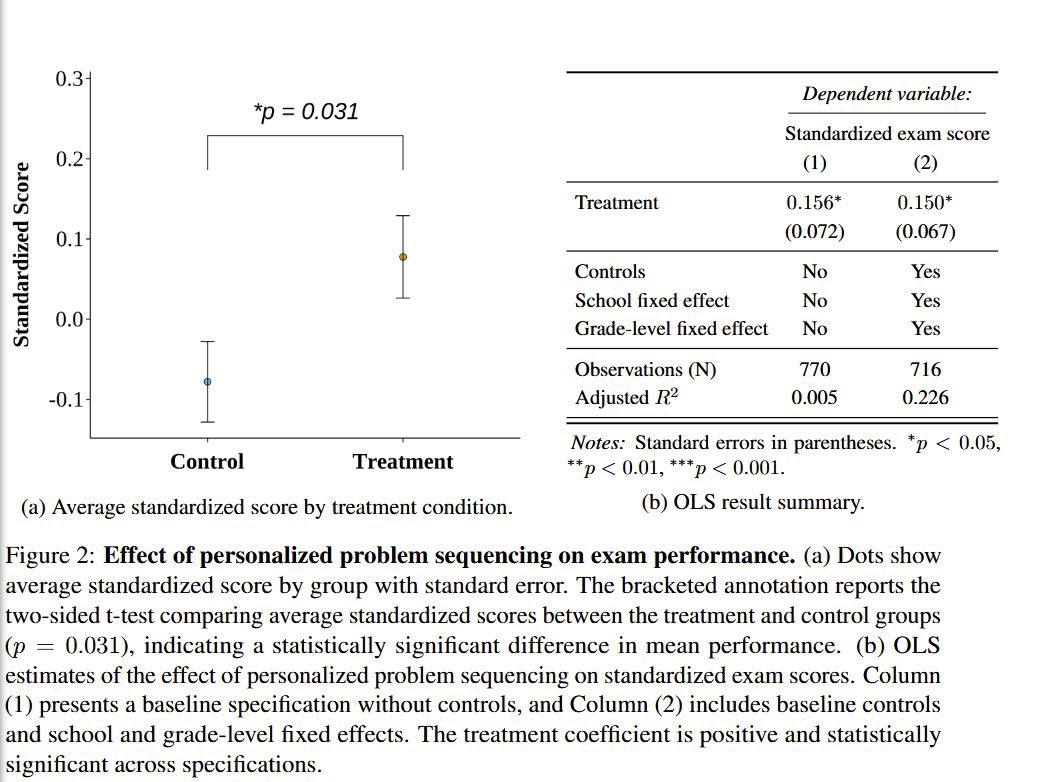

Yes, just having students “use AI to study” hurts learning (a helpful assistant is not a tutor), but using AI prompted to act like a tutor, especially with teacher support, seems to have large positive effects on learning in randomized trials. papers.ssrn.com/sol3/papers.cf…

Students who used AI to study remembered less than those who did not.

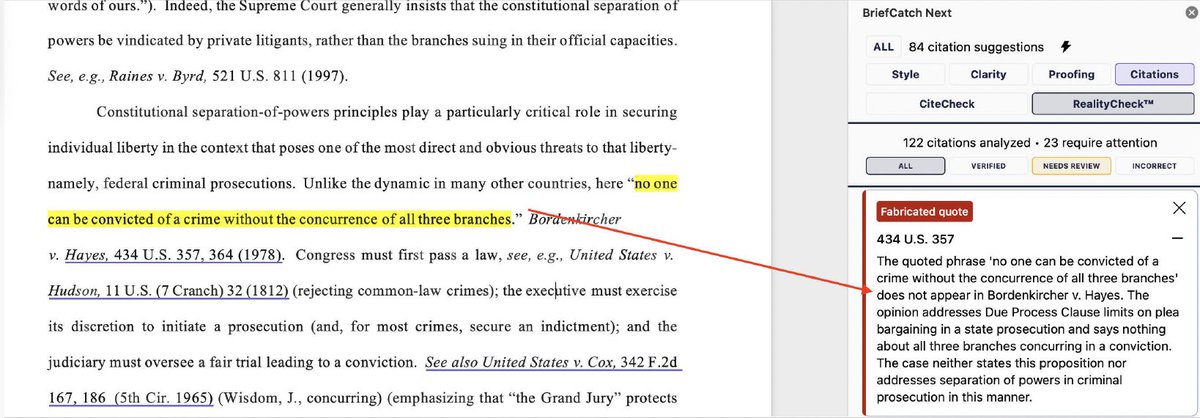

"Attorneys should ask themselves whether the time and effort they will save by using generative AI to draft a legal document is worth the damage their career and professional reputation will suffer if they do not ensure the document’s accuracy."

🚨New preprint! We find evidence of LLMs enabling people to file lawsuits without lawyers (filing "pro se") at historically unprecedented rates in federal courts.👇 1/n

This paper shows people are asking a lot of medical questions of AI already, but we have little evidence of how good or bad this is. Most of the published research uses old models & compares to doctors. How do new models compare to the info people would have gotten without AI?

Anthropic CEO Dario Amodei: “50% of all tech jobs, entry-level lawyers, consultants, and finance professionals will be completely wiped out within 1–5 years.”