Dan Oneață

Depending on the course project, it usually takes 8 to 15 minutes to go through the project with the student, and then they have up to 30 minutes to make the changes (I start the process in parallel with another student during this time).

This is easier to do when there is a project specification that everyone in the course has to respect.

To better prepare them for the project defense, I give them a few examples of possible changes during the semester so they can get a sense of what to expect during grading. This also signals which parts of the project are critical for them to understand. During the defense, they don't get the same questions, but the questions usually come from a similar distribution.

During the project defense, I write the changes they need to make on cards and let the students draw them. This way, there is no TA bias. The changes are of similar difficulty for someone who understands everything critical in the project.

Also, the changes can be worth, for example, 10% or 20% of the grade, so students who mess up don't necessarily fail the course, but they don't get the top grade.

Also, they have a few chances for project defenses during the year, so they can try again if they are not satisfied with how it went.

I hope some of this helps you and others.

A number of people are talking about implications of AI to schools. I spoke about some of my thoughts to a school board earlier, some highlights:

1. You will never be able to detect the use of AI in homework. Full stop. All "detectors" of AI imo don't really work, can be defeated in various ways, and are in principle doomed to fail. You have to assume that any work done outside classroom has used AI.

2. Therefore, the majority of grading has to shift to in-class work (instead of at-home assignments), in settings where teachers can physically monitor students. The students remain motivated to learn how to solve problems without AI because they know they will be evaluated without it in class later.

3. We want students to be able to use AI, it is here to stay and it is extremely powerful, but we also don't want students to be naked in the world without it. Using the calculator as an example of a historically disruptive technology, school teaches you how to do all the basic math & arithmetic so that you can in principle do it by hand, even if calculators are pervasive and greatly speed up work in practical settings. In addition, you understand what it's doing for you, so should it give you a wrong answer (e.g. you mistyped "prompt"), you should be able to notice it, gut check it, verify it in some other way, etc. The verification ability is especially important in the case of AI, which is presently a lot more fallible in a great variety of ways compared to calculators.

4. A lot of the evaluation settings remain at teacher's discretion and involve a creative design space of no tools, cheatsheets, open book, provided AI responses, direct internet/AI access, etc.

TLDR the goal is that the students are proficient in the use of AI, but can also exist without it, and imo the only way to get there is to flip classes around and move the majority of testing to in class settings.

Andrej Karpathy@karpathy

Gemini Nano Banana Pro can solve exam questions *in* the exam page image. With doodles, diagrams, all that. ChatGPT thinks these solutions are all correct except Se_2P_2 should be "diselenium diphosphide" and a spelling mistake (should be "thiocyanic acid" not "thoicyanic") :O

When they are defending the project, I ask them to show me just the critical parts of the code and to demonstrate the functionalities (if non-critical code for the course has problems, it can usually be spotted when they are showcasing the functionalities). For example, for the Algorithms and Data Structures class, a GUI is non-critical, and I only look at the code for it if it's not working.

I also mix in a few theoretical questions about the project, and finish by asking them to make some changes in the code.

When they are making the changes, a second student can start their project defense, so they overlap.

@njmarko @karpathy BTW did you find that AI-assisted students develop better understanding than non-AI-assisted ones? I taught a project-based course in 2024 & 2025, and had an oral exam at the end. The 2025 class turned in much better projects (thanks to AI), but their understanding was clearly worse.

As a TA, I allow students to use AI and teach them how to do so efficiently, thereby maximizing their learning experience.

Because AI can now complete many course projects in a single attempt (“one-shot” them), I require students to implement a modification to their project during the defense. They are informed in advance that they will be asked to do this.

Since even a simple change in functionality can affect multiple files, I permit AI-powered line autocompletion during the defense, provided students can identify where changes need to be made.

In this way, students can use AI tools without guilt while still being incentivized to develop a deep enough understanding of their project to explain and modify it confidently during the defense.

@HerrDreyer Thanks for the post! I would also be curious to read the 3,000-word draft about why the focus should be more on the delivery of the talk rather than a precise technical exposition 🙂

After triumphantly proclaiming the return of Herr Dreyer over two months ago, I finally managed to push out a proper blog post. Enjoy.

herrdreyer.wordpress.com/2025/10/19/on-…

@wkvong Thanks for the answer! I'll think about this! This paper suggests that a low temperature parameter (I see that you have used τ = 0.07) might help close the gap, but they have only carried out experiments on a toy example.

openreview.net/pdf?id=8W3KGzw…
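To make the role of the temperature τ concrete, here is a minimal sketch of a CLIP-style symmetric contrastive loss in plain Python. It is an illustration under stated assumptions, not the CVCL implementation: the function names, the toy 2-D embeddings, and the symmetric averaging are all hypothetical choices; only the value τ = 0.07 comes from the thread.

```python
import math

def l2_normalize(v):
    """Scale a vector to unit Euclidean length."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def cross_entropy_diagonal(logits):
    """Mean cross-entropy where the correct class for row i is column i."""
    total = 0.0
    for i, row in enumerate(logits):
        m = max(row)  # subtract the max for numerical stability
        log_sum_exp = m + math.log(sum(math.exp(x - m) for x in row))
        total += log_sum_exp - row[i]
    return total / len(logits)

def contrastive_loss(image_embs, text_embs, tau=0.07):
    """Symmetric InfoNCE-style loss: pair i of each modality is the positive,
    every other item in the batch acts as a negative."""
    imgs = [l2_normalize(v) for v in image_embs]
    txts = [l2_normalize(v) for v in text_embs]
    # Cosine similarities divided by the temperature tau.
    logits = [[sum(a * b for a, b in zip(img, txt)) / tau for txt in txts]
              for img in imgs]
    logits_t = [list(col) for col in zip(*logits)]  # text-to-image direction
    return 0.5 * (cross_entropy_diagonal(logits) + cross_entropy_diagonal(logits_t))

# Toy data (hypothetical): two image embeddings and their matching captions.
images = [[1.0, 0.0], [0.0, 1.0]]
texts = [[0.9, 0.1], [0.1, 0.9]]

aligned = contrastive_loss(images, texts)            # correct pairing, tau = 0.07
mismatched = contrastive_loss(images, texts[::-1])   # pairs swapped
warm = contrastive_loss(images, texts, tau=1.0)      # same pairs, higher temperature
```

On this toy batch the correctly paired loss is lower than the swapped one, and lowering τ sharpens the softmax so the same similarities yield a smaller loss.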

@DanOneata That’s a great question! We didn’t do any post processing of the embeddings, but anecdotally, we did observe a bigger modality gap with the out-of-distribution stimuli. I’m not sure what’s driving the difference but happy to chat if you have any ideas!

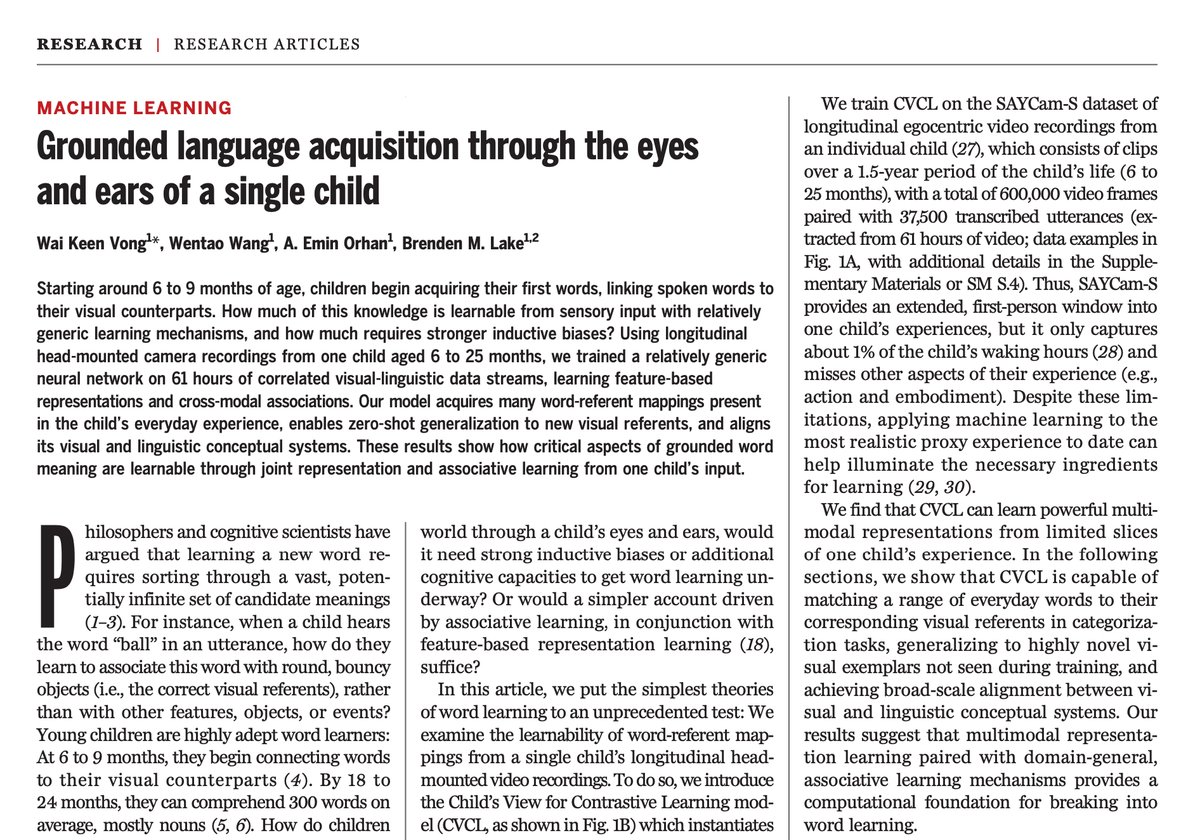

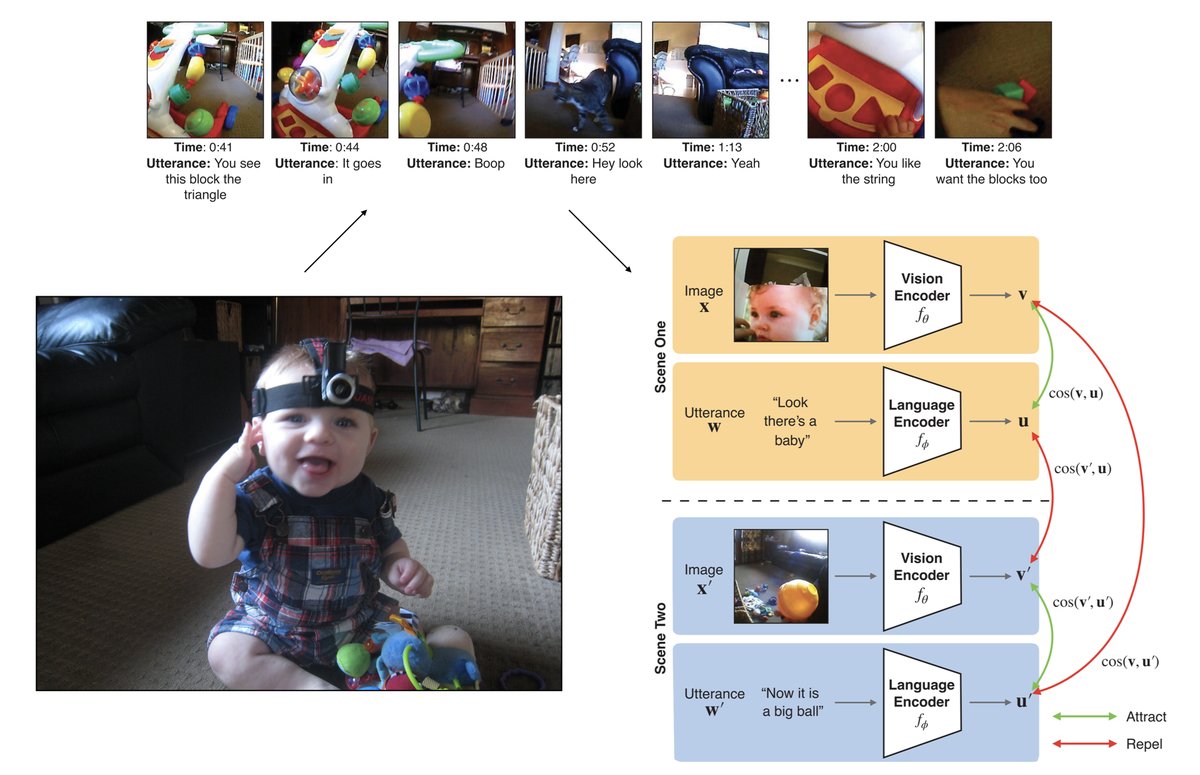

1/ Today in Science, we train a neural net from scratch through the eyes and ears of one child. The model learns to map words to visual referents, showing how grounded language learning from just one child's perspective is possible with today's AI tools. science.org/doi/10.1126/sc…

@wkvong I was curious: is the contrastive loss motivated in any way by cognitive science? The negative pairs used by the contrastive loss don't seem to have a direct counterpart in learning (while explicit negative corrections happen, they seem to be sparser than positive pairs).

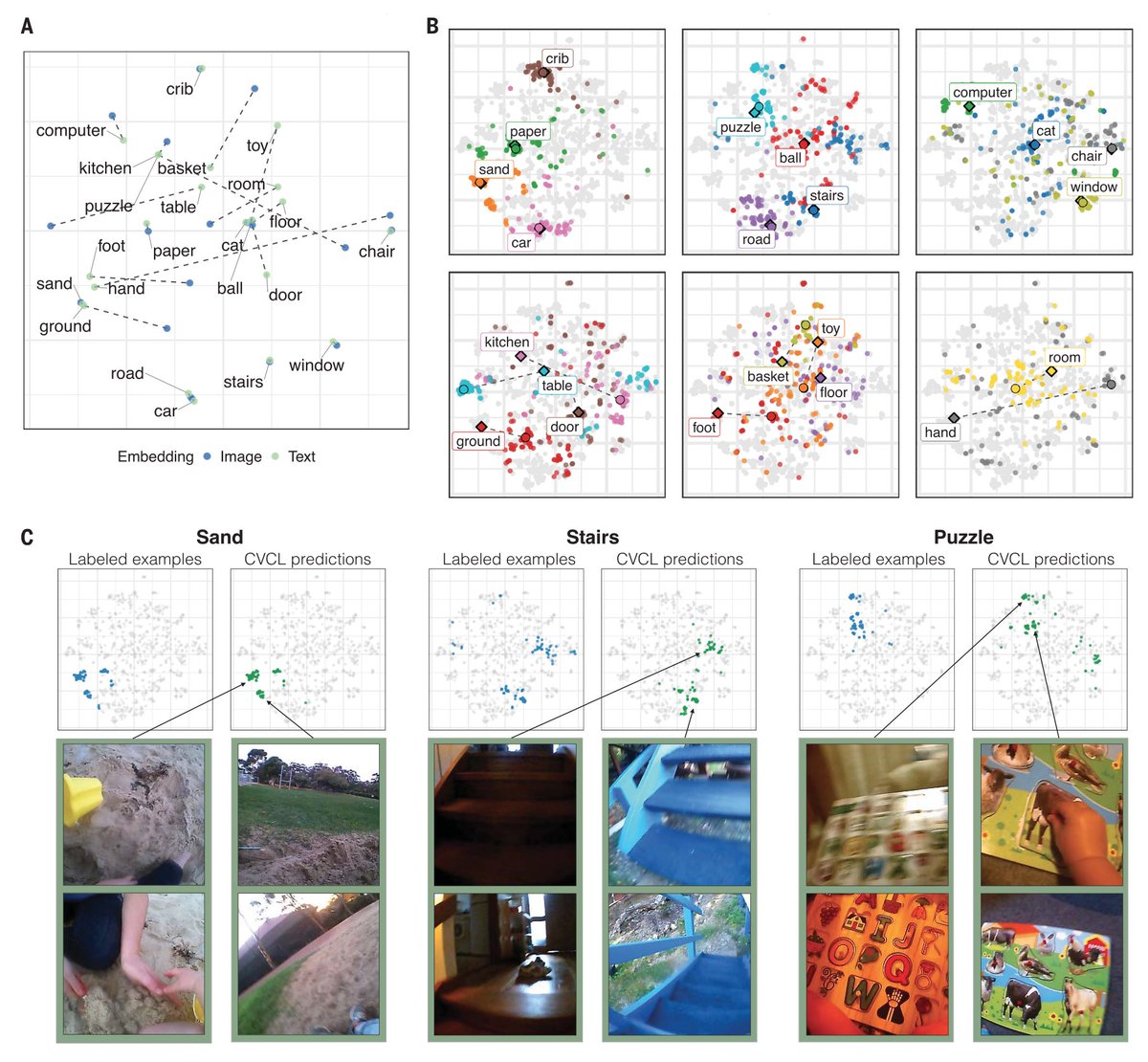

5/ We ran exactly this experiment. We trained a neural net (which we call CVCL, related to CLIP by its use of a contrastive objective) on headcam video, which captured slices of what a child saw and heard from 6 to 25 months. It’s an unprecedented look at one child’s experience, but still the data is limited: just 61 hours (transcribed) or about 1% of their waking hours.

@wkvong Does CVCL hear? From the model diagram it looks like the model gets text as input, not audio. Is that right? If so, do you think the conclusions would change in any way if it were to use audio?

8/ Limitations: A typical 2-year-old's vocabulary and word learning skills are still out of reach for the current version of CVCL. What else is missing? Note that the modeling, and the data, are inherently limited compared to children’s actual experiences and capabilities.

- CVCL has no taste, touch, smell.

- CVCL learns passively compared to a child’s fundamentally active and embodied experiences.

- CVCL has no social cognition: it doesn't explicitly perceive desires, goals, or social cues from others, nor is it motivated by wants and doesn’t realize language is a means of achieving wants (like cheerios!).

@wkvong I assume Fig. A (second image) shows audio and text embeddings. I'm surprised that they are so well aligned given that CLIP exhibits a modality gap [1]: images and text are placed in different spaces. Did you postprocess the embeddings in any way?

[1] openreview.net/forum?id=S7Evz…
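One simple way to put a number on a modality gap is the distance between the centroids of the two sets of normalised embeddings. The sketch below assumes that measure; the thread does not say how the gap was assessed, and the function names and toy 2-D vectors are hypothetical.

```python
import math

def l2_normalize(v):
    """Scale a vector to unit Euclidean length."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def centroid(vectors):
    """Component-wise mean of a list of equal-length vectors."""
    n = len(vectors)
    return [sum(col) / n for col in zip(*vectors)]

def modality_gap(embs_a, embs_b):
    """Euclidean distance between the centroids of two L2-normalised embedding sets."""
    center_a = centroid([l2_normalize(v) for v in embs_a])
    center_b = centroid([l2_normalize(v) for v in embs_b])
    return math.dist(center_a, center_b)

# Toy data (hypothetical): each modality clusters around its own direction.
image_embs = [[1.0, 0.1], [0.9, 0.2], [1.0, 0.0]]
text_embs = [[0.1, 1.0], [0.2, 0.9], [0.0, 1.0]]

gap = modality_gap(image_embs, text_embs)    # large: the two clusters sit apart
same = modality_gap(image_embs, image_embs)  # zero: identical sets share a centroid
```

A gap near zero would mean the two modalities occupy the same region of the embedding space; a large value means they sit in separate regions even when matching pairs are ranked correctly.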

6/ Results: Even with limited data, we found that the model can acquire word-referent mappings from merely tens to hundreds of examples, generalize zero-shot to new visual datasets, and achieve multi-modal alignment. Again, genuine language learning is possible from a child's view with today's AI tools.

@wkvong Neat work! Do you have a preprint of your work that you could share?

@abursuc I've also come across:

"[W]e do *not* aim to develop new components; instead, we make *minimal* adaptations that are sufficient to overcome the [...] challenges." [emphasis theirs]

This was an unexpected word of caution, since the paper was already interesting and consistent.

@srush_nlp How it started, how it's going 😀 (assuming I'm not mistaken and Hugh is indeed Chris's son)

@DanOneata I think they wanted to use him as a single DM or double pivot DM, but he is not versatile or tactically good enough (space awareness/occupation, defensive awareness) to handle that role.

@PiotrZelasko Flickr 8K and its associated spoken captions?

groups.csail.mit.edu/sls/downloads/…

@hippopedoid Previous discussion 🙂

twitter.com/tdietterich/st…

Thomas G. Dietterich@tdietterich

The term "ablation" is widely misused lately in ML papers. An ablation is a removal: you REMOVE some component of the system (e.g., remove batchnorm). A "sensitivity analysis" is where you VARY some component (e.g., network width). #pedantic

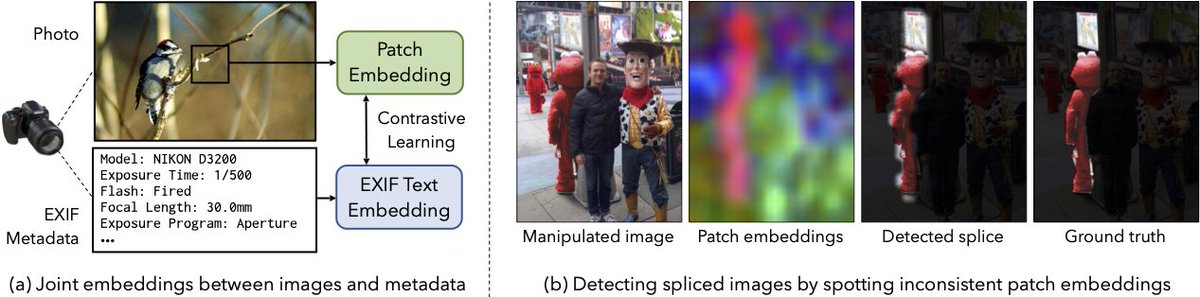

@andrewhowens Very nice work! Do you think the learnt features would also be suitable for training deep fake detectors? Usually, these detectors need to rely on low-level information, but as a consequence are very sensitive to data shifts (changes of the generator or its training dataset).

When you take a photo, the camera adds metadata to the image file. In our "EXIF as Language" paper at #CVPR2023, we learn a multimodal embedding between image patches and camera metadata, giving us a representation that captures low level image properties.

hellomuffin.github.io/exif-as-langua…

@bgavran3 @dshieble Here are some of these papers, in case you are curious:

- MLP-Mixer arxiv.org/pdf/2105.01601…

- ResMLP arxiv.org/pdf/2105.03404…

- gMLP arxiv.org/pdf/2105.08050…

@bgavran3 @dshieble I don't know if this is what you had in mind, but a couple of years ago there was a trend of removing the inductive biases even further by using only multi-layer perceptrons. Back then there was some discussion about whether the MLP layers are just convolutions. twitter.com/ylecun/status/…

Yann LeCun@ylecun

Well, not *actually* conv free. 1st layer: "Per-patch fully-connected" == "conv layer with 16x16 kernels and 16x16 stride" other layers: "MLP-Mixer" == "conv layer with 1x1 kernels"
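The first equivalence in the tweet can be checked numerically. The sketch below applies one shared linear map to every non-overlapping patch and compares it with a convolution whose kernel size and stride both equal the patch size. It is a toy illustration under assumptions: a single-channel 8x8 image with 4x4 patches stands in for the 16x16 case, there is no bias term, and all function names are hypothetical.

```python
import random

def per_patch_fc(image, weights, patch):
    """Apply the same linear map to every non-overlapping patch x patch block."""
    height, width = len(image), len(image[0])
    out = []
    for top in range(0, height, patch):
        row = []
        for left in range(0, width, patch):
            # Flatten the patch in row-major order.
            flat = [image[top + i][left + j]
                    for i in range(patch) for j in range(patch)]
            row.append([sum(w * x for w, x in zip(w_out, flat))
                        for w_out in weights])
        out.append(row)
    return out

def strided_conv(image, kernels, stride):
    """2D cross-correlation with square kernels, no padding, given stride."""
    height, width = len(image), len(image[0])
    k = len(kernels[0])
    out = []
    for top in range(0, height - k + 1, stride):
        row = []
        for left in range(0, width - k + 1, stride):
            row.append([sum(kern[i][j] * image[top + i][left + j]
                            for i in range(k) for j in range(k))
                        for kern in kernels])
        out.append(row)
    return out

random.seed(0)
PATCH, SIZE, DIM = 4, 8, 3  # toy sizes; the tweet's case is 16x16 patches
image = [[random.random() for _ in range(SIZE)] for _ in range(SIZE)]
weights = [[random.random() for _ in range(PATCH * PATCH)] for _ in range(DIM)]
# Reshape each weight vector into a PATCH x PATCH kernel (row-major).
kernels = [[w[i * PATCH:(i + 1) * PATCH] for i in range(PATCH)] for w in weights]

fc_out = per_patch_fc(image, weights, PATCH)
conv_out = strided_conv(image, kernels, PATCH)
max_diff = max(abs(a - b)
               for fc_row, conv_row in zip(fc_out, conv_out)
               for fc_vec, conv_vec in zip(fc_row, conv_row)
               for a, b in zip(fc_vec, conv_vec))
```

Both routes produce the same 2x2 grid of 3-dimensional patch embeddings, so `max_diff` is zero up to floating-point rounding. The second equivalence (a per-location MLP as a 1x1 convolution) follows the same pattern with a kernel that covers a single position.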
