Danny Driess

@DannyDriess

Research Scientist @physical_int. Formerly Google DeepMind

Many real-world tasks require memory to be successful. Yet, most robots don’t have any form of memory. Today, we are going to change that. We developed a system called MEM that introduces memory into VLAs on multiple scales

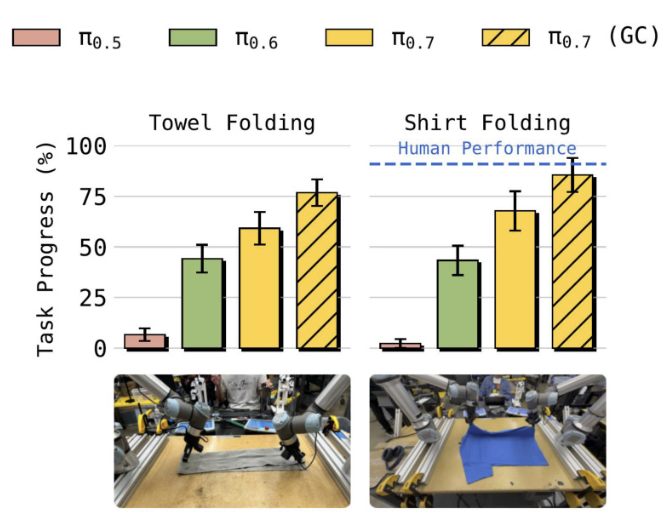

Our newest model, π0.7, has some interesting emergent capabilities: it can control a new robot, for which we had no shirt-folding data, to fold shirts, figure out how to use an appliance with language-based coaching, and perform a wide range of dexterous tasks, all in one model!

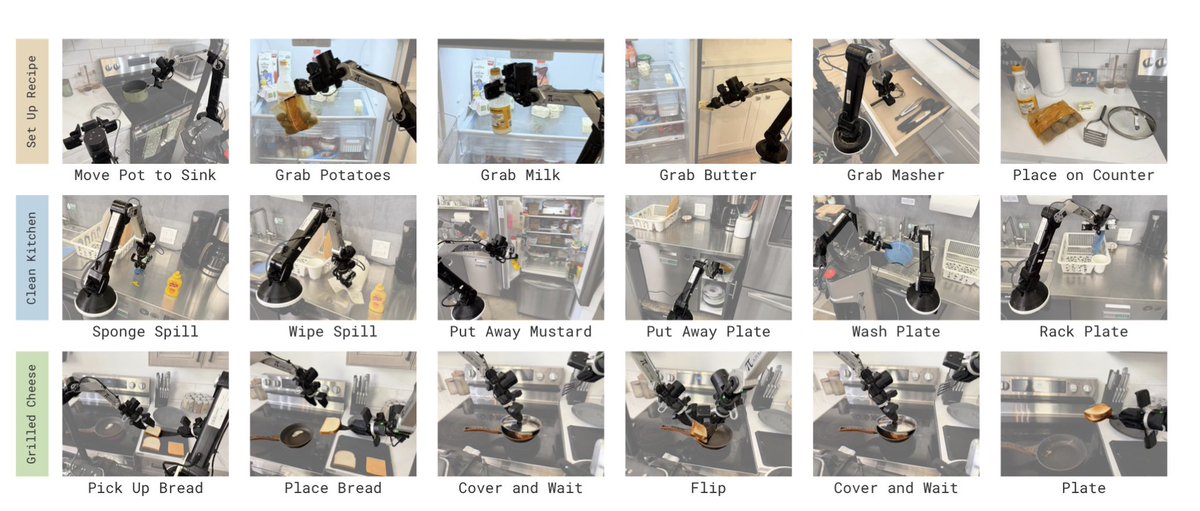

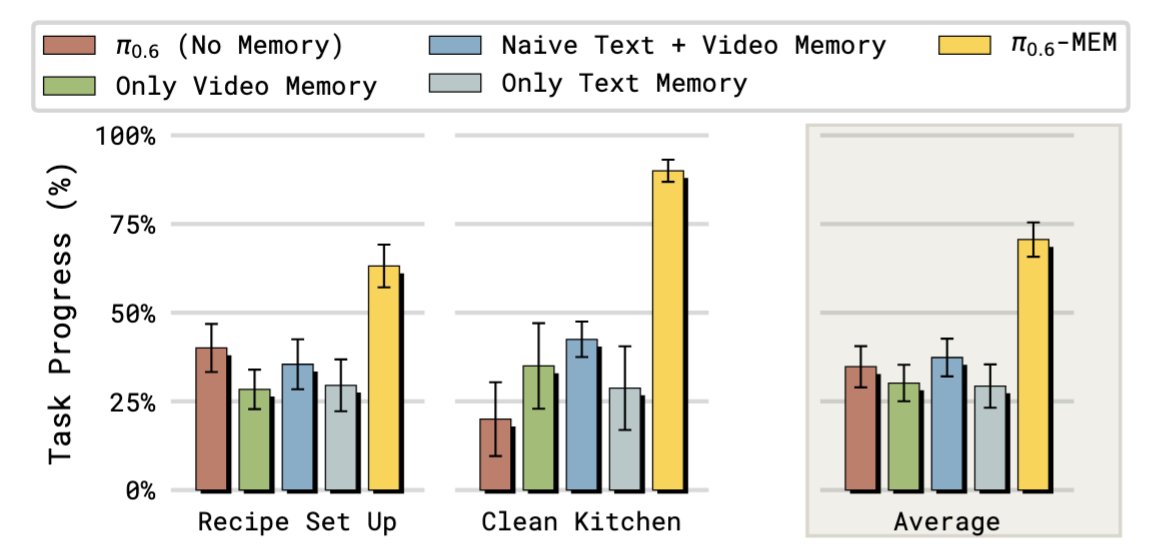

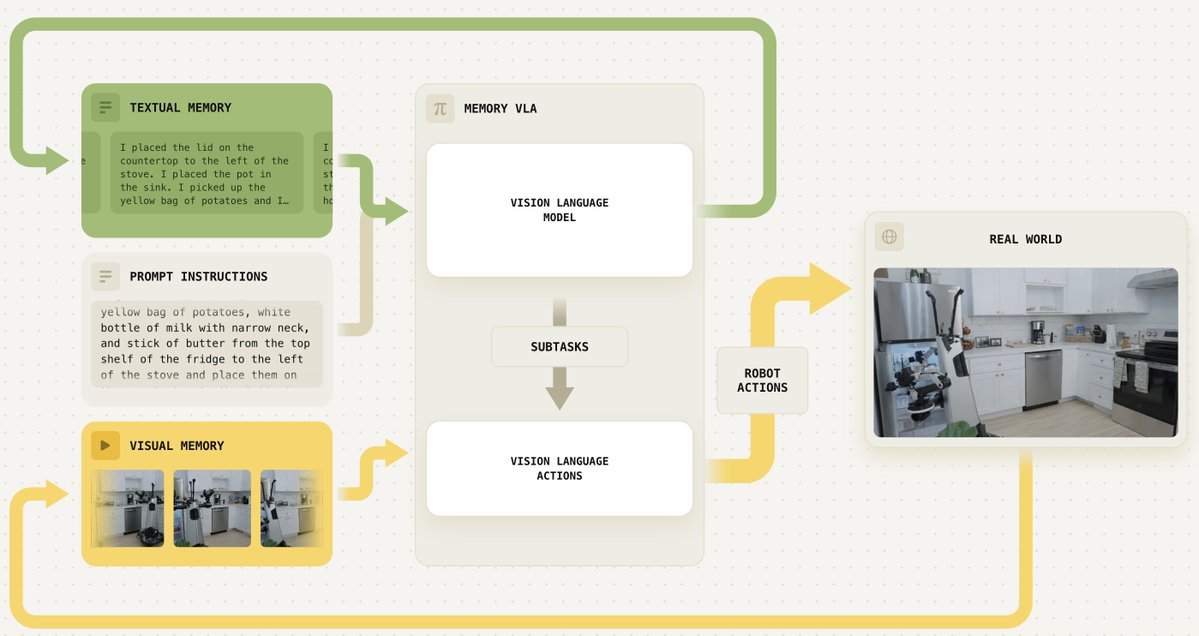

We’ve developed a memory system for our models that provides both short-term visual memory and long-term semantic memory. Our approach allows us to train robots to perform long and complex tasks, like cleaning up a kitchen or preparing a grilled cheese sandwich from scratch 👇

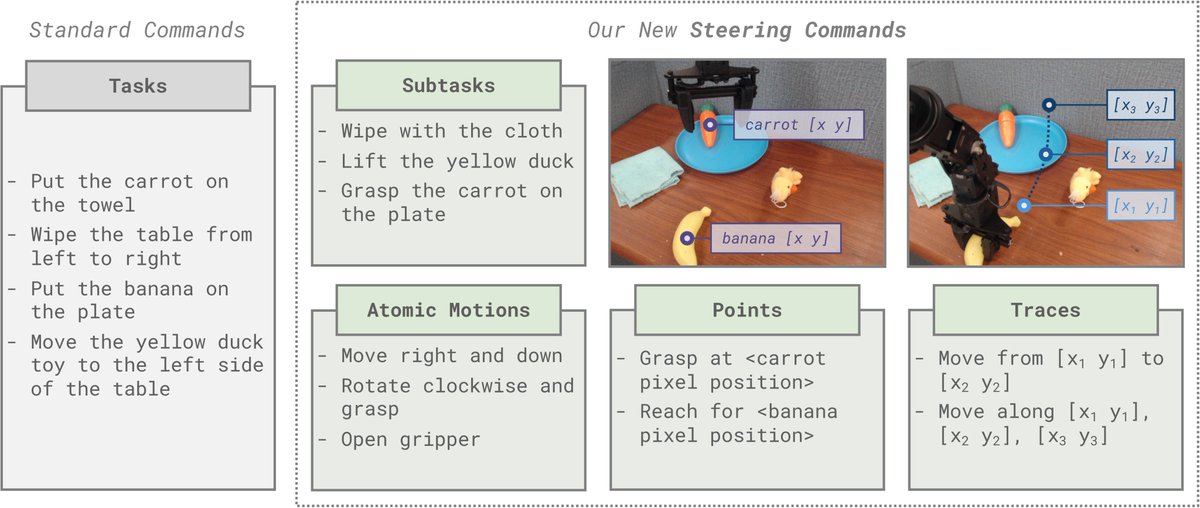

How can robot policies be trained to best leverage VLMs' CoT reasoning and in-context learning for generalization? The key is Steerable Policies: vision-language-action models that can be flexibly controlled in many ways! steerable-policies.github.io 1/9

Our model can now learn from its own experience with RL! Our new π*0.6 model can more than double throughput over a base model trained without RL, and can perform real-world tasks: making espresso drinks, folding diverse laundry, and assembling boxes. More in the thread below.