Karl Pertsch

@KarlPertsch

Robot Foundation Models @physical_int

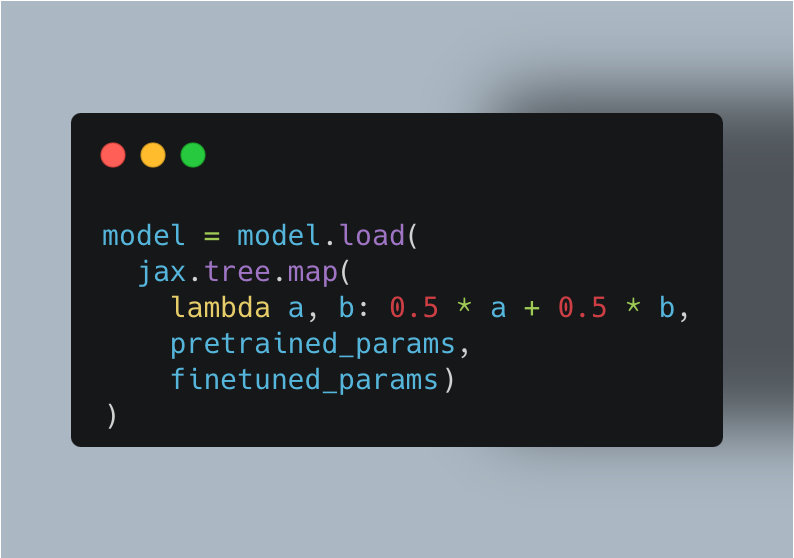

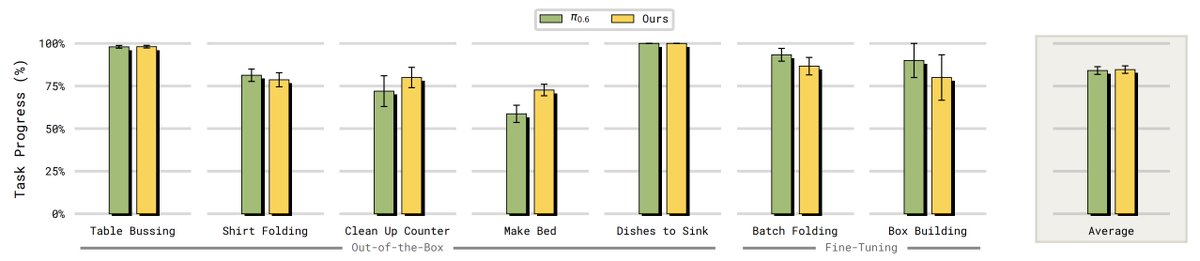

Do you ever find that finetuning a VLA overfits to the target task, to the point where generalist ability is lost and even minor deviations beyond the SFT data break the policy? We found an extremely simple solution: directly merge the base and finetuned policy in weight space 🤯 👇🧵
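The post does not say how the merge is done. As a rough illustration only, one common form of weight-space merging is linear interpolation between two checkpoints that share an architecture. The sketch below assumes that form; `merge_weights`, the `alpha` mixing coefficient, and the toy parameter names are all hypothetical and not taken from the thread.

```python
def merge_weights(base, finetuned, alpha=0.5):
    """Interpolate two parameter dicts: (1 - alpha) * base + alpha * finetuned.

    Assumption: both checkpoints have identical parameter names and shapes.
    alpha=0 returns the base weights, alpha=1 returns the finetuned weights.
    """
    if base.keys() != finetuned.keys():
        raise ValueError("checkpoints must share the same parameter names")
    merged = {}
    for name, base_values in base.items():
        tuned_values = finetuned[name]
        if len(base_values) != len(tuned_values):
            raise ValueError(f"shape mismatch for parameter {name!r}")
        # Element-wise convex combination of the two parameter vectors.
        merged[name] = [
            (1.0 - alpha) * b + alpha * t
            for b, t in zip(base_values, tuned_values)
        ]
    return merged


# Toy checkpoints (hypothetical values, flat lists standing in for tensors).
base = {"layer.weight": [0.0, 2.0], "layer.bias": [1.0]}
finetuned = {"layer.weight": [1.0, 4.0], "layer.bias": [3.0]}

merged = merge_weights(base, finetuned, alpha=0.5)
print(merged)
```

In this sketch, `alpha` trades off how much of the finetuned behavior is kept against how much of the base policy is retained; the value that works in practice would have to be chosen empirically.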

We’ve developed a memory system for our models that provides both short-term visual memory and long-term semantic memory. Our approach allows us to train robots to perform long and complex tasks, like cleaning up a kitchen or preparing a grilled cheese sandwich from scratch 👇

🧵(6) DROID Eval: CoVer-VLA achieves 14% gains in task progress and 9% in success rate on the challenging red-team PolaRiS benchmark. In the pan cleaning task, π₀.₅ shows incorrect intent, grasping the pan handle. In contrast, CoVer-VLA correctly uses the sponge to scrub the pan.

General-purpose AI models are behind some of the most exciting applications we now can't live without. We envision that an analogous “physical intelligence layer” built with models like π0.6 will similarly spur a new wave of applications for the physical world. We’ve recently begun working with a handful of companies that have deployed their robots to do real-world, useful things. pi.website/blog/partner/?…

How can robot policies be trained to best leverage VLMs' CoT reasoning and in-context learning for generalization? The key is Steerable Policies: vision-language-action models that can be flexibly controlled in many ways! steerable-policies.github.io 1/9

Reliable rewards are critical for effective RL, yet in most robotic applications obtaining such rewards requires significant task-specific human effort. Can we do better? Check out RoboReward, our new generalist, language-conditioned reward model for real-world robot RL!

Reliable rewards are a bottleneck for real-world RL for robotics: human labels are costly, and handcrafted rewards are brittle. In RoboReward 🤖💰, we study VLMs as reward models and find they are unreliable across tasks, embodiments, and scenes. Paper: arxiv.org/abs/2601.00675