Shengding Hu

@DeanHu11

PhD @ Tsinghua University @deepseek_ai

new post. there's a lot in it. i suggest you check it out

Thrilled to announce the @Kaggle Game Arena, a new leaderboard testing how modern LLMs perform on games (spoiler: not very well atm!). AI systems play each other, making it an objective & evergreen benchmark that will scale in difficulty as they improve. kaggle.com/game-arena

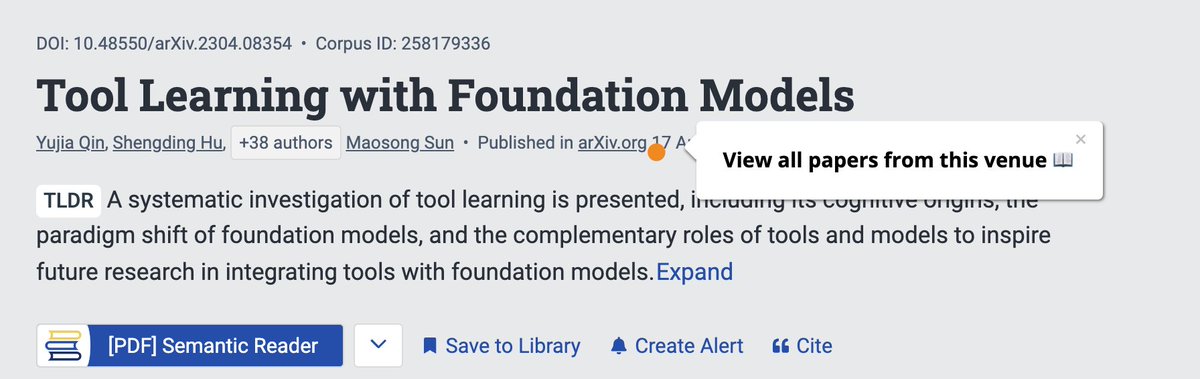

Okay, interesting. I just saw a very similar idea in vision. They do experiments on images and videos. Even the title is almost the same. 😅😅😅 arxiv.org/abs/2412.07720

🔍How does pretraining loss evolve under different LR schedules? 🌟Meet our Multi-Power Law: predicts the full loss curve for various schedules! 🌟Accurate enough to optimize LR schedules directly. 🌟Result? A WSD-like schedule that outperforms the rest! 🔥Accepted at #ICLR2025
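To make "different LR schedules" concrete, here is a minimal Python sketch, assumed for illustration and not taken from the paper. It defines a cosine schedule and a WSD-like (warmup-stable-decay) schedule: warmup is omitted, and the linear decay over the last 20% of steps is an arbitrary choice. It also computes the sum of learning rates over training, the "area" under each schedule, which is used here only as a simple way to compare schedules. This is not the Multi-Power Law; its actual formula is in the paper. The names `cosine_lr`, `wsd_like_lr`, and `cumulative_lr` are hypothetical.

```python
import math


def cosine_lr(step: int, total: int, peak: float, final: float = 0.0) -> float:
    """Cosine decay from `peak` to `final` over `total` steps (warmup omitted)."""
    progress = step / max(total - 1, 1)
    return final + 0.5 * (peak - final) * (1.0 + math.cos(math.pi * progress))


def wsd_like_lr(step: int, total: int, peak: float,
                decay_frac: float = 0.2, final: float = 0.0) -> float:
    """Hold `peak`, then decay linearly to `final` over the last `decay_frac` of steps."""
    decay_start = round(total * (1.0 - decay_frac))
    if step < decay_start:
        return peak  # stable phase
    progress = (step - decay_start) / max(total - 1 - decay_start, 1)
    return peak + (final - peak) * progress  # linear decay phase


def cumulative_lr(schedule, total: int, **kwargs) -> float:
    """Sum of the learning rate over all steps: the 'area' under the schedule."""
    return sum(schedule(t, total, **kwargs) for t in range(total))


total, peak = 1000, 3e-4
for name, fn in [("cosine", cosine_lr), ("wsd-like", wsd_like_lr)]:
    # summed LR over training, and the LR on the final step
    print(name, cumulative_lr(fn, total, peak=peak), fn(total - 1, total, peak=peak))
```

With these toy settings the summed LR is about 0.15 for cosine and about 0.27 for the WSD-like schedule, because the latter stays at peak for 80% of the steps. Both schedules end at zero.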

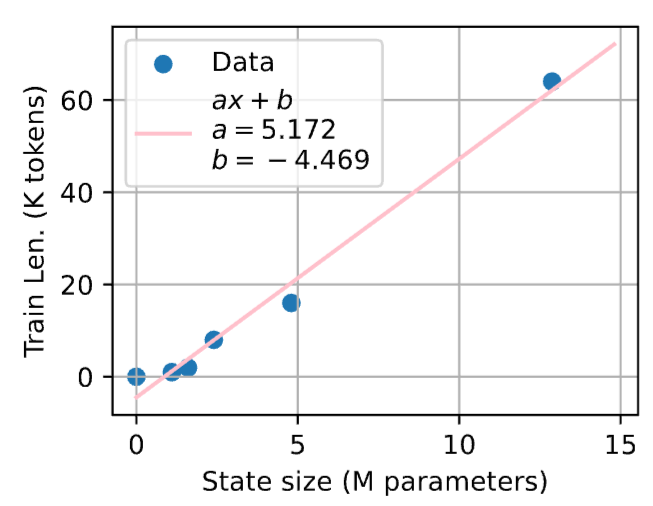

this is a great paper (with a great name): clever exps on the state capacity and long context ability of SSMs arxiv.org/abs/2410.07145…

strikingly, for every state size M there's a phase transition at some training context length K: once the training context length is >= K, SSMs length-generalize robustly

this is because with context length < K, the recurrent state isn't fully utilized, so the model "overfits" during training. but once the model's state capacity is fully utilized (by training on long enough seqs), it automatically extrapolates

notably, K is linear in M (!), suggesting there's some notion of intrinsic information content per token (there exists B such that each token in the context corresponds to B bytes of recurrent state). perhaps B is architecture dependent?

consequently, worrying about length generalization in recurrent models is probably a red herring. no need to design new mechanisms or special mitigations: just train on longer sequences (no extra compute per token, since SSMs are linear time!) to generalize better

takeaway: stuffing is tasty, and feed your Mambas fully!
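To make "fixed-size recurrent state" and "linear time" concrete, here is a toy sketch in Python/NumPy. It is a plain diagonal linear recurrence, not Mamba's selective scan. `M` (state size) follows the tweet's notation, while `a`, `b`, `c`, and `diagonal_linear_scan` are hypothetical names chosen for illustration. Under the tweet's hypothesis, if each token occupies roughly B bytes of state and M is measured in bytes, the state would saturate at a training context of about K ≈ M / B tokens.

```python
import numpy as np


def diagonal_linear_scan(x: np.ndarray, a: np.ndarray, b: np.ndarray, c: np.ndarray):
    """Toy diagonal linear recurrence: h_t = a * h_{t-1} + b * x_t,  y_t = c . h_t.

    x has shape (T,); a, b, c have shape (M,). The state h always has M entries,
    however long the sequence is, and the loop costs O(T * M).
    """
    h = np.zeros(a.shape, dtype=float)
    ys = np.empty(len(x), dtype=float)
    for t, x_t in enumerate(x):
        h = a * h + b * x_t  # fold the new token into the fixed-size state
        ys[t] = c @ h        # read a scalar output from the state
    return ys, h


M = 16
rng = np.random.default_rng(0)
a = rng.uniform(0.5, 0.99, size=M)  # per-channel decay rates < 1 keep the state bounded
b, c = rng.normal(size=M), rng.normal(size=M)
for T in (1_000, 10_000):
    ys, h = diagonal_linear_scan(rng.normal(size=T), a, b, c)
    print(T, ys.shape, h.shape)  # the state shape stays (M,) as T grows
```

Compute grows linearly with sequence length, while memory for the state stays fixed at M. That is why the tweet calls longer training sequences cheap for recurrent models.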

Shocked! The Llama3-V project from a Stanford team plagiarized a lot from MiniCPM-Llama3-V 2.5! Its code is a reformatting of MiniCPM-Llama3-V 2.5, and the model's behavior is highly similar to a noised version of the MiniCPM-Llama3-V 2.5 checkpoint. Evidence: github.com/OpenBMB/MiniCP…

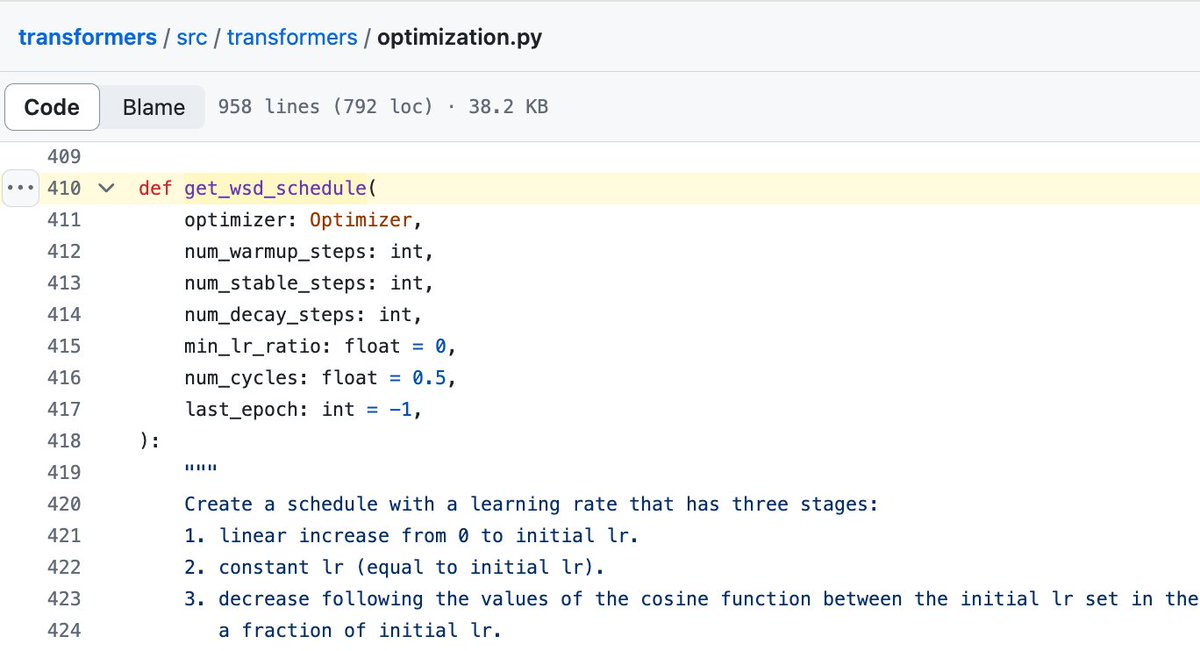

📢 I submitted my first (ever) paper! We find that with WSD LR schedule you can: 1. establish scaling laws with ~2x savings 2. safely train a model without knowing the total training steps in advance 3. a new cooldown function outperforming linear/cosine decay 🧵 ⬇️
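A minimal Python sketch of point 2, assuming a linear warmup, a constant stable phase, and a cooldown that can be branched off at any step. The tweet does not name its new cooldown function. The `1-sqrt` shape (LR × (1 − √progress)) is used here only as an assumed stand-in, alongside plain linear decay. `wsd_lr` and its parameters are hypothetical names.

```python
import math
from typing import Optional


def wsd_lr(step: int, peak: float, warmup: int,
           cooldown_start: Optional[int] = None, cooldown_len: int = 1,
           shape: str = "linear") -> float:
    """Warmup-Stable-Decay (WSD) learning rate at `step`.

    While `cooldown_start` is None the run stays in the stable phase, so the
    total number of training steps never has to be fixed in advance.
    """
    if step < warmup:
        return peak * (step + 1) / warmup  # linear warmup
    if cooldown_start is None or step < cooldown_start:
        return peak                        # stable phase: constant LR
    # fraction of the cooldown completed, reaching 1.0 on its last step
    progress = min((step - cooldown_start + 1) / cooldown_len, 1.0)
    if shape == "linear":
        return peak * (1.0 - progress)
    if shape == "1-sqrt":                  # assumed cooldown shape, for illustration only
        return peak * (1.0 - math.sqrt(progress))
    raise ValueError(f"unknown cooldown shape: {shape!r}")


# Two runs branched from one shared stable phase (e.g. for two training lengths).
peak, warmup = 1e-3, 100
short_run = [wsd_lr(s, peak, warmup, cooldown_start=800, cooldown_len=200)
             for s in range(1000)]
long_run = [wsd_lr(s, peak, warmup, cooldown_start=1800, cooldown_len=200, shape="1-sqrt")
            for s in range(2000)]
assert short_run[:800] == long_run[:800]  # identical prefix: one checkpoint seeds both cooldowns
```

Because the stable phase does not depend on the final length, a single stable run can be checkpointed and cooled down at several points. That reuse is what makes the cheaper scaling-law sweeps in point 1 plausible.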

@huggingface @DeanHu11 @Yikang_Shen @LightOnIO @deepseek_ai TL;DR: WSD works as well as the cosine schedule. It seems to be becoming the standard for open-source models and might (already?) be used in closed-source models 👀 Also big thanks to @LoubnaBenAllal1 and @lvwerra for helping me with these experiments 🤗