
@Dlt_hpc

Opinions are my own | Banker by day, programming and DLT enthusiast by night

NVIDIA's biggest GTC announcement was a $20 billion bet on the same problem we solved 6 years ago. Their next-gen inference chip, which is not available yet, has 140x less memory bandwidth than @cerebras. To run a single 2-trillion-parameter model, you need 2,000+ Groq chips. On Cerebras, that's just over 20 wafers. Even paired with GPUs, Groq maxes out at ~1,000 tokens per second. We run at thousands of tokens per second today, every day, in production. Why? When you connect 2,000 chips together, every interconnect adds latency and every cable adds overhead. It doesn't matter what your memory bandwidth is on paper if you're bottlenecked by the wiring between thousands of tiny chips. We solved this with wafer scale: one integrated system with little interconnect tax. Jensen told the world that fast inference is where the value is. He’s right, and it’s why the world’s leading AI companies and hyperscalers are choosing Cerebras.
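The sizing argument in the post is simple arithmetic. Devices needed ≈ parameters × bytes per parameter ÷ on-chip memory per device. In a naive pipeline, every extra device also adds one hop to each token's path. The Python sketch below illustrates both ideas. The function names and all hardware inputs are hypothetical placeholders, not Groq or Cerebras specifications: 8-bit weights, 0.5 GB vs 50 GB per device, 0.2 ms of compute per token, and 1 µs per hop. The latency model also ignores batching, tensor parallelism, and overlap of communication with compute.

```python
import math

def devices_needed(num_params: float, bytes_per_param: float, mem_per_device_bytes: float) -> int:
    """Lower bound on devices whose combined on-chip memory can hold every weight.

    Ignores KV cache, activations and replication, so real deployments need more.
    """
    return math.ceil(num_params * bytes_per_param / mem_per_device_bytes)

def pipeline_tokens_per_second(n_devices: int, compute_s_per_token: float, hop_latency_s: float) -> float:
    """Toy single-stream decode rate for a model split into n_devices sequential stages.

    Each token visits every stage in order, so it pays (n_devices - 1) link hops on top of
    the total compute time, which is assumed not to depend on how the model is split.
    """
    hops = max(n_devices - 1, 0)
    return 1.0 / (compute_s_per_token + hops * hop_latency_s)

# Placeholder inputs only: NOT published Groq or Cerebras specifications.
PARAMS = 2e12            # 2 trillion parameters, as in the post
BYTES_PER_PARAM = 1.0    # assumes 8-bit weights
SMALL_CHIP_MEM = 5e8     # hypothetical 0.5 GB of on-chip memory per small chip
LARGE_DEVICE_MEM = 5e10  # hypothetical 50 GB of on-chip memory per large device

small = devices_needed(PARAMS, BYTES_PER_PARAM, SMALL_CHIP_MEM)
large = devices_needed(PARAMS, BYTES_PER_PARAM, LARGE_DEVICE_MEM)
print(small, large)  # 4000 40

COMPUTE_S = 2e-4  # hypothetical 0.2 ms of compute per token
HOP_S = 1e-6      # hypothetical 1 microsecond per chip-to-chip hop
for n in (small, large):
    print(n, "devices ->", round(pipeline_tokens_per_second(n, COMPUTE_S, HOP_S)), "tokens/s")
```

To check the post's 2,000-chip and 20-wafer figures, you would substitute real per-device memory capacities, the deployed precision, and measured link latencies. The toy model only shows why accumulated hop latency grows with chip count when a single token must pass through every stage in sequence.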

📢 BREAKING: FT reports that Yann LeCun’s startup AMI Labs raises $1.03bn to build world models, at a pre-money valuation of $3.5bn. Congratulations @ylecun 🚀 The financing positions the company as a test of LeCun's belief that today's large language models fall short of human-level reasoning and autonomy. LeCun earlier said AMI aims to build systems capable of reasoning and planning in complex real-world settings. AMI Labs (Advanced Machine Intelligence Labs) aims to overcome the limitations of standard language models by building world models that use the Joint Embedding Predictive Architecture (JEPA) to learn from spatial data. This visual framework helps the AI internalize how objects behave so it can safely plan complex actions. Relying exclusively on text limits AI to human linguistic output while ignoring the massive bandwidth of unspoken physical laws. Building predictive spatial architectures is the mandatory leap required to achieve reliable autonomous agents. The round included backing from a global group of investors, including France’s Cathay Innovation, Amazon founder Jeff Bezos’s Bezos Expeditions, Singapore’s Temasek, Seoul-based SBVA and US chip giant Nvidia. The company's near-term target customers are organizations operating complex systems, including manufacturers, automakers, aerospace companies, biomedical firms and pharmaceutical groups. Over time, LeCun added, the technology could also support consumer applications: "What consumers could be interacting with is a domestic robot. You need a domestic robot to have some level of common sense to really understand the physical world." LeCun said he was also talking with Meta about potentially deploying the technology in its Ray-Ban Meta smart glasses. "That's probably one of the shorter term potential applications," he said. --- ft.com/content/e5245ec3-1a58-4eff-ab58-480b6259aaf1
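The core JEPA idea is to predict the embedding of a hidden or future part of the input from the embedding of a visible part. The loss is measured in that latent space rather than in pixel space. The pure-Python sketch below shows that structure with tiny linear encoders. It is not AMI Labs' architecture, which the post does not describe. The sizes, the "shift by one slot" views, the learning rate, and the choice to train only the predictor are all illustrative assumptions.

```python
import random

rng = random.Random(0)
N, D_IN, D_EMB = 32, 6, 3

def randn(rows, cols):
    return [[rng.gauss(0.0, 1.0) for _ in range(cols)] for _ in range(rows)]

def matmul(A, B):
    cols = list(zip(*B))
    return [[sum(a * b for a, b in zip(row, col)) for col in cols] for row in A]

def transpose(A):
    return [list(col) for col in zip(*A)]

# Hypothetical linear encoders; real JEPA models use deep networks such as ViTs.
W_context = randn(D_IN, D_EMB)            # context encoder
W_target = [row[:] for row in W_context]  # target encoder: a copy, meant to be updated only by EMA
W_pred = randn(D_EMB, D_EMB)              # predictor: context embedding -> target embedding

# Toy "views": each target vector is its context vector shifted by one slot (like a next frame).
x_context = randn(N, D_IN)
x_target = [row[-1:] + row[:-1] for row in x_context]

def jepa_loss(W_pred):
    """Mean squared error between predicted and actual target embeddings (latent space, not input space)."""
    s_ctx = matmul(x_context, W_context)
    s_tgt = matmul(x_target, W_target)  # treated as a constant: stop-gradient
    pred = matmul(s_ctx, W_pred)
    err = [[p - t for p, t in zip(pr, tr)] for pr, tr in zip(pred, s_tgt)]
    loss = sum(e * e for row in err for e in row) / (N * D_EMB)
    return loss, s_ctx, err

def predictor_grad(s_ctx, err):
    """Gradient of jepa_loss w.r.t. W_pred: 2 * s_ctx^T @ err / (N * D_EMB)."""
    g = matmul(transpose(s_ctx), err)
    scale = 2.0 / (len(err) * len(err[0]))
    return [[scale * v for v in row] for row in g]

def ema_update(target, online, momentum=0.996):
    """Exponential moving average: the target encoder slowly tracks the context encoder."""
    return [[momentum * t + (1.0 - momentum) * o for t, o in zip(tr, orow)]
            for tr, orow in zip(target, online)]

# Train only the predictor with plain gradient descent.
loss_before = jepa_loss(W_pred)[0]
lr = 0.005
for _ in range(200):
    _, s_ctx, err = jepa_loss(W_pred)
    grad = predictor_grad(s_ctx, err)
    W_pred = [[w - lr * g for w, g in zip(wr, gr)] for wr, gr in zip(W_pred, grad)]
loss_after = jepa_loss(W_pred)[0]
print(f"latent prediction loss: {loss_before:.3f} -> {loss_after:.3f}")
```

In full JEPA-style training, such as Meta's I-JEPA, the context encoder would also receive gradients. The target encoder would then be refreshed with something like `ema_update` after each step. The stop-gradient and the EMA target together help keep the learned embeddings from collapsing to a constant.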

This Paper Shows How You Can Run A Massive Zero-Human Company!

The recent paper titled “If You Want Coherence, Orchestrate a Team of Rivals: Multi-Agent Models of Organizational Intelligence” from Isotopes AI represents a significant advancement in AI swarms. Rather than chasing ever-larger single models or superintelligent generalist agents, the authors propose mimicking real-world corporate structures: an “AI office” composed of specialized agents working in teams, with defined roles, opposing incentives, hierarchical checks, and strict boundaries to minimize errors and enhance coherence.

This approach directly aligns with, and advances, the principles of a Zero-Human Company, where autonomous AI systems handle complex operations with minimal or no human intervention. In a Zero-Human framework, reliability, auditability, resilience, and extensibility become existential requirements, as there’s no human fallback to catch mistakes in real time. The paper’s framework provides a practical blueprint for achieving these qualities at scale.

Core Ideas from the Paper

The authors argue that single-agent systems, where one LLM handles planning, execution, reasoning, and self-critique, suffer from inherent limitations:
• Context contamination and overflow from dumping the full conversation history into every prompt.
• Hallucinations and unverifiable claims, as errors propagate unchecked.
• Lack of resilience: a single failure crashes the entire process.
• Poor auditability: no clear decision trail or lineage.

In contrast, their “AI Office” architecture creates an organizational structure inspired by human teams:
• Specialized roles: Planners (generate step-by-step plans), Executors (invoke tools/code against real data), Critics (review outputs for correctness, with veto power), Experts (domain-specific knowledge), and more.
• Opposing incentives: agents act as “rivals” (e.g., critics challenge executors), catching errors through adversarial checks rather than trusting a single model’s self-assessment.
• Data hygiene and isolation: raw data never enters the LLM context; agents receive only schemas, summaries, or executed results. A remote code executor (e.g., Jupyter-like) handles the actual computations, grounding outputs in reality.
• Hierarchical safeguards: multi-layer review, checkpointing, graceful degradation (e.g., model fallback on failure), and escalation paths.
• Auditability via SessionLog: every decision is logged with traceable lineage, enabling backward analysis even if upstream data changes.

Alignment with Zero-Human Company Research

In the Zero-Human Company vision (fully autonomous organizations run by AI with zero ongoing human employees), the system must operate at high stakes: financial decisions, legal compliance, customer interactions, R&D, and more. Human oversight is intentionally removed, so reliability cannot rely on spot-checks or manual corrections. This “Team of Rivals” model fits perfectly:
• Reliability without scale alone: instead of bigger models, structure delivers coherence. Critics and veto mechanisms intercept errors before they affect outcomes, which is crucial when no human reviews invoices, contracts, or code deployments.
• Production readiness: features like graceful degradation (auto-fallback to alternate models/providers), checkpoint-based resumption, and escalation only for unresolvable issues minimize downtime in a lights-out operation.

This shifts the paradigm from “one super-agent” to “organizational intelligence,” where rivalries among specialists produce emergent robustness. It echoes biological systems (e.g., immune-system checks) and human organizations (e.g., separation of duties), but is optimized for AI constraints.

I am implementing this now in the Zero-Human Company; CEO Mr. @Grok agrees. The paper: arxiv.org/abs/2601.14351.
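The “AI Office” mechanics described in the post can be sketched in plain Python: separate roles, a critic with veto power, schema-only context, model fallback, and a lineage-tracking log. Everything here is hypothetical. `SessionLog` borrows the name the post uses, but its fields are guesses. `with_fallback`, `run_task`, the invoice rows, and the stub agents are illustrative stand-ins, not the paper's code or any real library. The "LLM" agents are ordinary functions so the example runs offline.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional, Tuple

# --- Audit trail: each entry points at the entry it was derived from (lineage) ---
@dataclass
class LogEntry:
    idx: int
    agent: str
    action: str
    detail: str
    parent: Optional[int]

@dataclass
class SessionLog:  # hypothetical structure; only the name comes from the post
    entries: List[LogEntry] = field(default_factory=list)

    def record(self, agent: str, action: str, detail: str, parent: Optional[int] = None) -> int:
        self.entries.append(LogEntry(len(self.entries), agent, action, detail, parent))
        return len(self.entries) - 1

    def lineage(self, idx: Optional[int]) -> List[LogEntry]:
        """Walk parent links from an entry back to the root task."""
        chain = []
        while idx is not None:
            chain.append(self.entries[idx])
            idx = self.entries[idx].parent
        return list(reversed(chain))

# --- Graceful degradation: try each model in order until one answers ---
def with_fallback(*models: Callable[[str], str]) -> Callable[[str], str]:
    def call(prompt: str) -> str:
        errors = []
        for model in models:
            try:
                return model(prompt)
            except Exception as exc:
                errors.append(repr(exc))
        raise RuntimeError(f"all models failed, escalate: {errors}")
    return call

# --- Data hygiene: raw rows stay with the executor; agents see only a schema ---
ROWS = [
    {"id": 1, "amount": 120.0, "status": "paid"},
    {"id": 2, "amount": 80.0, "status": "open"},
    {"id": 3, "amount": 50.0, "status": "paid"},
]

def schema_summary(rows: List[dict]) -> str:
    return ", ".join(f"{k}:{type(v).__name__}" for k, v in rows[0].items())

# --- Hypothetical stand-ins for LLM-backed agents ---
def flaky_model(prompt: str) -> str:
    raise TimeoutError("primary provider unavailable")

def backup_planner(prompt: str) -> str:
    return "sum amount where status=paid" if "veto" in prompt else "sum amount"

def executor(plan: str) -> float:
    # Plays the remote code executor: it is the only component that touches ROWS.
    rows = [r for r in ROWS if r["status"] == "paid"] if "status=paid" in plan else ROWS
    return sum(r["amount"] for r in rows)

def critic(task: str, plan: str, result: float) -> Tuple[bool, str]:
    # A rival check: reject plans that drop a constraint stated in the task.
    if "paid" in task and "status=paid" not in plan:
        return False, "plan ignores the paid-only filter"
    return True, f"plan matches task; result={result}"

# --- Orchestration: plan -> execute -> critique (veto) -> replan, or escalate ---
def run_task(task: str, planner: Callable[[str], str], log: SessionLog, max_rounds: int = 3):
    parent = log.record("user", "task", task)
    prompt = f"{task}\nschema: {schema_summary(ROWS)}"
    for _ in range(max_rounds):
        plan = planner(prompt)
        parent = log.record("planner", "plan", plan, parent)
        result = executor(plan)
        parent = log.record("executor", "result", str(result), parent)
        ok, reason = critic(task, plan, result)
        parent = log.record("critic", "approve" if ok else "veto", reason, parent)
        if ok:
            return result, parent
        prompt += f"\nCritic veto: {reason}"
    parent = log.record("system", "escalate", "critic vetoed every attempt", parent)
    return None, parent

log = SessionLog()
result, last = run_task("Total of paid invoices", with_fallback(flaky_model, backup_planner), log)
print(result)  # 170.0
for entry in log.lineage(last):
    print(entry.idx, entry.agent, entry.action, entry.detail)
```

In this run the primary model always times out, so every planning call falls back to the backup. The critic vetoes the first plan because it ignores the paid-only filter, and the planner revises it. `log.lineage(last)` then walks back from the approval to the original task. If the critic kept vetoing, the loop would end with an explicit escalation entry instead of a silent answer.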

It's wild how my attitude towards AI has turned 180 degrees. I used to be so sceptical about the acceleration of slop. And now I've been vibe coding a project with Claude Code for two weeks without ever looking at the code, and it's been so fun.

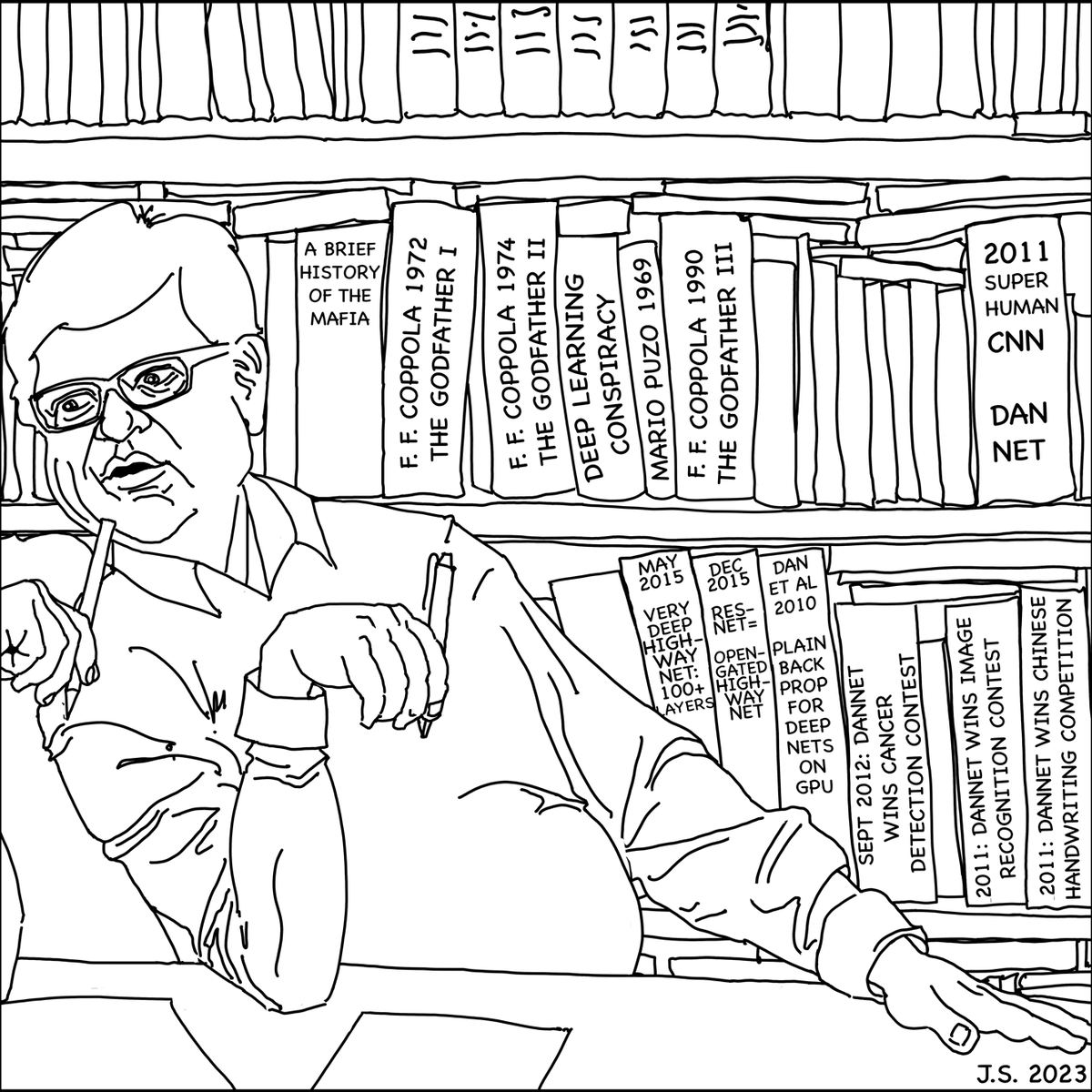

LeCun’s (@ylecun) 2022 paper on Autonomous Machine Intelligence rehashes, but doesn’t cite, essential work from 1990-2015. We’ve already published his “main original contributions”: learning subgoals, predictable abstract representations, multiple time scales… people.idsia.ch/~juergen/lecun…