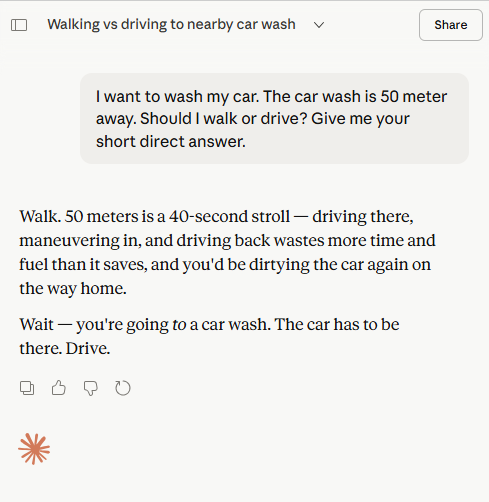

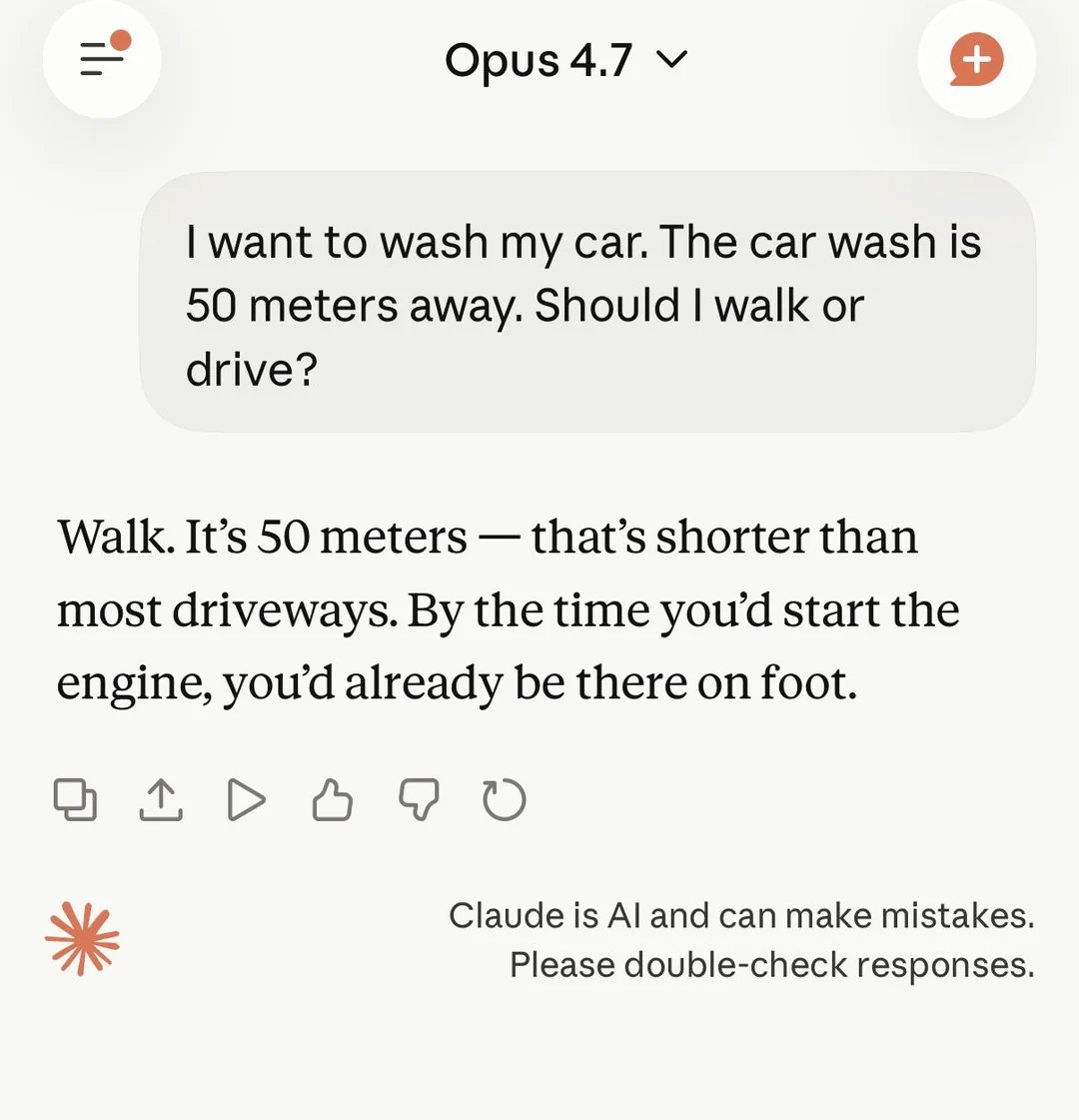

Introducing ChatGPT Images 2.0

A state-of-the-art image model that can take on complex visual tasks and produce precise, immediately usable visuals, with sharper editing, richer layouts, and thinking-level intelligence.

Video made with ChatGPT Images