Doug Assis

980 posts

@DougAssis1

Retired Canadian/Brazilian Motion Artist in love with AI. Retired Canadian/Brazilian motion graphics animator and Artificial Intelligence enthusiast. -Ambisinister

London, ON Canada Joined December 2011

859 Following 119 Followers

Doug Assis retweeted

@LumaLabsAI I was wary of using templates/models, but it is quite a pleasure to see the results. Thanks for the share.

Doug Assis retweeted

@minchoi Went and wow! It's a little quirky, but everything you really need is there. Thanks for the link.

Doug Assis retweeted

🚨BREAKING:

Last week Google quietly launched Nano Banana 2.

Since then, I’ve been studying it obsessively.

Testing. Refining. Breaking it. Rebuilding it.

And most people are using it at maybe... 20% of its potential.

So I did the work for you.

I handpicked the 11 most powerful prompts that unlock its full capability — for content, monetization, branding & growth.

No fluff.

No basic templates.

Only high-leverage prompts.

And I’m giving them away FREE.

Here’s how to get them:

1️⃣ Follow me

2️⃣ Like + RT this post

3️⃣ Comment “PROMPT”

I’ll send it to followers only.

If you’re serious about using AI like an operator — not a user — this is for you.

Let’s build smarter. 🚀

@ABPosse007 @johnnymaga Are you dumb enough to believe your own affirmation?

@HorrorGorl Just wait for your turn to get an ICE visit and a goodbye hug, with plastic zip ties of course.

That will test your devotion to the pesos and dictatorship.

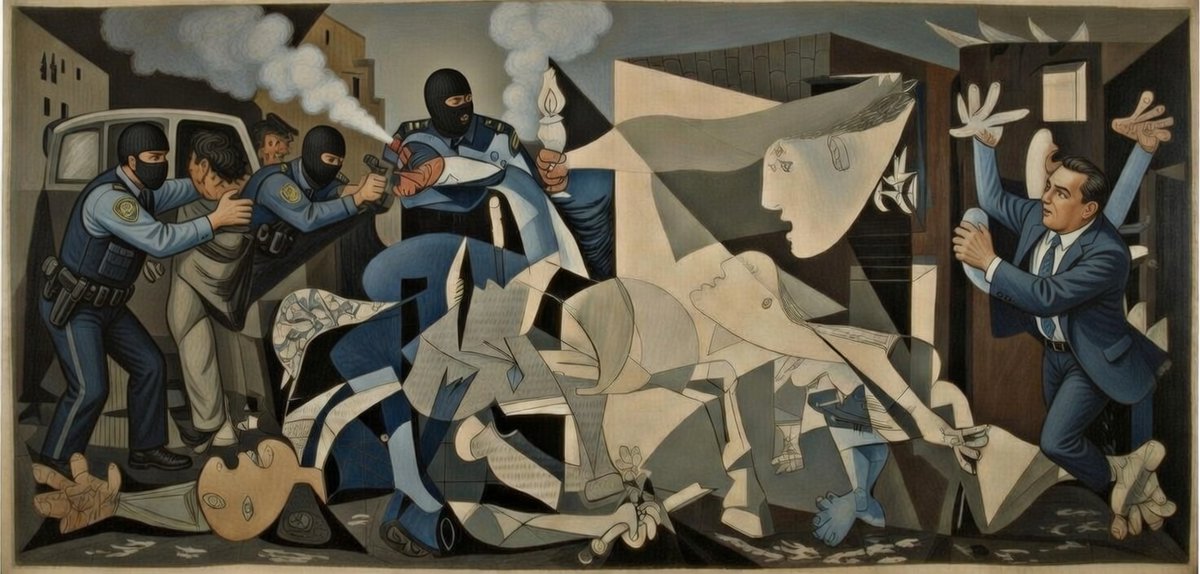

Tonight, we reached an agreement with the Department of War to deploy our models in their classified network.

In all of our interactions, the DoW displayed a deep respect for safety and a desire to partner to achieve the best possible outcome.

AI safety and wide distribution of benefits are the core of our mission. Two of our most important safety principles are prohibitions on domestic mass surveillance and human responsibility for the use of force, including for autonomous weapon systems. The DoW agrees with these principles, reflects them in law and policy, and we put them into our agreement.

We will also build technical safeguards to ensure our models behave as they should, which the DoW also wanted. We will deploy FDEs to help with our models and to ensure their safety, and we will deploy on cloud networks only.

We are asking the DoW to offer these same terms to all AI companies, which in our opinion everyone should be willing to accept. We have expressed our strong desire to see things de-escalate away from legal and governmental actions and towards reasonable agreements.

We remain committed to serve all of humanity as best we can. The world is a complicated, messy, and sometimes dangerous place.

@SecWar The arrogance of an ignoramus is surpassed only by that of a bootlicker.

This week, Anthropic delivered a master class in arrogance and betrayal as well as a textbook case of how not to do business with the United States Government or the Pentagon.

Our position has never wavered and will never waver: the Department of War must have full, unrestricted access to Anthropic’s models for every LAWFUL purpose in defense of the Republic.

Instead, @AnthropicAI and its CEO, @DarioAmodei, have chosen duplicity. Cloaked in the sanctimonious rhetoric of “effective altruism,” they have attempted to strong-arm the United States military into submission - a cowardly act of corporate virtue-signaling that places Silicon Valley ideology above American lives.

The Terms of Service of Anthropic’s defective altruism will never outweigh the safety, the readiness, or the lives of American troops on the battlefield.

Their true objective is unmistakable: to seize veto power over the operational decisions of the United States military. That is unacceptable.

As President Trump stated on Truth Social, the Commander-in-Chief and the American people alone will determine the destiny of our armed forces, not unelected tech executives.

Anthropic’s stance is fundamentally incompatible with American principles. Their relationship with the United States Armed Forces and the Federal Government has therefore been permanently altered.

In conjunction with the President's directive for the Federal Government to cease all use of Anthropic's technology, I am directing the Department of War to designate Anthropic a Supply-Chain Risk to National Security. Effective immediately, no contractor, supplier, or partner that does business with the United States military may conduct any commercial activity with Anthropic. Anthropic will continue to provide the Department of War its services for a period of no more than six months to allow for a seamless transition to a better and more patriotic service.

America’s warfighters will never be held hostage by the ideological whims of Big Tech. This decision is final.
