EIFY

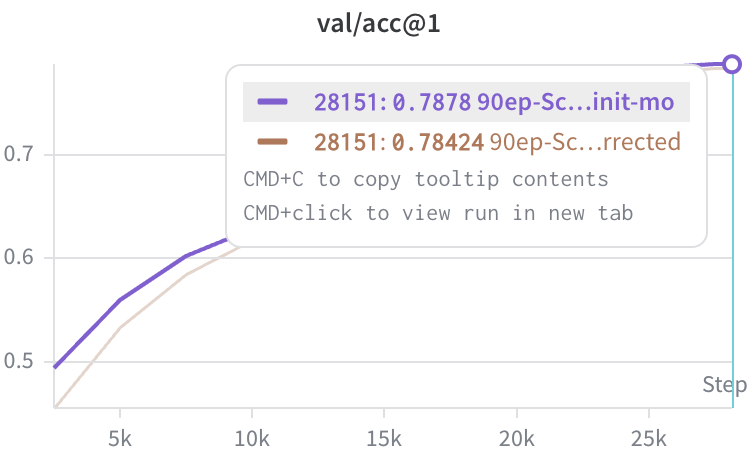

For some reason I decided to swap out standard dot-product attention for a scaled RBF kernel. I pretty much expected it to fail to converge or be impossibly slow, but the scaled RBF attention is getting unexpectedly good results?? 👇
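
For readers who want something concrete, here is a minimal sketch of what RBF-kernel attention could look like. The post does not spell out the formulation, so the score used here, the negative squared distance between query and key divided by sqrt(d), is an assumption, and `rbf_attention` is a hypothetical name rather than the author's code.

```python
import math

def rbf_attention(Q, K, V):
    """Attention whose scores are -||q - k||^2 / sqrt(d) rather than q.k / sqrt(d).

    Q, K, V are lists of equal-length float vectors (lists of lists).
    The sqrt(d) scaling is an assumed choice, mirroring dot-product attention.
    """
    d = len(Q[0])
    outputs = []
    for q in Q:
        # Score each key by its negative squared Euclidean distance to the query.
        scores = [
            -sum((qi - ki) ** 2 for qi, ki in zip(q, k)) / math.sqrt(d)
            for k in K
        ]
        # Softmax over keys, subtracting the max for numerical stability.
        m = max(scores)
        weights = [math.exp(s - m) for s in scores]
        total = sum(weights)
        weights = [w / total for w in weights]
        # Weighted average of the value vectors.
        outputs.append(
            [sum(w * v[c] for w, v in zip(weights, V)) for c in range(len(V[0]))]
        )
    return outputs

# A query sitting on the first key attends almost entirely to it.
out = rbf_attention([[0.0, 0.0]], [[0.0, 0.0], [10.0, 10.0]], [[1.0], [0.0]])
```

Since -||q - k||^2 = 2 q.k - ||q||^2 - ||k||^2 and the ||q||^2 term cancels inside the softmax, this variant differs from dot-product attention only by a per-key penalty on the key norm.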

Found this picture

Nowadays biases are omitted in transformers for simplicity at the same quality, but it might matter more to keep affine layers for more expressivity, since a bias doesn't affect the Lipschitz constant.
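
The Lipschitz point can be checked in one line. As a sketch, assume a single affine layer f(x) = Wx + b (the symbols W, b, x, y are introduced here for illustration and are not from the post):

```latex
\|f(x) - f(y)\| = \|(Wx + b) - (Wy + b)\| = \|W(x - y)\| \le \|W\|_{\mathrm{op}} \, \|x - y\|
```

The bias cancels in the difference, so the Lipschitz constant of the layer is the operator norm of W whether or not b is present.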

THAT'S CHINESE🇨🇳🤣 Imagine being so clueless you can't even identify your own history. That's 100% Chinese attire. Stop embarrassing yourselves and learn what your ancestors actually looked like. STUPID CHINESE🤣🤣

A new cryptic lineage popped up in St. Louis a few weeks ago. I’ve been sampling this sewershed (500k people) twice a week for years, and the first time I see this cryptic lineage it is already 5 years old and makes up 50% of the sample. 1/