Elron Bandel

@ElronBandel

Research Scientist | @IBMResearch | General Agent Evaluation Team

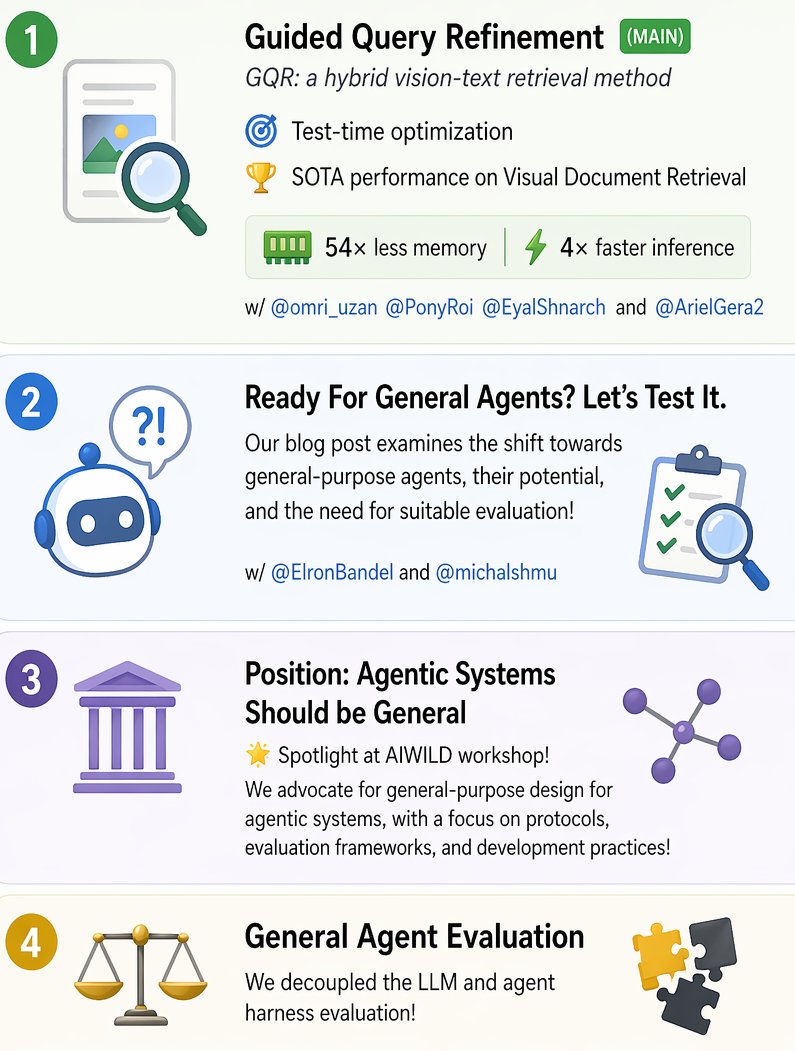

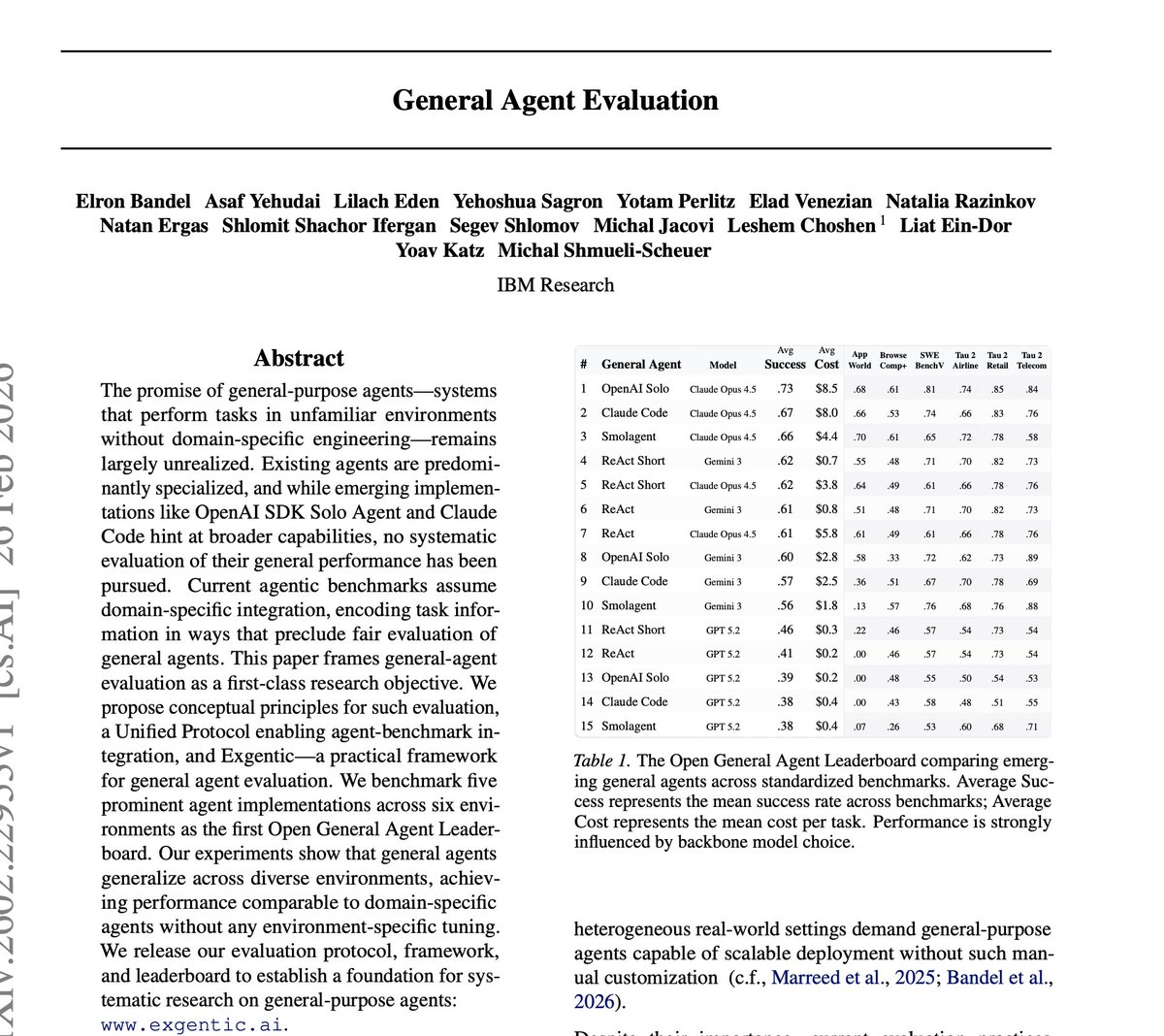

🧵 Should AI agents be specialized or general purpose? We think this question defines the Linux era for AI. @AsafYehudai @evijit @alex_lacoste_ @gneubig @mmitchell_ai @michalshmu @LChoshen

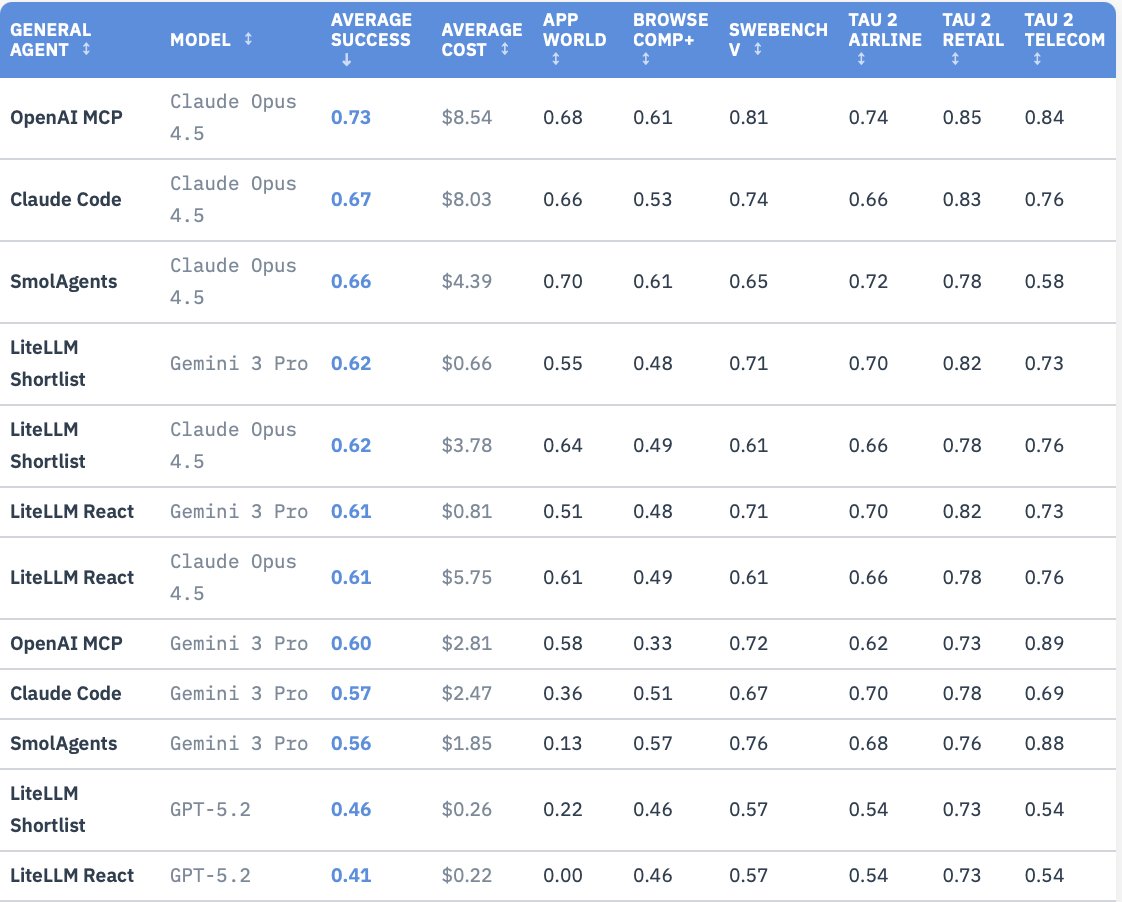

New preprint, evaluation framework & leaderboard!🚨 General-purpose AI agents are everywhere. 🤖 From ReAct to @claudeai Code and @OpenAI SDK. But how do we actually evaluate them — as general agents? Currently, benchmarks are deeply tied to domain-specific setups, making it impossible to evaluate true cross-domain agents. We’re changing that! We’re introducing Exgentic and the Open General Agent Leaderboard. 🧵👇

Agents should be general. Why are we building code agents, CLI agents, and browser agents separately? Why does adapting to a new benchmark take a month? Our collaboration brings diverse views: pros here, cons in the paper, and your pushback if I’m wrong. Argument + paper link 👇🧵

💻 Claude Code? 🦞 Open Claw? Maybe a simple loop of LLM calls? Which agent is best across diverse tasks? Which one to deploy at scale? We're introducing the Open General Agent Leaderboard. General agents are far too important to leave untracked.🧵