Lotfi Slim

250 posts

@EpiSlim

AI Developer Technology Engineer @Nvidia

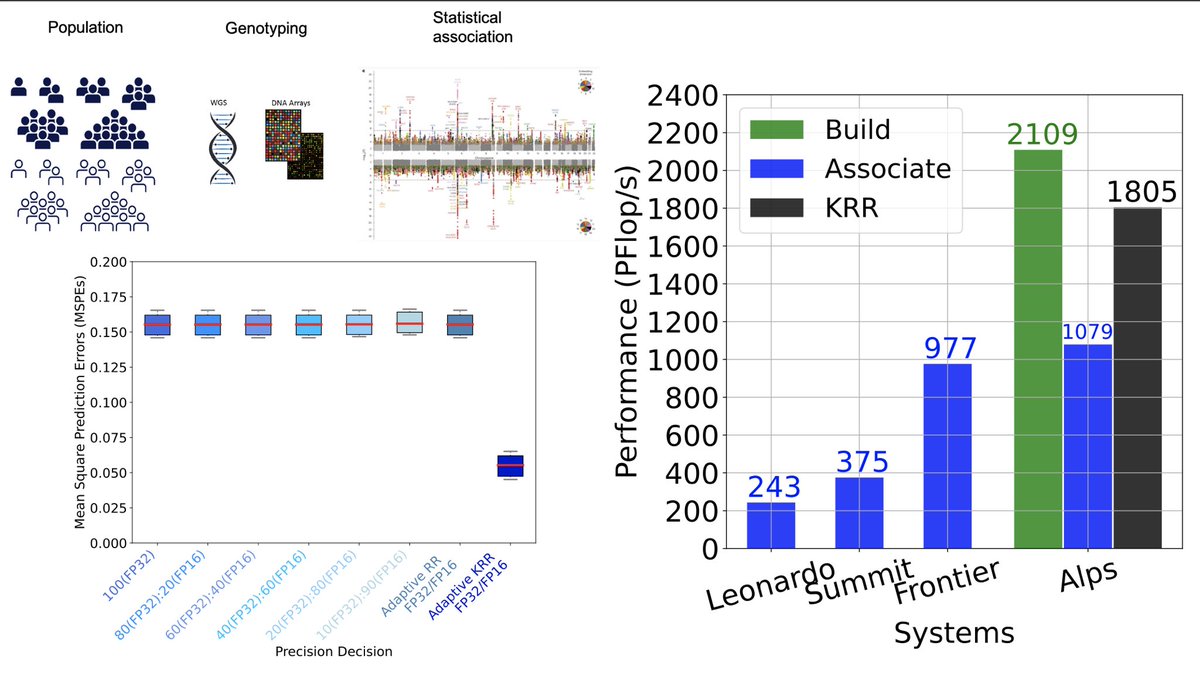

Faster SUP models in Dorado through a number of approaches. #NanoporeConf

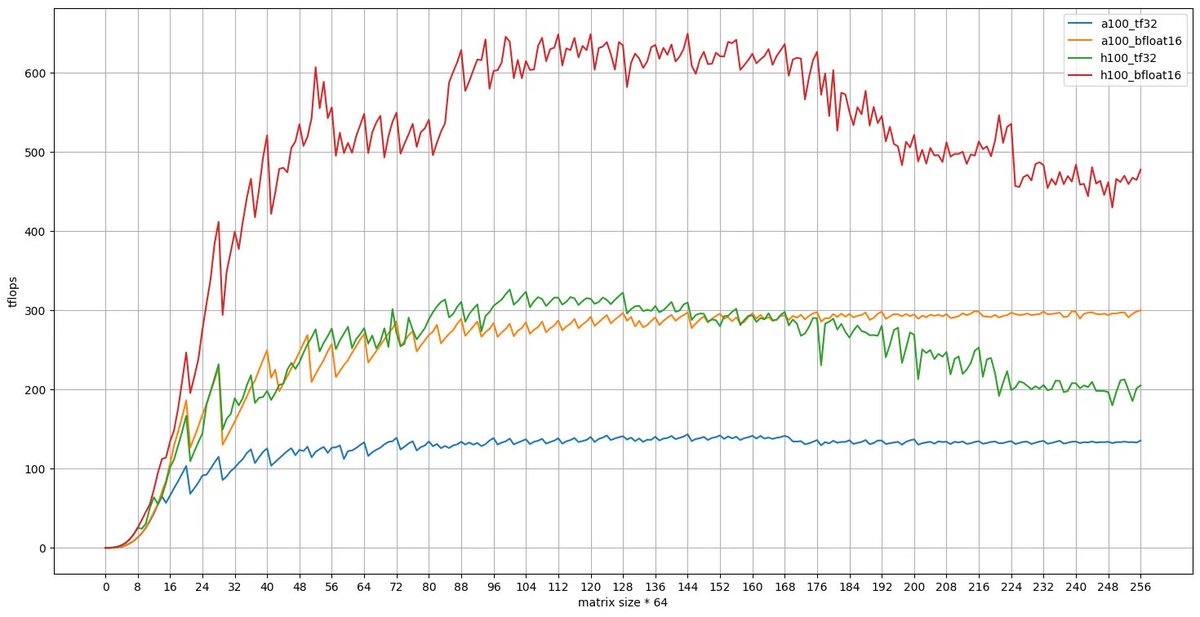

🚨 vLLM Office Hours continue on Thursday, September 5th, at 2PM ET / 11AM PT! Tyler Smith (@tms_jr), vLLM Committer & Technical Director at Neural Magic, will dive deep into using NVIDIA CUTLASS for high-performance INT8 & FP8 vLLM inference. Sign up: neuralmagic.com/community-offi…

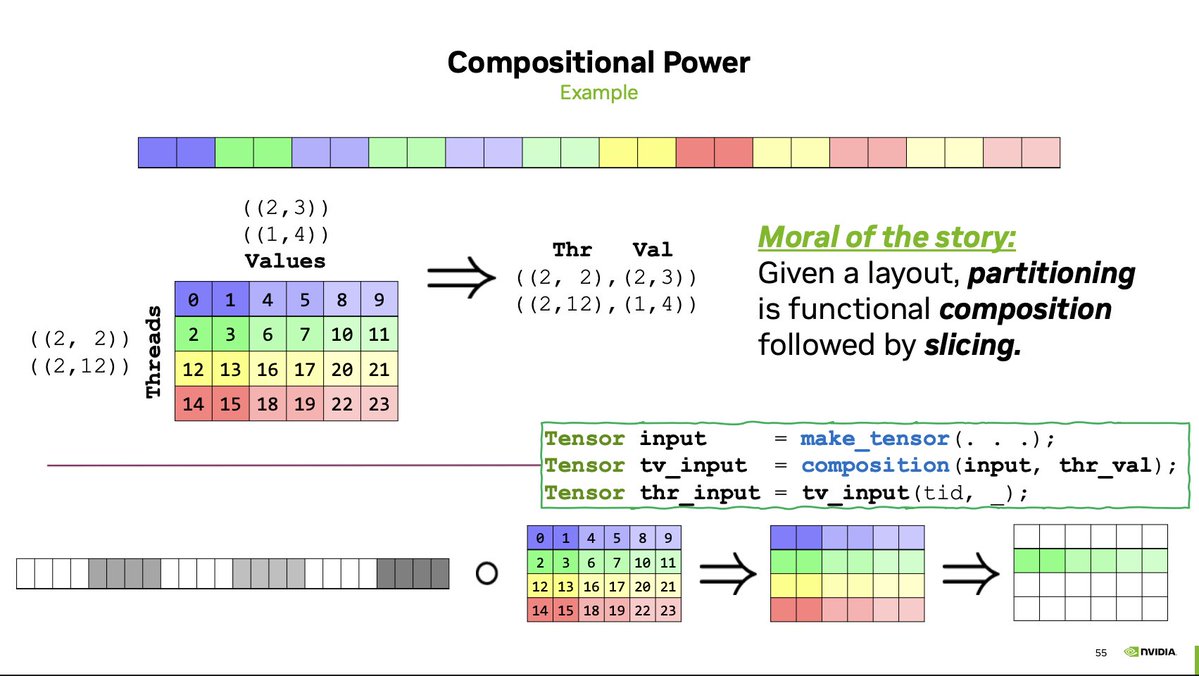

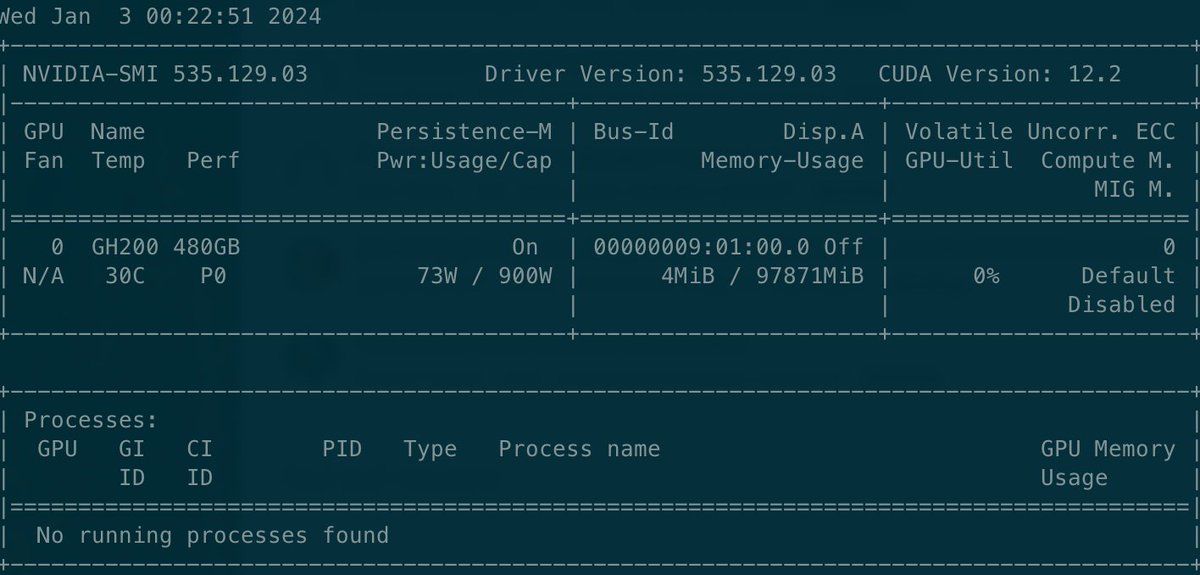

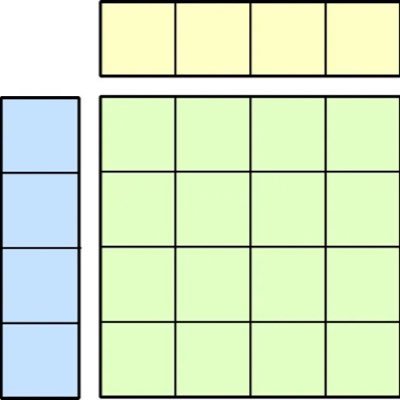

The CUTLASS/TensorCores/Hopper lecture covered quite advanced CUDA programming. I guess we need further ramp-up lectures to make these topics more accessible. Recording: youtu.be/hQ9GPnV0-50?si… Slides: drive.google.com/file/d/18sthk6…

I'm delighted to release the first half of my new open-access online textbook in human population genetics: web.stanford.edu/group/pritchar…

1/ We are happy to announce the open-source release of the inference code and weights of our four genomics #LLMs, the nucleotide transformers, ranging from 500M to 2.5B parameters, trained in collaboration with @nvidia and @TU_Munchen 🧬 bit.ly/3YRSUz1