Francis Davidson retweeted

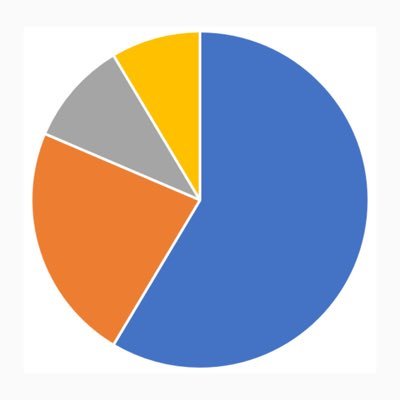

Chartbeat data shows Google Search referrals down 34% overall, 60% for small publishers. AI chatbots still <1% of referral traffic.

This aligns with @ahrefs data showing an 18% search traffic decline across 74.7K sites, while AI replaced less than 5% of the loss.

The traffic isn’t being replaced. It’s being absorbed. AI answers the query directly and the user never clicks. The value is shifting from traffic to influence, and most analytics dashboards can’t see influence.

Nice share @NexusBen

9to5Google@9to5Google

Google Search referrals to the web have plummeted, AI links are 'less than 1%' of traffic 9to5google.com/2026/03/18/goo… by @nexusben
