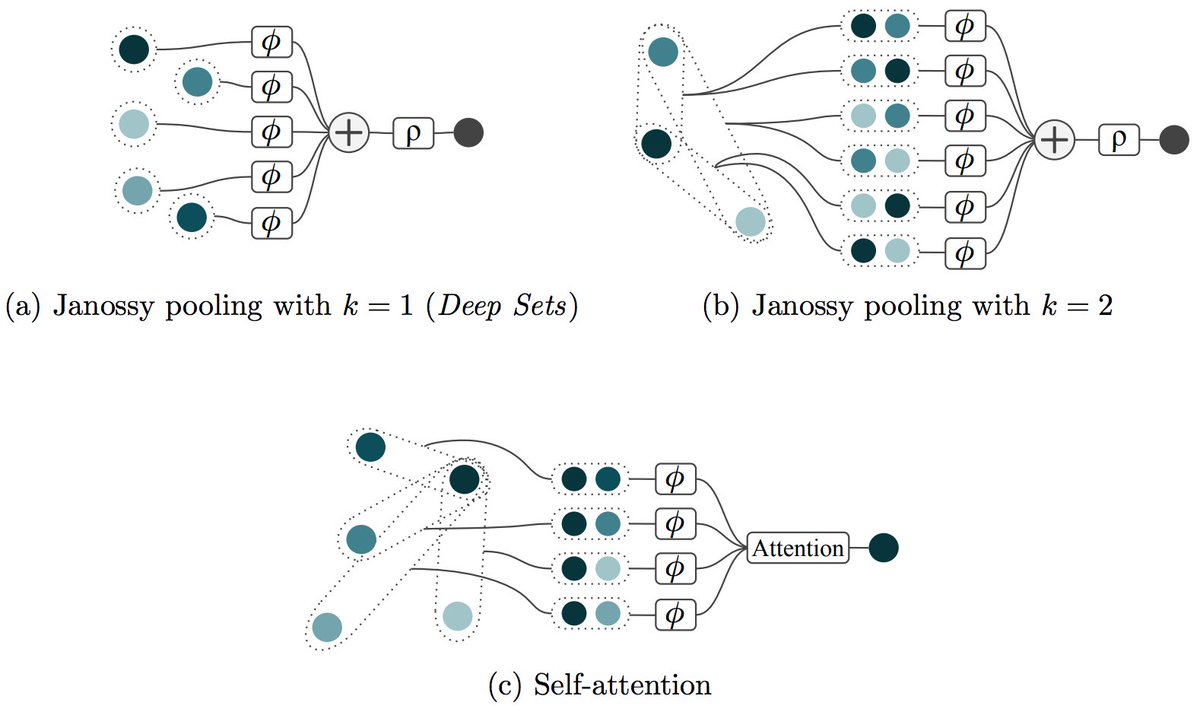

Both max-pooling (e.g. DeepSets) and self-attention are permutation-invariant/equivariant neural network architectures for set-based problems. I am aware of a few variations of each. Are there other, fundamentally different architectures for sets? 🤔
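To make the two properties concrete, here is a minimal pure-Python sketch (not taken from any particular paper's code). The per-element encoder `phi`, the identity query/key/value projections, and the toy `points` are all assumptions chosen for illustration. It checks that feature-wise max-pooling over encoded elements is permutation invariant, and that single-head dot-product self-attention is permutation equivariant.

```python
import math

def phi(x):
    # Hypothetical per-element encoder (assumption): a fixed nonlinear feature map.
    return [math.tanh(x[0] + 0.5 * x[1]), math.tanh(x[0] - x[1])]

def max_pool_set(xs):
    # Encode each element independently, then take a feature-wise max over the set.
    feats = [phi(x) for x in xs]
    return [max(col) for col in zip(*feats)]

def self_attention(xs):
    # Single-head scaled dot-product attention with identity Q/K/V projections
    # (an assumption to keep the sketch short; no positional encoding is used).
    d = len(xs[0])
    out = []
    for q in xs:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in xs]
        m = max(scores)  # subtract the max for a numerically stable softmax
        weights = [math.exp(s - m) for s in scores]
        z = sum(weights)
        out.append([sum(w / z * v[j] for w, v in zip(weights, xs)) for j in range(d)])
    return out

def permute(xs, perm):
    # Reorder the set elements according to the index list perm.
    return [xs[i] for i in perm]

def close(a, b, tol=1e-9):
    # Element-wise comparison with a small tolerance for floating-point sums.
    return all(abs(x - y) <= tol for x, y in zip(a, b))

points = [[0.1, 2.0], [-1.0, 0.3], [0.7, -0.5]]  # toy set of 2-D elements
perm = [2, 0, 1]

# Invariance: pooling the permuted set gives the same vector.
invariant = max_pool_set(permute(points, perm)) == max_pool_set(points)

# Equivariance: attending over the permuted set permutes the outputs the same way.
attended = self_attention(points)
attended_perm = self_attention(permute(points, perm))
equivariant = all(close(a, b) for a, b in zip(attended_perm, permute(attended, perm)))

print(invariant, equivariant)
```

The same invariance check should pass with `sum` in place of `max` in `max_pool_set`, since any symmetric aggregation over independently encoded elements ignores order.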