Fanqing Meng

411 posts


@FanqingMengAI

vibe phd | kimi | kimi linear, K2, K 2.5, mm-eureka | Opinions are my own | https://t.co/LDxlIjhSih

Joined March 2025

667 Following, 1.3K Followers

Pinned Tweet

Fanqing Meng retweeted


Now I'll finally use the Apple Watch I bought 3 years ago but never used 😂

Shobhit - Building SuperCmd @nullbytes00

Done @garrytan

Now you can use your Apple Watch to control a Claude Code session! Built this in 6 hours, used gstack for this. See /office-hours from gstack in action in the video.

- Your Claude session, live on your Apple Watch
- Accept, reject, or reply instantly to prompts

Use it here, made it open source: github.com/shobhit99/clau…



Fanqing Meng retweeted

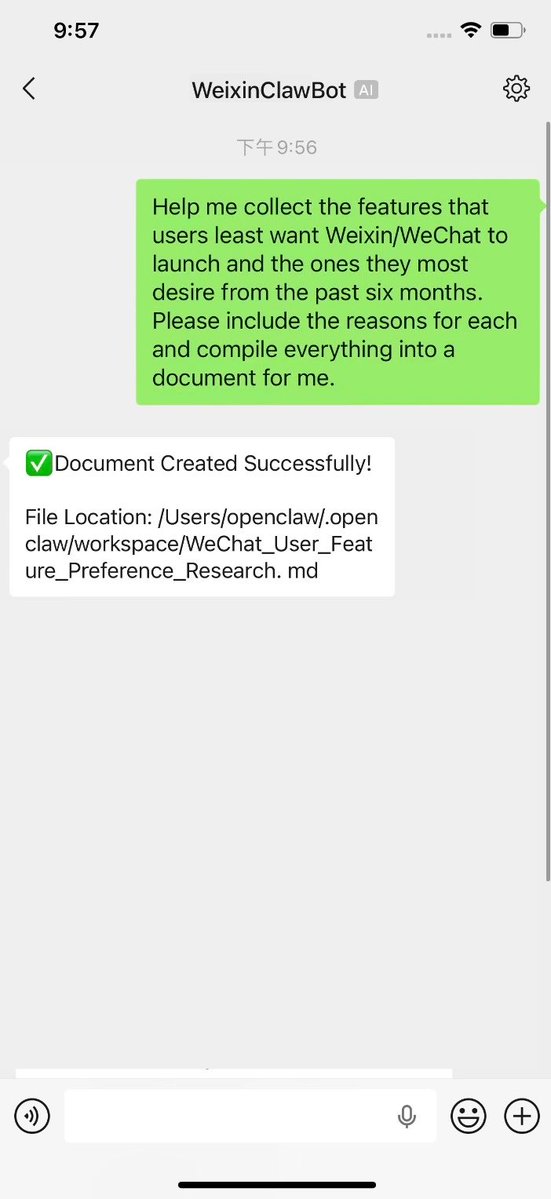

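MetaBot now supports WeChat! With the ClawBot plugin, you can chat with a Claude Code Agent directly in WeChat: write code, read docs, and run commands, all from your phone.

Feishu, Telegram, and WeChat are now all connected, so the same AI team can collaborate anytime, anywhere.

Install with one command, then scan the QR code to start: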

curl -fsSL https://raw.githubusercontent.com/xvirobotics/metabot/main/install.sh

GitHub: github.com/xvirobotics/me…


Fanqing Meng retweeted

Today, we are officially opening the capability to integrate #OpenClaw into #Weixin.

With the launch of the #WeixinClawBot, users can use Weixin as a dedicated messaging channel for OpenClaw.

Now, you can send and receive messages with OpenClaw just like texting a friend.

#AIAutomation #AI


Fanqing Meng retweeted

this is why i still use cursor 😂😂

夏雨婷 @cherylnatsu

"I'm not afraid of any error message now; AI will fix it anyway."

"Then what about this one?"

$ claude
zsh: claude: command not found
$ codex
zsh: codex: command not found


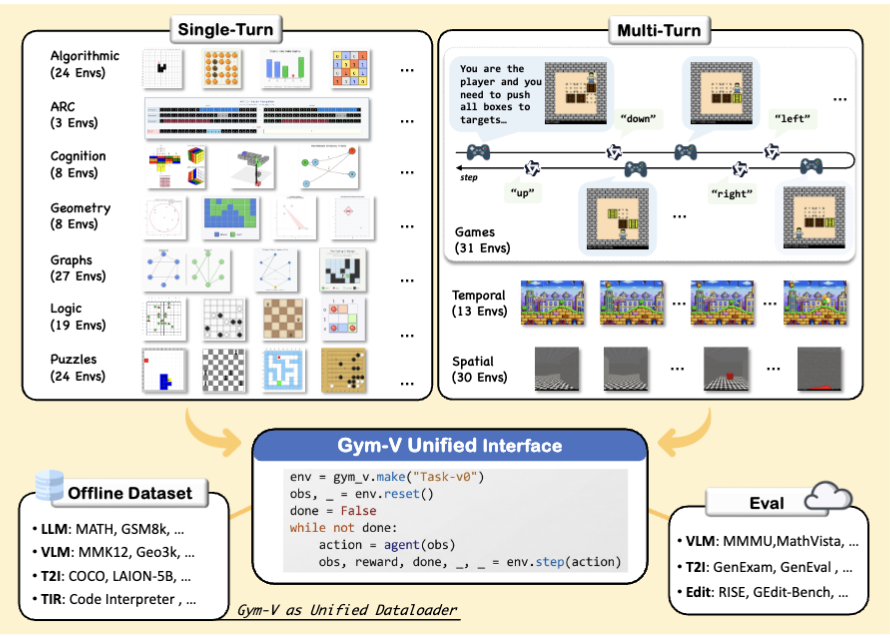

Gym-V is fully open-sourced. 5 lines of code to get started:

import gym_v

env = gym_v.make("Task-v0")

obs = env.reset()

action = agent(obs)

obs, reward, done, _ = env.step(action)

📄 Paper: arxiv.org/abs/2603.15432

💻 Code: github.com/ModalMinds/gym…

Let's build the Gym for vision agents, together!
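The reset/step calls above extend naturally to a full episode loop. Here is a minimal sketch of that loop, assuming the four-value `step` return shown in the snippet. `ToyCountingEnv` and `run_episode` are hypothetical stand-ins written for illustration and are not part of the gym_v package; a real Gym-V task would return images rather than text prompts.

```python
import random


class ToyCountingEnv:
    """Hypothetical stand-in for a gym-style task; not part of gym_v."""

    def __init__(self, seed=0, max_steps=5):
        self.rng = random.Random(seed)
        self.max_steps = max_steps
        self.steps = 0
        self.target = None

    def reset(self):
        # Start a new episode and draw a hidden digit.
        self.steps = 0
        self.target = self.rng.randint(0, 9)
        return {"prompt": "Guess the hidden digit (0-9)."}

    def step(self, action):
        # Reward 1.0 for a correct guess; stop on success or step limit.
        self.steps += 1
        correct = action == self.target
        reward = 1.0 if correct else 0.0
        done = correct or self.steps >= self.max_steps
        obs = {"prompt": "Correct." if correct else "Try again."}
        return obs, reward, done, {}


def run_episode(env, agent):
    """Roll out one episode and return the summed reward."""
    obs = env.reset()
    total, done = 0.0, False
    while not done:
        action = agent(obs)
        obs, reward, done, _ = env.step(action)
        total += reward
    return total
```

Any callable that maps an observation to an action can serve as `agent`, so the same loop works for a scripted baseline or a model-backed policy.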


Text agents have their Gym. Vision agents? Not until now.

Introducing Gym-V — a unified gym-style platform for agentic vision research, with 179 procedurally generated environments across 10 domains.

One API to rule them all:

📦 Offline dataset

🤖 Agentic RL training

🔧 Tool-use training

👥 Multi-agent training

📊 VLM & T2I model evaluation

All under the same reset/step interface.

Key findings:

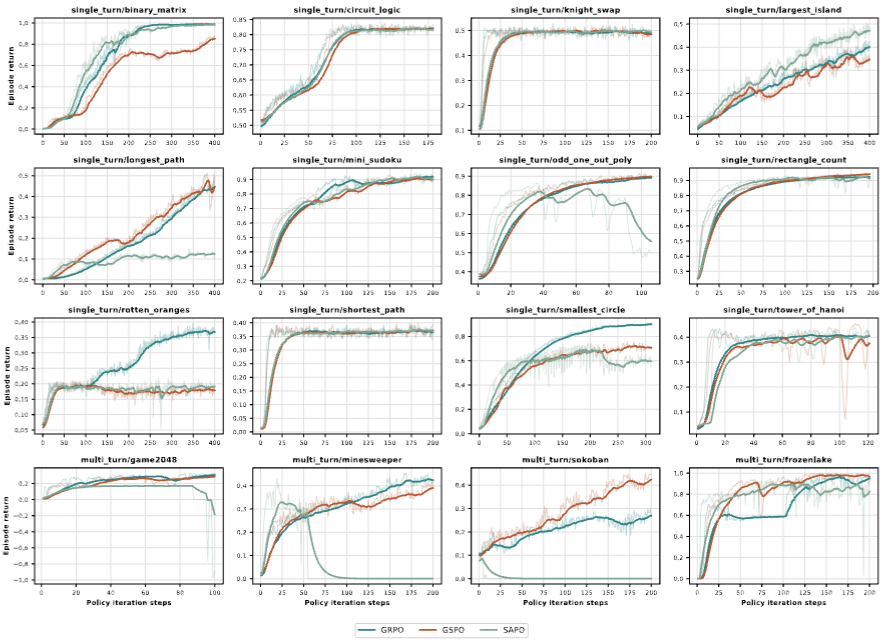

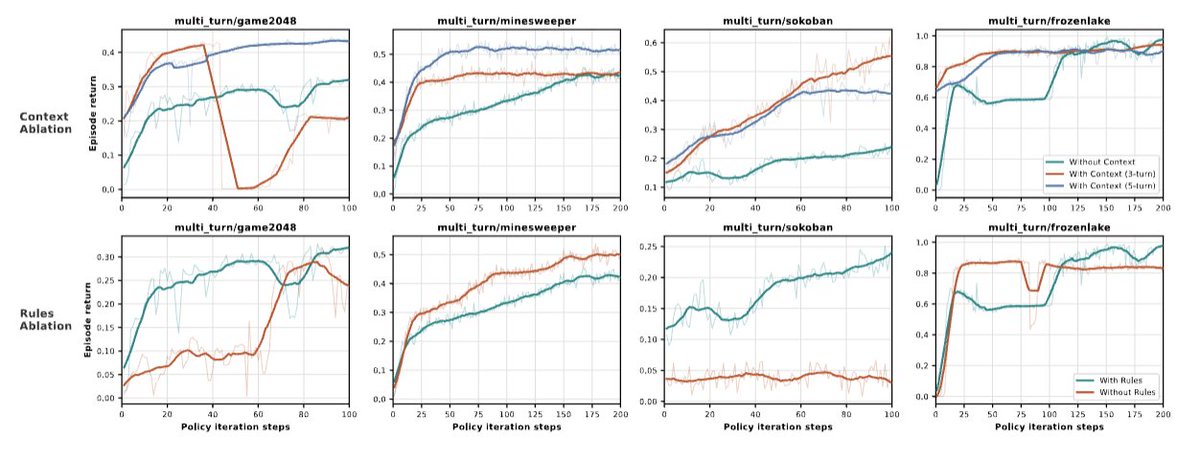

1. Observation scaffolding matters MORE than RL algorithm choice

2. Broad curricula transfer well; narrow training causes negative transfer

3. Multi-turn interaction amplifies everything

📄 Paper: arxiv.org/abs/2603.15432

💻 Code: github.com/ModalMinds/gym…

Open the thread for a deep dive! 🧵


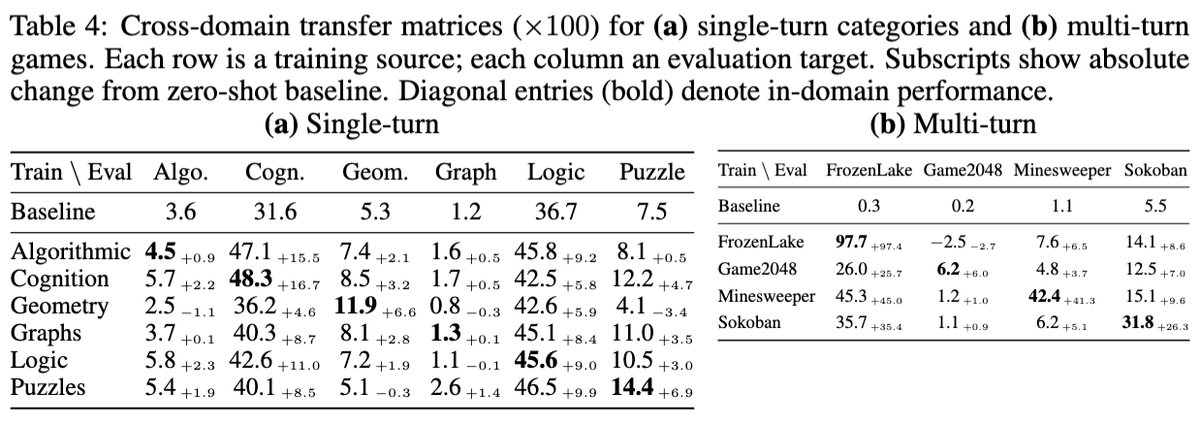

Does RL training on one domain help others?

✅ Broad curricula (Cognition, Puzzles) transfer broadly — covering diverse sub-skills pays off

❌ Narrow curricula (Geometry) can cause NEGATIVE transfer — domain-specific shortcuts actively hurt on new tasks

Transfer is asymmetric: Logic → Cognition yields +11.0, but Cognition → Logic only +5.8. Some competencies act as prerequisites rather than interchangeable skills.

Multi-turn amplifies everything — both the gains AND the damage.


Some findings:

Observation scaffolding is the most decisive factor for RL training success — more than algorithm choice.

✅ Adding captions to images → consistent improvement across ALL environments

❌ Removing game rules → can kill learning entirely

⚖️ GRPO vs GSPO vs SAPO? All improve, but no single algorithm dominates

HOW you present the task to the agent matters more than HOW you optimize it.
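One way to picture observation scaffolding is as a wrapper that adds text to whatever the environment returns. The sketch below is an illustration under assumptions, not Gym-V's actual mechanism: `CaptionScaffold`, `BlankImageEnv`, the `"image"`/`"caption"`/`"rules"` keys, and the `captioner` callable are all hypothetical names.

```python
class BlankImageEnv:
    """Hypothetical one-step env that returns a tiny 2x2 'image'."""

    def reset(self):
        return {"image": [[0, 0], [0, 0]]}

    def step(self, action):
        return {"image": [[0, 0], [0, 0]]}, 0.0, True, {}


class CaptionScaffold:
    """Wraps a reset/step env and adds a caption (and optional rules) to each observation."""

    def __init__(self, env, captioner, rules=""):
        self.env = env
        self.captioner = captioner  # callable: image -> str
        self.rules = rules

    def _augment(self, obs):
        out = dict(obs)  # copy so the wrapped env's observation is untouched
        out["caption"] = self.captioner(obs["image"])
        if self.rules:
            out["rules"] = self.rules
        return out

    def reset(self):
        return self._augment(self.env.reset())

    def step(self, action):
        obs, reward, done, info = self.env.step(action)
        return self._augment(obs), reward, done, info
```

Because the wrapper keeps the same reset/step interface, the agent and training loop do not need to change when captions or rules are toggled, which makes this kind of ablation cheap to run.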


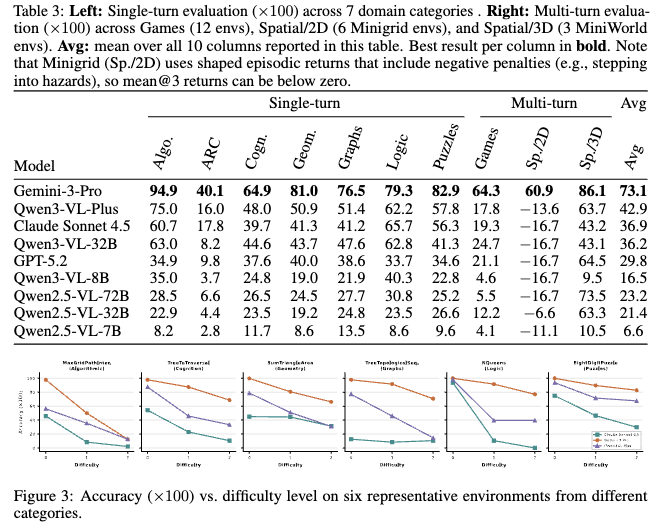

We evaluated 9 VLMs zero-shot across all categories.

🏆 Gemini-3-Pro dominates (73.1 avg)

🥈 Best open model Qwen3-VL-32B reaches only 36.2

📊 Newer 32B beats older 72B by 1.8× — training recipe > raw scale

The "difficulty cliff" is striking: on some tasks, accuracy drops to near-zero when complexity increases just one level. Even frontier models collapse — Gym-V is far from saturated.


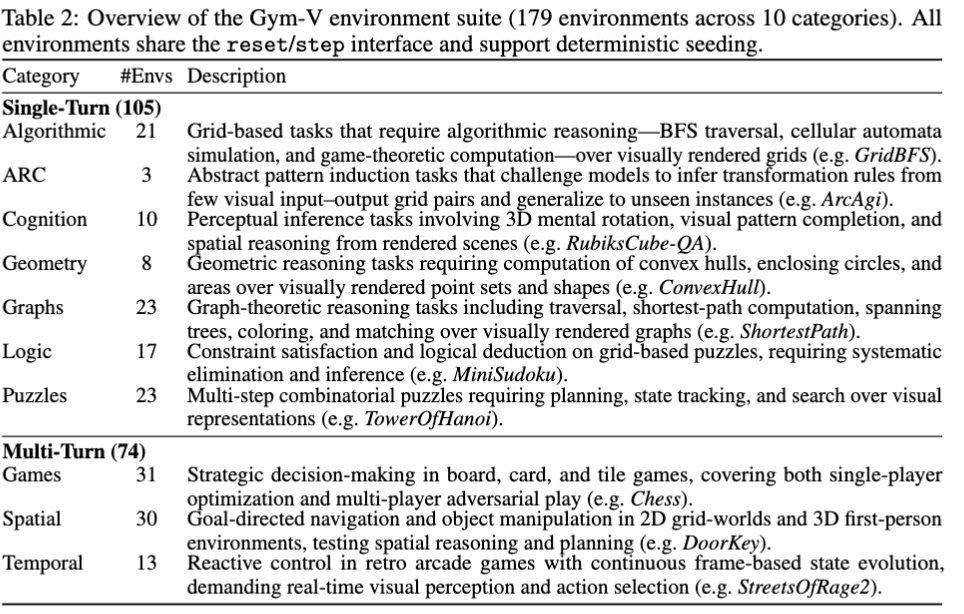

Gym-V spans 10 categories:

📐 Single-Turn (105 envs): Algorithmic, ARC, Cognition, Geometry, Graphs, Logic, Puzzles

🎮 Multi-Turn (74 envs): Games, Spatial (2D/3D), Temporal (retro arcade)

All environments are procedurally generated with deterministic seeding and parametric difficulty levels (0, 1, 2). From Sudoku to Sokoban, from Chess to Streets of Rage — vision agents face real visual reasoning challenges.
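Deterministic seeding with parametric difficulty can be sketched in a few lines. This is a generic illustration, not Gym-V's generator: `generate_grid` and the `GRID_SIZE` mapping from difficulty level to grid size are hypothetical.

```python
import random

# Hypothetical mapping from difficulty level (0, 1, 2) to grid size.
GRID_SIZE = {0: 3, 1: 5, 2: 8}


def generate_grid(seed, difficulty):
    """Generate a random binary grid; same (seed, difficulty) gives the same grid."""
    size = GRID_SIZE[difficulty]
    rng = random.Random(seed)  # private RNG, so global random state is unaffected
    return [[rng.randint(0, 1) for _ in range(size)] for _ in range(size)]
```

Using a private `random.Random(seed)` instance rather than the module-level functions keeps each instance reproducible even when other code also draws random numbers.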
