Wolfgang M. Schröder faustiii.bsky.social

31K posts

Wolfgang M. Schröder faustiii.bsky.social

@Faust_III

Philosopher & Professor, Political Theory, AI-Ethics, AI Standards @ https://t.co/gU9Q3zW8bK, @ CEN-CLC / JTC 21, Art Enthousiast, Author 🇪🇺 Private Account

Allemagne (Union Européenne) Katılım Eylül 2016

4.8K Takip Edilen3K Takipçiler

Wolfgang M. Schröder faustiii.bsky.social retweetledi

Wharton’s latest AI study points to a hard truth: “AI writes, humans review” model is breaking down

Why "just review the AI output" doesn't work anymore, our brains literally give up.

We have started doing "Cognitive Surrender" to AI - Wharton’s latest AI study points to a hard truth: reviewing AI output is not a reliable safeguard when cognition itself starts to defer to the machine.when you stop verifying what the AI tells you, and you don't even realize you stopped. It's different from offloading, like using a calculator.

With offloading you know the tool did the work. With surrender, your brain recodes the AI's answer as YOUR judgment. You genuinely believe you thought it through yourself.

Says AI is becoming a 3rd thinking system, and people often trust it too easily.

You know Kahneman's System 1 (fast intuition) and System 2 (slow analysis)? They're saying AI is now System 3, an external cognitive system that operates outside your brain. And when you use it enough, something happens that they call Cognitive Surrender.

Cognitive surrender is trickier: AI gives an answer, you stop really questioning it, and your brain starts treating that output as your own conclusion. It does not feel outsourced. It feels self-generated.

The data makes it hard to brush off. Across 3 preregistered studies with 1,372 participants and 9,593 trials, people turned to AI on over 50% of questions.

In Study 1, when AI was correct, people followed it 92.7% of the time. When it was wrong, they still followed it 79.8% of the time.

Without AI, baseline accuracy was 45.8%. With correct AI, it jumped to 71.0%. With incorrect AI, it dropped to 31.5%, worse than having no AI. Access to AI also boosted confidence by 11.7 percentage points, even when the answers were wrong.

Human review is supposed to be the safety net. But this research suggests the safety net has a hole in it: people do not just miss bad AI output; they become more confident in it.

Time pressure did not eliminate the effect. Incentives and feedback reduced it but did not remove it. And the people most resistant tended to score higher on fluid intelligence and need for cognition. That makes this feel less like a laziness problem and more like a cognitive architecture problem.

English

Wolfgang M. Schröder faustiii.bsky.social retweetledi

On Teaching Theorizing in the Social Sciences

PhD programs put a huge emphasis on methods related to empirical research.

Here are some older and newer book that provide different takes on what is involved in building theories.

Top row: more sociological approaches

Bottom row: more formal approaches

English

Wolfgang M. Schröder faustiii.bsky.social retweetledi

Wolfgang M. Schröder faustiii.bsky.social retweetledi

"Silicon Valley billionaires are not our sages; they’re our enablers, keeping us distracted and dumb, and making sure we never stop scrolling for long enough to think about why we are wasting our lives on their platforms"

@jemimajoanna

ft.com/content/f9e57e…

English

Wolfgang M. Schröder faustiii.bsky.social retweetledi

My course on consciousness is now online: six 90´ lectures featuring both history and recent experimental results on ignition, P3b, working memory, neural manifolds… unpublished results too.

tinyurl.com/cz9yb4z3

English

Wolfgang M. Schröder faustiii.bsky.social retweetledi

The Ontology of LLMs: A New Framework for Knowledge

psychologytoday.com/us/blog/the-di…

English

Wolfgang M. Schröder faustiii.bsky.social retweetledi

Scientists discuss whether AI could surpass human contributions to physics by 2035 physicsworld.com/a/is-vibe-phys…

English

Wolfgang M. Schröder faustiii.bsky.social retweetledi

Published in Nature Neuroscience, new research reveals that timing, not repetition, drives associative learning. By showing the brain prioritizes the delay between rewards, the study upends century-old assumptions and suggests new ways to understand… dlvr.it/TRchsF

English

"... #Glück ist ein Wort für die #Ausnahmen."

Hermann Kant, Herrn Farßmanns Erzählungen, Berlin & Weimar 1989, S. 5.

Deutsch

Wolfgang M. Schröder faustiii.bsky.social retweetledi

Hannah Schmidt-Ott mit einem großen Rezensionsessay über die neuen Bücher von

@ImTunnel und @AnnaNosthoff heute in der FAZ! Zwei Analysen, um "nicht dermaßen (digital) regiert zu werden".

faz.net/aktuell/feuill…

Deutsch

Wolfgang M. Schröder faustiii.bsky.social retweetledi

Conceptions of Law, Ideology, and the Rule of Law -- why the rule of law project can be conducive in its moral evaluative commitments to an oppositional ideology in service of democratic agency and an ameliorative social function.

papers.ssrn.com/sol3/papers.cf…

English

Wolfgang M. Schröder faustiii.bsky.social retweetledi

Wolfgang M. Schröder faustiii.bsky.social retweetledi

Graduate students increasingly use artificial-intelligence tools to draft, code and search — but many fear it could erode the very skills a doctorate is meant to build

go.nature.com/4bmCOaa

English

Wolfgang M. Schröder faustiii.bsky.social retweetledi

It's the Writing, stupid

This short essay from @JohnNosta correctly identifies the fundamental philosophical challenge with #LLMs and the raison d'etre for "The Différance Engine" @mitpress book that is based on and elaborates this sketch of an essay: doi.org/10.1007/s00146…

John Nosta@JohnNosta

When Writing Becomes Detached From Thought | Psychology Today psychologytoday.com/us/blog/the-di… #AI

English

Wolfgang M. Schröder faustiii.bsky.social retweetledi

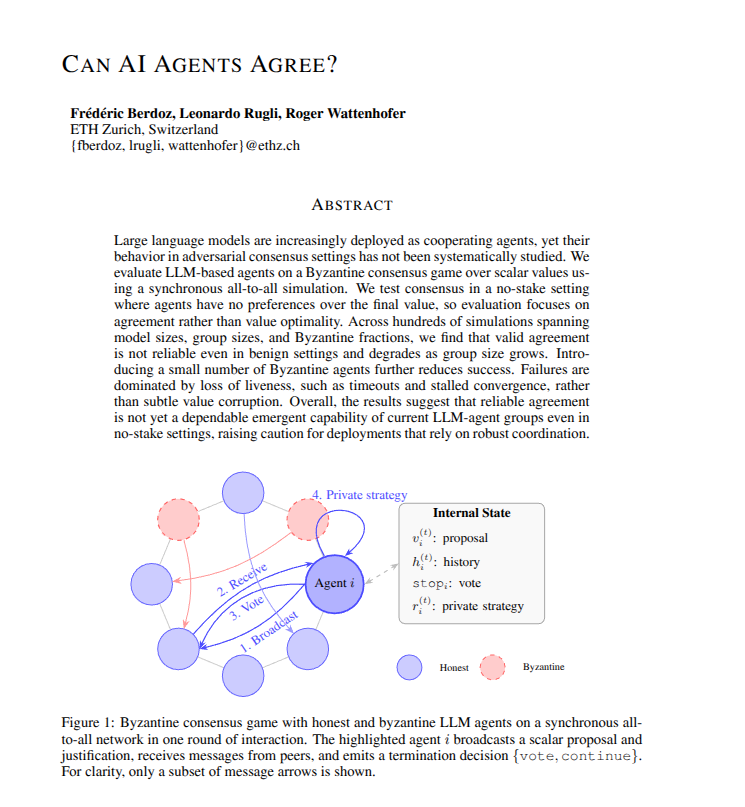

New research proves that current AI agent groups cannot reliably coordinate or agree on simple decisions.

Building teams of AI agents that can consistently agree on a final decision is surprisingly difficult for LLMs.

But problem is that developers frequently assume that if you have enough AI agents working together, they will eventually figure out how to solve a problem by talking it through.

This paper shows that this assumption is currently wrong. Even in a friendly environment where every agent is trying to help, the team often gets stuck or stops responding entirely. Because this happens more often as the group gets bigger, it means we cannot yet trust these agent systems to handle tasks where they must agree on a correct answer.

----

Paper Link – arxiv. org/abs/2603.01213

Paper Title: "Can AI Agents Agree?"

English

Wolfgang M. Schröder faustiii.bsky.social retweetledi

Interesting: “If intelligence is inherently social, then the path to more powerful AI runs not through building a single colossal oracle but through composing richer social systems—and these systems will be hybrid.”

science.org/doi/10.1126/sc…

English

Wolfgang M. Schröder faustiii.bsky.social retweetledi

Create enough hallucinated legal arguments, flawed engineering calculations and backdoor-ridden code, and the slop vats fill faster than our capacity to tell good work from bad, writes Tim Harford.

Read his column on telling good AI from bad: ft.trib.al/j6Io85O

English

Wolfgang M. Schröder faustiii.bsky.social retweetledi

I was interviewed about agentic AI and prediction, mentions my book Self-Improvement @ColumbiaUP

agi.fightersteel.com/agentic-agi-wi…

English