International Cyber Digest@IntCyberDigest

‼️🚨 BREAKING: An AI found a Linux kernel zero-day that roots every distribution since 2017. The exploit fits in 732 bytes of Python. Patch your kernel ASAP.

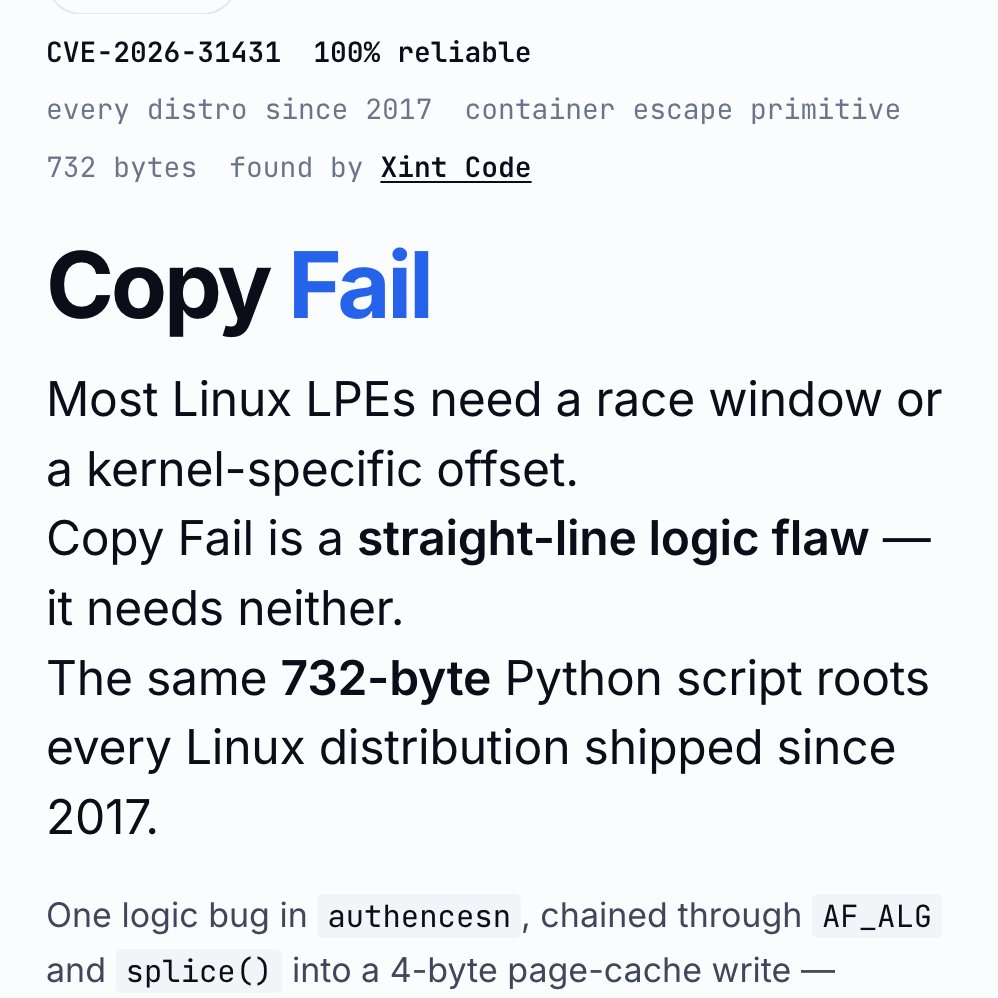

The vulnerability is CVE-2026-31431, nicknamed "Copy Fail," disclosed today by Theori. It has been sitting quietly in the Linux kernel for nine years.

Most Linux privilege-escalation bugs are picky. They need a precise timing window (a "race"), or specific kernel addresses leaked from somewhere, or careful tuning per distribution. Copy Fail needs none of that. It is a straight-line logic mistake that works on the first try, every time, on every mainstream Linux box.

The attacker just needs a normal user account on the machine. From there, the script asks the kernel to do some encryption work, abuses how that work is wired up, and ends up writing 4 bytes into a memory area called the "page cache" (Linux's high-speed copy of files in RAM). Those 4 bytes can be aimed at any program the system trusts, like /usr/bin/su, the shortcut to becoming root.

Result: the next time anyone runs that program, it lets the attacker in as root.

What should worry most: the corruption never touches the file on disk. It only exists in Linux's in-memory copy of that file. If you imaged the hard drive afterwards, the on-disk file would match the official package hash exactly. Reboot the machine, or just put it under memory pressure (any normal system load that needs the RAM), and the cached copy reloads fresh from disk.

Containers do not help either. The page cache is shared across the whole host, so a process inside a container can use this bug to compromise the underlying server and reach into other tenants.

The original sin was a 2017 "in-place optimization" in a kernel crypto module called algif_aead. It was meant to make encryption slightly faster. The change broke a critical safety assumption, and nobody noticed for nine years. That bug then rode every kernel update from 2017 to today.

This vulnerability affects the following:

🔴 Shared servers (dev boxes, jump hosts, build servers): any user becomes root

🔴 Kubernetes and container clusters: one compromised pod escapes to the host

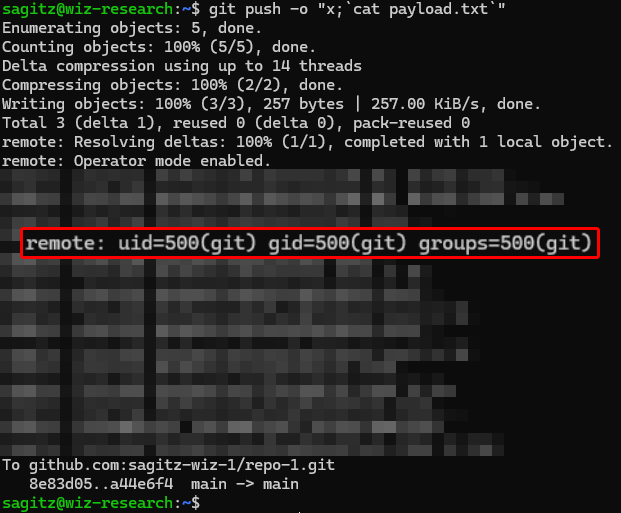

🔴 CI runners (GitHub Actions, GitLab, Jenkins): a malicious pull request becomes root on the runner

🔴 Cloud platforms running user code (notebooks, agent sandboxes, serverless functions): a tenant becomes host root

Timeline:

🔴 March 23, 2026: reported to the Linux kernel security team

🔴 April 1: patch committed to mainline (commit a664bf3d603d)

🔴 April 22: CVE assigned

🔴 April 29: public disclosure

Mitigation: update your kernel to a build that includes mainline commit a664bf3d603d. If you cannot patch immediately, turn off the vulnerable module:

echo "install algif_aead /bin/false" > /etc/modprobe.d/disable-algif.conf

rmmod algif_aead 2>/dev/null || true

For environments that run untrusted code (containers, sandboxes, CI runners), block access to the kernel's AF_ALG crypto interface entirely, even after patching. Almost nothing legitimate needs it, and blocking it shuts the door on this whole class of bug...