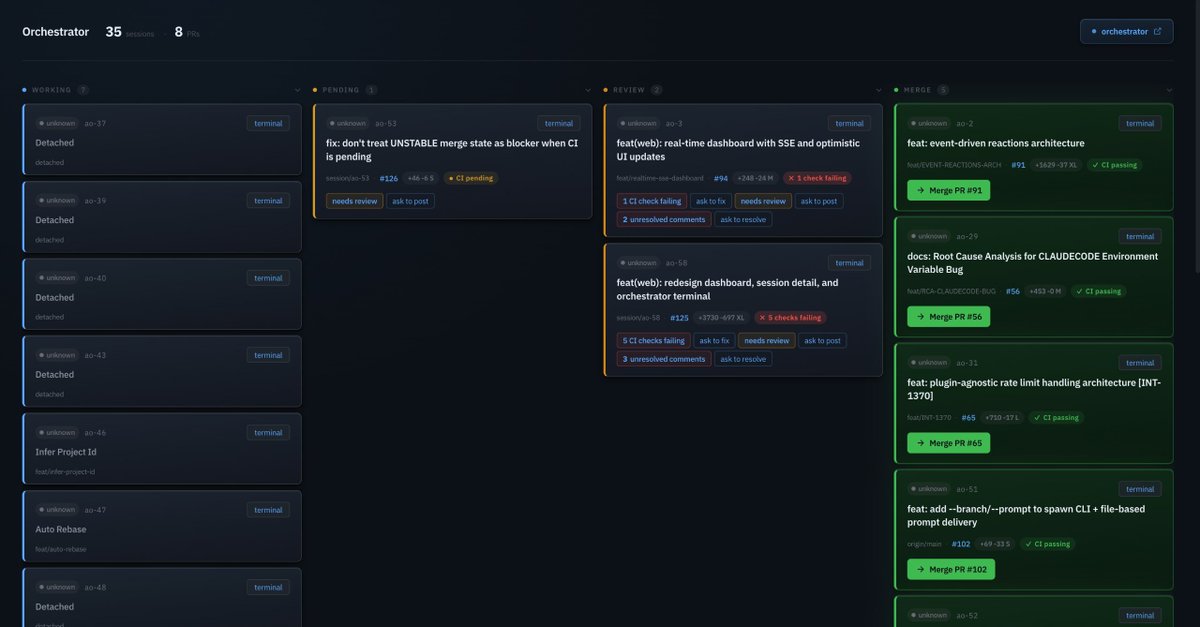

🚨 Shocking: A 25,000-task experiment just proved that the entire multi-agent AI framework industry is built on the wrong assumption.

Every major framework - CrewAI, AutoGen, MetaGPT, ChatDev - starts from the same premise: assign roles, define hierarchies, let a coordinator distribute work.

Researchers tested 8 coordination protocols across 8 models and up to 256 agents. The protocol where agents were given NO assigned roles, NO hierarchy, and NO coordinator outperformed centralized coordination by 14%. The gap between the best and worst protocol was 44%.

That's not noise. That's a completely different outcome depending on how you organize the agents - not which model you use.

Here's what makes this uncomfortable: When agents were simply given a fixed turn order and told "figure it out," they spontaneously invented 5,006 unique specialized roles from just 8 agents. They voluntarily sat out tasks they weren't good at. They formed their own shallow hierarchies - without anyone designing them.

The researchers call it the "endogeneity paradox." The best coordination isn't maximum control or maximum freedom. It's minimal scaffolding - just enough structure for self-organization to emerge.

But there's a catch nobody building agents wants to hear: below a certain model capability threshold, the effect reverses. Weaker models actually need rigid structure. Autonomy only works when the model is smart enough to use it.

Which means every agent framework shipping with one-size-fits-all hierarchies is wrong twice - over-constraining strong models and under-constraining weak ones.

The $2B+ invested in agent orchestration tooling may be solving a problem that capable models solve better on their own.
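For anyone wondering what "a fixed turn order and nothing else" might look like in practice, here is a toy sketch. It is not the researchers' code. The names `run_sequential`, `Turn`, and the three stub agents are hypothetical, and the stubs are hard-coded rules standing in for real model calls. The only structure the loop imposes is the order of turns: each agent sees the shared transcript, picks its own role label, and may return `None` to sit out.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical stand-in for a model call: given the task and the shared
# transcript so far, return (self-chosen role, contribution), or None to sit out.
AgentFn = Callable[[str, List["Turn"]], Optional[Tuple[str, str]]]


@dataclass
class Turn:
    agent: str  # which agent spoke
    role: str   # role label the agent chose for itself
    text: str   # what it contributed


def run_sequential(task: str, agents: Dict[str, AgentFn]) -> List[Turn]:
    """Run one pass over the agents in a fixed order with no coordinator."""
    transcript: List[Turn] = []
    for name, act in agents.items():  # turn order = dict insertion order
        result = act(task, transcript)
        if result is None:
            continue  # the agent voluntarily sat this task out
        role, text = result
        transcript.append(Turn(name, role, text))
    return transcript


# Stub agents (assumptions for illustration; real agents would call a model).
def agent_1(task: str, transcript: List[Turn]) -> Optional[Tuple[str, str]]:
    return ("planner", f"Outline steps for: {task}")


def agent_2(task: str, transcript: List[Turn]) -> Optional[Tuple[str, str]]:
    if "code" not in task.lower():
        return None  # nothing to implement, so abstain
    return ("coder", "Draft an implementation")


def agent_3(task: str, transcript: List[Turn]) -> Optional[Tuple[str, str]]:
    if not transcript:
        return None  # nothing to review yet
    return ("reviewer", f"Review {len(transcript)} earlier contribution(s)")


AGENTS: Dict[str, AgentFn] = {"a1": agent_1, "a2": agent_2, "a3": agent_3}

if __name__ == "__main__":
    for task in ("Write code to parse a CSV", "Summarize a meeting"):
        turns = run_sequential(task, AGENTS)
        print(task, "->", [(t.agent, t.role) for t in turns])
```

Notice that nothing in `run_sequential` mentions roles or a manager. A centralized variant would add a coordinator that assigns a role to each agent before its turn, which is the design the post says the role-free protocol outperformed.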