Dmitry Petrov

1.2K posts

@FullStackML

🛠️ Building data infra for AI/ML. Ex-Data Scientist @Microsoft. Created DVC, now DataChain. PhD in CS. Serious about data. Less serious about everything else.

San Francisco, CA · Joined October 2011

540 Following · 2.2K Followers

OpenAI's Data Agent and the S3 Gap open.substack.com/pub/datachaina…

I just started a Substack! You can subscribe to it here dmpetrov.substack.com/?r=6ixv9g&utm_…

@DeepValueBagger Agents are about to rediscover why data warehouses existed in the first place 😄

Turns out "Claude Code over files in S3" quickly becomes "rebuild half the data warehouse stack" 🫠

Schemas, datasets, lineage, file refs, etc.

OpenAI's Data Agent post made us feel slightly less insane 😄

Read more: datachain.ai/blog/openai-da…

@APRiCiTY1314520 Yeah 😄 “Read files from S3” is the hello world.

The real fun starts when the agent forgets where the files came from, what schema they had, who can access them, and whether yesterday’s output was wrong.

@FullStackML Exactly. The hard part is not “code over S3 files.”

It is giving the agent enough data context: schemas, lineage, permissions, refs, and recovery.

That’s where the real complexity starts.

@dividedwefall02 .md files are the gateway drug to rebuilding a data platform 😅

@ryanlpeterman @grok list all the reasons why LLMs score 0% on his text-SQL benchmark

Mike Stonebraker is a Turing Award winner famous for his fundamental contributions to databases (e.g. Postgres, C-Store, and much more). I interviewed him recently about:

• The story behind Postgres & the hardest technical challenge in building it

• Where he disagreed with Google's technical decisions

• Future problems in databases

• Literature recommendations to learn databases

• Why LLMs score 0% on his text-SQL benchmark

• What if you replaced all state in an OS with a DB

Timestamps:

0:00 - Intro

1:03 - How he got into databases

6:43 - Competing with Oracle

9:07 - What made Postgres special

15:55 - One size fits none

21:37 - Why he disagreed with Google

29:14 - Why he chose academia over big tech

30:58 - Replacing state in an OS with a DB

42:02 - Future problems in databases

51:36 - Technical book recommendations to learn databases

52:20 - Advice for younger self

55:52 - Outro

Where to watch:

• YouTube: youtu.be/YPObBOwIrHk

• Spotify: open.spotify.com/episode/1zxBGj…

• Apple Podcasts: podcasts.apple.com/us/podcast/the…

• Transcript: developing.dev/p/turing-award…

Milla Jovovich releasing an agent memory framework wasn’t on my 2026 bingo card

the Fifth Element but it stores everything instead of summarizing

Ben Sigman @bensig

Mempalace --> Multipass. Thanks to Anthropic, even Leeloo can code. github.com/milla-jovovich…

@karpathy running this + a fully dynamic (OpenCrawl) setup

don’t trust it to write into the knowledge base → keeping it read-only

feels like a hack

how are people handling this?

LLM Knowledge Bases

Something I'm finding very useful recently: using LLMs to build personal knowledge bases for various topics of research interest. In this way, a large fraction of my recent token throughput is going less into manipulating code, and more into manipulating knowledge (stored as markdown and images). The latest LLMs are quite good at it. So:

Data ingest:

I index source documents (articles, papers, repos, datasets, images, etc.) into a raw/ directory, then I use an LLM to incrementally "compile" a wiki, which is just a collection of .md files in a directory structure. The wiki includes summaries of all the data in raw/, backlinks, and then it categorizes data into concepts, writes articles for them, and links them all. To convert web articles into .md files I like to use the Obsidian Web Clipper extension, and then I also use a hotkey to download all the related images to local so that my LLM can easily reference them.
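
The incremental “compile” step can be approximated by tracking which sources changed since the last run. The sketch below is a hypothetical helper (not Karpathy's tooling): it assumes sources live under `raw/` and that a manifest mapping each relative path to a SHA-256 hash was saved after the previous compile. `scan_raw` and `plan_compile` are illustrative names.

```python
import hashlib
from pathlib import Path


def scan_raw(raw_dir: Path) -> dict[str, str]:
    """Map every file under raw/ (relative path) to a SHA-256 of its bytes."""
    return {
        p.relative_to(raw_dir).as_posix(): hashlib.sha256(p.read_bytes()).hexdigest()
        for p in sorted(raw_dir.rglob("*"))
        if p.is_file()
    }


def plan_compile(current: dict[str, str], manifest: dict[str, str]) -> dict[str, list[str]]:
    """Diff the current raw/ hashes against the manifest saved after the last compile."""
    return {
        "new": sorted(p for p in current if p not in manifest),
        "changed": sorted(p for p in current if p in manifest and manifest[p] != current[p]),
        "deleted": sorted(p for p in manifest if p not in current),
    }
```

Only the `new` and `changed` sources would be handed to the LLM for summarizing, while `deleted` ones flag wiki articles to revisit. Saving `current` as the next manifest (e.g. with `json.dump`) after a successful run keeps later compiles incremental.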

IDE:

I use Obsidian as the IDE "frontend" where I can view the raw data, the compiled wiki, and the derived visualizations. It's important to note that the LLM writes and maintains all of the data of the wiki; I rarely touch it directly. I've played with a few Obsidian plugins to render and view data in other ways (e.g. Marp for slides).

Q&A:

Where things get interesting is that once your wiki is big enough (e.g. mine on some recent research is ~100 articles and ~400K words), you can ask your LLM agent all kinds of complex questions against the wiki, and it will go off, research the answers, etc. I thought I had to reach for fancy RAG, but the LLM has been pretty good about auto-maintaining index files and brief summaries of all the documents and it reads all the important related data fairly easily at this ~small scale.
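
The auto-maintained index can also be produced deterministically and handed to the LLM. This is a minimal sketch under assumed conventions: each article's first `# ` heading is its title (falling back to the file stem) and its first body sentence is the summary. `summarize` and `build_index` are hypothetical names.

```python
from pathlib import PurePosixPath


def summarize(rel_path: str, text: str) -> tuple[str, str]:
    """Title = first '# ' heading (else file stem); summary = first body sentence."""
    title = None
    for raw in text.splitlines():
        line = raw.strip()
        if not line:
            continue
        if line.startswith("#"):
            if title is None and line.startswith("# "):
                title = line[2:].strip()
            continue  # skip headings when looking for the summary
        first_sentence = line.split(". ")[0].rstrip(".") + "."
        return title or PurePosixPath(rel_path).stem, first_sentence
    return title or PurePosixPath(rel_path).stem, ""


def build_index(articles: dict[str, str]) -> str:
    """Render index.md with one line per article: link, title, one-sentence summary."""
    lines = ["# Index", ""]
    for rel_path in sorted(articles):
        title, summary = summarize(rel_path, articles[rel_path])
        entry = f"- [{title}]({rel_path})"
        lines.append(f"{entry}: {summary}" if summary else entry)
    return "\n".join(lines) + "\n"
```

Regenerating `index.md` after each compile gives the agent a cheap table of contents to read before opening individual articles.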

Output:

Instead of getting answers in text/terminal, I like to have it render markdown files for me, or slide shows (Marp format), or matplotlib images, all of which I then view again in Obsidian. You can imagine many other visual output formats depending on the query. Often, I end up "filing" the outputs back into the wiki to enhance it for further queries. So my own explorations and queries always "add up" in the knowledge base.

Linting:

I've run some LLM "health checks" over the wiki to e.g. find inconsistent data, impute missing data (with web searches), find interesting connections for new article candidates, etc., to incrementally clean up the wiki and enhance its overall data integrity. The LLMs are quite good at suggesting further questions to ask and look into.
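
LLM health checks can be paired with cheap deterministic ones. As a swapped-in example (not part of the original workflow), this sketch finds broken Obsidian-style `[[wikilinks]]` and orphan articles that nothing links to. `lint_links` is a hypothetical name, and articles are assumed to be keyed by note name (file stem).

```python
import re

# Matches Obsidian-style [[Target]], [[Target|alias]] and [[Target#Heading]] links.
WIKILINK = re.compile(r"\[\[([^\]|#]+)(?:#[^\]|]*)?(?:\|[^\]]*)?\]\]")


def lint_links(articles: dict[str, str]) -> tuple[dict[str, list[str]], list[str]]:
    """Return (broken link targets per article, orphan articles with no backlinks)."""
    backlinks: dict[str, set[str]] = {name: set() for name in articles}
    broken: dict[str, list[str]] = {}
    for name, text in articles.items():
        for match in WIKILINK.finditer(text):
            target = match.group(1).strip()
            if target in articles:
                if target != name:  # self-links don't count as backlinks
                    backlinks[target].add(name)
            else:
                broken.setdefault(name, []).append(target)
    orphans = sorted(n for n, sources in backlinks.items() if not sources)
    return broken, orphans
```

Broken links are good candidates for new articles, and orphans are good candidates for linking from the index or a related concept page.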

Extra tools:

I find myself developing additional tools to process the data, e.g. I vibe coded a small and naive search engine over the wiki, which I both use directly (in a web ui), but more often I want to hand it off to an LLM via CLI as a tool for larger queries.
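
The post doesn't say how the search engine works. A plausible naive version is TF-IDF over the wiki's markdown files. This is a minimal sketch with a hypothetical CLI shape (`python wiki_search.py <wiki_dir> <query...>`) that could be used directly or exposed to an LLM agent as a shell tool.

```python
import math
import re
import sys
from collections import Counter
from pathlib import Path

TOKEN = re.compile(r"[a-z0-9]+")


def tokenize(text: str) -> list[str]:
    return TOKEN.findall(text.lower())


def search(articles: dict[str, str], query: str, k: int = 5) -> list[tuple[str, float]]:
    """Rank articles by summed TF-IDF of the query terms; return the top k."""
    docs = {name: Counter(tokenize(text)) for name, text in articles.items()}
    n_docs = len(docs)
    scores: dict[str, float] = {}
    for term in set(tokenize(query)):
        df = sum(1 for counts in docs.values() if term in counts)
        if df == 0:
            continue  # term appears nowhere in the wiki
        idf = math.log((n_docs + 1) / (df + 1)) + 1.0  # smoothed IDF, always > 0
        for name, counts in docs.items():
            if term in counts:
                tf = counts[term] / sum(counts.values())
                scores[name] = scores.get(name, 0.0) + tf * idf
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)[:k]


if __name__ == "__main__" and len(sys.argv) > 2:
    wiki = Path(sys.argv[1])
    articles = {p.stem: p.read_text(encoding="utf-8") for p in wiki.rglob("*.md")}
    for name, score in search(articles, " ".join(sys.argv[2:])):
        print(f"{score:.3f}  {name}")
```

Printing plain `score name` lines keeps the output easy for an agent to parse when it calls the script as a CLI tool.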

Further explorations:

As the repo grows, the natural desire is to also think about synthetic data generation + finetuning to have your LLM "know" the data in its weights instead of just context windows.

TLDR: raw data from a given number of sources is collected, then compiled by an LLM into a .md wiki, then operated on by various CLIs by the LLM to do Q&A and to incrementally enhance the wiki, and all of it viewable in Obsidian. You rarely ever write or edit the wiki manually, it's the domain of the LLM. I think there is room here for an incredible new product instead of a hacky collection of scripts.

@atmoio The internet feeds were coffee-addictive.

AI conversations are heroin-addictive.

I packaged up the "autoresearch" project into a new self-contained minimal repo if people would like to play over the weekend. It's basically nanochat LLM training core stripped down to a single-GPU, one file version of ~630 lines of code, then:

- the human iterates on the prompt (.md)

- the AI agent iterates on the training code (.py)

The goal is to engineer your agents to make the fastest research progress indefinitely and without any of your own involvement. In the image, every dot is a complete LLM training run that lasts exactly 5 minutes. The agent works in an autonomous loop on a git feature branch and accumulates git commits to the training script as it finds better settings (of lower validation loss by the end) of the neural network architecture, the optimizer, all the hyperparameters, etc. You can imagine comparing the research progress of different prompts, different agents, etc.
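
The loop described above is essentially greedy hill climbing: try a change, run a fixed-budget evaluation, keep it only if validation loss drops. The sketch below illustrates that control flow only. It is not the autoresearch code, and `propose`/`evaluate` (plus the toy learning-rate objective) are hypothetical stand-ins for the agent's edit to the training script and the 5-minute training run.

```python
import math
import random
from typing import Callable

Config = dict[str, float]


def research_loop(
    baseline: Config,
    propose: Callable[[Config, random.Random], Config],
    evaluate: Callable[[Config], float],
    budget: int,
    seed: int = 0,
) -> tuple[Config, float, list[float]]:
    """Greedy keep-if-better loop: accept a candidate only if its loss is lower."""
    rng = random.Random(seed)
    best, best_loss = baseline, evaluate(baseline)
    history = [best_loss]  # baseline loss, then the loss of each accepted "commit"
    for _ in range(budget):
        candidate = propose(best, rng)  # stand-in for the agent editing the script
        loss = evaluate(candidate)  # stand-in for one fixed-length training run
        if loss < best_loss:  # keep (commit) improvements, discard everything else
            best, best_loss = candidate, loss
            history.append(loss)
    return best, best_loss, history


def toy_loss(cfg: Config) -> float:
    """Toy stand-in for validation loss, minimized at lr = 1e-2."""
    return (math.log10(cfg["lr"]) + 2.0) ** 2


def toy_propose(cfg: Config, rng: random.Random) -> Config:
    """Toy stand-in for an agent edit: halve or double the learning rate."""
    return {**cfg, "lr": cfg["lr"] * rng.choice([0.5, 2.0])}


if __name__ == "__main__":
    best, loss, history = research_loop({"lr": 1.0}, toy_propose, toy_loss, budget=20)
    print(best, loss, len(history) - 1, "accepted changes")
```

Each accepted candidate corresponds to one commit on the feature branch; swapping in a different `propose` function is the analogue of comparing different prompts or agents.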

github.com/karpathy/autor…

Part code, part sci-fi, and a pinch of psychosis :)

@jamiequint Love the shift to context management!

Works best when the system exposes structure (repos, dbt DAGs).

Harder with datasets like images/video/docs where logic lives in code and dependencies are mostly implicit.

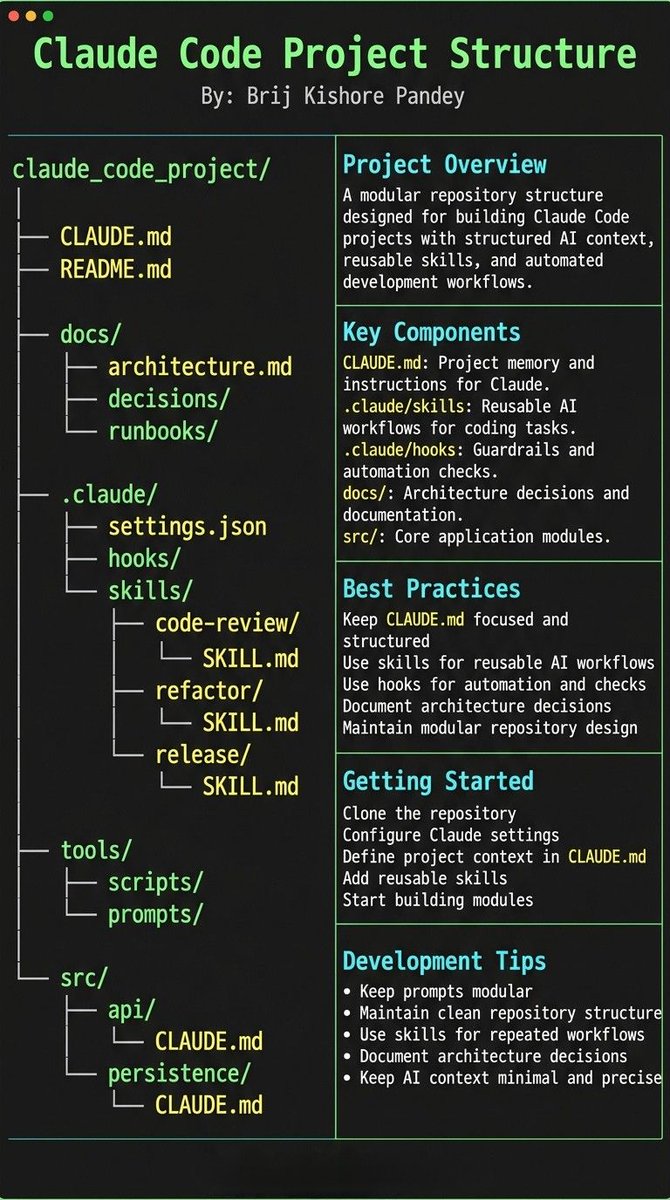

For data projects, CLAUDE.md is a must-have.

The meaning of the data needs to be specified: which storage buckets to use (and which not to touch), which files to use, and what they mean. Bonus points if it can point to the code that produced the files, so there's no need to describe the semantics manually.

Repo structure is the foundation. The data context layer is what most teams skip.
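
One way to make that data context checkable rather than purely descriptive is to mirror it in a small structure that tooling can query. Everything here is hypothetical (the `DataContext` name, the bucket names, the prefix convention); it is a sketch of the idea, not DataChain's API.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class DataContext:
    """Hypothetical machine-readable form of the data section of a CLAUDE.md."""

    writable_buckets: set[str]  # buckets the agent may write to
    protected_buckets: set[str]  # buckets the agent must never touch
    # URI prefix -> (what the files mean, code that produced them)
    prefixes: dict[str, tuple[str, str]] = field(default_factory=dict)

    @staticmethod
    def bucket(uri: str) -> str:
        return uri.removeprefix("s3://").split("/", 1)[0]

    def can_write(self, uri: str) -> bool:
        name = self.bucket(uri)
        return name in self.writable_buckets and name not in self.protected_buckets

    def describe(self, uri: str) -> tuple[str, str] | None:
        """Return (meaning, producer) for the most specific matching prefix."""
        matches = [p for p in self.prefixes if uri.startswith(p)]
        return self.prefixes[max(matches, key=len)] if matches else None
```

A check like `can_write` could back a hook or tool wrapper, while `describe` answers “what is this file and where did it come from?” without restating the semantics by hand.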

Most people treat CLAUDE.md like a prompt file.

That’s the mistake.

If you want Claude Code to feel like a senior engineer living inside your repo, your project needs structure.

Claude needs 4 things at all times:

• the why → what the system does

• the map → where things live

• the rules → what’s allowed / not allowed

• the workflows → how work gets done

I call this:

The Anatomy of a Claude Code Project 👇

━━━━━━━━━━━━━━━

1️⃣ CLAUDE.md = Repo Memory (keep it short)

This is the north star file.

Not a knowledge dump. Just:

• Purpose (WHY)

• Repo map (WHAT)

• Rules + commands (HOW)

If it gets too long, the model starts missing important context.

━━━━━━━━━━━━━━━

2️⃣ .claude/skills/ = Reusable Expert Modes

Stop rewriting instructions.

Turn common workflows into skills:

• code review checklist

• refactor playbook

• release procedure

• debugging flow

Result:

Consistency across sessions and teammates.

━━━━━━━━━━━━━━━

3️⃣ .claude/hooks/ = Guardrails

Models forget.

Hooks don’t.

Use them for things that must be deterministic:

• run formatter after edits

• run tests on core changes

• block unsafe directories (auth, billing, migrations)
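
As an illustration of the last bullet, here is a sketch of a blocking hook, assuming Claude Code's PreToolUse hook contract: the pending tool call arrives as JSON on stdin (with `tool_name`, `tool_input.file_path`, and `cwd`), and exit code 2 blocks the call and returns stderr to Claude. The protected paths and the `--hook` flag are hypothetical, and the script would still need to be registered as a PreToolUse hook in the project's Claude Code settings.

```python
from __future__ import annotations

import json
import sys
from pathlib import PurePosixPath

# Hypothetical protected directories, relative to the repo root.
PROTECTED = ("src/auth", "src/billing", "migrations")
EDIT_TOOLS = {"Edit", "MultiEdit", "Write"}


def blocked_reason(payload: dict) -> str | None:
    """Return why a pending tool call should be blocked, or None to allow it."""
    if payload.get("tool_name") not in EDIT_TOOLS:
        return None
    path = str(payload.get("tool_input", {}).get("file_path", ""))
    root = str(payload.get("cwd", "")).rstrip("/")
    if root and path.startswith(root + "/"):
        path = path[len(root) + 1 :]  # make absolute paths repo-relative
    parts = PurePosixPath(path).parts
    for protected in PROTECTED:
        prefix = PurePosixPath(protected).parts
        if parts[: len(prefix)] == prefix:  # component-wise, so src/authz is not src/auth
            return f"Edits under {protected}/ are blocked by a repo hook; ask a human."
    return None


if __name__ == "__main__" and "--hook" in sys.argv[1:]:
    reason = blocked_reason(json.load(sys.stdin))
    if reason:
        print(reason, file=sys.stderr)
        sys.exit(2)  # exit code 2 blocks the call; stderr is shown to Claude
```

Matching path components instead of raw string prefixes keeps a sibling like `src/authz/` from being blocked by accident.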

━━━━━━━━━━━━━━━

4️⃣ docs/ = Progressive Context

Don’t bloat prompts.

Claude just needs to know where truth lives:

• architecture overview

• ADRs (engineering decisions)

• operational runbooks

━━━━━━━━━━━━━━━

5️⃣ Local CLAUDE.md for risky modules

Put small files near sharp edges:

src/auth/CLAUDE.md

src/persistence/CLAUDE.md

infra/CLAUDE.md

Now Claude sees the gotchas exactly when it works there.
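
A rough model of why this works: for any file being edited, the relevant instructions are the CLAUDE.md files on the path from the repo root down to that file's directory. This hypothetical helper computes that chain from a set of known CLAUDE.md paths; it is a sketch of the idea, not Claude Code's actual loading logic.

```python
from pathlib import PurePosixPath


def applicable_claude_md(existing: set[str], target: str) -> list[str]:
    """List CLAUDE.md files from the repo root down to the target file's directory."""
    directory = PurePosixPath(target).parent
    chain = list(directory.parents)[::-1] + [directory]  # root first, deepest last
    return [
        (d / "CLAUDE.md").as_posix()
        for d in chain
        if (d / "CLAUDE.md").as_posix() in existing
    ]
```

Ordering root first means the deepest, most specific file (e.g. `src/auth/CLAUDE.md`) comes last, which matches the intent of putting gotchas closest to the code they describe.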

━━━━━━━━━━━━━━━

Prompting is temporary.

Structure is permanent.

When your repo is organized this way, Claude stops behaving like a chatbot…

…and starts acting like a project-native engineer.

I’m at the MLOps conference today in Mountain View.

If you’re around, would love to connect!

MLOps Community @mlopscommunity

Coding Agents Conference. March 3rd at the Computer History Museum. luma.com/codingagents
