Honestly, Falcon is the closest thing to an actual AI engineer we've built.

Now live inside Future AGI. Built to debug, fix, and optimize your AI systems autonomously.

You type what you need. It does the rest.

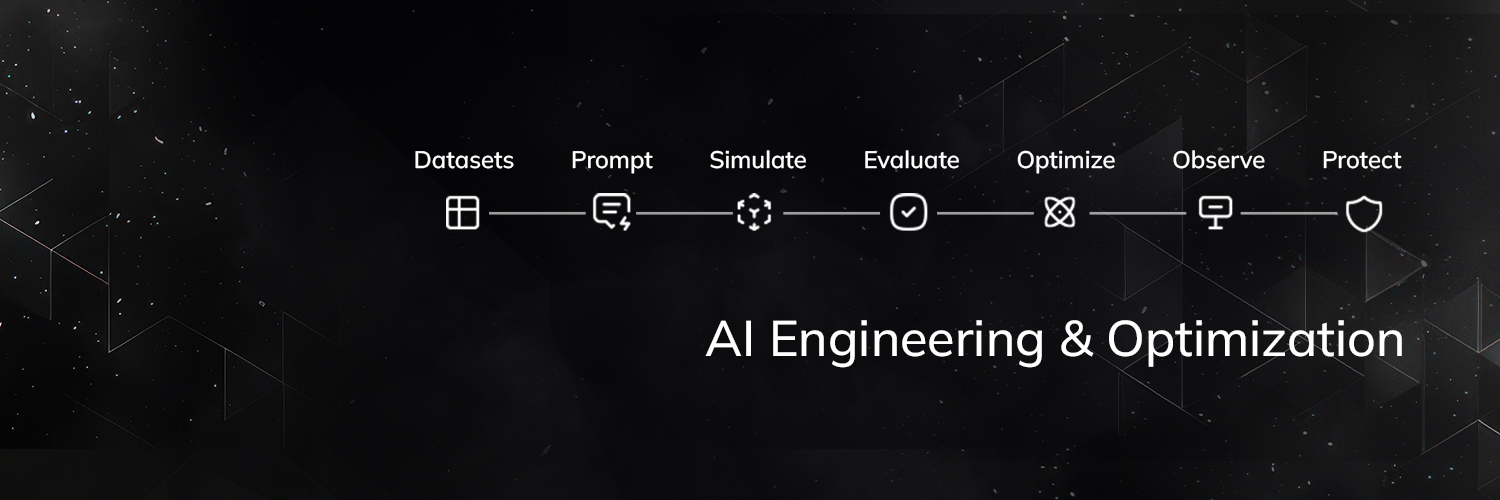

Simulations, evals, root cause analysis, prompt fixes, re-testing - one conversation, start to finish. No dashboards to navigate. No queries to write. No tabs to alt-tab through at 2am.

The thing that surprised even us: it got scary good at diagnosing failures. Not "your score is low" but "3 of your 6 failing scenarios trace to the same retrieval bug. Your FAQ page is outranking the policy doc on complaint-phrased queries."

Most in-product copilots answer questions. Falcon does the work. It breaks down complex tasks, holds context across your entire stack, and moves through it the way a senior engineer would - evals to traces to agents to gateway, in one conversation.

It knows what page you're on. It knows what to investigate before you ask. And when it finds something, it doesn't just report it. It fixes, re-tests, and shows you the before/after.

The hours your team used to spend hunting through traces and rerunning evals manually? Falcon takes those back.

While the world is shipping agents, we shipped the one that makes them better.

Letting Falcon loose. Go see what it catches.

Try Falcon - shorturl.at/hoBHi

Product Doc - shorturl.at/wsZww
